🥇Top ML Papers of the Week

The Top ML Papers of the Week (June 10 - June 16)

1). Nemotron-4 340B - provides an instruct model to generate high-quality data and a reward model to filter out data on several attributes; demonstrates strong performance on common benchmarks like MMLU and GSM8K; it’s competitive with GPT-4 on several tasks, including high scores in multi-turn chat; a preference data is also released along with the base model. (paper | tweet)

2). Discovering Preference Optimization Algorithms with LLMs - proposes LLM-driven objective discovery of state-of-the-art preference optimization; no human intervention is used and an LLM is prompted to propose and implement the preference optimization loss functions based on previously evaluated performance metrics; discovers an algorithm that adaptively combined logistic and exponential losses. (paper | tweet)

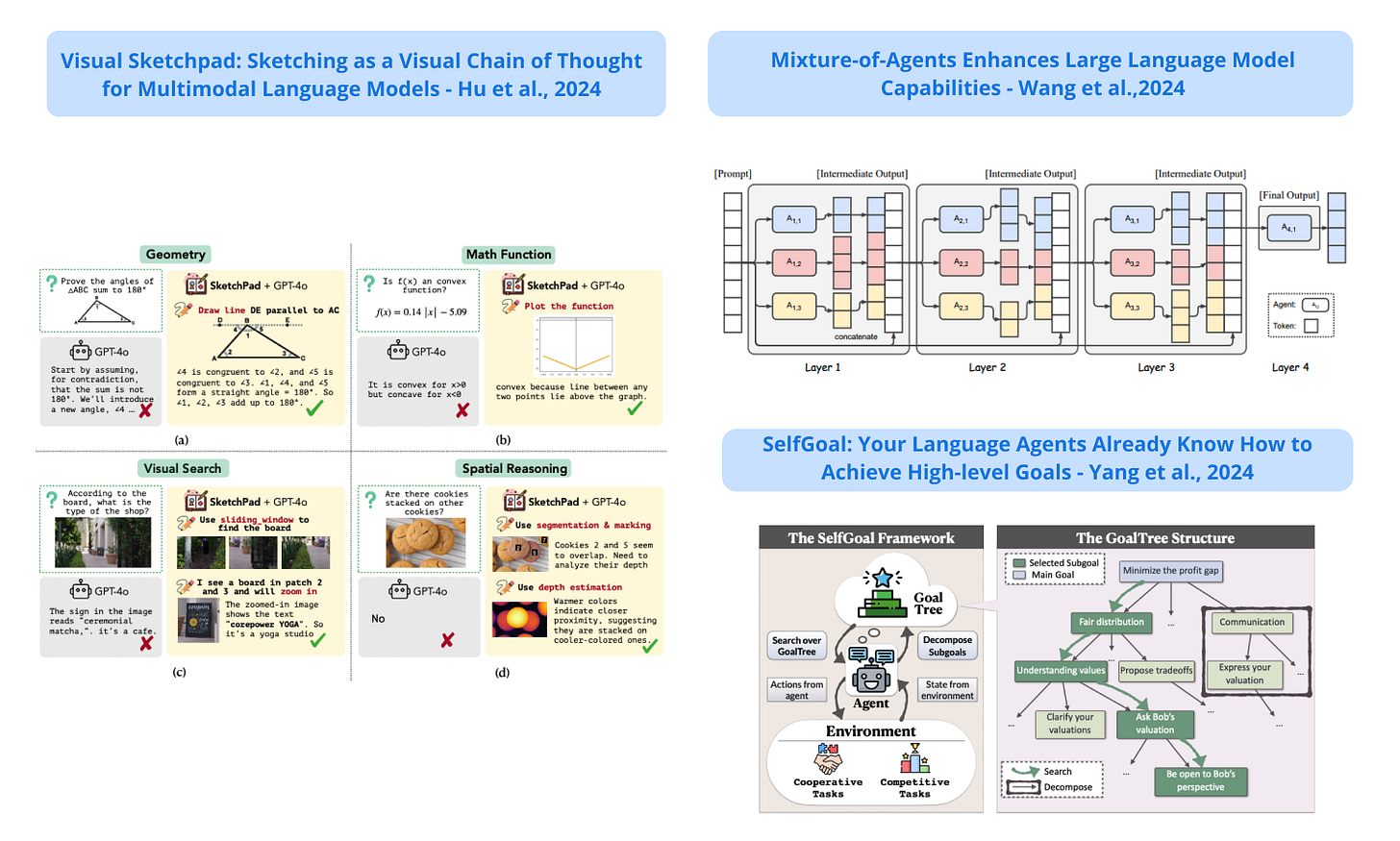

3). SelfGoal - a framework to enhance an LLM-based agent's capabilities to achieve high-level goals; adaptively breaks down a high-level goal into a tree structure of practical subgoals during interaction with the environment; improves performance on various tasks, including competitive, cooperative, and deferred feedback environments. (paper | tweet)

4). Mixture-of-Agents - an approach that leverages the collective strengths of multiple LLMs through a Mixture-of-Agents methodology; layers are designed with multiple LLM agents and each agent builds on the outputs of other agents in the previous layers; surpasses GPT-4o on AlpacaEval 2.0, MT-Bench and FLASK. (paper | tweet)

5). Transformers Meet Neural Algorithmic Reasoners - a new hybrid architecture that enables tokens in the LLM to cross-attend to node embeddings from a GNN-based neural algorithmic reasoner (NAR); the resulting model, called TransNAR, demonstrates improvements in OOD reasoning across algorithmic tasks. (paper | tweet)

Sponsor message

DAIR.AI presents a live cohort-based course, Prompt Engineering for LLMs, where you can learn about advanced prompting techniques, RAG, tool use in LLMs, agents, and other approaches that improve the capabilities, performance, and reliability of LLMs. Use promo code MAVENAI20 for a 20% discount.

6). Self-Tuning with LLMs - improves an LLM’s ability to effectively acquire new knowledge from raw documents through self-teaching; the three steps involved are 1) a self-teaching component that augments documents with a set of knowledge-intensive tasks focusing on memorization, comprehension, and self-reflection, 2) uses the deployed model to acquire knowledge from new documents while reviewing its QA skills, and 3) the model is configured to continually learn using only the new documents which helps with thorough acquisition of new knowledge. (paper | tweet)

7). Sketching as a Visual Chain of Thought - a framework that enables a multimodal LLM to access a visual sketchpad and tools to draw on the sketchpad; it can equip a model like GPT-4 with the capability to generate intermediate sketches to reason over complex tasks; improves performance on many tasks over strong base models with no sketching; GPT-4o equipped with SketchPad sets a new state of the art on all the tasks tested. (paper | tweet)

8). Mixture of Memory Experts - proposes an approach to significantly reduce hallucination (10x) by tuning millions of expert adapters (e.g., LoRAs) to learn exact facts and retrieve them from an index at inference time; the memory experts are specialized to ensure faithful and factual accuracy on the data it was tuned on; claims to enable scaling to a high number of parameters while keeping the inference cost fixed. (paper | tweet)

9). Multimodal Table Understanding - introduces Table-LLaVa 7B, a multimodal LLM for multimodal table understanding; it’s competitive with GPT-4V and significantly outperforms existing MLLMs on multiple benchmarks; also develops a large-scale dataset MMTab, covering table images, instructions, and tasks. (paper | tweet)

10). Consistent Middle Enhancement in LLMs - proposes an approach to tune an LLM to effectively utilize information from the middle part of the context; it first proposes a training-efficient method to extend LLMs to longer context lengths (e.g., 4K -> 256K); it uses a truncated Gaussian to encourage sampling from the middle part of the context during fine-tuning; the approach helps to alleviate the so-called "Lost-in-the-Middle" problem in long-context LLMs. (paper | tweet)

Reach out to hello@dair.ai if you would like to promote with us. Our newsletter is read by over 60K AI Researchers, Engineers, and Developers.

Am I missing something or does the paper not really say how? https://arxiv.org/pdf/2406.09403