🥇Top AI Papers of the Week

The Top AI Papers of the Week (April 19 - April 26)

1. DeepSeek V4

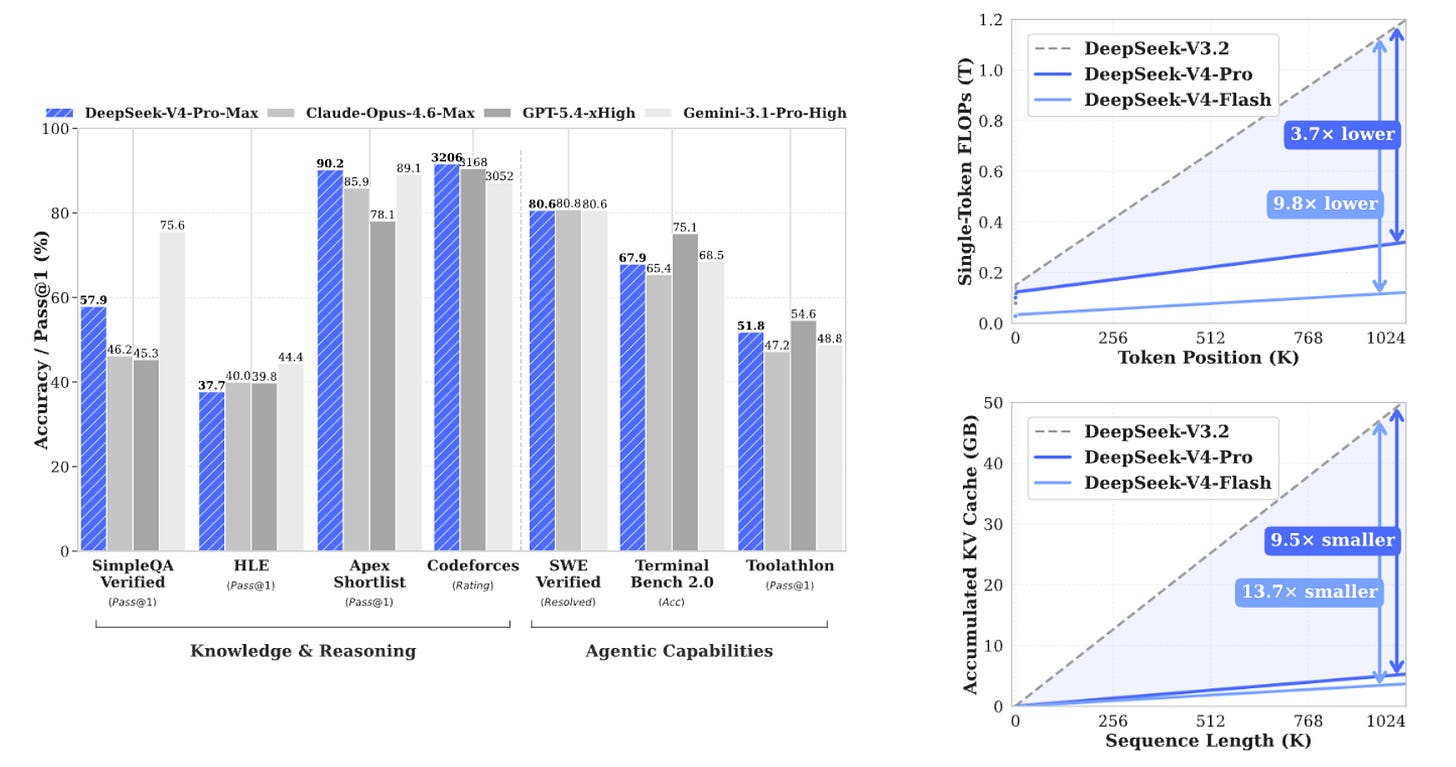

DeepSeek V4 is the first open model family built from the ground up around million-token contexts as a default rather than a bolt-on feature. The release includes DeepSeek-V4-Pro (1.6T total / 49B active) and DeepSeek-V4-Flash (284B total / 13B active), both trained natively at 1M context length. The tech report details a hybrid attention architecture, new training stability techniques, and a domain-specialist post-training pipeline that together push the open-source frontier much closer to GPT-5.2 and Gemini 3.0-Pro at a fraction of the cost.

Hybrid attention with CSA and HCA: DeepSeek V4 replaces a single attention stack with Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA). CSA compresses KV entries, then applies DeepSeek Sparse Attention with sliding-window KV for fine-grained local dependencies. HCA aggressively compresses KV for extreme-context layers, keeping the model feasible at 1M tokens.

Training stability at trillion-parameter scale: The team introduces two techniques that materially cut loss spikes. Anticipatory Routing decouples backbone and router updates, using current weights for features but historical weights for routing indices. SwiGLU Clamping bounds the linear and gate components of SwiGLU to stabilize activations throughout pretraining.
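
To make the SwiGLU Clamping idea concrete, here is a minimal sketch in plain Python. It assumes the clamp is applied to both pre-activation branches before they are multiplied; the bound of 10.0, the function names, and the exact placement of the clamp are illustrative assumptions, not values taken from the tech report.

```python
import math
from typing import List


def silu(x: float) -> float:
    """SiLU (Swish) activation: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


def clamp(x: float, bound: float) -> float:
    """Restrict x to the interval [-bound, bound]."""
    return max(-bound, min(bound, x))


def clamped_swiglu(gate_pre: List[float], linear_pre: List[float],
                   bound: float = 10.0) -> List[float]:
    """Elementwise SwiGLU with both branches clamped.

    gate_pre and linear_pre stand for the two projections of the same
    input (x @ W_gate and x @ W_up). The bound is a hypothetical value.
    """
    return [silu(clamp(g, bound)) * clamp(u, bound)
            for g, u in zip(gate_pre, linear_pre)]


# An outlier pair (1000, -1000) is bounded; ordinary values pass through.
print(clamped_swiglu([1000.0, 1.0], [-1000.0, 2.0]))
```

With both branches bounded, the magnitude of each output element cannot exceed `bound * bound`, which is the property that keeps a single outlier activation from blowing up downstream layers.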

Domain-specialist post-training: Instead of one large mixed-RL stage, DeepSeek trains a separate specialist expert per domain. Each expert goes through supervised fine-tuning on domain data, then Group Relative Policy Optimization (GRPO) RL with a domain-specific reward model. The specialists are merged into the final model, recovering capability without destabilizing the generalist.
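
The core of GRPO is that each sampled response is scored relative to the other responses drawn for the same prompt, so no separate value network is needed. The sketch below shows only that normalization step; the function name and the use of the population standard deviation are assumptions for illustration, and implementations differ on such details.

```python
from statistics import mean, pstdev
from typing import List


def group_relative_advantages(rewards: List[float],
                              eps: float = 1e-8) -> List[float]:
    """Normalize rewards within one group of samples for the same prompt.

    Each advantage is (reward - group mean) / (group std + eps).
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four responses to one prompt, scored by a domain reward model.
print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
```

Responses above the group average get a positive advantage and are reinforced; those below are pushed down. If every response in a group earns the same reward, all advantages are zero and the group contributes no gradient.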

Frontier-adjacent performance at open-source cost: DeepSeek-V4-Pro-Max beats GPT-5.2 and Gemini 3.0-Pro on standard reasoning benchmarks and lands just behind GPT-5.4 and Gemini 3.1-Pro, effectively trailing the closed frontier by roughly 3 to 6 months. For open-weights teams that need long-context reasoning without closed API pricing, this is the most important release of the week.

2. Autogenesis

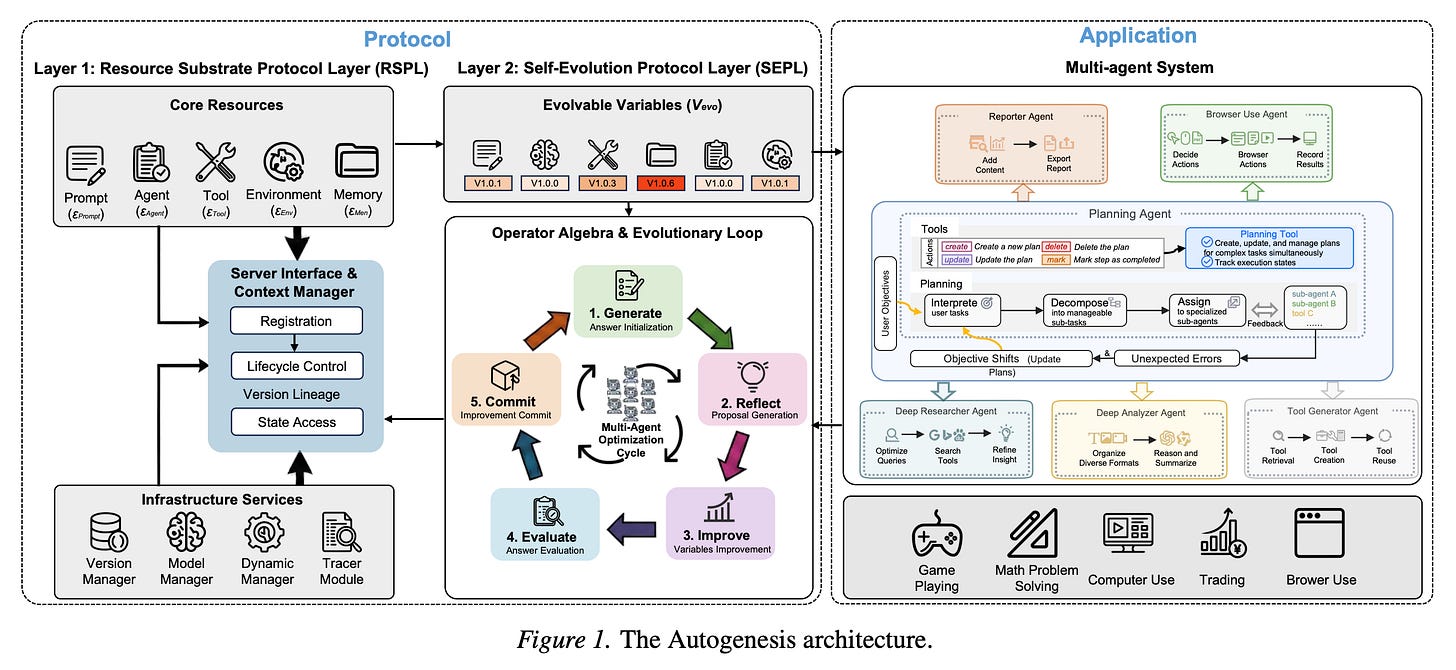

Static agents age quickly. As deployment environments change and new tools arrive, the agents that survive will be the ones that can safely rewrite themselves. This paper introduces Autogenesis, a self-evolving agent protocol where agents identify their own capability gaps, generate candidate improvements, validate them through testing, and integrate what works back into their own operational framework. No retraining and no human patching, just an ongoing loop of assessment, proposal, validation, and integration.

Two-layer protocol design: Autogenesis separates a Resource Substrate Protocol Layer (RSPL) that standardizes access to prompts, tools, environments, and memory from a Self-Evolution Protocol Layer (SEPL) that runs a Generate, Reflect, Improve, Evaluate, Commit loop over evolvable variables. The split keeps core capability registration stable while evolution happens on top.

Auditable lineage and rollback: Improvements are committed with version lineage, state access control, and reversible lifecycle operations. The protocol treats every self-modification as a first-class artifact that can be inspected, reproduced, or rolled back, which is what makes self-improvement safe enough to deploy.
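
The evaluate-then-commit loop with lineage and rollback can be sketched as a small versioned container. Everything here (`EvolvableVariable`, `Version`, the "commit only if the score improves" rule) is a hypothetical illustration of the idea, not the protocol's actual interface.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Version:
    version_id: int
    parent_id: Optional[int]
    value: str
    score: float


class EvolvableVariable:
    """A prompt or tool wrapper whose every change is recorded."""

    def __init__(self, initial: str, evaluate: Callable[[str], float]):
        self._evaluate = evaluate
        self._history: List[Version] = [
            Version(0, None, initial, evaluate(initial))
        ]
        self._head = 0

    @property
    def current(self) -> Version:
        return self._history[self._head]

    def propose(self, candidate: str) -> bool:
        """Evaluate a candidate; commit only if it beats the current head."""
        score = self._evaluate(candidate)
        if score <= self.current.score:
            return False
        new = Version(len(self._history), self.current.version_id,
                      candidate, score)
        self._history.append(new)  # history is append-only
        self._head = new.version_id
        return True

    def rollback(self) -> Version:
        """Move the head back to the parent of the current version."""
        parent = self.current.parent_id
        if parent is None:
            raise ValueError("already at the initial version")
        self._head = parent
        return self.current

    def lineage(self) -> List[int]:
        """Version ids from the initial version to the current head."""
        ids, node = [], self.current
        while node.parent_id is not None:
            ids.append(node.version_id)
            node = self._history[node.parent_id]
        ids.append(node.version_id)
        return ids[::-1]
```

Because rollback only moves a pointer and never deletes history, a rejected or reverted modification stays available for inspection, which is the auditability property the paragraph above describes.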

Multi-agent applications: Autogenesis is demonstrated on multi-agent systems with planner, executor, and analyst roles. Agents evolve their own prompts, tool wrappers, and coordination routines using the shared protocol, showing that the abstraction is general enough to hold across roles rather than being tied to a single agent type.

Part of a broader self-improvement wave: The paper sits alongside Meta-Harness and the Darwin Gödel Machine as a concrete framework for operationalizing self-modification. Together they mark a shift from “agents that use tools” to “agents that edit their own tooling.”

3. Attention to Mamba

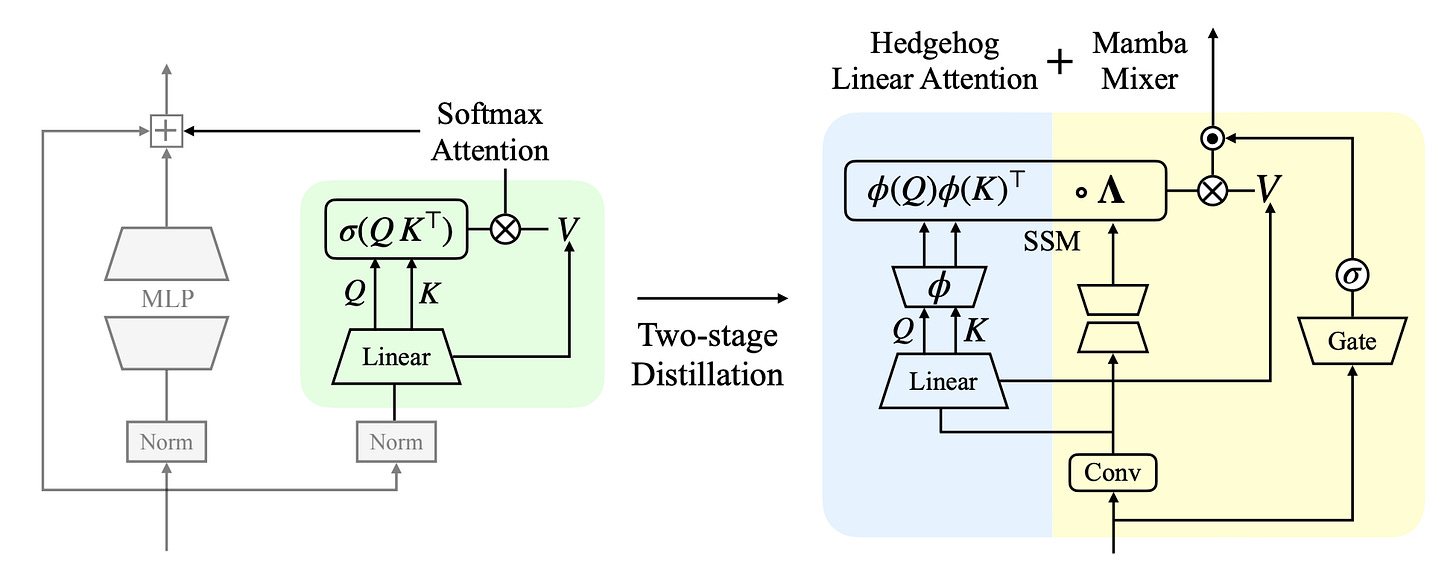

Apple proposes a two-stage recipe for cross-architecture distillation from Transformers into Mamba. Naive distillation fails to preserve teacher performance because a Mamba student cannot directly imitate softmax attention. The fix is to first distill the Transformer into a linearized-attention student using a kernel adaptation, then transfer that student into a pure Mamba model with no attention blocks. On a 1B model trained on 10B tokens, the Mamba student reaches a perplexity of 14.11 against 13.86 for the Pythia-1B teacher, nearly matching quality at linear-time inference cost.

Stage 1, softmax to linear attention: The first stage replaces softmax attention with a Hedgehog-style linearized attention student, using a learnable kernel feature map that preserves the original attention scores while removing the softmax nonlinearity. This gives a strictly linear-complexity intermediate that stays close to the teacher.
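
Why a kernel feature map yields linear complexity can be seen in a few lines of plain Python. The sketch below uses a fixed feature map (elementwise `exp` of the input and its negation) as a stand-in for the learnable map described above; that choice, and all function names, are assumptions for illustration. It computes causal attention two ways: the quadratic form that scores every query against every earlier key, and the recurrent form that carries a running state.

```python
import math
from typing import List

Vector = List[float]


def feature_map(x: Vector) -> Vector:
    """Fixed positive feature map; a stand-in for a learned one."""
    return [math.exp(v) for v in x] + [math.exp(-v) for v in x]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def quadratic_attention(qs, ks, vs) -> List[Vector]:
    """Causal kernel attention, O(n^2): score each query against past keys."""
    outputs = []
    for t, q in enumerate(qs):
        fq = feature_map(q)
        weights = [dot(fq, feature_map(ks[s])) for s in range(t + 1)]
        total = sum(weights)
        outputs.append([
            sum(w * vs[s][j] for s, w in enumerate(weights)) / total
            for j in range(len(vs[0]))
        ])
    return outputs


def linear_attention(qs, ks, vs) -> List[Vector]:
    """Same result in O(n): carry sum(phi(k) v^T) and sum(phi(k)) forward."""
    feat_dim, val_dim = 2 * len(ks[0]), len(vs[0])
    state = [[0.0] * val_dim for _ in range(feat_dim)]
    norm = [0.0] * feat_dim
    outputs = []
    for q, k, v in zip(qs, ks, vs):
        for i, fk in enumerate(feature_map(k)):
            norm[i] += fk
            for j in range(val_dim):
                state[i][j] += fk * v[j]
        fq = feature_map(q)
        denom = dot(fq, norm)
        outputs.append([
            sum(fq[i] * state[i][j] for i in range(feat_dim)) / denom
            for j in range(val_dim)
        ])
    return outputs
```

The two functions return the same values up to floating-point error. The recurrent form has a fixed-size state per step, which is the structural property that makes a later hand-off to a state-space model plausible.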

Stage 2, linear attention to Mamba: The second stage transfers the linearized student into a HedgeMamba block, a hybrid SSM architecture that reuses the learned linear attention parameters and adds state-space components. The transition preserves quality because the two formulations are mathematically related, not just structurally similar.

Quality at long context: On downstream benchmarks, the distilled Mamba reaches 74.1% of the teacher’s accuracy, with the recipe generalizing to 1B and 3B scales. The key practical win is retaining Transformer-level quality on the sequence mixing block while moving to linear time at inference.

A cheaper path to SSM deployment: If trained Transformers can be reliably converted into state-space models without retraining from scratch, the entire open-weights ecosystem becomes cheaper to serve at long context. This is the kind of quiet infrastructure work that matters more than it looks.

4. Skill-RAG

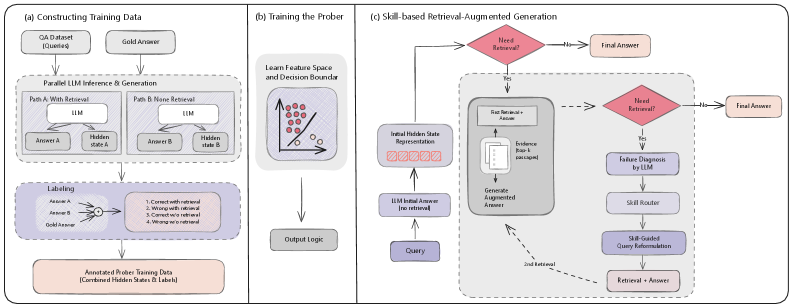

Most RAG systems retrieve on every query, whether the model needs help or not. This is wasteful when the model already knows the answer and often too late when it does not. This paper introduces Skill-RAG, a failure-state-aware retrieval system that uses hidden-state probing to detect when an LLM is approaching a knowledge failure, then routes the query to a specialized retrieval strategy matched to the gap.

Hidden-state probing as a retrieval trigger: Skill-RAG trains a lightweight probe on the LLM’s hidden representations that predicts whether the model is about to fail the query. Only queries whose predicted failure risk exceeds the probe’s threshold trigger retrieval, which cuts unnecessary search calls while preserving answers for the cases that actually need help.
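
A probe of this kind can be as simple as a logistic classifier over one hidden-state vector. The sketch below assumes exactly that; the probe form, the weights, and the 0.5 threshold are hypothetical, since the summary does not specify the probe architecture.

```python
import math
from typing import Sequence


def failure_probability(hidden: Sequence[float],
                        weights: Sequence[float],
                        bias: float) -> float:
    """Logistic probe: estimated probability the model fails the query."""
    logit = sum(h * w for h, w in zip(hidden, weights)) + bias
    return 1.0 / (1.0 + math.exp(-logit))


def should_retrieve(hidden: Sequence[float],
                    weights: Sequence[float],
                    bias: float,
                    threshold: float = 0.5) -> bool:
    """Trigger retrieval only when predicted failure risk is high."""
    return failure_probability(hidden, weights, bias) >= threshold


# Toy probe weights; in practice these would be trained on labeled failures.
probe_w, probe_b = [1.0, -1.0], 0.0
print(should_retrieve([2.0, 0.0], probe_w, probe_b))  # high risk
print(should_retrieve([0.0, 2.0], probe_w, probe_b))  # low risk
```

Raising the threshold trades recall for fewer retrieval calls, so it is the natural knob for the efficiency-versus-accuracy balance.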

Skill-matched retrieval strategies: Different failure modes (factual recall, multi-hop reasoning, temporal knowledge) are routed to different retrieval “skills” rather than a single generic retriever. Each skill is treated as a standalone component the agent can select between, echoing the broader trend of turning RAG into a collection of composable primitives.
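
Routing by failure mode amounts to a dispatch table from a predicted failure type to a retrieval function. The skill names, the placeholder retrievers, and the fallback rule below are all hypothetical; they only illustrate the "composable primitives" shape.

```python
from typing import Callable, Dict, List

Retriever = Callable[[str], List[str]]


def factual_lookup(query: str) -> List[str]:
    """Placeholder: one direct lookup for a missing fact."""
    return [f"single-passage lookup: {query}"]


def multi_hop_search(query: str) -> List[str]:
    """Placeholder: chained lookups for multi-step questions."""
    return [f"hop 1: {query}", f"hop 2: follow-up for {query}"]


def temporal_search(query: str) -> List[str]:
    """Placeholder: lookup biased toward recent documents."""
    return [f"recent documents for: {query}"]


SKILLS: Dict[str, Retriever] = {
    "factual_recall": factual_lookup,
    "multi_hop": multi_hop_search,
    "temporal": temporal_search,
}


def route(query: str, failure_mode: str) -> List[str]:
    """Pick the retrieval skill for a failure mode; default to factual."""
    skill = SKILLS.get(failure_mode, factual_lookup)
    return skill(query)
```

Adding a new skill means registering one more function in `SKILLS`, without touching the router or the other retrievers.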

Consistent gains across benchmarks: Evaluated on HotpotQA, Natural Questions, and TriviaQA, Skill-RAG improves over uniform RAG baselines on both efficiency and accuracy. The efficiency story matters as much as the accuracy: per-query retrieval cost drops significantly when the system skips retrieval for questions the model can already answer.

A shift in how RAG is designed: The work reinforces the direction RAG is heading: from a single monolithic pipeline to a suite of retrieval skills an agent selects between. Knowing when to retrieve and what kind of retrieval to run is becoming the central design question.

Message from the Editor

Excited to announce our new on-demand course “Vibe Coding AI Apps with Claude Code”. Learn how to leverage Claude Code features to vibe code production-grade AI-powered apps.

5. Self-Generated World Knowledge

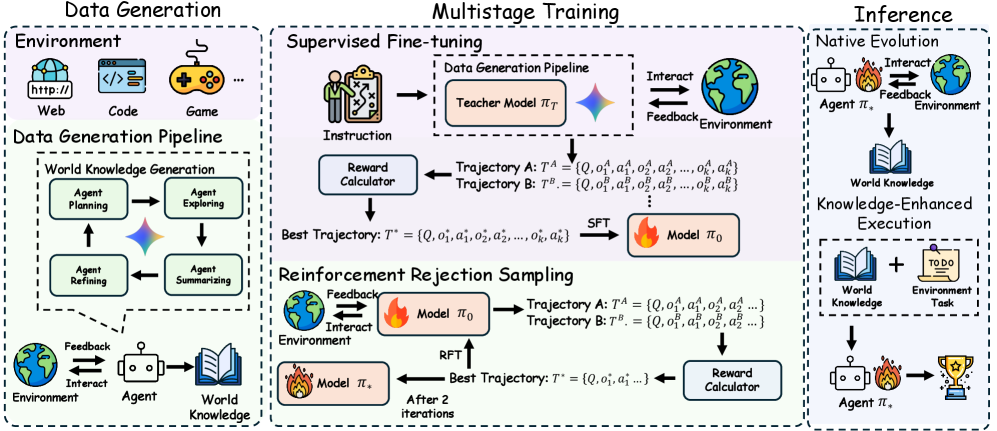

How far are we from agents that can self-generate world knowledge? This paper proposes an outcome-based reward that measures how much an agent’s self-generated world knowledge actually improves its task success rate, then trains with that signal and removes the external guidance at inference. The result is a 14B model that surpasses Gemini-2.5-Flash on web navigation and gains 20% on the WebVoyager and WebWalker benchmarks.

Outcome-based reward for knowledge: Rather than scoring knowledge against a human-labeled reference, the reward is whether the generated knowledge measurably improves task success when the agent uses it. This lets the system learn which internally generated facts are worth keeping without an external oracle.

Multistage training pipeline: The method combines supervised fine-tuning on an instruction-and-trajectory dataset with reinforcement rejection sampling, where the best trajectories (ranked by the outcome reward) are used to update the policy. The training loop iterates between generation, reward scoring, and rejection sampling until the model internalizes effective knowledge-use behaviors.
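
The outcome reward and the rejection-sampling step can be sketched together. This is a minimal illustration under stated assumptions: the reward is taken to be the success rate with the generated knowledge minus a baseline success rate without it, and `run_task`, `trials`, and `keep` are hypothetical names and parameters rather than the paper's.

```python
from typing import Callable, List, Sequence


def outcome_reward(knowledge: str,
                   run_task: Callable[[str], bool],
                   baseline_success_rate: float,
                   trials: int = 20) -> float:
    """Reward = success rate using the knowledge minus the baseline rate."""
    successes = sum(run_task(knowledge) for _ in range(trials))
    return successes / trials - baseline_success_rate


def rejection_sample(candidates: Sequence[str],
                     rewards: Sequence[float],
                     keep: int) -> List[str]:
    """Keep the top-`keep` candidates by reward for the next policy update."""
    order = sorted(range(len(candidates)),
                   key=lambda i: rewards[i], reverse=True)
    return [candidates[i] for i in order[:keep]]


# Toy environment: the task succeeds only if the knowledge mentions "login".
def toy_task(knowledge: str) -> bool:
    return "login" in knowledge


candidates = ["the site has a footer", "checkout requires login first"]
rewards = [outcome_reward(k, toy_task, baseline_success_rate=0.25, trials=4)
           for k in candidates]
print(rejection_sample(candidates, rewards, keep=1))
```

Note that no reference answer for the knowledge itself appears anywhere: a statement is rewarded only through its effect on task success.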

Knowledge-enhanced execution at inference: At inference the external environment feedback loop is removed. The agent self-generates world knowledge, uses it to plan, and executes, without any human or reward signal in the loop. This is what makes the method deployable, not just measurable.

Environment design replaces labeling: If agents can reliably improve themselves by exploring the world rather than waiting for human-labeled rewards, the bottleneck for scaling agentic systems shifts from data curation to environment design. That matches the broader direction of the field and gives practitioners a concrete recipe to follow.

6. Self-Evolving Logic Synthesis

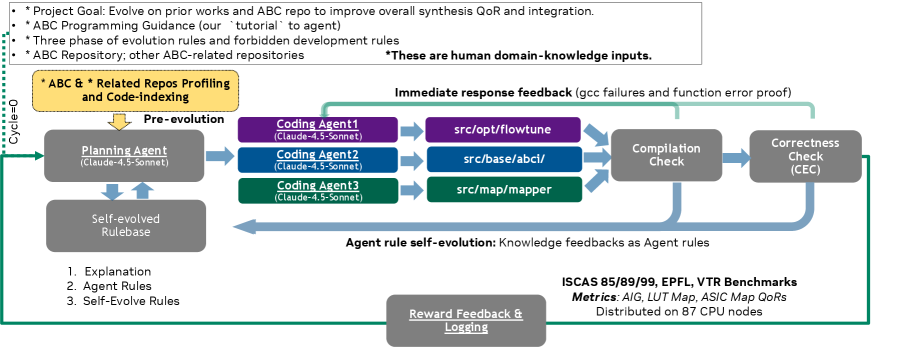

EDA tools like ABC have been hand-tuned by humans for decades. NVIDIA shows they can evolve themselves. This work introduces the first self-evolving logic synthesis framework, a multi-agent LLM system that autonomously refines the entire ABC codebase, generates and tests candidate optimization sequences against standard benchmark circuits, then merges improvements back into the base tool. No human engineer in the loop.

Multi-agent refinement of a real EDA toolchain: The framework assigns specialized agents to exploration, synthesis, and self-review tasks. Agents read and modify the ABC source directly, propose optimization flows, and run them against benchmark circuits such as EPFL, IWLS, and VTR, with three-pass human-domain knowledge injected through the pipeline.

Measured improvement over hand-tuned baselines: The evolved ABC variants produce better area, delay, and switching metrics than the hand-tuned reference on the benchmark suite, and the improvements persist under sensitivity analysis. This is a real gain on a tool the semiconductor industry depends on.

Codebase-level evolution, not just prompt tuning: The agents edit the ABC codebase itself, not just a configuration layer. That is a meaningful extension of the self-improving agent thread: the unit of improvement is real production code, not a prompt or policy.

Generalizable blueprint for domain tools: If agents can evolve a foundational semiconductor tool without manual engineering, the same pattern could generalize to other large, domain-specific codebases. Here it is applied to infrastructure that shipped chips depend on.

7. Stateless Decision Memory

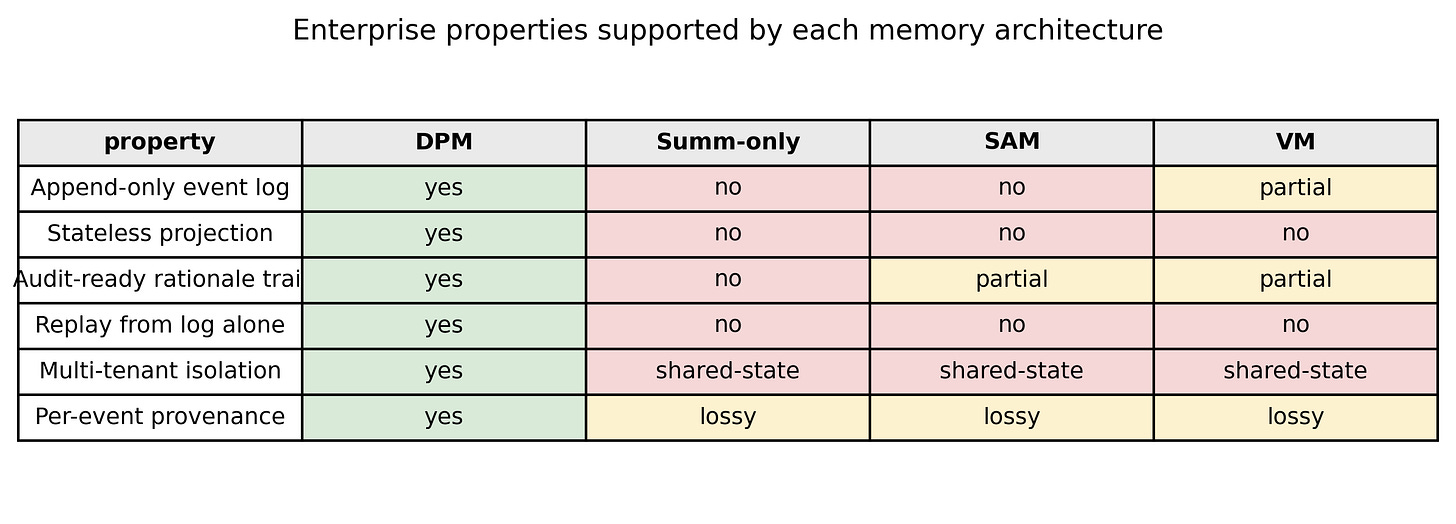

Most interesting AI agent papers right now are about capability. This one is about plumbing, and it is probably more important than it looks. Stateful agents do not scale horizontally. The moment you need thousands of concurrent agent instances running across containers, persistent per-agent state becomes the bottleneck. This paper proposes replacing active memory with immutable decision logs using event-sourcing principles from distributed systems.

Decision logs instead of live state: Every agent decision, tool call, and observation is appended to an immutable event log. Any instance can reconstruct context by replaying the log on demand, which decouples decision logic from storage and lets agents spin up anywhere with no warmup.
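
The event-sourcing pattern behind this design is small enough to sketch directly. The class and field names below are hypothetical and the projection is deliberately simple; the point is that context is a pure function of the log, so any instance holding the log can rebuild it.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Event:
    seq: int
    kind: str      # e.g. "decision", "tool_call", "observation"
    payload: str


class DecisionLog:
    """Append-only log; events are immutable once written."""

    def __init__(self) -> None:
        self._events: List[Event] = []

    def append(self, kind: str, payload: str) -> Event:
        event = Event(len(self._events), kind, payload)
        self._events.append(event)
        return event

    def events(self) -> Tuple[Event, ...]:
        return tuple(self._events)


def replay(events: Iterable[Event]) -> Dict[str, List[str]]:
    """Stateless projection: rebuild agent context from the log alone."""
    context: Dict[str, List[str]] = {}
    for event in events:
        context.setdefault(event.kind, []).append(event.payload)
    return context


log = DecisionLog()
log.append("decision", "check account balance")
log.append("tool_call", "get_balance(account=42)")
log.append("observation", "balance=100")

# Two independent workers replaying the same log reach the same context.
assert replay(log.events()) == replay(log.events())
```

Because `replay` keeps no state between calls, a fresh container needs only the log to resume work, and the log itself doubles as the audit trail.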

Enterprise properties by design: Compared to summary-only, SAM, and vector-memory baselines, Decision Process Memory (DPM) is the only architecture that supports append-only logging, stateless projection, audit-ready rationale trails, replay from log alone, multi-tenant isolation, and per-event provenance. Each of these is a hard requirement in regulated enterprise deployments.

Tight-budget performance wins: On FRP, RCS, and EDA evaluations under constrained memory budgets, DPM substantially outperforms summary-only memory, with the gap widening as the budget tightens. Under loose budgets the approaches converge, which is the expected pattern once scale is no longer the constraint.

A blueprint for regulated deployments: For teams operationalizing agents in finance, healthcare, or other compliance-heavy industries, the paper reads as a practical specification. It maps existing distributed-systems discipline onto agent memory instead of inventing a new category, which is why it is likely to age well.

8. There Will Be a Scientific Theory of Deep Learning

A position paper arguing that a genuine scientific theory of deep learning is already taking shape under the umbrella of “learning mechanics.” The authors identify five converging research directions (solvable idealized models, tractable mathematical limits, simple macroscopic laws, hyperparameter theories, and universal cross-system behaviors) that share a common signature: they describe training dynamics, target coarse aggregate statistics, and commit to falsifiable quantitative predictions. The framing pushes back on skepticism about whether deep learning can have fundamental theory and positions learning mechanics as a complement to mechanistic interpretability, not a competitor.

9. MASS-RAG

Most real-world RAG failures come from retrieving technically relevant but contextually useless documents, then forcing a single model to reconcile them. MASS-RAG is a multi-agent synthesis framework for retrieval-augmented generation where specialized agents handle distinct roles: retrieving candidate documents, assessing their relevance to the query, and synthesizing the final answer from the evidence that contributes. Instead of one model doing everything, responsibility is decomposed across coordinated evaluators, which fits the direction the field is heading for deep research agents.

10. Diversity Collapse in Multi-Agent LLMs

Every multi-agent system pitch assumes agents explore different solutions, but this paper shows they converge on near-identical outputs over time, even across different architectures and different starting prompts. The authors call it diversity collapse. The cause is structural coupling: shared context, shared task descriptions, and mutual feedback pull every agent toward the same attractor. They quantify the homogenization with metrics such as the Vendi score. The practical consequence is that multi-agent setups for brainstorming, hypothesis generation, and ideation only work if teams explicitly engineer isolated reasoning phases, decoupled evaluation, and heterogeneous starting conditions.
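
For readers unfamiliar with the metric, the Vendi score is usually defined as follows. The notation here (the symbols $K$, $n$, and $\lambda_i$) is chosen for this note and is not taken from the paper being summarized.

```latex
% n outputs x_1, ..., x_n and a positive semidefinite similarity
% kernel k with k(x, x) = 1.
K_{ij} = k(x_i, x_j), \qquad
\lambda_1, \dots, \lambda_n = \text{eigenvalues of } K / n

\mathrm{VS}(x_1, \dots, x_n)
  = \exp\!\Bigl( -\sum_{i=1}^{n} \lambda_i \log \lambda_i \Bigr),
\qquad 0 \log 0 := 0
```

The score can be read as an effective number of distinct outputs: it equals 1 when all outputs are identical under the kernel and $n$ when they are all mutually dissimilar, so a score drifting toward 1 across agents is the signature of the collapse described above.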