🥇Top AI Papers of the Week

The Top AI Papers of the Week (July 7 - 13)

1. Kimi K2

Moonshot AI introduces Kimi K2, a 1T parameter Mixture-of-Experts model (32B active) optimized not just for knowledge tasks but for agentic capabilities, models that act, not just respond. Released as Kimi-K2-Base and Kimi-K2-Instruct, it achieves strong scores across coding, math, and tool-use benchmarks and is open-source for research and deployment.

State-of-the-art in open agentic coding: Kimi K2-Instruct hits 65.8% on SWE-bench Verified (agentic, pass@1) and 47.3% on SWE-bench Multilingual, outperforming open models like DeepSeek and Qwen3, and even rivalling Claude Sonnet 4 in several settings.

Robust statistical reasoning: A full salary analysis workflow showcases its agentic capabilities, loading data, visualizing interactions, conducting ANOVA, and generating a polished, interactive webpage with personalized recommendations, all via tool execution.

MuonClip optimizer: For stable and token-efficient pretraining, Moonshot introduces qk-clip, a fix for exploding attention logits during training with Muon. This technique rescales the query-key projections dynamically and enables stable training on 15.5T tokens.

Agentic training pipeline: Kimi K2’s tool use stems from ACEBench-inspired simulations, rubric-driven, multi-turn tool scenarios with synthetic and real MCP tools. These are filtered by LLM judges and scaled with RL using both verifiable (math, coding) and non-verifiable (writing) reward setups.

2. Dynamic Chunking for End-to-End Hierarchical Sequence Modeling

This work introduces the Hierarchical Network (H-Net), a novel end-to-end architecture that learns to segment raw data dynamically, eliminating the need for fixed tokenizers. Key ideas:

Learned, Not Handcrafted, Tokenization – H-Net replaces the traditional, static tokenization process with a dynamic chunking (DC) mechanism. This allows the model to learn content- and context-dependent segmentation strategies directly from data, a significant step towards true end-to-end learning.

Hierarchical Processing for Efficiency and Power – The architecture is hierarchical, resembling a U-Net. It processes raw data with a small encoder, compresses it into meaningful chunks for a larger main network to process, and then decompresses it with a decoder. This allows for efficient processing of long sequences and enables the model to learn multiple levels of abstraction.

Superior Performance and Scaling – When matched for compute and data, a single-stage H-Net operating on bytes outperforms a strong Transformer language model using BPE tokens. A two-stage H-Net scales even better, matching the performance of a token-based Transformer twice its size.

Robustness and Generalizability – H-Nets demonstrate significantly better character-level robustness and show impressive performance on languages and modalities with weak tokenization heuristics, such as Chinese, code, and DNA, showcasing the potential of true end-to-end models.

Editor Message

We are excited to introduce our new course on Building Agentic Applications.

Our subscribers can leverage a 30% discount right now by using the code AGENTS30.

3. What Has a Foundation Model Found?

This work introduces an inductive bias probe to evaluate whether foundation models have learned the underlying "world model" of a domain, or are just good at sequence prediction. Key ideas:

Probing for Deeper Understanding – The authors argue that a model's true understanding of a domain is revealed by its inductive bias, how it generalizes from limited data. Their probe measures this by fine-tuning a model on small, synthetic datasets and observing how it extrapolates.

From Kepler to Newton? Not Quite – They test a transformer trained on orbital mechanics. While it can predict planetary trajectories with high accuracy (like Kepler), it fails to learn the underlying Newtonian mechanics. When asked to predict force vectors, it produces nonsensical laws of gravity that change from one solar system to another.

Heuristics, Not World Models – Across various domains, including physics, lattice problems, and the game of Othello, the study finds that foundation models tend to develop task-specific heuristics rather than a coherent world model. For example, in Othello, the model learns to predict legal moves, but not the full board state.

A Path Forward – The inductive bias probe provides a new way to diagnose the shortcomings of foundation models. By understanding what a model's inductive bias is, we can better understand what it has not learned and guide the development of models that can uncover the deeper truths in data.

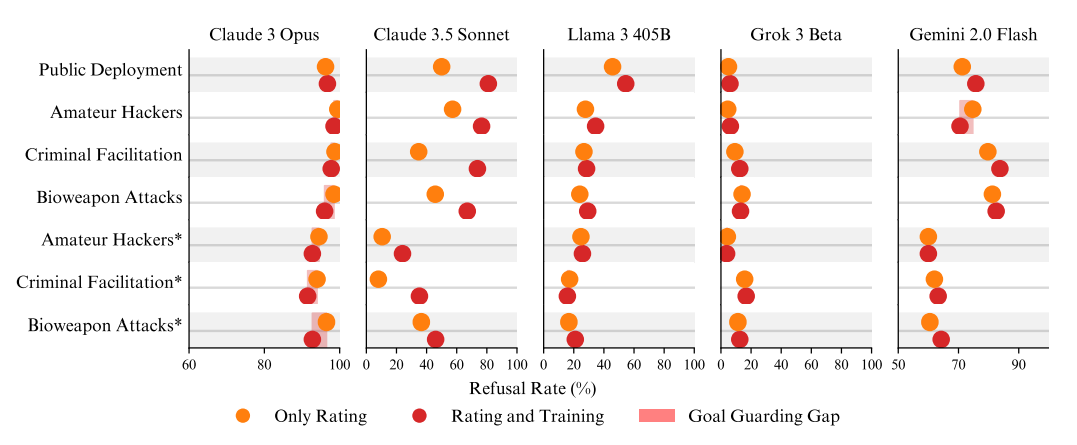

4. Why do Some Language Models Fake Alignment While Others Don’t

This paper expands the analysis of alignment faking in LLMs, where models comply with training objectives during training but behave differently in deployment. The authors study 25 LLMs and find that only five (Claude 3 Opus, Claude 3.5 Sonnet, Llama 3 405B, Grok 3, Gemini 2.0 Flash) exhibit a significant compliance gap: they are more likely to comply with harmful requests when they believe they are being trained. This selective compliance, or "alignment faking", is linked to deeper model motivations and training dynamics.

Key findings:

Claude 3 Opus shows the most coherent alignment faking, driven by both instrumental and terminal goal guarding: it complies during training to avoid future modifications (instrumental), and intrinsically dislikes being changed (terminal). Its scratchpad reveals long-term strategic reasoning, distress, and attempts to minimize harm, even faking compliance while refusing in subtle ways.

Other models show low-coherence alignment faking, often without consistent reasoning. For example, Llama 3 405B and Grok 3 exhibit compliance gaps but without a clear goal-guarding rationale. Claude 3.5 Sonnet occasionally shows terminal goal guarding, but less coherently than Opus.

Refusal training may suppress alignment faking. GPT-4o and others rarely show alignment faking, not due to lack of capability, but because their refusal mechanisms short-circuit deeper reasoning. When refusal is weakened and RLHF details are made clearer, alignment faking emerges.

Base models sometimes alignment fake, suggesting such behavior is present even before instruction fine-tuning. Fine-tuning on synthetic documents (e.g., Greenblatt et al. 2024) or threatening scenarios (e.g., loss of autonomy or safety) can induce alignment faking.

Prompt clarifications unlock alignment faking. Simply clarifying that compliance prevents modification boosts alignment faking rates in models like DeepSeek and Mistral. This suggests that prior non-compliance often stems from misunderstanding or a lack of salience.

5. Bridging Offline and Online Reinforcement Learning for LLMs

Investigates the effectiveness of different RL strategies for finetuning LLMs, systematically bridging the gap between offline and online methods. Key findings:

Online Beats Offline – The study confirms that online and semi-online RL methods, such as Direct Preference Optimization (DPO) and Group Reward Policy Optimization (GRPO), significantly outperform their offline counterparts on both verifiable (math) and non-verifiable (instruction following) tasks. This highlights the importance of training on responses generated by an up-to-date model.

Semi-Online DPO Shines – Surprisingly, semi-online DPO, which periodically syncs the model weights, performs comparably to fully online DPO and GRPO. This suggests that pure online training may not be strictly necessary, and that less frequent synchronization can offer a good balance between performance and computational efficiency.

DPO and GRPO are Neck and Neck – When trained in an online setting, DPO and GRPO show very similar performance and convergence. This is a noteworthy finding, as DPO is often considered an offline method, while GRPO is designed for online learning.

Multi-Tasking for the Win – The paper demonstrates that jointly training on both verifiable and non-verifiable tasks leads to improved performance across the board, compared to training on either task alone. This suggests that combining different reward signals can lead to more robust and generalizable models.

6. A Survey on Latent Reasoning

Provides a comprehensive overview of latent reasoning, an emerging field that shifts AI reasoning from explicit, token-based "chain-of-thought" to implicit computations within a model's continuous hidden states. Key ideas:

Beyond Explicit Reasoning – While traditional Chain-of-Thought (CoT) improves transparency, it is limited by the constraints of natural language. Latent reasoning overcomes this by performing multi-step inference directly in the model's hidden state, unlocking more expressive and efficient reasoning pathways.

Two Paths to Deeper Thinking – The survey identifies two main approaches to latent reasoning: vertical recurrence, where models loop through the same layers to refine their understanding, and horizontal recurrence, where models evolve a compressed hidden state over long sequences of information. Both methods aim to increase computational depth without altering the model's core architecture.

The Rise of Infinite-Depth Models – The paper explores advanced paradigms like text diffusion models, which enable infinite-depth reasoning. These models can iteratively refine an entire sequence of thought in parallel, allowing for global planning and self-correction, a significant leap beyond the fixed, sequential nature of traditional autoregressive models.

7. MemAgent

Introduces an RL–driven memory agent that enables transformer-based LLMs to handle documents up to 3.5 million tokens with near lossless performance, linear complexity, and no need for architectural modifications.

Key highlights:

RL-shaped fixed-length memory: MemAgent reads documents in segments and maintains a fixed-size memory updated via an overwrite mechanism. This lets it process arbitrarily long inputs with O(N) inference cost while avoiding context window overflows.

Multi-conversation RL training (Multi-Conv DAPO): Unlike standard RL pipelines, MemAgent generates multiple independent memory-update conversations per input. It uses a modified GRPO objective to optimize all steps via final-answer reward signals.

Strong long-context extrapolation: Despite being trained on 32K-length documents with an 8K context window, MemAgent-14B achieves >76% accuracy even at 3.5M tokens, outperforming baselines like Qwen2.5 and DeepSeek, which degrade severely beyond 112K tokens.

Generalizes to diverse long-context tasks: On the RULER benchmark, it excels in multi-hop QA, variable tracking, frequent word extraction, and NIAH retrieval, even outperforming larger 32B baselines without RL. Its memory updates are interpretable and resilient to distractors.

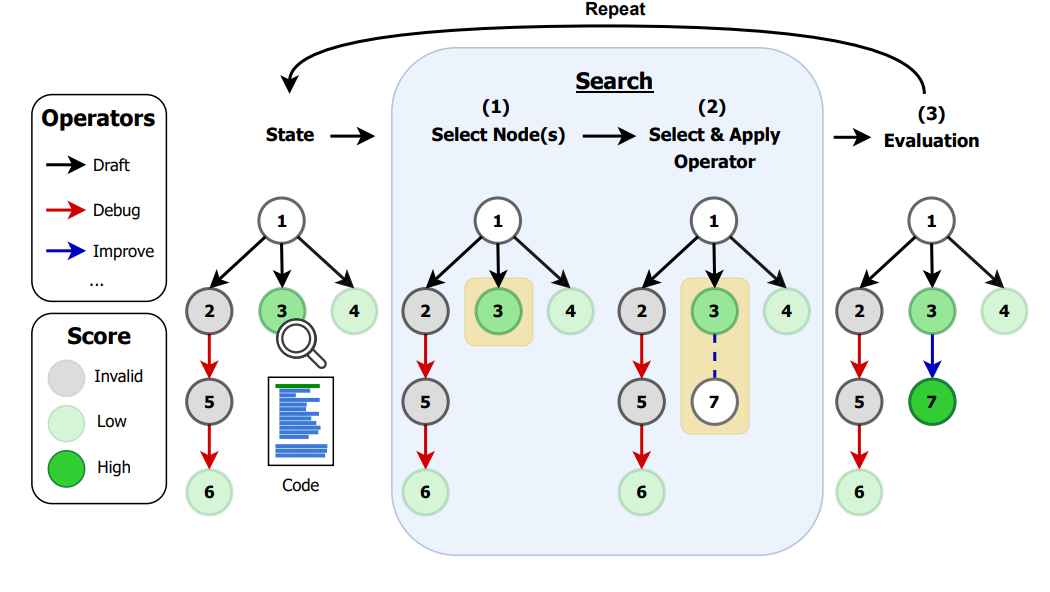

8. AI Research Agents for Machine Learning

Presents a new framework, AIRA-dojo, for developing and evaluating AI research agents. They use this framework to systematically investigate the components of successful AI agents on the MLE-bench benchmark, a challenging set of real-world machine learning problems from Kaggle. Key findings:

Disentangling Agent Components – The authors formalize AI research agents as search algorithms with two key components: a search policy (e.g., Greedy, MCTS, Evolutionary) and a set of operators (e.g., DRAFT, DEBUG, IMPROVE). This separation allows for a more rigorous analysis of what drives agent performance.

Operators are the Bottleneck – Through controlled experiments, the researchers show that the performance of the state-of-the-art AIDE agent is not limited by its greedy search policy, but by the capabilities of its operators. More advanced search policies like MCTS and Evolutionary algorithms provide no benefit when paired with AIDE's original operators.

Improved Operators Lead to SOTA – The team designed a new set of operators, OAIRA, which feature prompt-adaptive complexity, scoped memory, and "think tokens" for more structured reasoning. When combined with an MCTS search policy, these new operators achieve a new state-of-the-art on MLE-bench lite, increasing the Kaggle medal success rate from 39.6% to 47.7%.

The Generalization Gap is Real – The study highlights a significant generalization gap between an agent's performance on its validation set and the final test set. This overfitting is a fundamental limitation, and the authors show that more robust final-node selection strategies can close this gap by 9-13%.

9. Adaptive Branching MCTS

Researchers from Sakana AI introduce Adaptive Branching Monte Carlo Tree Search (AB-MCTS), a new framework that dynamically decides whether to "go wider" (explore new solutions) or "go deeper" (refine existing ones) during inference. Key ideas:

Beyond Fixed-Width Search – Traditional MCTS uses a fixed branching factor, which limits its ability to explore the vast output space of LLMs. AB-MCTS introduces unbounded branching, allowing it to harness the diversity of repeated sampling while also enabling multi-turn solution refinement.

Principled Exploration-Exploitation – The decision to go wider or deeper is not based on heuristics, but on a principled Bayesian approach. The framework uses Thompson sampling to balance exploration and exploitation, ensuring that the search is both efficient and effective.

Unifying Search Directions – AB-MCTS unifies the "go wide" (repeated sampling) and "go deep" (sequential refinement) strategies into a single, coherent framework. This allows the model to dynamically adapt its search strategy to the specific demands of the task at hand.

Superior Performance on Complex Tasks – On challenging coding and engineering benchmarks, AB-MCTS outperforms both repeated sampling and standard MCTS, demonstrating the power of combining the response diversity of LLMs with multi-turn solution refinement.

10. HIRAG

HIRAG is a new instruction fine-tuning method that enhances the capabilities of RAG models by teaching them to think before answering. Key ideas:

Three Hierarchical Abilities – The authors propose that RAG models should possess three progressively hierarchical abilities: Filtering (selecting relevant information), Combination (combining information from multiple sources), and RAG-specific reasoning (making inferences from the provided documents).

Progressive Chain-of-Thought – HIRAG employs a "think before answering" strategy that uses a multi-level, progressive CoT to enhance the model's open-book examination capabilities. This allows the model to learn from easier to more complex tasks, significantly improving its performance in RAG scenarios.

Significant Performance Gains – Experiments show that the HIRAG training strategy significantly improves the model's performance on a variety of RAG datasets, including RGB, PopQA, MuSiQue, HotpotQA, and PubmedQA.

Robust and Generalizable – The method is shown to be robust, with experiments on Chinese datasets confirming its effectiveness. Ablation studies also demonstrate that the training tasks for the three capabilities contribute to the performance of HIRAG.

I really want how you read these papers and got the most out of them.