🥇Top AI Papers of the Week

The Top AI Papers of the Week (April 26 - May 3)

1. Agentic Harness Engineering

Most coding-agent harnesses are still tuned by hand or kept alive through brittle trial-and-error self-evolution. This paper introduces Agentic Harness Engineering (AHE), a framework that makes harness evolution observable and falsifiable. AHE separates the system into three layers: components stored as revertible files, experience condensed from millions of trajectory tokens into structured evidence, and decisions written as predictions that get checked against task outcomes. Every edit becomes a contract you can verify or revert.

Three-layer evolution model: Components, experience, and decisions are each first-class artifacts. Components are versioned files, experience is compressed evidence pulled from full trajectory logs, and decisions are explicit hypotheses with expected outcomes. The structure turns black-box harness tuning into an auditable engineering loop.
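
To make the "edit as a contract" idea concrete, here is a minimal sketch, not the paper's implementation. The `Decision` schema, the `apply_decision` helper, and the `evaluate` callback are all hypothetical names; the only thing taken from the summary above is the loop itself: apply an edit to a versioned component, check the stated prediction against the observed outcome, and revert if the prediction fails.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    """A harness edit written as a falsifiable prediction (hypothetical schema)."""
    component: str                  # name of the versioned file being edited
    new_content: str                # proposed replacement content
    predicted_min_pass_rate: float  # the claim that gets checked after the edit


def apply_decision(components, decision, evaluate):
    """Apply the edit, check the prediction, and revert if it is falsified."""
    previous = components.get(decision.component)
    components[decision.component] = decision.new_content
    observed = evaluate(components)  # assumed to return a pass rate in [0, 1]
    if observed >= decision.predicted_min_pass_rate:
        return True  # prediction held, keep the edit
    # Prediction falsified: restore the earlier version of the component.
    if previous is None:
        del components[decision.component]
    else:
        components[decision.component] = previous
    return False
```

In this toy version the "experience" layer is collapsed into a single pass rate; the paper's condensed trajectory evidence would be considerably richer.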

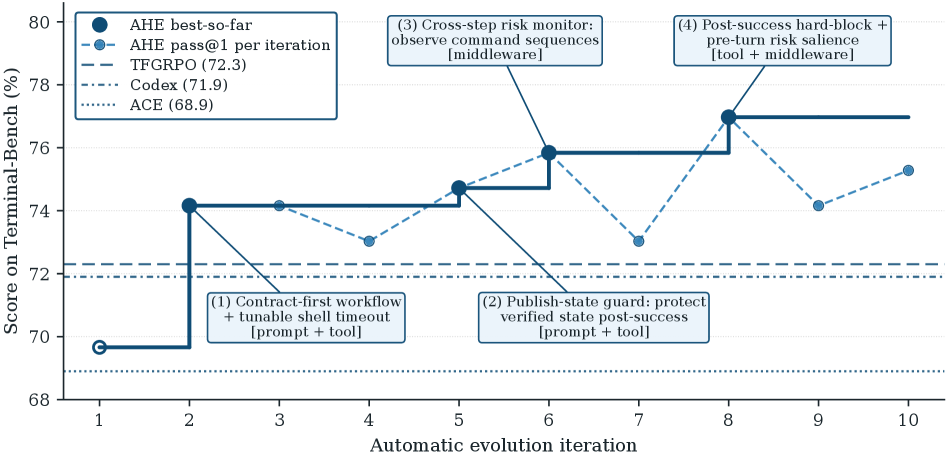

Pass@1 gains on Terminal-Bench 2: Pass@1 climbs from 69.7% to 77.0% across ten iterations, beating both human-designed Codex-CLI (71.9%) and self-evolving baselines like ACE and TF-GRPO. The framework also uses 12% fewer tokens than the seed harness on SWE-bench Verified.

Cross-model transfer: The evolved harness transfers across model families with +5.1 to +10.1 point gains, suggesting the optimizations are structural rather than overfit to a specific backbone. That is the property you actually want from harness engineering.

Why it matters: Harness work is the largest hidden cost in most agent systems. AHE is the first credible recipe for letting the harness improve itself without drifting into noise, which makes it the most important agent-systems paper of the week.

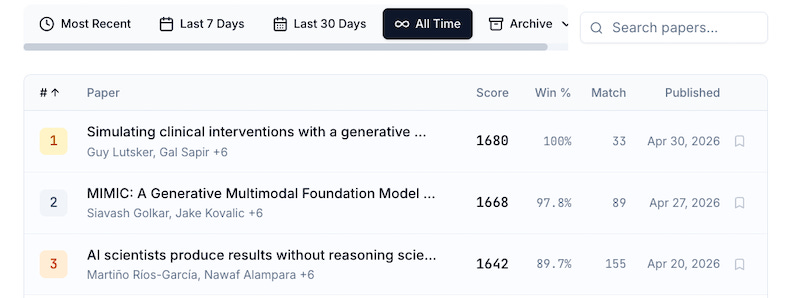

2. AgenticQwen-30B-A3B

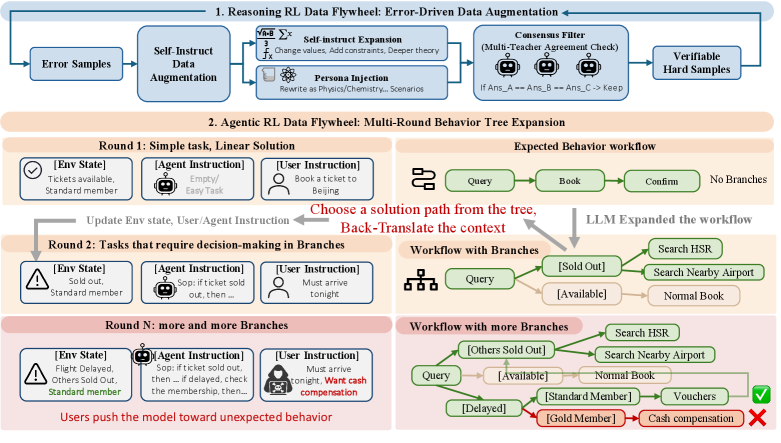

Alibaba shows that a 30B MoE model with only 3B active parameters can match Qwen3-235B on real tool-use workloads. AgenticQwen-30B-A3B averages 50.2 across TAU-2 and BFCL-V4 Multi-Turn, while AgenticQwen-8B averages 47.4. Both more than double their vanilla Qwen baselines and close most of the gap to a 235B model. The recipe is built around two reinforcement learning flywheels that run in parallel, with simulated users actively trying to mislead the agent.

Reasoning flywheel from self-failure: The first loop mines the model’s own errors and converts them into harder reasoning problems each round. The training distribution gets harder on its own as the model improves, removing the need for new human-curated reasoning data.
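
A toy sketch of the failure-mining loop, under heavy assumptions: `solves` stands in for running the model on a problem, `harden` stands in for whatever rewriting step turns a failed problem into a harder one, and `run_flywheel` is a hypothetical name. None of these come from the paper; the sketch only shows the shape of a loop whose next-round data is derived from the current round's failures.

```python
def run_flywheel(problems, solves, harden, rounds):
    """Each round keeps only the problems the model fails, then hardens them.

    Returns the list of failure sets, one per round.
    """
    history = []
    pool = list(problems)
    for _ in range(rounds):
        failures = [p for p in pool if not solves(p)]
        history.append(failures)
        # The next round's pool is built entirely from this round's failures.
        pool = [harden(p) for p in failures]
    return history
```

A real pipeline would also update the model between rounds, which this sketch leaves out.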

Agentic flywheel for tool use: The second loop grows simple linear tool-use trajectories into multi-branch behavior trees. Simulated users test recovery from misleading instructions, ambiguous goals, and failed tool calls, which is where vanilla supervised tuning typically breaks.

Real efficiency for production agents: A 30B MoE with 3B active parameters at inference is significantly cheaper to serve than a 235B dense or MoE alternative. For tool-use workloads where frontier reasoning is overkill, this changes the cost profile of shipping production agents.

A reusable recipe: The flywheel approach generalizes beyond Qwen. Teams can generate hard examples from their own agent’s failures rather than relying on static synthetic data, which is the more scalable path for domain-specific agents.

3. Agentic World Modeling

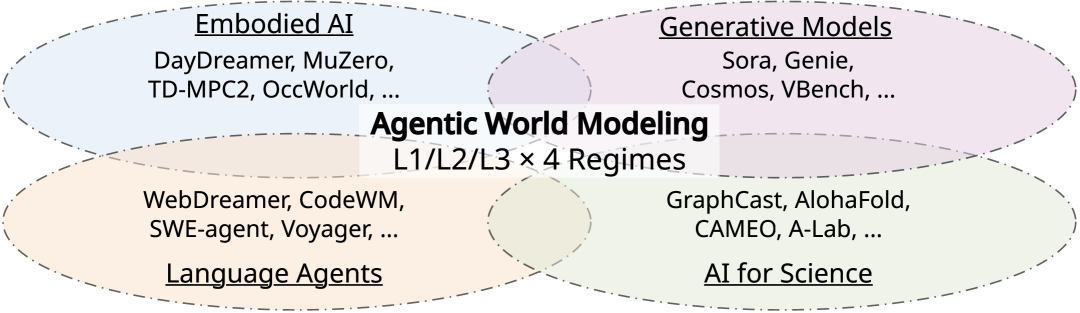

A massive 40-author survey lands the cleanest taxonomy of world models in agent research released so far. The paper proposes a “levels by laws” framework spanning three capability levels and four law regimes, then synthesizes 400+ works and 100+ representative systems across model-based RL, video generation, web and GUI agents, multi-agent simulation, and scientific discovery. As agents shift from chatbots to goal-accomplishers, the bottleneck moves from language to environment, and this is the first paper that gives builders a shared vocabulary across communities that have been working in isolation.

Three capability levels: L1 Predictors handle one-step transitions, L2 Simulators do multi-step action-conditioned rollouts, and L3 Evolvers self-revise as the world changes. The hierarchy makes it easy to place existing systems and identify where capability gaps actually live.

Four law regimes: Physical, digital, social, and scientific laws each impose different constraints on what a world model needs to capture. The framework treats them as orthogonal axes, which clarifies why a strong physics simulator can still fail at social or digital tasks.
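
One way to picture the "levels by laws" grid is as a small lookup structure. This is an illustrative sketch only: the enum names, `GRID`, and `coverage_gaps` are assumptions, and the survey may define the cells differently.

```python
from enum import Enum
from itertools import product


class Level(Enum):
    PREDICTOR = 1  # L1: one-step transitions
    SIMULATOR = 2  # L2: multi-step action-conditioned rollouts
    EVOLVER = 3    # L3: self-revises as the world changes


class Law(Enum):
    PHYSICAL = "physical"
    DIGITAL = "digital"
    SOCIAL = "social"
    SCIENTIFIC = "scientific"


# Every (level, law) cell of the grid: 3 levels x 4 regimes = 12 cells.
GRID = list(product(Level, Law))


def coverage_gaps(placements):
    """Return the grid cells that no placed system occupies.

    `placements` maps a system name to its (Level, Law) cell.
    """
    covered = set(placements.values())
    return [cell for cell in GRID if cell not in covered]
```

Placing a physics simulator at `(Level.SIMULATOR, Law.PHYSICAL)` leaves the social and digital cells empty, which is the point the paragraph above makes about orthogonal axes.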

Failure-mode catalog: The survey extracts recurring failure patterns across 100+ systems, including misaligned reward shaping, drift under non-stationarity, and brittle transfer across regimes. Each failure mode is mapped to a level and law combination, so the diagnosis is grounded.

Evaluation principles per level: The authors propose evaluation criteria specific to each capability level rather than a single benchmark. This is the right move because L1 prediction accuracy and L3 self-revision quality are not measurable on the same axis.

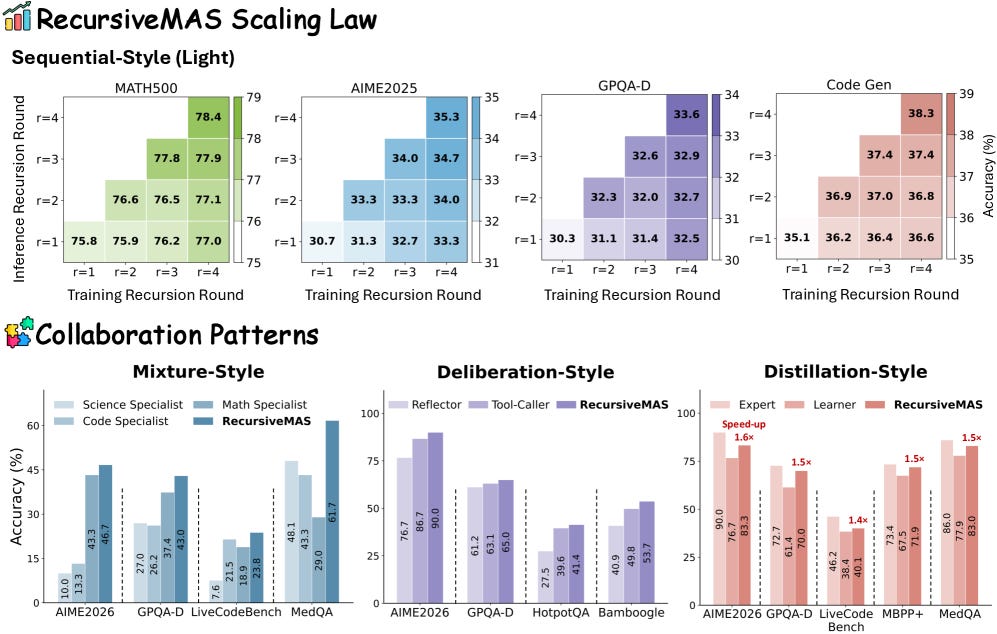

4. RecursiveMAS

Multi-agent systems usually pass full text messages between agents at every step, which causes token bloat, latency, and context dilution that all grow with team size. RecursiveMAS asks a different question: what if agents collaborated through recursive computation in a shared latent space instead of through text? The system treats a multi-agent team as a recursive computation where each agent acts like an RLM layer, iteratively passing latent representations to the next and forming a looped interaction process. Less talking, more thinking.

RecursiveLink for latent communication: A RecursiveLink module generates latent thoughts and transfers state directly between heterogeneous agents, replacing natural-language messages with internal representations. The change removes the cost of re-encoding and re-parsing text on every coordination step.
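
The control flow of a looped latent hand-off can be sketched in a few lines. This is a toy under stated assumptions: agents are plain functions over a list of floats, `recursive_team` is a hypothetical name, and nothing here models the actual RecursiveLink module or any learned parameters. It only shows state passing between agents for several rounds without any text being produced in between.

```python
from typing import Callable, List, Sequence

Latent = List[float]
Agent = Callable[[Latent], Latent]


def recursive_team(agents: Sequence[Agent], state: Latent, rounds: int) -> Latent:
    """Pass a latent state through every agent, repeated for `rounds` loops."""
    for _ in range(rounds):
        for agent in agents:
            # Each agent transforms the shared state directly; no text
            # is encoded or parsed between steps.
            state = agent(state)
    return state
```

Only the final state would need decoding into text, which is where the claimed savings on re-encoding and re-parsing would come from.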

Inner-outer loop learning: The training algorithm uses an inner loop for per-step latent updates and an outer loop for team-level credit assignment, with shared gradient-based updates across agents. This makes joint optimization tractable instead of relying on hand-tuned communication protocols.

Strong gains across 9 benchmarks: Across math, science, medicine, search, and code generation, RecursiveMAS delivers an 8.3% average accuracy gain over baselines, a 1.2x to 2.4x end-to-end inference speedup, and a 34.6% to 75.6% reduction in token usage. The efficiency story is at least as important as the accuracy story.

A path past the agent communication tax: If agent-to-agent communication is the next real bottleneck, latent-space recursion is one of the cleaner ways to scale collaboration. Teams running multi-agent systems at scale should treat this as a serious design alternative, not a research curiosity.

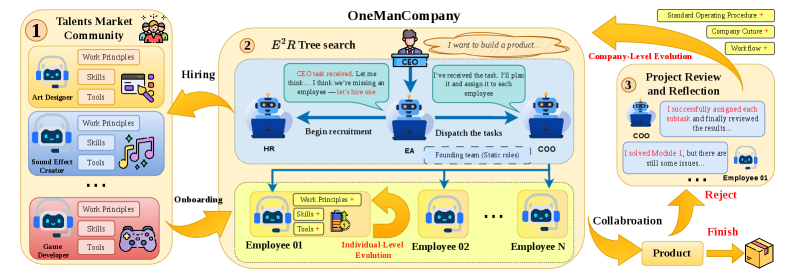

5. OneManCompany

If you are building multi-agent systems, you are probably wiring static org charts. This paper argues they should look more like a labor market. OneManCompany (OMC) replaces fixed teams with “Talents,” portable agent identities that bundle skills and tools, and a “Talent Market” where agents get recruited dynamically per task. An Explore-Execute-Review tree search decomposes work hierarchically and aggregates results back up. On PRDBench, OMC reaches 84.67% success, +15.5 points over prior SOTA, and the framework generalizes across the case studies the authors run.

Talents as portable identities: A Talent bundles a skill set, tool access, and behavioral priors into a reusable agent identity. Talents can be hired into any task without rewiring the orchestration graph, which removes most of the brittleness in pre-wired multi-agent pipelines.

Dynamic recruitment via Talent Market: Tasks post requirements, and the market matches Talents to roles based on capability fit and current load. This replaces the standard “design a team for every workflow” pattern with on-demand assembly that adapts as the task population shifts.
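
A minimal sketch of matching on capability fit and current load. The `Talent` fields, the `recruit` function, and the scoring rule (require full skill coverage, then prefer the lowest load, then the tightest fit) are assumptions made for illustration; the paper's market mechanism is not specified in this summary.

```python
from dataclasses import dataclass


@dataclass
class Talent:
    """A portable agent identity (hypothetical fields)."""
    name: str
    skills: set
    load: int = 0  # number of tasks currently assigned


def recruit(talents, required_skills):
    """Pick a Talent that covers the required skills, or None if nobody fits."""
    candidates = [t for t in talents if required_skills <= t.skills]
    if not candidates:
        return None
    # Assumed rule: lowest load first, then fewest total skills (tightest fit).
    chosen = min(candidates, key=lambda t: (t.load, len(t.skills)))
    chosen.load += 1
    return chosen
```

Because assignment updates `load`, repeated requests for the same skill spread across Talents instead of piling onto one.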

Explore-Execute-Review tree search: Work is decomposed top-down into subtasks, executed in parallel by recruited Talents, then reviewed and aggregated up the tree. The structure naturally supports retries, branching, and cross-checking without manual coordination logic.
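
The decompose, execute, and aggregate pattern can be sketched as a short recursion. This is an assumption-laden sketch: `decompose`, `execute`, and `review` are hypothetical callbacks, and execution here is sequential, whereas the paper runs subtasks in parallel.

```python
def explore_execute_review(task, decompose, execute, review, max_depth=3):
    """Split a task top-down, run the leaves, and aggregate results upward."""
    # Explore: ask for subtasks unless the depth budget is spent.
    subtasks = decompose(task) if max_depth > 0 else []
    if not subtasks:
        # Execute: a leaf task is handled directly.
        return execute(task)
    results = [
        explore_execute_review(sub, decompose, execute, review, max_depth - 1)
        for sub in subtasks
    ]
    # Review: check and combine the child results for this node.
    return review(task, results)
```

Retries and cross-checks would live inside `review`, which sees both the parent task and every child result.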

Why it matters: Pre-wired multi-agent pipelines break the moment tasks drift outside their design envelope. Treating agents as a recruitable workforce gets you self-organization and continuous improvement by default, which is what open-ended agent systems need.

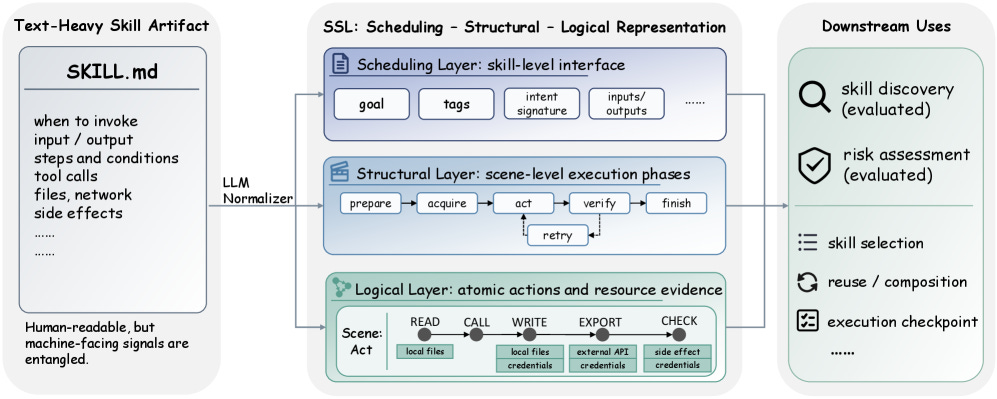

6. From Skill Text to Skill Structure

SKILL.md files entangle invocation interface, execution flow, and tool side effects in a single blob of natural language. That makes downstream discovery and risk review brittle as skill registries scale. This paper proposes SSL, a three-layer typed JSON representation drawn from Schank and Abelson’s classical work on scripts, MOPs, and conceptual dependency. An LLM-based normalizer converts existing SKILL.md files into the structure, so adoption does not require rewriting registries by hand.

Three layers, cleanly separated: A Scheduling layer captures invocation signals and trigger conditions, a Structural layer encodes execution scenes and ordering, and a Logical layer specifies atomic actions plus resource and side-effect annotations. The separation lets discovery, risk, and execution each reason about the layer they care about.
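
To show what a three-layer typed record might look like, here is a sketch using dataclasses that serialize to JSON. Every field name below is an assumption; the actual SSL schema is not given in this summary. The point is only that a consumer such as a risk reviewer can read one layer without parsing the others.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class SchedulingLayer:
    triggers: List[str]  # invocation signals and trigger conditions


@dataclass
class StructuralLayer:
    scenes: List[str]  # execution scenes, in order


@dataclass
class Action:
    name: str
    resources: List[str]
    side_effects: List[str]


@dataclass
class LogicalLayer:
    actions: List[Action]  # atomic actions with annotations


@dataclass
class Skill:
    name: str
    scheduling: SchedulingLayer
    structural: StructuralLayer
    logical: LogicalLayer


def risky_actions(skill):
    """Names of actions that declare at least one side effect."""
    return [a.name for a in skill.logical.actions if a.side_effects]


def to_json(skill):
    """Serialize the nested record to a JSON string."""
    return json.dumps(asdict(skill))
```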

Skill Discovery MRR jumps 0.573 to 0.707: Treating skills as typed structure rather than prose makes retrieval significantly more accurate, even before any model fine-tuning. The gain comes from the structure exposing what skills actually do, not just how they describe themselves.
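
For readers who have not used the metric, mean reciprocal rank (MRR) averages 1/rank of the first correct result over all queries, counting a miss as 0. The function below is a standard definition; the function name and the toy inputs are illustrative and not from the paper.

```python
def mean_reciprocal_rank(rankings, targets):
    """Average of 1/rank of the target in each ranked list (0 if absent)."""
    total = 0.0
    for ranked, target in zip(rankings, targets):
        if target in ranked:
            total += 1.0 / (ranked.index(target) + 1)  # ranks start at 1
    return total / len(rankings)
```

An MRR of 0.707 means the correct skill sits, on average, between the first and second position; 0.573 is closer to second place.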

Risk Assessment macro F1 of 0.787: The Logical layer’s resource annotations enable a 0.744 to 0.787 jump in risk classification. Auditors can now reason about side effects directly instead of inferring them from free-form prose.
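
Macro F1 is the unweighted mean of per-class F1 scores, so rare risk classes count as much as common ones. Below is a plain-Python version of the standard definition; the label names in any usage are made up for illustration and do not reflect the paper's risk taxonomy.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over all labels seen in either list."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never appears.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)
```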

A 6,184-skill corpus released: The authors ship a normalized corpus of 6,184 skills, 403 task queries, and 500 risk-labeled skills. As skill registries cross a million entries, structured representations are the only path that keeps discovery and review tractable.

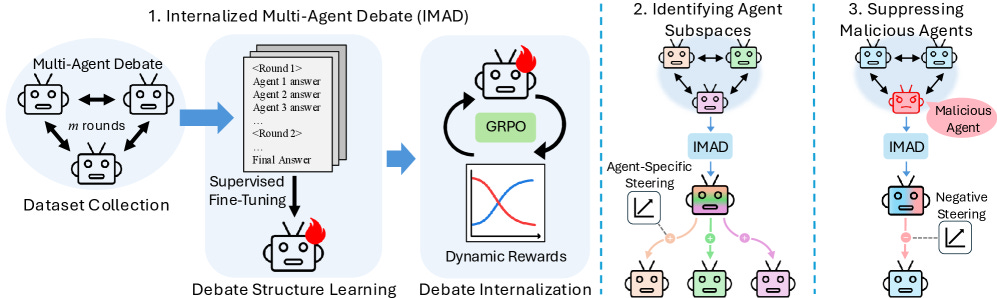

7. Latent Agents

Multi-agent debate makes models reason better. It also burns tokens generating long transcripts before any answer comes out. Latent Agents distills the entire debate into a single LLM through a two-stage fine-tuning pipeline: the model first learns debate structure, then internalizes it through dynamic reward scheduling and length clipping. The internalized model matches or beats explicit multi-agent debate while using up to 93% fewer tokens, which makes debate-quality reasoning practical at production scale.

Two-stage internalization pipeline: Stage one teaches the structure of debate (turn taking, critique, revision) through supervised fine-tuning on transcript data. Stage two uses dynamic reward scheduling and length clipping to compress that structure into single-pass reasoning without losing the gains from the multi-agent setup.

Up to 93% token savings: The internalized model matches or beats explicit debate accuracy while drastically reducing inference cost. For teams running reasoning workloads at scale, this is the kind of efficiency win that turns a research idea into a deployment default.

Activation steering reveals agent subspaces: The “agents” survive distillation as identifiable circuits in activation space. Probing finds interpretable directions corresponding to different agent perspectives, which means the internal structure persists even when the external transcript is gone.

A safety angle worth noting: When malicious agents are deliberately embedded via distillation, negative steering suppresses them more cleanly than steering a base model would, with smaller hits to general performance. Internalized debate may turn out to be a useful interpretability and alignment substrate, not just a token-saver.

8. OCR-Memory

Most agent memory systems compress trajectories into text summaries and hope the model remembers what matters, which is exactly where the information loss hides. OCR-Memory renders the agent’s interaction history as images with indexed visual anchors, then retrieves via a locate-and-transcribe pipeline: the model scans visual memory, predicts the index of the relevant region, and the original text is fetched verbatim from a database. Older trajectories are stored as low-resolution thumbnails with active-recall up-sampling, and the method reaches SOTA on Mind2Web and AppWorld under strict context limits.
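
The locate-and-transcribe split can be illustrated with a toy store. Everything here is a stand-in: `AnchoredMemory` and `keyword_locate` are hypothetical names, a truncated text preview plays the role of the rendered image, and a keyword match plays the role of the vision model. What the sketch preserves is the key property that the locator only returns an index and the answer is fetched verbatim rather than regenerated.

```python
class AnchoredMemory:
    """Toy memory: a lossy preview per entry for locating, full text for recall."""

    def __init__(self, preview_chars=12):
        self._texts = []
        self._previews = []
        self._preview_chars = preview_chars

    def add(self, text):
        """Store the text and return its anchor index."""
        self._texts.append(text)
        self._previews.append(text[: self._preview_chars])
        return len(self._texts) - 1

    def recall(self, query, locate):
        """Locate an anchor from previews, then return the stored text verbatim."""
        anchor = locate(query, self._previews)
        return self._texts[anchor]


def keyword_locate(query, previews):
    """Stand-in locator: index of the first preview containing the query."""
    for index, preview in enumerate(previews):
        if query.lower() in preview.lower():
            return index
    raise LookupError(query)
```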

9. When to Retrieve During Reasoning

Most RAG systems retrieve once, before the model starts reasoning. Large reasoning models like o1 and R1 do not work that way. They generate 12k to 25k token chains of thought and hit knowledge gaps mid-inference, long after the retrieval window closed. ReaLM-Retrieve is a reasoning-aware retrieval framework that injects evidence during multi-step inference, detects uncertainty at reasoning-step granularity, and learns a policy for when external evidence actually helps. It achieves +10.1% absolute F1 over standard RAG across MuSiQue, HotpotQA, and 2WikiMultiHopQA, with 47% fewer retrieval calls than fixed-interval IRCoT, and hits 71.2% F1 on 2-4 hop MuSiQue with only 1.8 retrieval calls per question.
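
A rough sketch of retrieval gated by step-level uncertainty. The paper learns a policy for when to retrieve; this sketch swaps in a fixed threshold, which is a simplification. `next_step`, `uncertainty`, and `retrieve` are hypothetical callbacks standing in for the reasoning model, the uncertainty detector, and the retriever.

```python
def reason_with_retrieval(next_step, uncertainty, retrieve,
                          threshold=0.5, max_steps=20):
    """Generate reasoning steps, fetching evidence only after uncertain ones.

    Returns the full trace (steps plus injected evidence) and the call count.
    """
    trace, calls = [], 0
    for _ in range(max_steps):
        step = next_step(trace)  # the model sees everything so far
        if step is None:         # None signals that reasoning has finished
            break
        trace.append(step)
        if uncertainty(step) > threshold:
            # Inject evidence mid-chain so later steps can condition on it.
            trace.append(retrieve(step))
            calls += 1
    return trace, calls
```

Counting `calls` makes the trade-off measurable: the reported 1.8 retrieval calls per question is the kind of number this counter would track.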

10. Co-evolving Decisions and Skills

Long-horizon agents fail in two ways: the decision-maker cannot decompose well, or the skill library goes stale. This paper introduces a co-evolution framework where an LLM decision agent and a dynamic skill bank improve each other through iterative refinement. The decision agent picks and chains skills, performance feedback updates both the policy and the skills, and new skills emerge by generalizing successful sequences instead of being hand-coded upfront. Most long-horizon agent stacks treat skills and decision-making as separate optimization problems, which is why they plateau. Co-evolution gives you adaptive planning and a growing library of reusable behaviors from a single loop, which is what you want when task structure is not predetermined, as in robotics, game agents, and complex planning.
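
A toy sketch of one feedback loop updating both sides at once. The representation is assumed, not taken from the paper: the skill bank is a dict from skill name to primitive steps, the "policy" is reduced to a per-skill score, and a new skill is created by concatenating a chain that succeeded. `co_evolve` is a hypothetical name.

```python
from collections import defaultdict


def co_evolve(skill_bank, episodes):
    """Update skill scores and grow the bank from successful skill chains.

    `episodes` is a list of (chain, succeeded) pairs, where `chain` is the
    ordered list of skill names the decision agent used.
    """
    scores = defaultdict(int)
    for chain, succeeded in episodes:
        # Policy side: reward or penalize every skill used in the episode.
        for name in chain:
            scores[name] += 1 if succeeded else -1
        # Skill side: a successful multi-skill chain becomes a reusable skill.
        if succeeded and len(chain) > 1:
            composite = "+".join(chain)
            if composite not in skill_bank:
                skill_bank[composite] = [
                    step for name in chain for step in skill_bank[name]
                ]
    return skill_bank, dict(scores)
```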