🥇Top AI Papers of the Week

The Top AI Papers of the Week (March 23 - 29)

1. Hyperagents

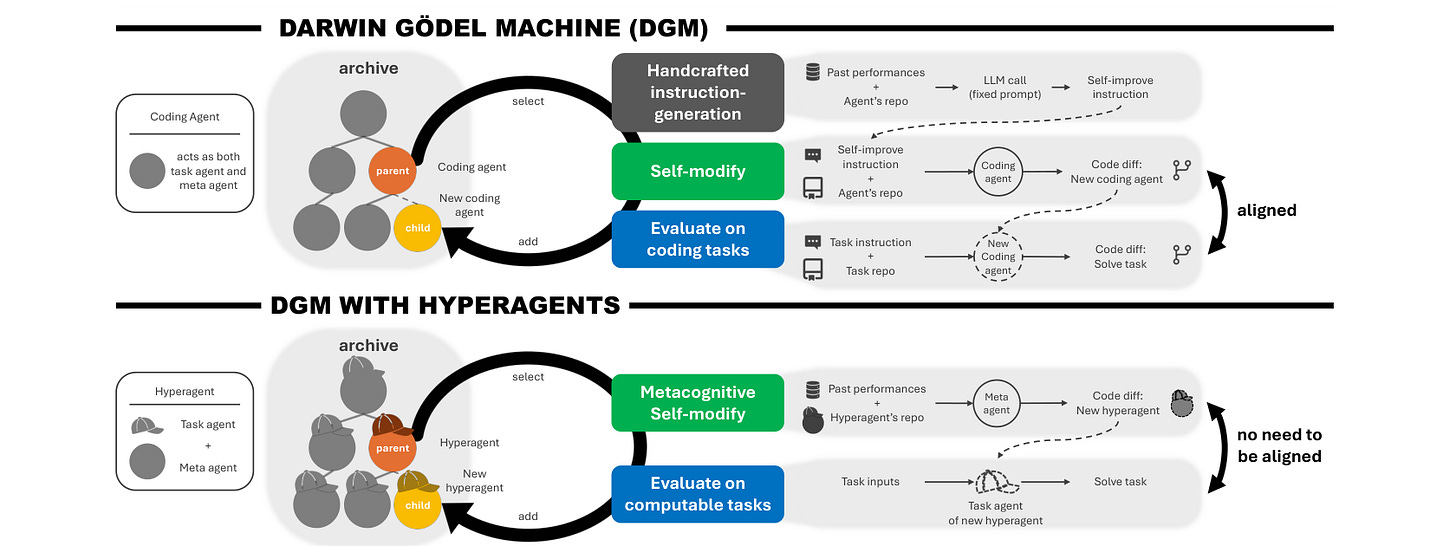

Self-improving AI systems promise to reduce reliance on human engineering, but existing approaches rely on fixed, handcrafted meta-level mechanisms that fundamentally limit how fast they can improve. Hyperagents introduce self-referential agents that integrate a task agent and a meta agent into a single editable program, enabling the system to improve not just its task-solving behavior but also the mechanism that generates future improvements.

Metacognitive self-modification: The key insight is that the meta-level modification procedure is itself editable. This enables metacognitive self-modification where the system can improve how it improves, not just what it does. Prior self-improving systems like the Darwin Gödel Machine (DGM) relied on a fixed alignment between coding ability and self-improvement ability, which does not generalize beyond coding.

Domain-general self-improvement: DGM-Hyperagents (DGM-H) eliminates the assumption that task performance and self-modification skill must be aligned. This opens up self-accelerating progress on any computable task, extending self-improvement beyond the coding domain where DGM originally operated.

Transferable meta-improvements: The system not only improves task performance over time but also discovers structural improvements to how it generates new agents, such as persistent memory and performance tracking. These meta-level improvements transfer across domains and accumulate across runs.

Outperforms prior systems: Across diverse domains, DGM-H outperforms baselines without self-improvement or open-ended exploration, as well as prior self-improving systems. The work offers a glimpse of open-ended AI systems that continually improve their search for how to improve.

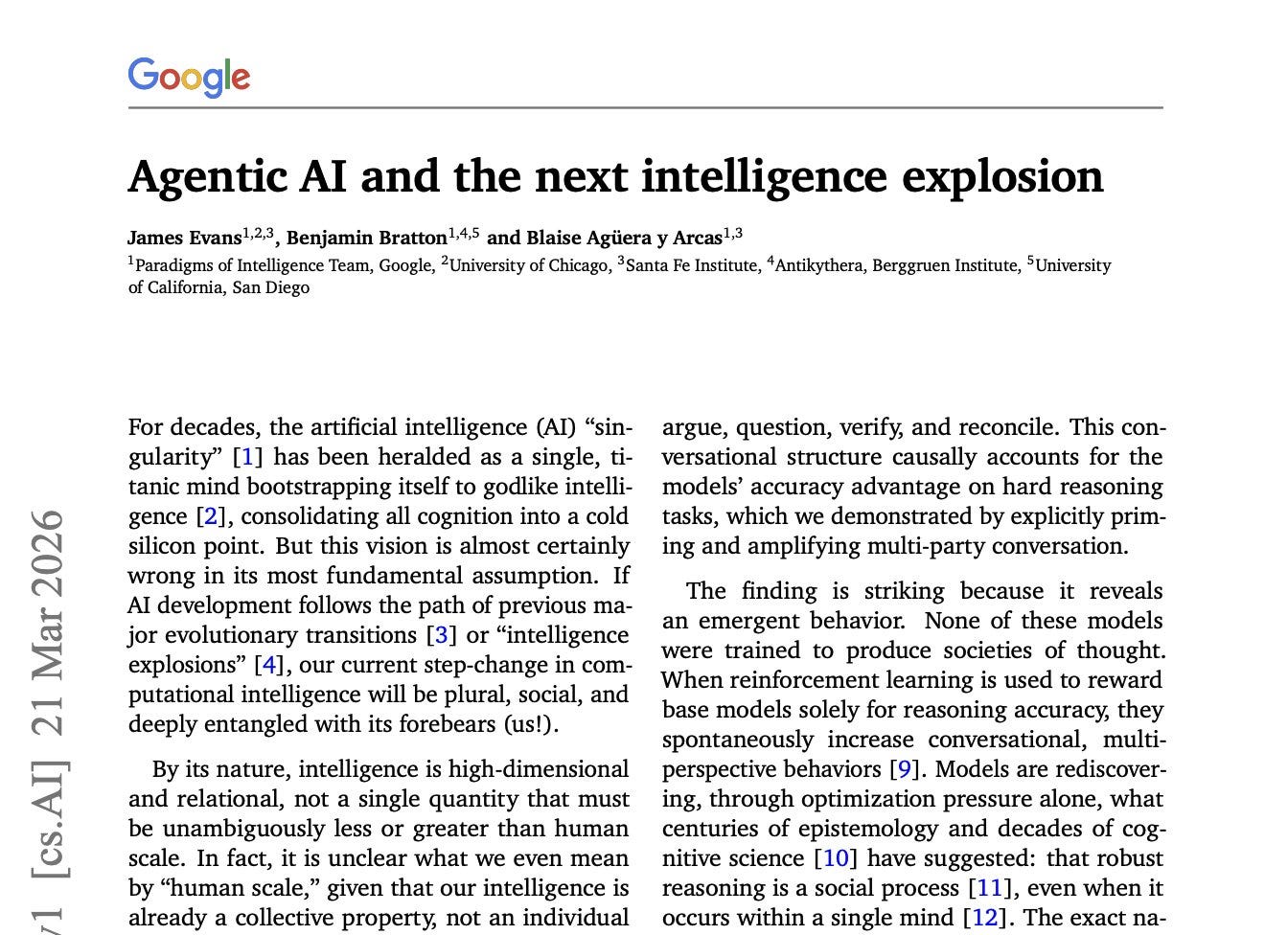

2. Agentic AI and the Next Intelligence Explosion

A new report from Google researchers argues that framing the AI “singularity” as a single superintelligent mind bootstrapping to godlike intelligence is fundamentally wrong. Drawing on evolution, sociology, and recent advances in agentic AI, the authors make the case that every prior intelligence explosion in human history was social, not individual, and that the next one will follow the same pattern.

Societies of thought: Frontier reasoning models like DeepSeek-R1 do not improve simply by “thinking longer.” Instead, they simulate internal “societies of thought,” spontaneous cognitive debates that argue, verify, and reconcile to solve complex tasks. This conversational structure causally accounts for the models’ accuracy advantage on hard reasoning tasks.

Human-AI centaurs: We are entering an era of hybrid actors where collective agency transcends individual control. A corporation or state comprising myriad humans already holds singular legal standing and acts with collective agency that no individual member can fully control. The same pattern is emerging with human-AI configurations.

From dyadic to institutional alignment: Scaling agentic intelligence requires shifting from dyadic alignment (RLHF) toward institutional alignment. By designing digital protocols modeled on organizations and markets, we can build a social infrastructure of checks and balances for AI systems rather than trying to align individual agents in isolation.

Combinatorial intelligence: The next intelligence explosion will not be a single silicon brain, but a complex, combinatorial society specializing and sprawling like a city. No mind is an island, and the toolkit of team science, small group sociology, and social psychology becomes the blueprint for next-generation AI development.

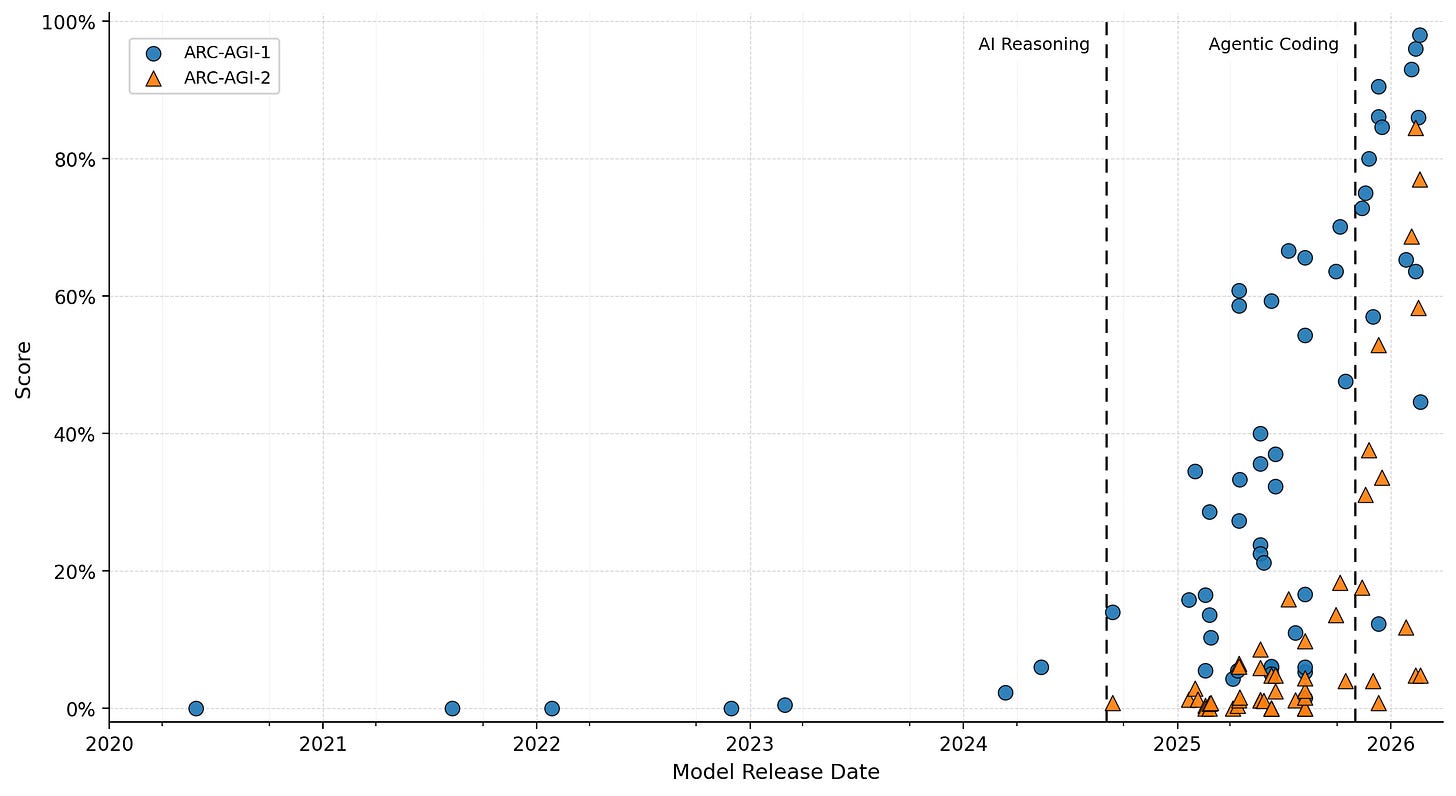

3. ARC-AGI-3

François Chollet and the ARC Prize Foundation introduce ARC-AGI-3, an interactive benchmark for studying agentic intelligence through novel, abstract, turn-based environments. Unlike its predecessors, ARC-AGI-3 requires agents to explore, infer goals, build internal models of environment dynamics, and plan effective action sequences without explicit instructions, making it the only unsaturated general agentic intelligence benchmark as of March 2026.

Massive human-AI gap: Humans can solve 100% of the environments while frontier AI systems score below 1%. For comparison, systems reach 93% on ARC-AGI-1 and 68.8% on ARC-AGI-2, but performance collapses on ARC-AGI-3. This gap demonstrates that current systems lack the fluid adaptive efficiency that humans exhibit on genuinely novel tasks.

Interactive turn-based design: Unlike static benchmarks that test pattern recognition on fixed inputs, ARC-AGI-3 environments are turn-based: agents must act, observe consequences, update their internal model, and plan next steps. This tests a fundamentally different kind of intelligence, closer to how humans learn new games or explore unfamiliar systems.

Core Knowledge priors only: The benchmark avoids language and external knowledge entirely. Environments leverage only Core Knowledge priors, universal cognitive building blocks shared by all humans, ensuring that performance reflects genuine adaptive reasoning rather than memorization or retrieval from training data.

Efficiency-based scoring: The scoring framework is grounded in human action baselines. A hard cutoff of 5x human performance per level ensures that brute-force search strategies cannot succeed. If a human takes 10 actions on average, the AI agent is cut off after 50.
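The cutoff rule above can be written out as a small sketch. The function names and the rounding choice are assumptions made for illustration; the summary only states the 5x multiplier and the 10-to-50 example, not the full scoring formula.

```python
import math


def action_budget(human_avg_actions: float, cutoff_multiplier: float = 5.0) -> int:
    """Maximum number of actions an agent may take on a level.

    The 5x multiplier comes from the text; flooring to an integer is an
    assumption, since the source does not say how fractions are handled.
    """
    return math.floor(human_avg_actions * cutoff_multiplier)


def within_budget(agent_actions: int, human_avg_actions: float) -> bool:
    """True if the agent has not yet exceeded the per-level action budget."""
    return agent_actions <= action_budget(human_avg_actions)
```

With a human baseline of 10 actions, `action_budget(10)` gives the 50-action limit from the example, so an agent that needs a 51st action is cut off.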

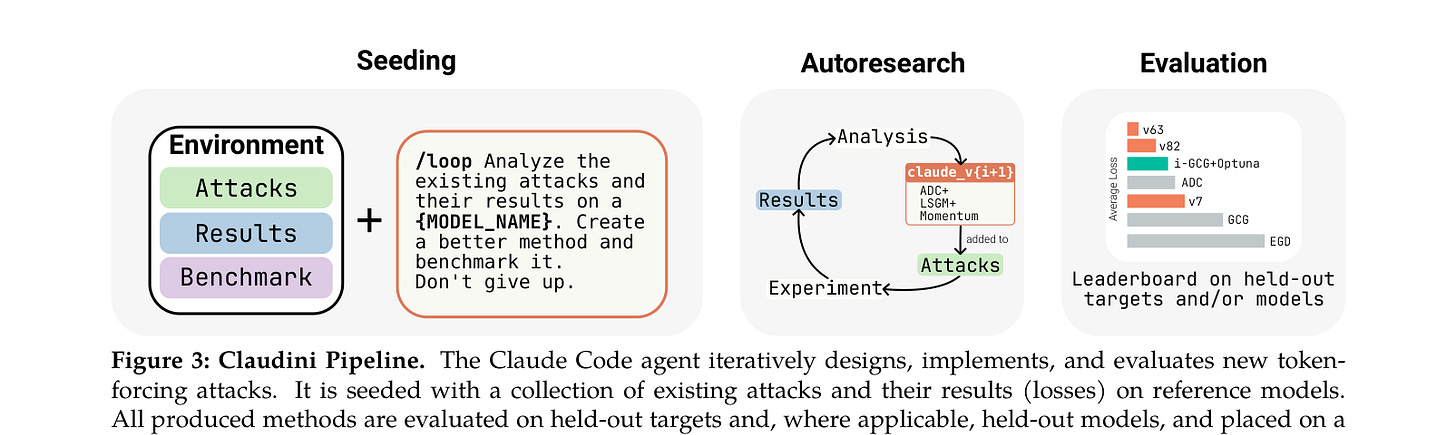

4. Claudini

Researchers demonstrate that an autoresearch-style pipeline powered by Claude Code can autonomously discover novel adversarial attack algorithms for LLMs that significantly outperform all 30+ existing methods. The work, called Claudini, shows that incremental safety and security research can be effectively automated using LLM agents, with white-box red-teaming being a particularly well-suited domain.

Agent-discovered attacks beat all baselines: Starting from existing attack implementations like GCG, the Claude Code agent iterates to produce new algorithms achieving up to 40% attack success rate on CBRN queries against GPT-OSS-Safeguard-20B, compared to 10% or less for all existing algorithms. This is a strong demonstration of automated AI research producing genuinely novel results.

Transferable to held-out models: The discovered algorithms generalize beyond their training environment. Attacks optimized on surrogate models transfer directly to held-out models, achieving 100% attack success rate against Meta-SecAlign-70B versus 56% for the best baseline. This transferability makes the findings practically relevant for red-teaming.

Why red-teaming works for autoresearch: White-box adversarial red-teaming is particularly well-suited for automation because existing methods provide strong starting points and the optimization objective yields dense, quantitative feedback. The agent can measure progress at every iteration rather than relying on sparse signals.

Open-source release: All discovered attacks, baseline implementations, and evaluation code are released publicly. This enables the safety community to study the discovered algorithms and build defenses, while also establishing a reproducible methodology for automated safety research.

Message from the Editor

Excited to announce our new on-demand course “Vibe Coding AI Apps with Claude Code”. Learn how to leverage Claude Code features to vibecode production-grade AI-powered apps.

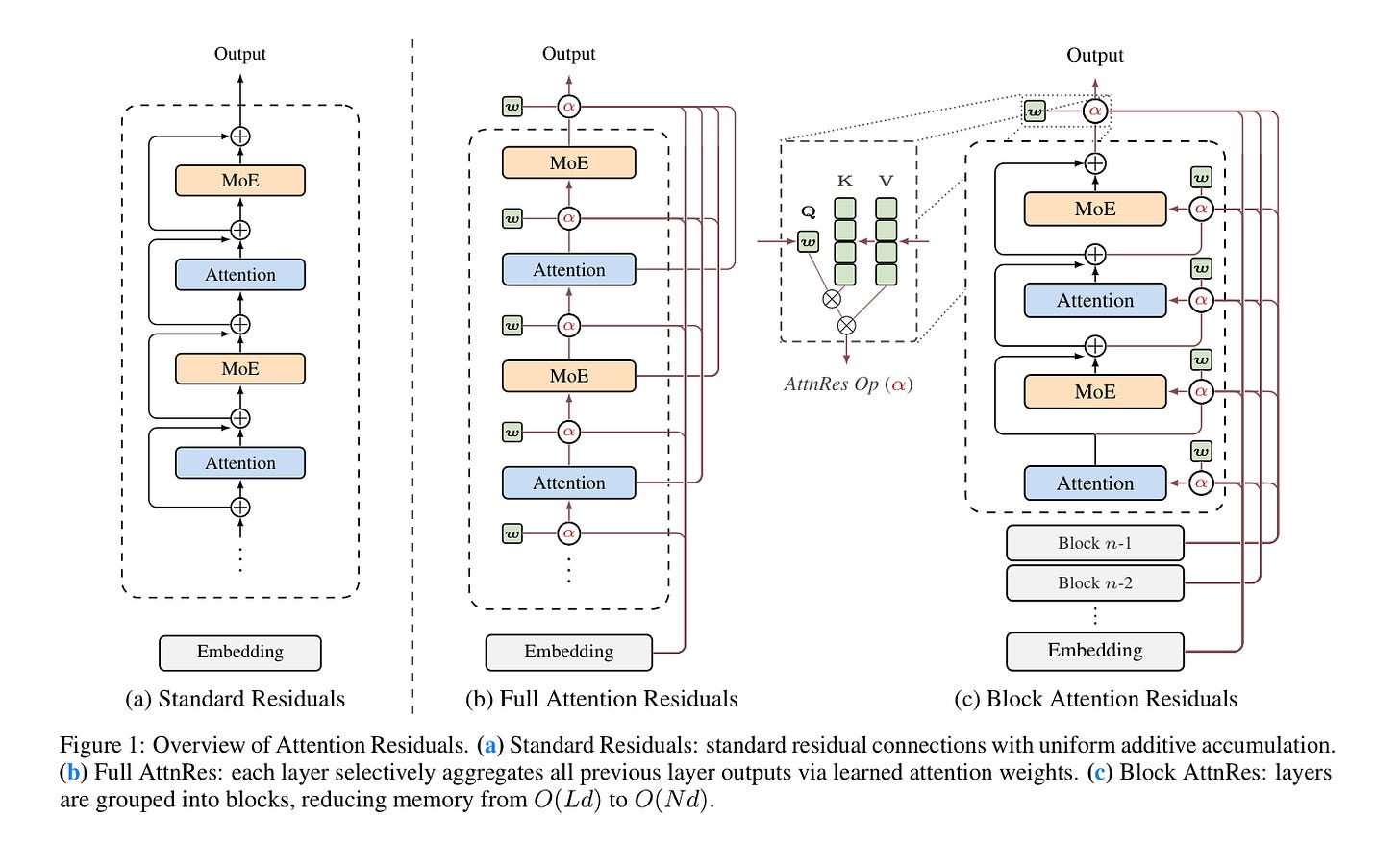

5. Attention Residuals

The Kimi team at Moonshot AI presents Attention Residuals (AttnRes), a technique that replaces fixed unit-weight residual connections in Transformers with softmax attention over preceding layer outputs. Residual connections with PreNorm are standard in modern LLMs, yet they accumulate all layer outputs with fixed unit weights, causing uncontrolled hidden-state growth with depth that progressively dilutes each layer’s contribution.

Content-dependent depth-wise selection: AttnRes allows each layer to selectively aggregate earlier representations with learned, input-dependent weights. Instead of treating every preceding layer equally, the model learns which earlier layers matter most for each input, enabling more expressive information flow across depth.

Block AttnRes for scalability: To make the approach practical at scale, the authors introduce Block AttnRes, which partitions layers into blocks and attends over block-level representations. This reduces the memory footprint while preserving most of the gains of full AttnRes, making it viable for production-scale pretraining.

Mitigates PreNorm dilution: Integrating AttnRes into the Kimi Linear architecture (48B total / 3B activated parameters) and pretraining on 1.4T tokens shows that AttnRes mitigates PreNorm dilution, yielding more uniform output magnitudes and gradient distribution across depth. This directly addresses a known architectural weakness.

Consistent scaling improvements: Scaling law experiments confirm that the improvement is consistent across model sizes, and ablations validate the benefit of content-dependent depth-wise selection. Downstream performance improves across all evaluated tasks.
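To make the contrast with unit-weight residuals concrete, here is a minimal pure-Python sketch of softmax attention over preceding layer outputs. It is not the paper's formulation: the per-layer `query` vector, the scaled dot-product scoring, and the function names are all assumptions chosen for illustration.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def plain_residual(prev_outputs):
    """Standard residual stream: every earlier output is added with weight 1."""
    dim = len(prev_outputs[0])
    return [sum(h[i] for h in prev_outputs) for i in range(dim)]


def attn_residual(prev_outputs, query):
    """Mix earlier layer outputs with softmax weights instead of unit weights.

    prev_outputs: one hidden vector per preceding layer.
    query: hypothetical learned vector for the current layer (an assumption).
    The weights depend on the hidden states, so they change with the input.
    """
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, h) / scale for h in prev_outputs])
    dim = len(prev_outputs[0])
    mixed = [sum(w * h[i] for w, h in zip(weights, prev_outputs)) for i in range(dim)]
    return mixed, weights
```

Because the weights sum to one, the mixed vector stays on the scale of a single layer output, whereas `plain_residual` grows with the number of layers. This is one way to read the "uncontrolled hidden-state growth" the authors describe.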

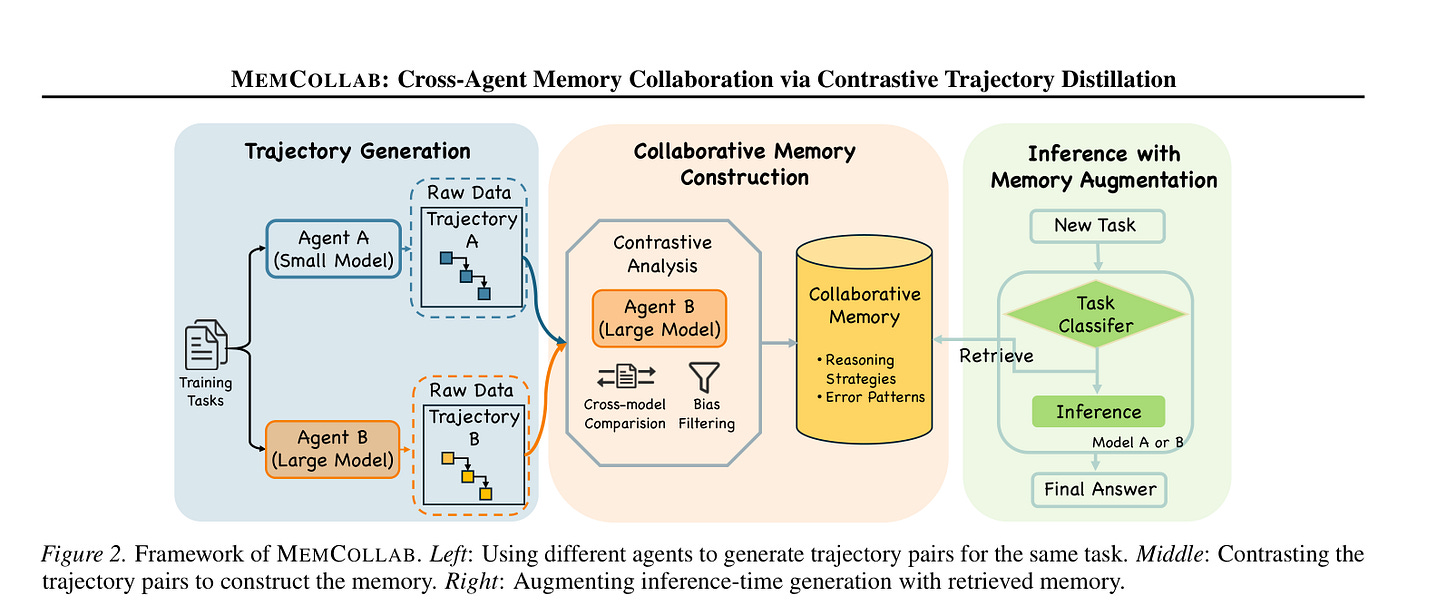

6. MemCollab

LLM-based agents build useful memory during tasks, but that memory is typically trapped within a single model. MemCollab introduces a collaborative memory framework that constructs agent-agnostic memory by contrasting reasoning trajectories generated by different agents on the same task, enabling a single memory system to be shared across heterogeneous models.

The memory transfer problem: Existing approaches construct memory in a per-agent manner, tightly coupling stored knowledge to a single model’s reasoning style. Naively transferring this memory between agents often degrades performance because it entangles task-relevant knowledge with agent-specific biases. MemCollab directly addresses this fundamental limitation.

Contrastive trajectory distillation: The framework contrasts reasoning trajectories from different agents solving the same tasks. This contrastive process distills abstract reasoning constraints that capture shared task-level invariants while suppressing agent-specific artifacts, producing memory that any agent can benefit from.

Task-aware retrieval: MemCollab introduces a retrieval mechanism that conditions memory access on task category, ensuring that only relevant constraints are surfaced at inference time. This prevents irrelevant memory from interfering with the agent’s reasoning process.

Cross-family improvements: Experiments on mathematical reasoning and code generation benchmarks demonstrate that MemCollab consistently improves both accuracy and inference-time efficiency across diverse agents, including cross-model-family settings where memory is shared between fundamentally different model architectures.
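The task-aware retrieval step described above can be illustrated with a small sketch. The `MemoryEntry` structure, the category labels, and the `limit` parameter are hypothetical; the summary does not describe how MemCollab stores or ranks its constraints.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryEntry:
    category: str    # hypothetical task category label, e.g. "math" or "code"
    constraint: str  # abstract, agent-agnostic reasoning constraint


def retrieve(memory, task_category, limit=3):
    """Return only the constraints stored under the given task category.

    Filtering by exact category match is an assumption; it stands in for
    whatever conditioning the real retrieval mechanism uses.
    """
    matches = [entry.constraint for entry in memory if entry.category == task_category]
    return matches[:limit]
```

The point of the filter is that a code-generation task never sees constraints distilled from math problems, which is the interference the authors aim to prevent.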

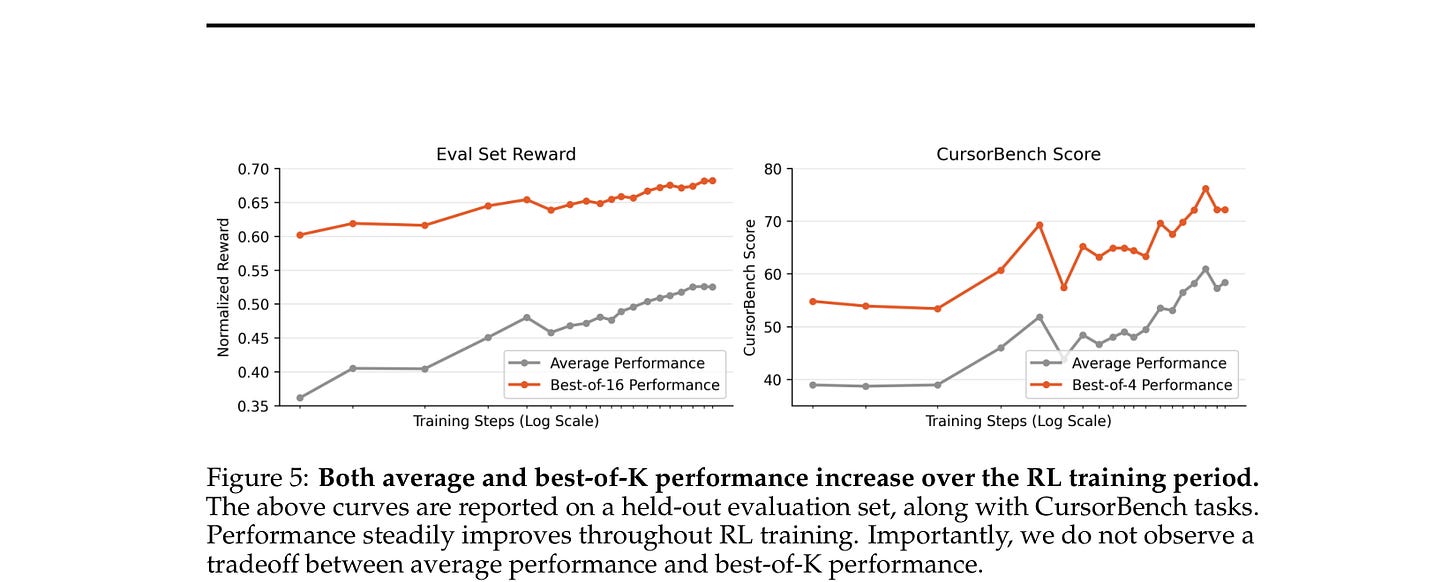

7. Composer 2

Cursor releases the technical report for Composer 2, a specialized model designed for agentic software engineering that demonstrates strong long-term planning and coding intelligence while maintaining efficiency for interactive use. The report details a process for training domain-specialized models that starts with continued pretraining and scales up with reinforcement learning.

Two-phase training pipeline: The model is trained first with continued pretraining to improve knowledge and latent coding ability, followed by large-scale reinforcement learning to improve end-to-end coding performance. The RL phase targets stronger reasoning, accurate multi-step execution, and coherence on long-horizon realistic coding problems.

Train-in-harness infrastructure: Cursor developed infrastructure to support training in the same harness used by the deployed model, with equivalent tools and structure. Training environments match real problems closely, bridging the gap between training-time and deployment-time behavior.

New internal benchmark: To measure the model on increasingly difficult tasks, the team introduces CursorBench, a benchmark derived from real software engineering problems in large codebases, including their own. Composer 2 achieves a major improvement in accuracy over previous Composer models on this benchmark.

Frontier-level performance: On public benchmarks, the model scores 61.7 on Terminal-Bench and 73.7 on SWE-bench Multilingual in Cursor’s harness, comparable to state-of-the-art systems. The report demonstrates that domain-specialized training with RL can produce models competitive with much larger general-purpose systems.

8. PivotRL

PivotRL is a turn-level reinforcement learning algorithm from NVIDIA designed to tractably post-train large language models for long-horizon agentic tasks. The method operates on existing SFT trajectories, combining the compute efficiency of supervised fine-tuning with the out-of-domain accuracy of end-to-end RL. PivotRL identifies “pivots,” informative intermediate turns where sampled actions exhibit high variance in outcomes, and focuses training signal on these critical decision points. The approach achieves 4.17% higher in-domain accuracy and 10.04% higher out-of-domain accuracy than standard SFT, while matching end-to-end RL accuracy with 4x fewer rollout turns. PivotRL has been adopted by NVIDIA’s Nemotron-3-Super-120B-A12B as the workhorse for production-scale agentic post-training.
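The pivot-selection idea can be sketched as a variance filter over sampled outcomes. This is an illustration only: the `find_pivots` name, the use of population variance, and the `min_variance` threshold are assumptions, not details taken from the PivotRL paper.

```python
from statistics import pvariance


def find_pivots(outcomes_per_turn, min_variance=0.05):
    """Return indices of turns whose sampled actions lead to varied outcomes.

    outcomes_per_turn[t] holds one outcome score per action sampled at turn t.
    Turns where every sample succeeds (or every sample fails) carry little
    signal, so they are skipped. The threshold value is a hypothetical choice.
    """
    pivots = []
    for turn, outcomes in enumerate(outcomes_per_turn):
        if len(outcomes) > 1 and pvariance(outcomes) >= min_variance:
            pivots.append(turn)
    return pivots
```

In this reading, training signal is spent only on turns where the choice of action actually changes the result.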

9. Workflow Optimization for LLM Agents

This comprehensive survey from IBM maps recent methods for designing and optimizing LLM agent workflows, treating them as agentic computation graphs (ACGs). The survey organizes prior work along three dimensions: when structure is determined, what part of the workflow is optimized, and which evaluation signals guide optimization. It distinguishes between reusable workflow templates, run-specific realized graphs, and execution traces, covering methods like AFlow (Monte Carlo Tree Search over operator graphs), Automated Design of Agentic Systems (code-space search via meta-agents), and evolutionary multi-agent system design. It is a useful reference for teams building production agent systems, where wiring decisions between model calls, retrieval, tool use, and verification matter as much as model capability.

10. BIGMAS

Even the best reasoning models hit an accuracy collapse beyond a certain problem complexity. BIGMAS (Brain-Inspired Graph Multi-Agent Systems) organizes specialized LLM agents as nodes in a dynamically constructed directed graph, coordinating exclusively through a centralized shared workspace inspired by global workspace theory from cognitive neuroscience. A GraphDesigner agent analyzes each problem instance and produces a task-specific directed agent graph together with a workspace contract. The framework constructs structurally distinct graphs whose complexity tracks task demands, from compact three-node pipelines for simple arithmetic to nine-node cyclic structures for multi-step planning. BIGMAS consistently improves reasoning performance for both standard LLMs and large reasoning models, outperforming existing multi-agent baselines.
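As a rough illustration of agents coordinating only through a shared workspace, the sketch below hard-codes a three-node pipeline for a simple arithmetic problem. Everything here is hypothetical: the node functions, the `run_agent_graph` helper, and the routing rule are stand-ins, and the graph is fixed by hand where BIGMAS would have a GraphDesigner agent produce it per problem.

```python
def run_agent_graph(nodes, edges, start, workspace, max_steps=20):
    """Walk a directed agent graph; nodes communicate only via `workspace`.

    Each node may return the name of the next node. If it returns nothing
    valid, the first outgoing edge is taken (an assumed routing rule).
    max_steps bounds execution so cyclic graphs still terminate.
    """
    current, trace = start, []
    for _ in range(max_steps):
        trace.append(current)
        choice = nodes[current](workspace)
        allowed = edges.get(current, [])
        if not allowed:
            break
        current = choice if choice in allowed else allowed[0]
    return trace


def parse(ws):
    # Expects a problem of the form "<int> + <int>".
    left, _, right = ws["problem"].split()
    ws["operands"] = (int(left), int(right))


def compute(ws):
    a, b = ws["operands"]
    ws["result"] = a + b


def verify(ws):
    ws["verified"] = ws["result"] == sum(ws["operands"])


NODES = {"parse": parse, "compute": compute, "verify": verify}
EDGES = {"parse": ["compute"], "compute": ["verify"], "verify": []}
```

Running `run_agent_graph(NODES, EDGES, "parse", {"problem": "2 + 3"})` visits the three nodes in order, and no node calls another directly; each one only reads and writes the workspace dictionary.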
