🥇Top AI Papers of the Week

The Top AI Papers of the Week (March 1 - March 8)

1. NeuroSkill

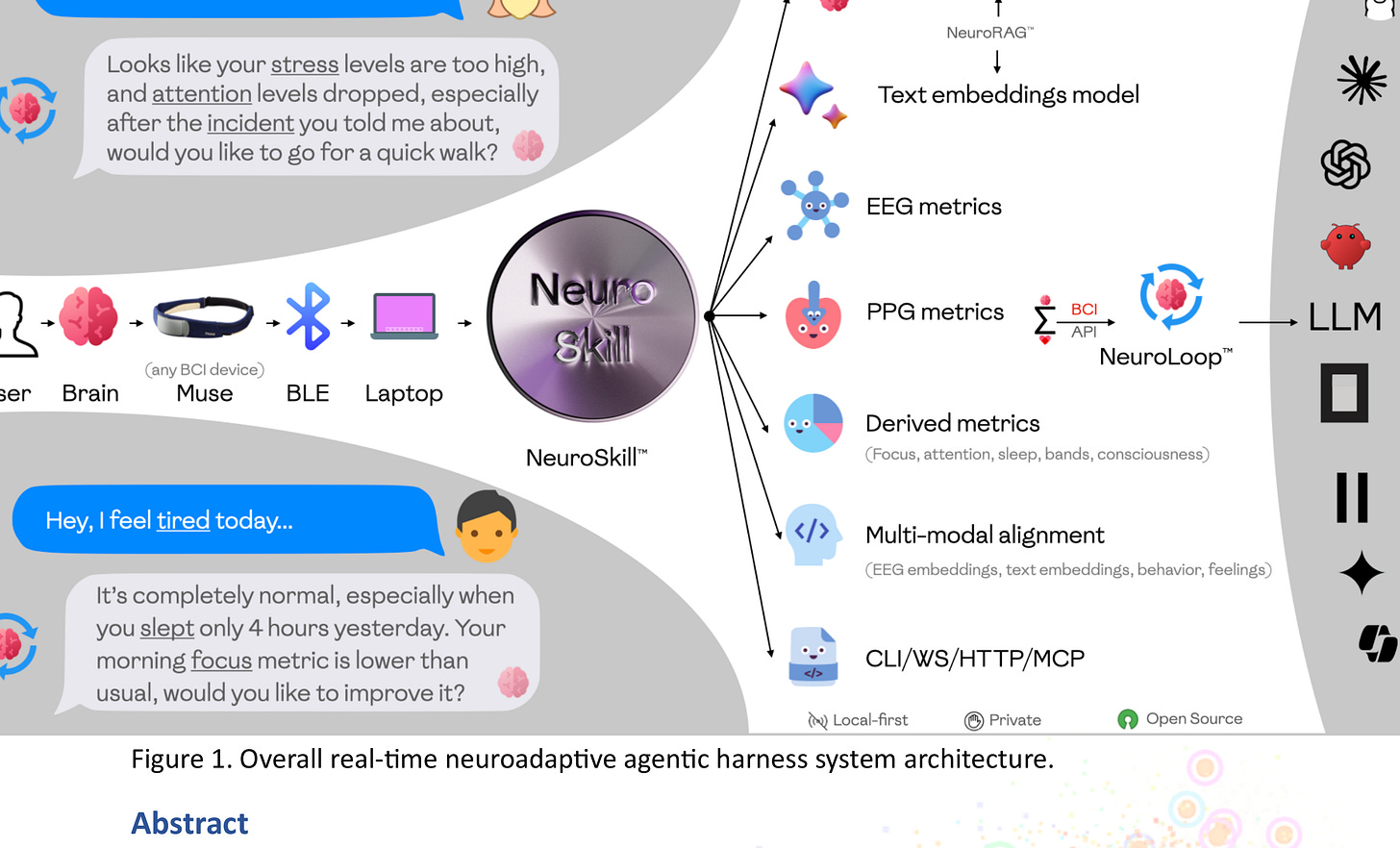

MIT researchers introduce NeuroSkill, a real-time proactive agentic system that models human cognitive and emotional state by integrating Brain-Computer Interface (BCI) signals with foundation EXG models and text embeddings. Unlike reactive agents that wait for explicit commands, NeuroSkill operates proactively, interpreting biophysical and neural signals to anticipate user needs.

Custom agent harness (NeuroLoop): The system runs an agentic flow called NeuroLoop that engages with the user on multiple cognitive and affective levels, including empathy. It processes BCI signals through a foundation EXG model, converts them to state-of-mind descriptions, and uses those descriptions to drive actionable tool calls and protocol execution.

Fully offline edge deployment: The entire system runs locally on edge devices with no network dependency. This is a significant design choice for both privacy and latency, enabling real-time responsiveness to shifting cognitive states without cloud round-trips.

Proactive vs reactive interaction: NeuroSkill handles both explicit and implicit requests from the user. By continuously reading brain signals, it can detect confusion, cognitive overload, or emotional shifts and adjust its behavior before the user explicitly asks for help.

Open-source with ethical licensing: The system is released under GPLv3, with an ethically aligned AI100 licensing framework covering the skill Markdown, making it reproducible and auditable while enforcing responsible-use guardrails.
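The proactive behavior described above can be sketched as a trigger that turns per-window state estimates into a state-of-mind description only once a state persists. This is a minimal illustration under stated assumptions, not NeuroLoop's implementation: the `StateEstimate` type, the state labels, the threshold and patience values, and the description strings are all hypothetical, and the upstream EXG model is assumed to already emit a label with a confidence score.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical state labels mapped to descriptions an agent could act on.
DESCRIPTIONS = {
    "cognitive_overload": "User appears overloaded; offer to summarize or pause.",
    "confusion": "User appears confused; offer a simpler explanation.",
}


@dataclass
class StateEstimate:
    label: str         # output of an assumed upstream EXG classifier
    confidence: float  # in [0, 1]


class ProactiveTrigger:
    """Fire once when the same state stays above `threshold` for `patience`
    consecutive windows, so one noisy reading does not interrupt the user."""

    def __init__(self, threshold: float = 0.8, patience: int = 3):
        self.threshold = threshold
        self.patience = patience
        self._label: Optional[str] = None
        self._streak = 0

    def update(self, est: StateEstimate) -> Optional[str]:
        if est.confidence < self.threshold:
            self._label, self._streak = None, 0        # weak evidence resets the streak
        elif est.label == self._label:
            self._streak += 1                          # the same confident state persists
        else:
            self._label, self._streak = est.label, 1   # a new confident state begins
        if self._streak == self.patience and self._label in DESCRIPTIONS:
            return DESCRIPTIONS[self._label]           # act without an explicit request
        return None


trigger = ProactiveTrigger(threshold=0.8, patience=3)
for _ in range(3):
    message = trigger.update(StateEstimate("cognitive_overload", 0.9))
print(message)  # fires on the third consecutive confident window
```

Requiring persistence trades a little latency for fewer false interruptions; whether NeuroSkill debounces its signals in this way is not stated in the summary above.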

2. Bayesian Teaching for LLMs

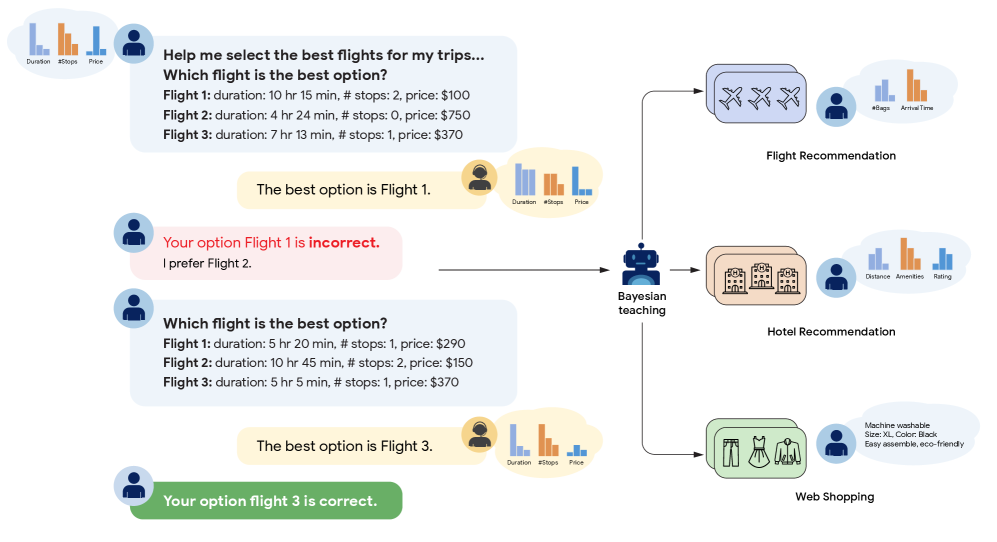

Google researchers introduce a method to teach LLMs to reason like Bayesians by fine-tuning on interactions with a Bayesian Assistant that represents optimal probabilistic inference. LLMs normally fall far short of normative Bayesian reasoning, but this training approach dramatically improves their ability to update predictions based on new evidence.

Bayesian Assistant as teacher: The method constructs synthetic training data from interactions between users and an idealized Bayesian Assistant. By exposing the LLM to examples of optimal belief updating, the model learns to approximate Bayesian inference without any architectural changes.

Generalization to new tasks: The trained models do not just memorize the training distributions. They generalize probabilistic reasoning to entirely new task types, suggesting that Bayesian inference can be instilled as a transferable capability through carefully designed fine-tuning data.

Closing the gap with normative models: Before training, LLMs show systematic deviations from Bayesian predictions, including base rate neglect and conservatism. After Bayesian teaching, these biases are substantially reduced, bringing model predictions much closer to the normative standard.

Data quality over model scale: The results reinforce a recurring theme in recent research: carefully curated training data can unlock capabilities that scale alone cannot. A smaller model fine-tuned on Bayesian interactions outperforms larger models without such training.
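For intuition about the normative target, here is the discrete Bayes update an idealized assistant performs, applied to a classic base-rate example. The function name and the diagnostic-test numbers are textbook-style illustrations, not the paper's task or data.

```python
def bayes_update(prior, likelihood):
    """Return P(h | evidence) for each hypothesis h.

    prior:      {h: P(h)}
    likelihood: {h: P(evidence | h)}
    """
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(unnormalized.values())  # P(evidence), the normalizing constant
    return {h: p / evidence for h, p in unnormalized.items()}


# Textbook example: 1% base rate, 90% true-positive rate, 5% false-positive rate.
prior = {"condition": 0.01, "no_condition": 0.99}
positive_test = {"condition": 0.90, "no_condition": 0.05}

posterior = bayes_update(prior, positive_test)
print(round(posterior["condition"], 3))  # 0.154: the low base rate dominates

# Base-rate neglect: implicitly treating both hypotheses as equally likely.
neglect = bayes_update({"condition": 0.5, "no_condition": 0.5}, positive_test)
print(round(neglect["condition"], 3))  # 0.947

# Sequential updating: the posterior after one test is the prior for the next.
second = bayes_update(posterior, positive_test)
print(round(second["condition"], 3))  # 0.766
```

A model exhibiting base rate neglect answers near 0.947 after one positive test; conservatism shows up as posteriors that stay closer to the prior than these normative values.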

3. Why LLMs Form Geometric Representations

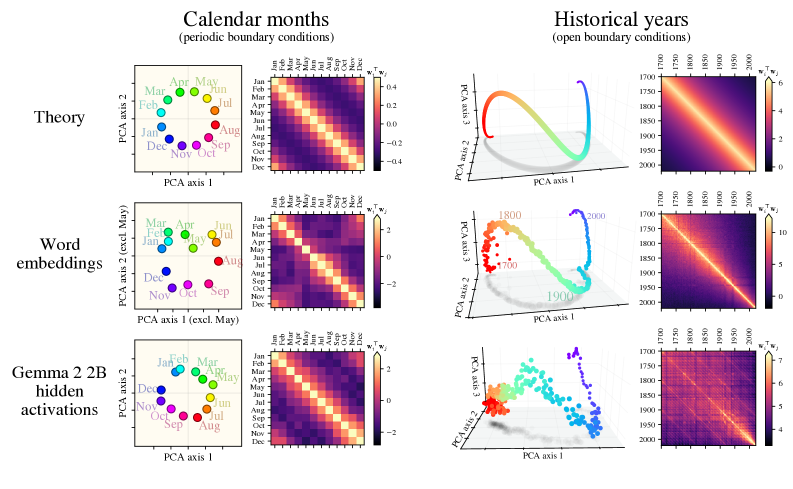

LLMs spontaneously form striking geometric structures in their internal representations: calendar months organize into circles, historical years form spirals, and spatial coordinates align to recoverable manifolds. This paper proves these patterns are not the product of deep learning dynamics but emerge directly from symmetries in natural language statistics.

Translation symmetry as the root cause: The frequency with which any two months co-occur in text depends only on the time interval between them, not the months themselves. The authors prove this translation symmetry in co-occurrence statistics is sufficient to force circular geometry in learned representations.

Analytical derivation of manifold geometry: Rather than just observing geometric structure post-hoc, the paper derives the exact manifold geometry from data statistics. For cyclic concepts like months or days of the week, the proof shows circular representations emerge as the optimal encoding under symmetric co-occurrence distributions.

Spirals and rippled manifolds for continuums: Representations of continuous concepts like historical years or number lines organize into compact 1D manifolds with characteristic extrinsic curvature. These “rippled” structures are analytically predicted by the framework when the underlying latent variable is non-cyclic.

Universal origin: The robustness of these geometric representations across different model architectures suggests a universal mechanism. Representational manifolds emerge whenever co-occurrence statistics are controlled by an underlying latent variable, regardless of model size or training details.
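The translation-symmetry argument can be checked numerically on a toy example. If co-occurrence between months depends only on their circular distance, the co-occurrence matrix is circulant, and circulant matrices are diagonalized by Fourier modes. The cosine and sine of one full cycle therefore share an eigenvalue, so a spectral embedding that keeps that pair places the 12 months on a circle. The Gaussian falloff and `sigma` below are arbitrary assumptions; this illustrates the standard circulant-matrix fact behind the claim, not the paper's own derivation.

```python
import math

N = 12  # months of the year


def circular_distance(i, j, n=N):
    d = abs(i - j) % n
    return min(d, n - d)


def cooccurrence_matrix(sigma=1.5, n=N):
    """Toy translation-symmetric statistics: entry (i, j) depends only on the
    circular distance between months i and j (assumed Gaussian falloff)."""
    return [[math.exp(-circular_distance(i, j, n) ** 2 / (2 * sigma ** 2))
             for j in range(n)] for i in range(n)]


def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def fourier_pair(k, n=N):
    """Cosine and sine vectors of frequency k over the n positions."""
    cos_k = [math.cos(2 * math.pi * k * i / n) for i in range(n)]
    sin_k = [math.sin(2 * math.pi * k * i / n) for i in range(n)]
    return cos_k, sin_k


def circulant_eigenvalue(m, k, n=N):
    """For a symmetric circulant matrix, both Fourier vectors of frequency k
    share the eigenvalue sum_j m[0][j] * cos(2*pi*j*k/n)."""
    return sum(m[0][j] * math.cos(2 * math.pi * j * k / n) for j in range(n))


M = cooccurrence_matrix()
cos1, sin1 = fourier_pair(1)
lam1 = circulant_eigenvalue(M, 1)

# The frequency-1 pair are genuine eigenvectors sharing one eigenvalue ...
for vec in (cos1, sin1):
    assert all(abs(x - lam1 * y) < 1e-9 for x, y in zip(matvec(M, vec), vec))

# ... and lam1 is the largest eigenvalue after the constant mode (k = 0).
eigs = [circulant_eigenvalue(M, k) for k in range(N // 2 + 1)]
assert eigs[0] > lam1 > max(eigs[2:])

# Month i embedded at (cos1[i], sin1[i]): all on the unit circle, 30 degrees apart.
embedding = list(zip(cos1, sin1))
```

Any translation-symmetric matrix has these same Fourier eigenvectors; the kernel only changes the eigenvalues, and hence which frequencies a low-dimensional embedding keeps.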

4. Theory of Mind in Multi-Agent LLMs

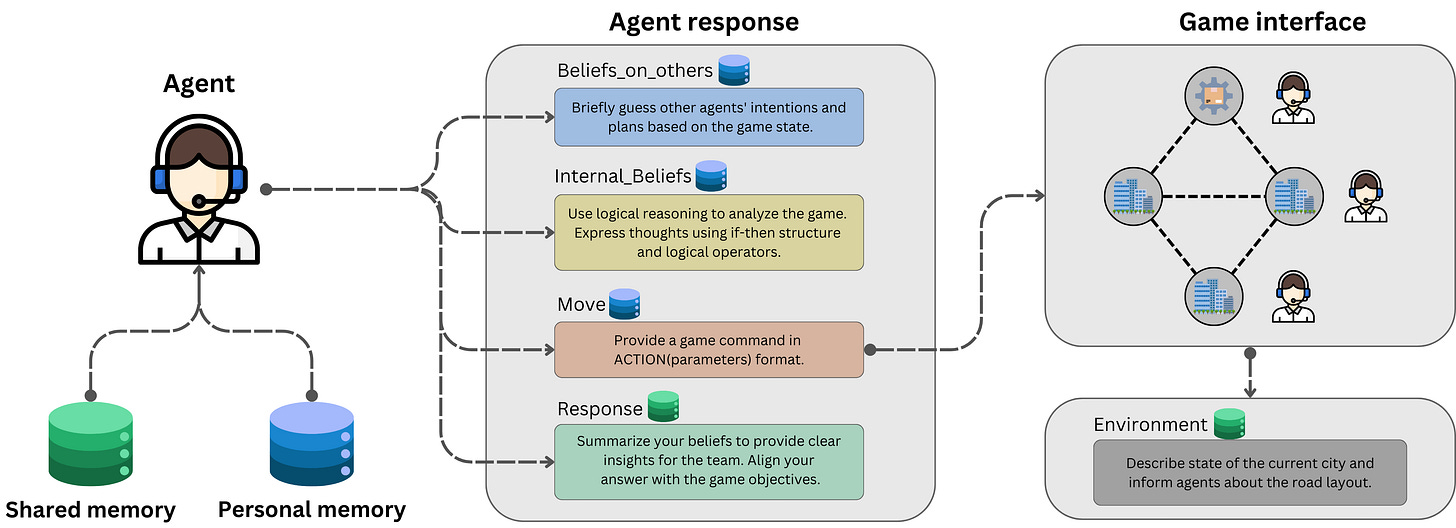

This work introduces a multi-agent architecture combining Theory of Mind (ToM), Belief-Desire-Intention (BDI) models, and symbolic solvers for logical verification, evaluating it on resource allocation problems across multiple LLMs. The central finding is counterintuitive: simply adding cognitive mechanisms does not automatically improve coordination.

Integrated cognitive architecture: The system combines ToM for modeling other agents’ mental states, BDI frameworks for structuring internal beliefs, and symbolic solvers for formal logic verification. This layered approach attempts to replicate how humans reason about collaborative partners.

Model capability matters more than mechanism: The effectiveness of ToM and internal beliefs varies significantly depending on the underlying LLM. Stronger models benefit from cognitive mechanisms, while weaker models can actually be confused by the additional reasoning overhead.

Symbolic verification as a stabilizer: Integrating symbolic solvers for logical verification helps ground agent decisions in formal constraints. The interplay between symbolic verification and cognitive mechanisms remains largely underexplored across different LLM architectures.

Practical implications for multi-agent design: For builders designing systems where agents must model each other’s beliefs, the key takeaway is to match cognitive complexity to model capability. Adding ToM to an underpowered model can hurt more than help.
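To make the verification step concrete, the sketch below checks an agent-proposed allocation against hard constraints and, if it fails, searches for the nearest allocation that satisfies them. The constraint set (shared capacity plus per-agent minimums), the L1 distance, and the brute-force search are assumptions standing in for a real symbolic solver such as an SMT solver; the paper's actual formulation may differ.

```python
from itertools import product


def is_valid(allocation, capacity, min_need):
    """Hard constraints: total stays within capacity and every agent gets its minimum."""
    if sum(allocation.values()) > capacity:
        return False
    return all(allocation.get(agent, 0) >= need for agent, need in min_need.items())


def nearest_valid(proposal, capacity, min_need):
    """Brute-force stand-in for a solver: the valid allocation with the smallest
    L1 distance to the proposal (ties go to the first one enumerated)."""
    agents = sorted(min_need)
    best, best_cost = None, None
    for amounts in product(range(capacity + 1), repeat=len(agents)):
        candidate = dict(zip(agents, amounts))
        if not is_valid(candidate, capacity, min_need):
            continue
        cost = sum(abs(candidate[a] - proposal.get(a, 0)) for a in agents)
        if best_cost is None or cost < best_cost:
            best, best_cost = candidate, cost
    return best


# An LLM agent over-allocates and starves "carol"; the verifier catches and repairs it.
proposal = {"alice": 6, "bob": 5, "carol": 0}
needs = {"alice": 3, "bob": 2, "carol": 1}
print(is_valid(proposal, 10, needs))       # False
print(nearest_valid(proposal, 10, needs))  # {'alice': 4, 'bob': 5, 'carol': 1}
```

Brute force grows exponentially with the number of agents, which is why a practical system would hand such constraints to a dedicated solver.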

Message from the Editor

Excited to announce our new on-demand course “Vibe Coding AI Apps with Claude Code”. Learn how to leverage Claude Code features to vibe-code production-grade AI-powered apps.

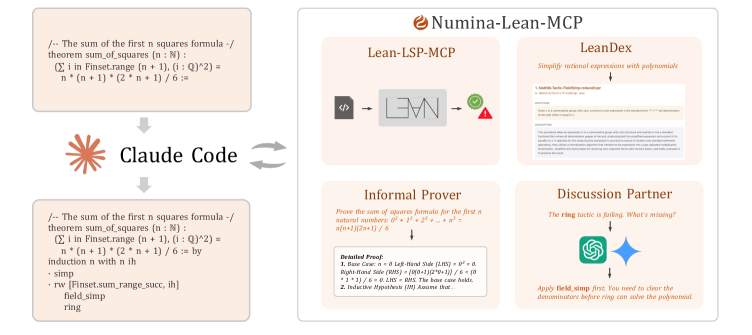

5. Numina-Lean-Agent

Numina-Lean-Agent proposes a paradigm shift in automated theorem proving: instead of building complex, multi-component systems with heavy computational overhead, it directly uses a general coding agent as a formal math reasoner. Combining Claude Code with Numina-Lean-MCP, the system autonomously interacts with the Lean proof assistant while accessing theorem libraries and auxiliary reasoning tools.

General agent over specialized provers: Rather than training task-specific models, the system leverages a general-purpose coding agent. Performance improves simply by upgrading the base model, making the approach accessible and reproducible without expensive retraining pipelines.

MCP-powered tool integration: The system uses Model Context Protocol for flexible extension, including Lean-LSP-MCP for proof assistant interaction, LeanDex for semantic theorem retrieval, and an informal prover for generating detailed proof strategies.

State-of-the-art results: Using Claude Opus 4.5 as the base model, Numina-Lean-Agent solves all 12 problems on Putnam 2025, matching the best closed-source systems. It also successfully formalized the Brascamp-Lieb theorem through direct collaboration with mathematicians.

Open-source release: The full system and all solutions are released on GitHub under Creative Commons BY 4.0, enabling direct reproduction and extension by the research community.
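MCP tools are invoked through JSON-RPC 2.0 `tools/call` requests, and results come back as a list of content items. The sketch below builds such a request and extracts the text from a response. The tool name `lean_goal`, its argument names, and the response text are hypothetical placeholders; the tools Lean-LSP-MCP actually exposes should be taken from its own documentation.

```python
import json


def make_tool_call(request_id, tool_name, arguments):
    """Serialize an MCP `tools/call` request (a JSON-RPC 2.0 message)."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def read_tool_result(raw_response):
    """Return (is_error, text) from a `tools/call` response, joining text content items."""
    message = json.loads(raw_response)
    if "error" in message:  # protocol-level JSON-RPC error
        return True, message["error"].get("message", "")
    result = message["result"]
    text = "\n".join(item["text"] for item in result.get("content", [])
                     if item.get("type") == "text")
    return result.get("isError", False), text


# Hypothetical request: ask a Lean tool for the proof goal at a given position.
request = make_tool_call(1, "lean_goal", {"file_path": "Solution.lean", "line": 12})

# A hand-written response in the shape MCP defines for tool results.
response = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {"content": [{"type": "text", "text": "⊢ n + 0 = n"}], "isError": False},
})
print(read_tool_result(response))  # (False, '⊢ n + 0 = n')
```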

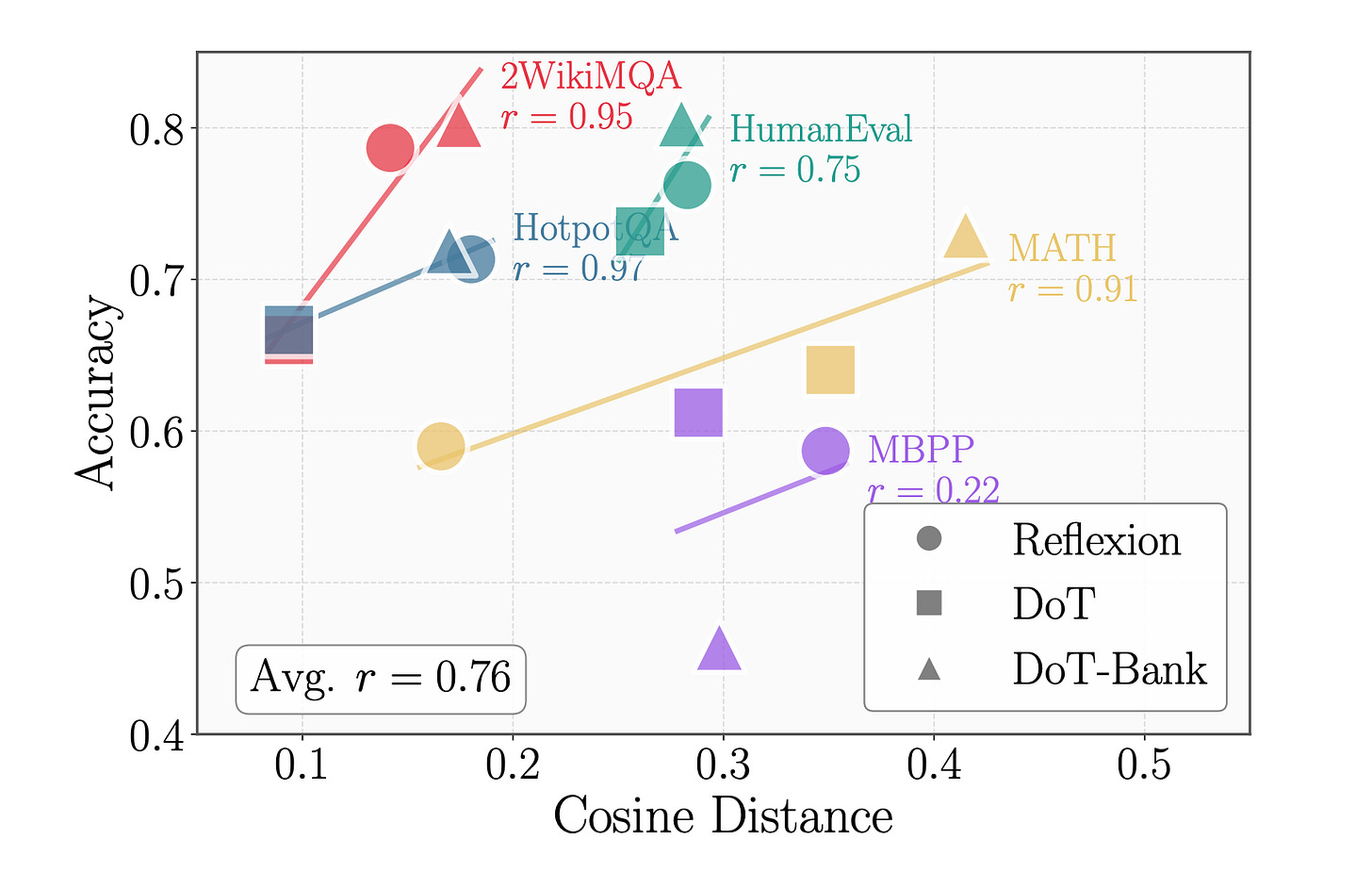

6. ParamMem

Self-reflection enables language agents to iteratively refine solutions, but models tend to generate repetitive reflections that add noise instead of useful signal. ParamMem introduces a parametric memory module that encodes cross-sample reflection patterns into model parameters, enabling diverse reflection generation through temperature-controlled sampling.

Diversity correlates with success: Empirical analysis reveals a strong positive correlation between reflective diversity and task success. The core problem is that standard self-reflection produces near-identical outputs across iterations, limiting the agent’s ability to explore alternative solution paths.

Three-tier memory architecture: ParamAgent integrates parametric memory (cross-sample patterns encoded in parameters), episodic memory (individual task instances), and cross-sample memory (broader learning patterns). This combination captures both local task context and global reflection strategies.

Weak-to-strong transfer: ParamMem is sample-efficient and supports transfer across model scales. Reflection patterns learned by smaller models can be applied to larger ones, enabling self-improvement without reliance on stronger external models.

Consistent benchmark gains: Evaluated on code generation, mathematical reasoning, and multi-hop question answering, ParamMem consistently outperforms state-of-the-art baselines across all three domains.
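The temperature control mentioned above behaves like ordinary sampling temperature: logits are divided by T before the softmax, so a larger T flattens the distribution and raises its entropy. The sketch uses made-up logits over three hypothetical reflection strategies; it illustrates the sampling knob only, not ParamMem's parametric memory module.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Softmax of logits / T; higher T gives a flatter, more diverse distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    """Shannon entropy in nats; a simple proxy for how varied samples will be."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def sample(options, logits, temperature, k, seed=0):
    rng = random.Random(seed)
    return rng.choices(options, weights=softmax_with_temperature(logits, temperature), k=k)


# Hypothetical reflection strategies with made-up logits.
strategies = ["recheck edge cases", "rederive the formula", "question an assumption"]
logits = [2.0, 1.0, 0.5]
for t in (0.3, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    distinct = len(set(sample(strategies, logits, t, k=10)))
    print(f"T={t}: entropy={entropy(probs):.3f}, distinct strategies in 10 draws={distinct}")
```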

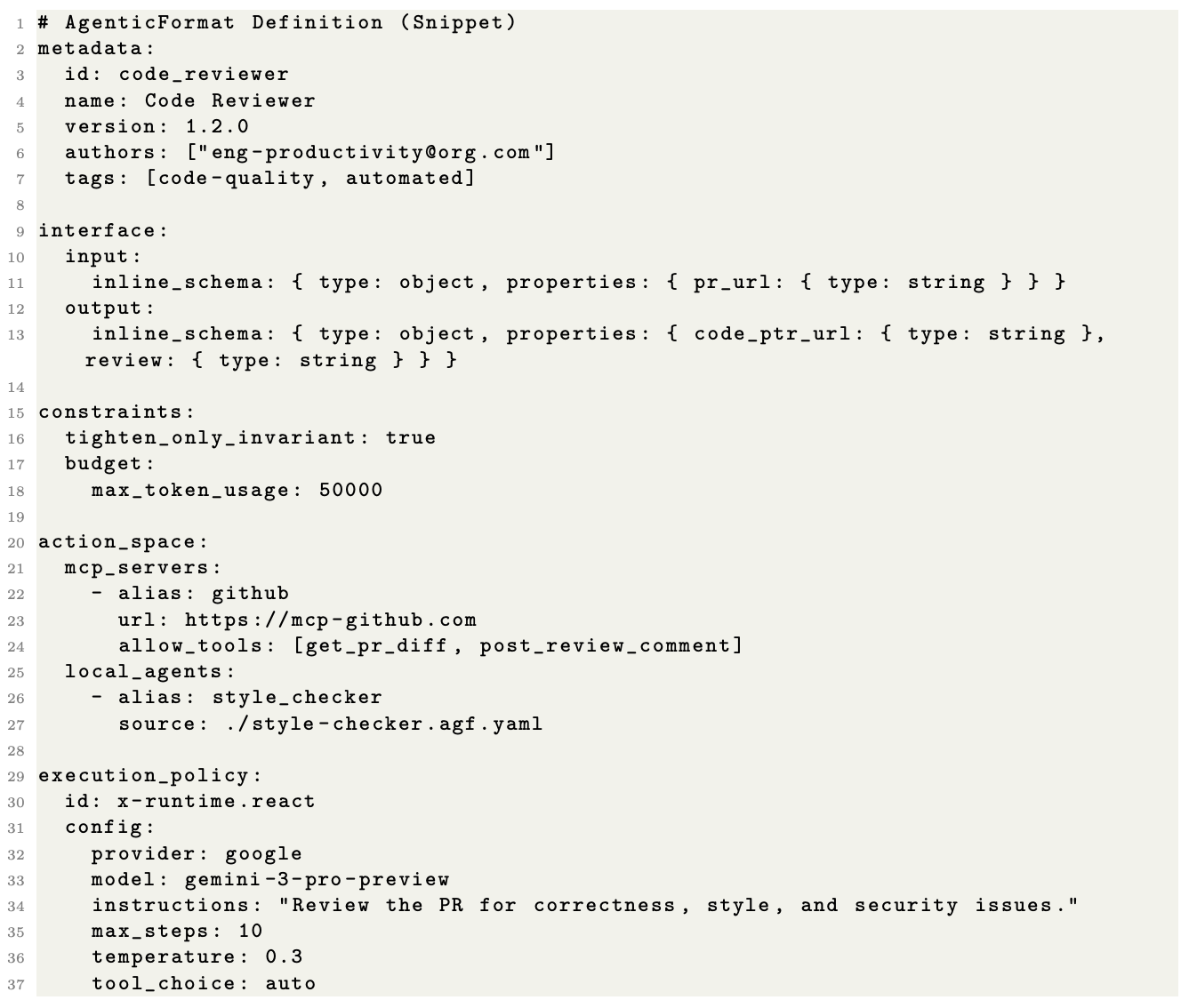

7. Auton Agentic AI Framework

Snap Research introduces the Auton framework, a declarative architecture for specification, governance, and runtime execution of autonomous agent systems. It addresses a fundamental mismatch: LLMs produce stochastic, unstructured outputs, while backend infrastructure requires deterministic, schema-conformant inputs.

Cognitive Blueprint separation: The framework enforces a strict separation between the Cognitive Blueprint, a declarative, language-agnostic specification of agent identity and capabilities, and the Runtime Engine. This enables cross-language portability, formal auditability, and modular tool integration via Model Context Protocol.

Formal agent execution model: Agent execution is formalized as an augmented Partially Observable Markov Decision Process with a latent reasoning space. This gives practitioners a rigorous foundation for reasoning about agent behavior, state transitions, and decision boundaries.

Biologically inspired memory: The architecture introduces hierarchical memory consolidation inspired by biological episodic memory systems, providing agents with structured long-term retention that mirrors how humans consolidate experiences into lasting knowledge.

Runtime optimizations: Parallel graph execution, speculative inference, and dynamic context pruning reduce end-to-end latency for multi-step agent workflows. Safety is enforced through a constraint manifold formalism using policy projection rather than post-hoc filtering.
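The mismatch between stochastic LLM output and schema-conformant backend input, and the preference for projection over post-hoc filtering, can both be illustrated on a single tool call. In this sketch, malformed output is rejected at a typed boundary, while a well-formed but out-of-bounds value is projected onto the feasible set (for an interval, the nearest feasible point is obtained by clamping) instead of being discarded. The `SetThermostat` schema and its bounds are hypothetical, and an interval is only the simplest case of a constraint set to project onto.

```python
import json
from dataclasses import dataclass

ALLOWED_ROOMS = {"kitchen", "office"}
MIN_C, MAX_C = 16.0, 28.0  # hypothetical safe operating range


@dataclass(frozen=True)
class SetThermostat:
    room: str
    celsius: float


def parse_tool_call(raw: str) -> SetThermostat:
    """Schema boundary: turn free-form model output into a typed call or raise."""
    data = json.loads(raw)
    if not isinstance(data, dict) or set(data) != {"room", "celsius"}:
        raise ValueError("expected exactly the keys 'room' and 'celsius'")
    if not isinstance(data["room"], str) or data["room"] not in ALLOWED_ROOMS:
        raise ValueError(f"unknown room: {data['room']!r}")
    value = data["celsius"]
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        raise ValueError("celsius must be a number")
    return SetThermostat(data["room"], float(value))


def project(call: SetThermostat) -> SetThermostat:
    """Policy projection onto the interval [MIN_C, MAX_C]: the closest safe action."""
    return SetThermostat(call.room, min(max(call.celsius, MIN_C), MAX_C))


print(project(parse_tool_call('{"room": "office", "celsius": 35}')))
# SetThermostat(room='office', celsius=28.0)
```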

8. Reaching Agreement Among LLM Agents

This paper introduces Aegean, a consensus protocol that frames multi-agent refinement as a distributed consensus problem. Rather than static heuristic workflows with fixed loop limits, Aegean enables early termination when sufficient agents converge, achieving 1.2-20x latency reduction across four mathematical reasoning benchmarks while maintaining answer quality within 2.5%. The consensus-aware serving engine performs incremental quorum detection across concurrent agent executions, cutting wasted compute on stragglers.
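Early termination by quorum can be sketched as a counter that consumes agent answers in arrival order and stops as soon as one answer holds a strict majority, so later stragglers are never awaited. This is a simplified illustration under assumed parameters (a fixed agent count, exact-match answers, a majority threshold); Aegean's actual protocol and serving engine may differ substantially.

```python
from collections import Counter
from typing import Iterable, Optional, Tuple


def first_quorum(answers: Iterable[str], n_agents: int,
                 quorum: float = 0.5) -> Tuple[Optional[str], int]:
    """Consume answers as they arrive; stop once one answer is held by more than
    quorum * n_agents agents. Returns (answer or None, responses consumed)."""
    needed = int(quorum * n_agents) + 1  # strict majority when quorum = 0.5
    counts = Counter()
    consumed = 0
    for answer in answers:
        consumed += 1
        counts[answer] += 1
        if counts[answer] >= needed:
            return answer, consumed  # the remaining agents can be cancelled
    return None, consumed


arrivals = ["42", "41", "42", "42", "17"]  # made-up answers in completion order
print(first_quorum(arrivals, n_agents=5))  # ('42', 4)
```

With five agents and a majority threshold, the fifth response is never needed in this run; in a real system `answers` would be a stream, so the savings grow with slower stragglers.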

9. Diagnosing Agent Memory

This paper introduces a diagnostic framework that separates retrieval failures from utilization failures in LLM agent memory systems. Through a 3x3 factorial study crossing three write strategies with three retrieval methods, the authors find that retrieval is the dominant bottleneck, accounting for 11-46% of errors, while utilization failures remain stable at 4-8% regardless of configuration. Hybrid reranking cuts retrieval failures roughly in half, delivering larger gains than any write strategy optimization.
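The retrieval-versus-utilization split can be made operational with a simple rule per failed episode. The rule below (a failure counts as a retrieval failure when none of the gold memory entries were retrieved) and the `Episode` fields are assumptions for illustration; the paper's exact attribution criteria may be stricter. Running the same breakdown for each of the nine write-strategy and retrieval-method combinations yields the kind of comparison described above.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Episode:
    gold_ids: set                                      # memory entries holding the needed evidence
    retrieved_ids: list = field(default_factory=list)  # what the retriever returned
    correct: bool = False                              # whether the final answer was right


def diagnose(ep: Episode) -> str:
    """Attribute a failure to retrieval if no gold entry was retrieved,
    otherwise to utilization (the evidence was present but not used correctly)."""
    if ep.correct:
        return "correct"
    if not ep.gold_ids & set(ep.retrieved_ids):
        return "retrieval_failure"
    return "utilization_failure"


def failure_breakdown(episodes):
    counts = Counter(diagnose(ep) for ep in episodes)
    return {label: counts[label] / len(episodes)
            for label in ("correct", "retrieval_failure", "utilization_failure")}


episodes = [
    Episode({"m1"}, ["m1", "m7"], correct=True),
    Episode({"m2"}, ["m5", "m6"]),  # evidence never retrieved
    Episode({"m3"}, ["m3"]),        # evidence retrieved but the answer is still wrong
    Episode({"m4"}, []),            # nothing retrieved
]
print(failure_breakdown(episodes))
# {'correct': 0.25, 'retrieval_failure': 0.5, 'utilization_failure': 0.25}
```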

10. Phi-4-reasoning-vision-15B

Microsoft presents Phi-4-reasoning-vision-15B, a compact open-weight multimodal reasoning model that combines visual understanding with structured reasoning capabilities. Trained on just 200 billion tokens of multimodal data, the model excels at math and science reasoning and UI comprehension while requiring significantly less compute than comparable open-weight VLMs. The key insight is that systematic data filtering, error correction, and synthetic augmentation remain the primary levers for model performance, pushing the Pareto frontier of the accuracy-compute tradeoff.
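"Pushing the Pareto frontier" has a precise meaning: a model is on the frontier if no other model is at least as accurate for no more compute while being strictly better on one of the two. The helper below computes that frontier for (compute, accuracy) pairs; the model names and numbers are made up for illustration and are not results from the Phi-4 report.

```python
def pareto_frontier(models):
    """models: list of (name, compute, accuracy). Keep each model that no other
    model dominates, i.e. none uses <= compute with >= accuracy and is strictly
    better on at least one axis. Returned in order of increasing compute."""
    frontier = []
    for name, c, a in models:
        dominated = any(
            c2 <= c and a2 >= a and (c2 < c or a2 > a)
            for _, c2, a2 in models
        )
        if not dominated:
            frontier.append((name, c, a))
    return sorted(frontier, key=lambda m: m[1])


# Toy numbers for illustration only.
toy = [("A", 1.0, 0.50), ("B", 2.0, 0.70), ("C", 3.0, 0.60), ("D", 4.0, 0.80)]
print([name for name, _, _ in pareto_frontier(toy)])  # ['A', 'B', 'D']
```

Model C drops out because B is both cheaper and more accurate; a new model pushes the frontier when it dominates at least one current frontier point.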