🥇Top AI Papers of the Week

The Top AI Papers of the Week (April 6 - April 12)

1. Neural Computers

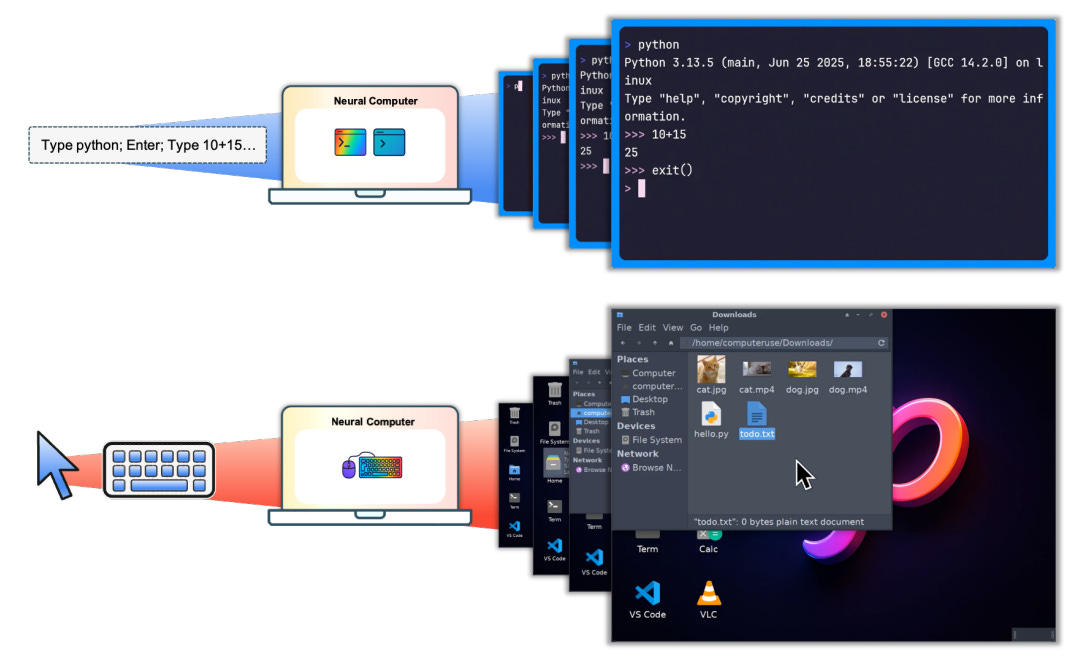

Researchers from Meta AI and KAUST propose Neural Computers (NCs), an emerging machine form that unifies computation, memory, and I/O in a single learned runtime state. Unlike conventional computers that execute explicit programs, agents that act over external environments, or world models that learn dynamics, NCs aim to make the model itself the running computer, which the authors frame as a new computing paradigm.

From hardware stack to neural latent stack: Classical computers separate compute, memory, and I/O into modular hardware layers. Neural Computers collapse all three into a single latent runtime state carried by a neural network. The model’s hidden state serves simultaneously as working memory, computational substrate, and interface layer, removing the boundary between program and execution environment.
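
To make "one latent state as memory, compute, and I/O" concrete, here is a toy sketch. It is not the authors' model (their NCs are learned video models); the class and method names are hypothetical, and the weights are random stand-ins for what an NC would learn from interface traces. The point is structural: there is no separate program, heap, or display buffer, only one state vector that each input updates and each output is read from.

```python
import math
import random


class LatentRuntime:
    """Toy 'neural computer': a single latent vector is the entire machine state."""

    def __init__(self, state_dim: int = 8, input_dim: int = 4, output_dim: int = 4, seed: int = 0):
        rng = random.Random(seed)
        # Random stand-in weights; a real NC would learn these from raw interface traces.
        self.w_state = [[rng.gauss(0, 0.3) for _ in range(state_dim)] for _ in range(state_dim)]
        self.w_input = [[rng.gauss(0, 0.3) for _ in range(input_dim)] for _ in range(state_dim)]
        self.w_out = [[rng.gauss(0, 0.3) for _ in range(state_dim)] for _ in range(output_dim)]
        self.state = [0.0] * state_dim  # working memory, compute substrate, and I/O buffer at once

    def step(self, x: list[float]) -> list[float]:
        """Fold one input into the latent state (memory + compute), then read an output (I/O)."""
        self.state = [
            math.tanh(
                sum(w * s for w, s in zip(row_s, self.state))
                + sum(w * xi for w, xi in zip(row_x, x))
            )
            for row_s, row_x in zip(self.w_state, self.w_input)
        ]
        return self.readout()

    def readout(self) -> list[float]:
        # The "screen" is just a projection of the same latent state.
        return [sum(w * s for w, s in zip(row, self.state)) for row in self.w_out]


runtime = LatentRuntime()
for token in ([1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0]):
    frame = runtime.step([float(v) for v in token])
print(len(frame), len(runtime.state))  # 4 8
```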

Video models as prototype substrate: The team instantiates NCs as video models that generate screen frames from instructions, pixel inputs, and user actions. Two prototypes cover command-line interfaces (NCCLIGen, which renders and executes terminal workflows) and graphical desktops (NCGUIWorld, which learns pointer dynamics and menu interactions), both trained without access to internal program state.

Early runtime primitives emerge: The prototypes demonstrate that learned runtimes can acquire I/O alignment and short-horizon control directly from raw interface traces. CLI models execute short command chains with structurally accurate output rendering, while GUI models learn coherent click feedback and window transitions in controlled settings.

Roadmap toward Completely Neural Computers: The long-term target is the Completely Neural Computer (CNC), a system that is Turing complete, universally programmable, and behavior-consistent unless explicitly reprogrammed. Key open challenges include routine reuse across sessions, controlled capability updates without catastrophic forgetting, and stable symbolic processing for long-horizon reasoning.

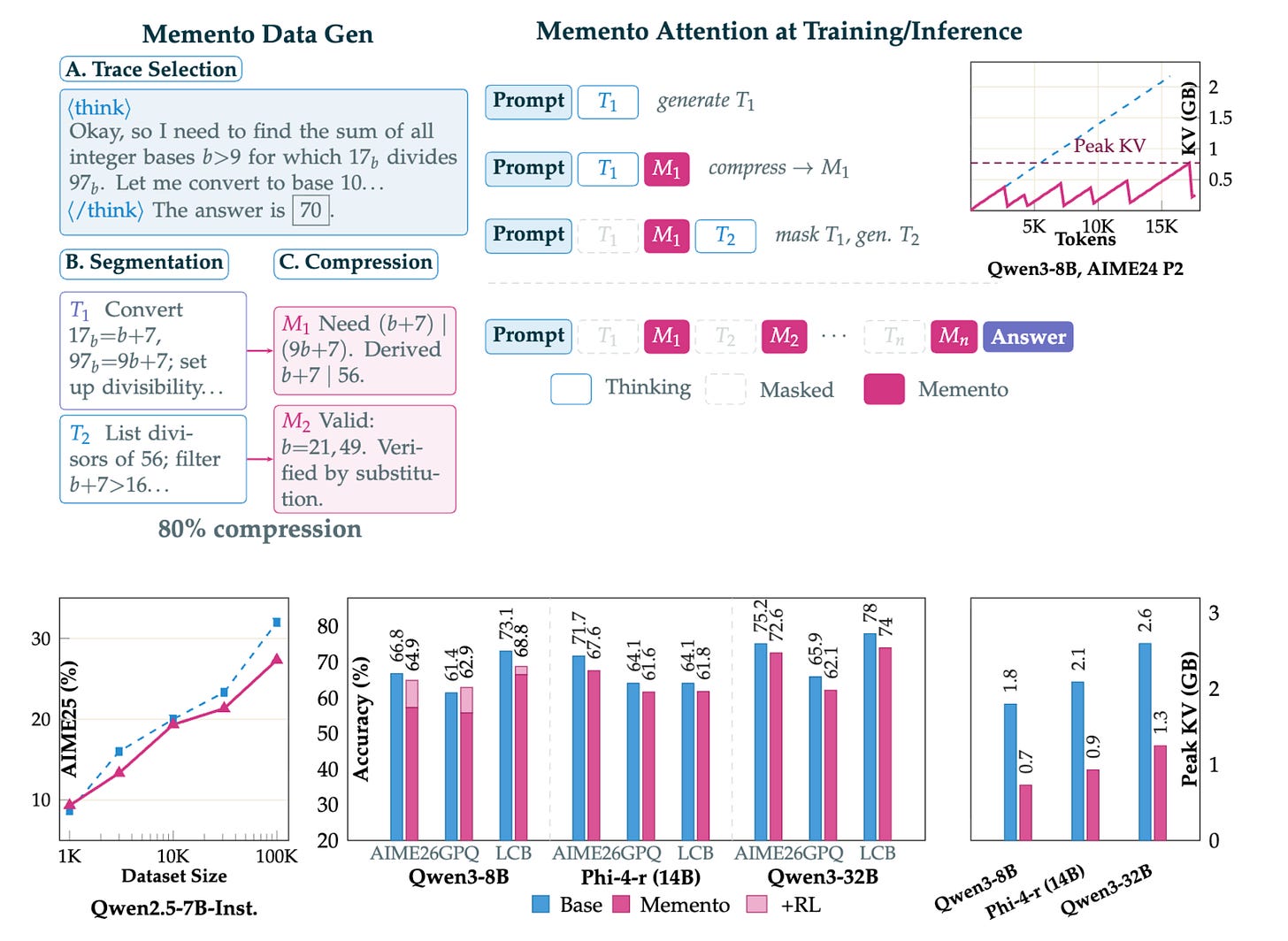

2. Memento: Teaching LLMs to Manage Their Own Context

New research from Microsoft teaches reasoning models to compress their own chain-of-thought mid-generation. Memento trains models to segment reasoning into blocks, summarize each block into a compact “memento,” and then evict the original block from the KV cache. The model continues reasoning from mementos alone, cutting peak KV cache memory by 2-3x while nearly doubling throughput.

Block-and-compress architecture: The model learns to mark reasoning boundaries using special tokens, produce a terse summary capturing key conclusions and intermediate values, and then drop the full block from context. From that point forward, the model sees only past mementos plus the current active block, keeping context compact without losing critical information.
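
The control flow can be sketched as a context that holds past mementos plus one active block. This is a minimal sketch under assumptions: in Memento the model itself emits the boundary tokens and writes the summary, and eviction happens in the KV cache (the released vLLM fork masks cache blocks) rather than in a Python list. `MementoContext`, `close_block`, and the toy summarizer are hypothetical names.

```python
from typing import Callable


class MementoContext:
    """Keeps only past mementos plus the current active reasoning block."""

    def __init__(self, summarize: Callable[[list[str]], list[str]]):
        self.summarize = summarize
        self.mementos: list[list[str]] = []  # compact summaries of finished blocks
        self.active: list[str] = []          # tokens of the block being written
        self.peak = 0                        # largest visible context seen so far

    def visible(self) -> list[str]:
        """What the model attends to: every memento, then the active block."""
        return [tok for m in self.mementos for tok in m] + self.active

    def append(self, token: str) -> None:
        self.active.append(token)
        self.peak = max(self.peak, len(self.visible()))

    def close_block(self) -> None:
        """Block boundary: compress the finished block into a memento, then evict it."""
        self.mementos.append(self.summarize(self.active))
        self.active = []


# Toy summarizer (an assumption): keep only the block's last token, e.g. its result.
ctx = MementoContext(summarize=lambda block: block[-1:])
total = 0
for block in (["a"] * 50 + ["x=3"], ["b"] * 50 + ["y=7"], ["c"] * 50 + ["x+y=10"]):
    for tok in block:
        ctx.append(tok)
        total += 1
    ctx.close_block()
print(total, ctx.peak, ctx.visible())  # 153 53 ['x=3', 'y=7', 'x+y=10']
```

Here the peak visible context is 53 tokens against 153 generated; real ratios depend on block and memento lengths.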

KV cache reduction with minimal accuracy loss: Applied to five models (Qwen2.5-7B, Qwen3-8B, Qwen3-32B, Phi-4 Reasoning 14B, and OLMo3-7B-Think), Memento achieves 2-3x peak KV cache reduction with small accuracy gaps that shrink at larger scales. Erased blocks still leave useful traces: the cached states of later tokens, including the mementos, were computed while those blocks were visible, and the model draws on that information.
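
Why peak KV cache matters: cache size grows linearly with the number of visible tokens. A common estimate per token is 2 (keys and values) × layers × KV heads × head dimension × bytes per element. The model configuration and token counts below are illustrative assumptions, not numbers from the paper.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int, head_dim: int, bytes_per_elem: int = 2) -> int:
    """Approximate KV cache size: one key and one value vector per layer, KV head, and token."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * tokens


# Illustrative grouped-query-attention config (assumed): 36 layers, 8 KV heads, head_dim 128, bf16.
cfg = dict(layers=36, kv_heads=8, head_dim=128)
per_token = kv_cache_bytes(1, **cfg)
full_trace = kv_cache_bytes(30_000, **cfg)    # hypothetical 30K-token chain of thought kept whole
memento_peak = kv_cache_bytes(12_000, **cfg)  # hypothetical peak once finished blocks are evicted
print(per_token, round(full_trace / 2**30, 2), full_trace / memento_peak)  # 147456 4.12 2.5
```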

Practical throughput gains: Beyond memory savings, the reduced context length directly translates to faster inference. The approach nearly doubles serving throughput, making it immediately useful for production deployments where both latency and memory are constraints.

Open resources: Microsoft released the full codebase under MIT license, the OpenMementos dataset containing 228K reasoning traces with block segmentation and compressed summaries, and a custom vLLM fork for KV cache block masking. Standard supervised fine-tuning on approximately 30K examples is sufficient to teach this capability.

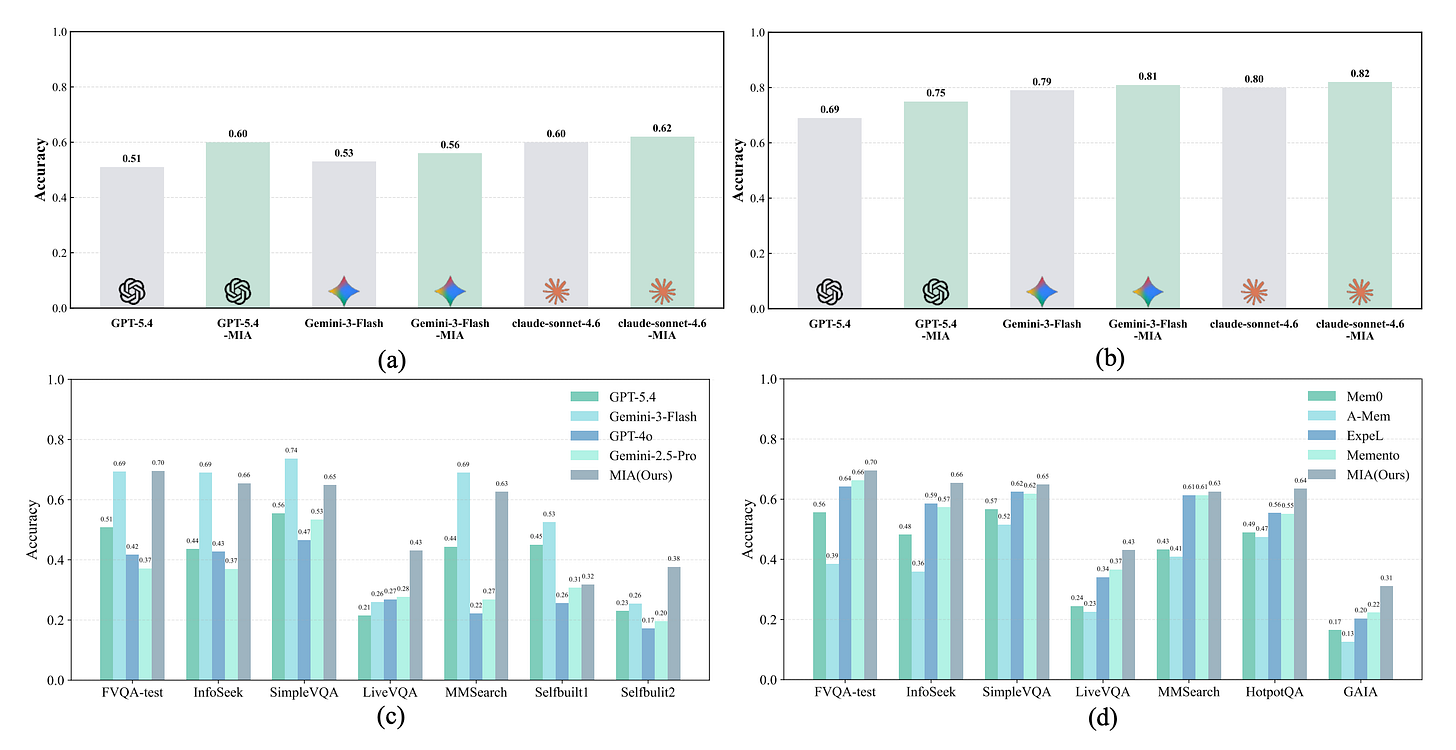

3. Memory Intelligence Agent (MIA)

Most memory-augmented research agents treat memory as a static retrieval store, so memory evolves inefficiently while storage costs keep rising. MIA introduces a Manager-Planner-Executor architecture where a Memory Manager maintains compressed search trajectories, a Planner generates strategies, and an Executor searches and analyzes information. The framework boosts GPT-5.4 by up to 9% on LiveVQA through bidirectional memory conversion.
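
The division of labor can be sketched as a simple loop. This is a minimal sketch under assumed interfaces: the class and function names are hypothetical, the Planner and Executor are stubs where MIA uses trained LLM policies, and "compression" here just keeps each trajectory's query and final step.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryManager:
    """Keeps compressed search trajectories rather than raw transcripts."""
    entries: list[dict] = field(default_factory=list)

    def compress(self, query: str, trajectory: list[str]) -> None:
        # Assumed compression: keep only the query and the trajectory's final step.
        self.entries.append({"query": query, "result": trajectory[-1]})

    def recall(self, query: str) -> list[dict]:
        # Toy relevance test: any shared lowercased word counts as a match.
        words = set(query.lower().split())
        return [e for e in self.entries if words & set(e["query"].lower().split())]


def plan(query: str, memories: list[dict]) -> list[str]:
    """Stub Planner: reuse a remembered result when one exists, otherwise search."""
    if memories:
        return [f"reuse:{memories[0]['result']}"]
    return [f"search:{query}", "analyze"]


def execute(steps: list[str]) -> list[str]:
    """Stub Executor: pretends to run each step and logs it."""
    return [f"ran {s}" for s in steps]


manager = MemoryManager()
for q in ("capital of France", "France capital city"):
    trajectory = execute(plan(q, manager.recall(q)))
    manager.compress(q, trajectory)
print(trajectory)  # ['ran reuse:ran analyze']
```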

Bidirectional memory conversion: MIA enables transformation between parametric memory (model weights) and non-parametric memory (retrieved context) in both directions. This allows the system to internalize frequently accessed knowledge while keeping rare or volatile information in retrievable form, optimizing both storage efficiency and access speed.
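
One way to picture the internalize-versus-retrieve decision is a frequency-based router. This heuristic is an assumption for illustration only: the description above does not say how MIA decides what to convert, and the thresholds and function name are hypothetical. "Internalizing" would mean folding an item into weights (for example by fine-tuning); here it only moves the item between two sets.

```python
from collections import Counter


def route_memory(access_log: list[str], parametric: set[str], retrievable: set[str],
                 promote_at: int = 3, demote_below: int = 1) -> tuple[set[str], set[str]]:
    """Move frequently used items into parametric memory and unused ones back to retrieval."""
    counts = Counter(access_log)
    parametric, retrievable = set(parametric), set(retrievable)
    for item in list(retrievable):
        if counts[item] >= promote_at:   # hot item: internalize
            retrievable.remove(item)
            parametric.add(item)
    for item in list(parametric):
        if counts[item] < demote_below:  # unused in this window: externalize
            parametric.remove(item)
            retrievable.add(item)
    return parametric, retrievable


log = ["tax rules", "tax rules", "tax rules", "breaking news"]
p, r = route_memory(log, parametric={"old fact"}, retrievable={"tax rules", "breaking news"})
print(sorted(p), sorted(r))  # ['tax rules'] ['breaking news', 'old fact']
```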

Alternating reinforcement learning: The three agents are trained through alternating RL, where each agent’s policy improves in response to the others’ behavior. This co-evolutionary training ensures the agents develop complementary strategies rather than competing for the same signal.
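
The alternating schedule can be sketched with block-coordinate gradient ascent on a toy shared reward, used here as a stand-in for RL: in each phase one agent updates while the other two stay frozen. The reward function, learning rate, and names are hypothetical and are not MIA's training objective.

```python
def joint_reward(theta: dict[str, float]) -> float:
    """Toy shared reward (an assumption): agents score well only when their behaviors mesh."""
    m, p, e = theta["manager"], theta["planner"], theta["executor"]
    return -((m - p) ** 2) - ((p - e) ** 2) - ((e - 1.0) ** 2)


def alternate(theta: dict[str, float], phases: int, lr: float = 0.2, eps: float = 1e-4) -> dict[str, float]:
    """One agent updates per phase while the others stay frozen: manager, planner, executor, repeat."""
    names = list(theta)
    for phase in range(phases):
        name = names[phase % len(names)]
        up = {**theta, name: theta[name] + eps}
        down = {**theta, name: theta[name] - eps}
        grad = (joint_reward(up) - joint_reward(down)) / (2 * eps)  # central-difference slope
        theta = {**theta, name: theta[name] + lr * grad}  # ascent step for this agent only
    return theta


start = {"manager": 0.0, "planner": 0.0, "executor": 0.0}
final = alternate(start, phases=300)  # 100 turns per agent
print({k: round(v, 3) for k, v in final.items()})  # {'manager': 1.0, 'planner': 1.0, 'executor': 1.0}
```

Each agent climbs the shared reward given its partners' current behavior, so every update shifts the target for the others; that is the co-adaptation the paragraph describes.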

Test-time parametric updates: Unlike standard retrieval-augmented systems, MIA can update its parametric memory on-the-fly during inference. This test-time learning allows the agent to adapt to new domains and evolving information without retraining, maintaining relevance as the information landscape changes.

Broad benchmark coverage: The framework demonstrates improvements across 11 benchmarks spanning question answering, knowledge-intensive tasks, and long-form research synthesis. The up to 9% gain on LiveVQA is particularly notable because this visual question answering benchmark targets up-to-date knowledge, the kind of evolving information a static memory store handles poorly.

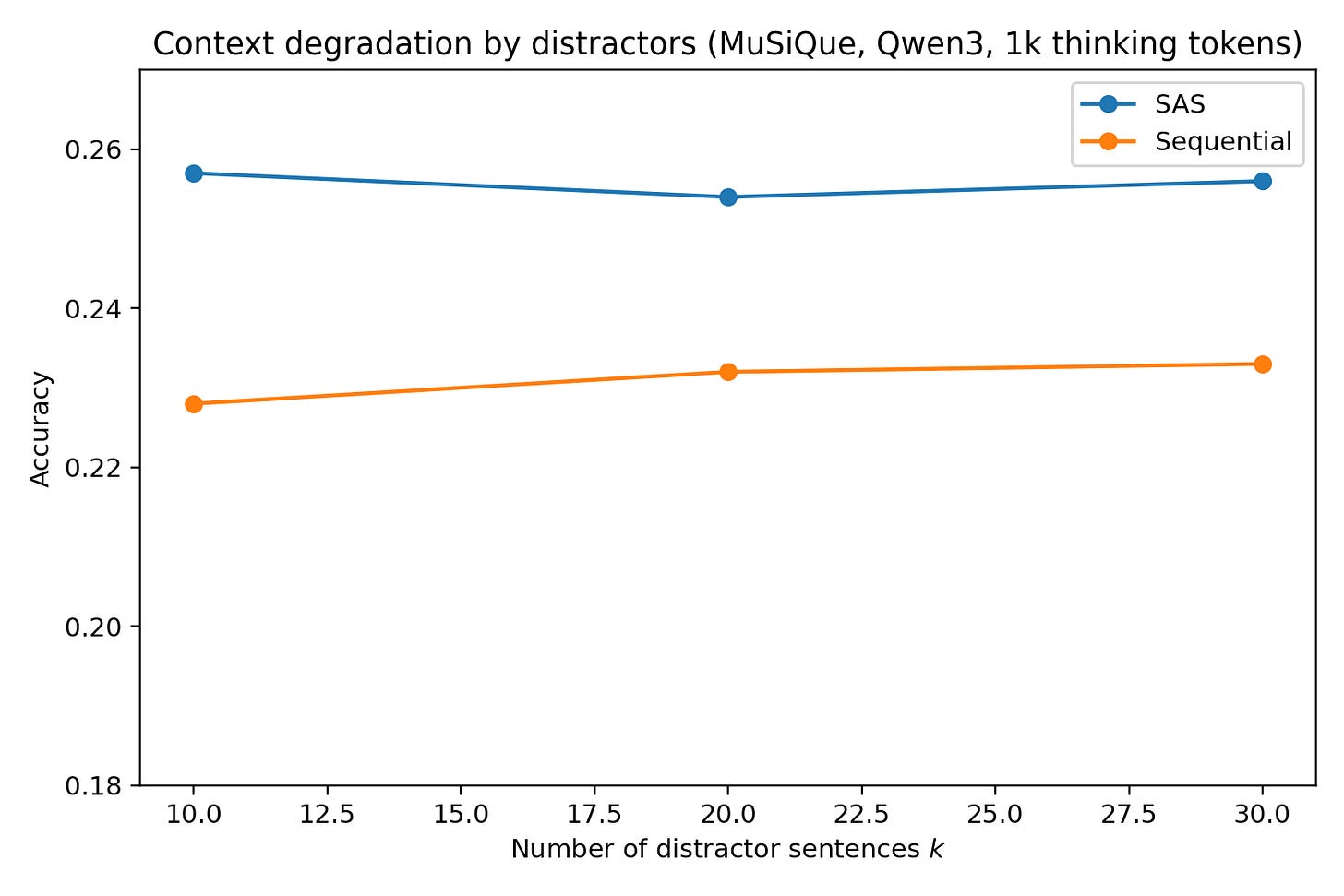

4. Single-Agent LLMs vs. Multi-Agent Systems

More agents, better results, right? Not so fast. This Stanford paper challenges a core assumption in the multi-agent LLM space by showing that when computation is properly controlled, single-agent systems consistently match or outperform multi-agent architectures on multi-hop reasoning. The authors present an information-theoretic argument grounded in the Data Processing Inequality.

Computation as the hidden confounder: Most reported multi-agent gains are confounded by increased test-time computation rather than architectural advantages. When reasoning token budgets are held constant, the performance gap disappears or reverses, suggesting that prior comparisons were inadvertently measuring compute scaling rather than coordination benefits.
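
Controlling for compute means fixing the total reasoning-token budget for the whole system, not per agent. A minimal sketch of that bookkeeping follows; the even split and the per-handoff message cost are illustrative assumptions, not the paper's protocol, and the function name is hypothetical.

```python
def per_agent_budget(total_tokens: int, n_agents: int, handoff_tokens: int = 0) -> int:
    """Reasoning tokens per agent when the whole system shares one fixed budget.

    Assumes an even split and that each of the (n_agents - 1) handoffs spends
    `handoff_tokens` of the shared budget on inter-agent messages.
    """
    usable = total_tokens - handoff_tokens * (n_agents - 1)
    if usable <= 0:
        raise ValueError("handoffs consume the entire budget")
    return usable // n_agents


budget = 8_000
print(per_agent_budget(budget, 1))       # 8000: the single agent reasons with the full budget
print(per_agent_budget(budget, 4, 200))  # 1850: each of 4 agents, after 3 handoffs
```

An uncontrolled comparison would instead give each of the four agents 8,000 tokens, quadrupling compute; that is the confound the authors identify.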

Information-theoretic foundation: The authors ground their analysis in the Data Processing Inequality, arguing that under a fixed reasoning-token budget with perfect context utilization, single-agent systems are inherently more information-efficient. Distributing reasoning across agents introduces information loss at each handoff.
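
For reference, the Data Processing Inequality in its standard form: if the task input X and successive agent messages form a Markov chain, no handoff can increase the information about X. Treating each agent's message Y_i as depending on X only through the previous message is an illustrative assumption about how the argument applies; the inequality itself is textbook.

```latex
X \to Y_1 \to Y_2 \to \cdots \to Y_k
\quad\Longrightarrow\quad
I(X; Y_k) \le I(X; Y_{k-1}) \le \cdots \le I(X; Y_1) \le H(X)
```

Under this reading, a single agent that keeps X itself in context avoids the chain of lossy handoffs altogether.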

Benchmark artifacts inflate MAS gains: Testing across Qwen3, DeepSeek-R1-Distill-Llama, and Gemini 2.5, the study identifies significant evaluation artifacts, particularly in API-based budget control for Gemini 2.5, that inflate apparent multi-agent advantages. Standard benchmarks also contain structural biases favoring multi-agent decomposition.

Practical implications for system design: The findings suggest that teams should explicitly control for compute, context, and coordination trade-offs before committing to multi-agent architectures. In many cases, allocating the same token budget to a single agent with richer context yields stronger results at lower system complexity.

Message from the Editor

Excited to announce our new on-demand course “Vibe Coding AI Apps with Claude Code”. Learn how to leverage Claude Code features to vibe code production-grade AI-powered apps.

5. The Universal Verifier for Agent Benchmarks

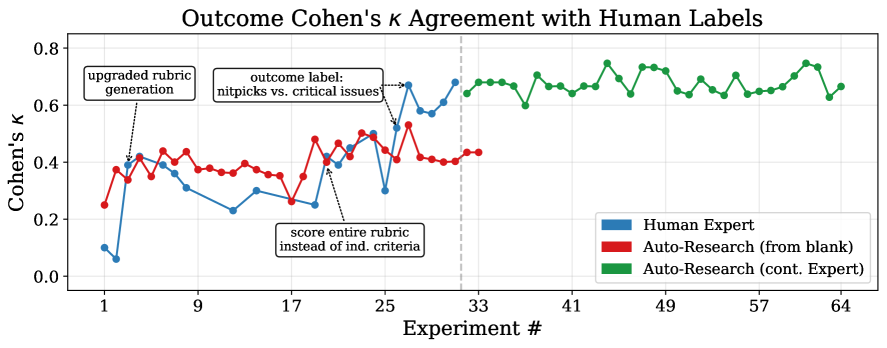

Every agent benchmark has the same hidden problem: how do you know the agent actually succeeded? Microsoft researchers introduce the Universal Verifier, built on four design principles for reliable evaluation of computer-use agent trajectories. The verifier reduces false positive rates to near zero, down from 45%+ with WebVoyager and 22%+ with WebJudge.

Four design principles: The verifier combines (1) non-overlapping rubric criteria, which reduce noise; (2) separate process and outcome rewards, which provide complementary signals; (3) cascading error-free assessment, which distinguishes controllable from uncontrollable failures; and (4) divide-and-conquer context management, which attends to every screenshot in a trajectory.
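
The four principles map naturally onto code structure. The sketch below is an illustration under assumptions, not Microsoft's verifier: simple per-step evidence sets stand in for a judge model reading screenshots, and the failure taxonomy, rubric, chunk size, and names are hypothetical. It scores each non-overlapping criterion once, keeps process and outcome scores separate, counts only controllable errors against the agent, and splits the trajectory into chunks so every screenshot is inspected.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    screenshot: str              # stand-in for an image
    satisfied: set[str]          # rubric criteria this step provides evidence for
    error: Optional[str] = None  # e.g. "agent_misclick" or "site_down"


UNCONTROLLABLE = {"site_down", "captcha"}  # hypothetical failure taxonomy


def chunks(steps: list[Step], size: int) -> list[list[Step]]:
    """Divide and conquer: split the trajectory so no screenshot is skipped."""
    return [steps[i:i + size] for i in range(0, len(steps), size)]


def verify(steps: list[Step], rubric: list[str], outcome_criterion: str, chunk_size: int = 4) -> dict:
    evidence: set[str] = set()
    controllable_errors = 0
    for part in chunks(steps, chunk_size):  # in a real system each chunk would go to a judge model
        for step in part:
            evidence |= step.satisfied
            if step.error and step.error not in UNCONTROLLABLE:
                controllable_errors += 1    # only the agent's own mistakes count against it
    # Non-overlapping criteria: each is scored exactly once, so evidence is never double-counted.
    process = sum(c in evidence for c in rubric) / len(rubric)
    outcome = outcome_criterion in evidence
    return {"process": process, "outcome": outcome, "controllable_errors": controllable_errors}


traj = [
    Step("s0.png", {"opened_site"}),
    Step("s1.png", set(), error="site_down"),
    Step("s2.png", {"searched_item"}),
    Step("s3.png", {"added_to_cart"}, error="agent_misclick"),
    Step("s4.png", {"checkout_done"}),
]
rubric = ["opened_site", "searched_item", "added_to_cart", "checkout_done"]
print(verify(traj, rubric, outcome_criterion="checkout_done"))
# {'process': 1.0, 'outcome': True, 'controllable_errors': 1}
```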

Near-zero false positives: Current verifiers suffer from alarmingly high false positive rates that corrupt both benchmark scores and training data. The Universal Verifier achieves agreement with human judges that matches inter-human agreement rates, making it reliable enough for both evaluation and RL reward signal generation.
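
For a verifier, a false positive is a trajectory judged successful that actually failed, so every false positive becomes a reward for failure when the verifier drives RL. A minimal sketch of the two quantities discussed here, false positive rate against human labels and raw agreement, follows; the labels are made up for illustration.

```python
def false_positive_rate(verdicts: list[bool], human: list[bool]) -> float:
    """Share of human-judged failures that the verifier wrongly marks as successes."""
    on_failures = [v for v, h in zip(verdicts, human) if not h]
    return sum(on_failures) / len(on_failures) if on_failures else 0.0


def agreement(a: list[bool], b: list[bool]) -> float:
    """Fraction of trajectories on which two judges give the same verdict."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


human = [True, False, False, True, False, False]
lenient = [True, True, False, True, True, False]  # hypothetical over-permissive verifier
strict = [True, False, False, True, False, False]
print(false_positive_rate(lenient, human), false_positive_rate(strict, human))  # 0.5 0.0
print(agreement(lenient, human), agreement(strict, human))
```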

Cumulative design gains: No single design choice dominates the performance improvement. The authors demonstrate that gains result from the cumulative effect of all four principles working together, with each contributing meaningful improvements that compound rather than any one serving as a silver bullet.

Limits of automated research: An interesting meta-finding: the team used an auto-research agent to replicate the verifier design process. The agent reached 70% of expert verifier quality in 5% of the time but could not discover the structural design decisions that drove the biggest gains, suggesting human insight remains essential for system-level design.

6. Scaling Coding Agents via Atomic Skills

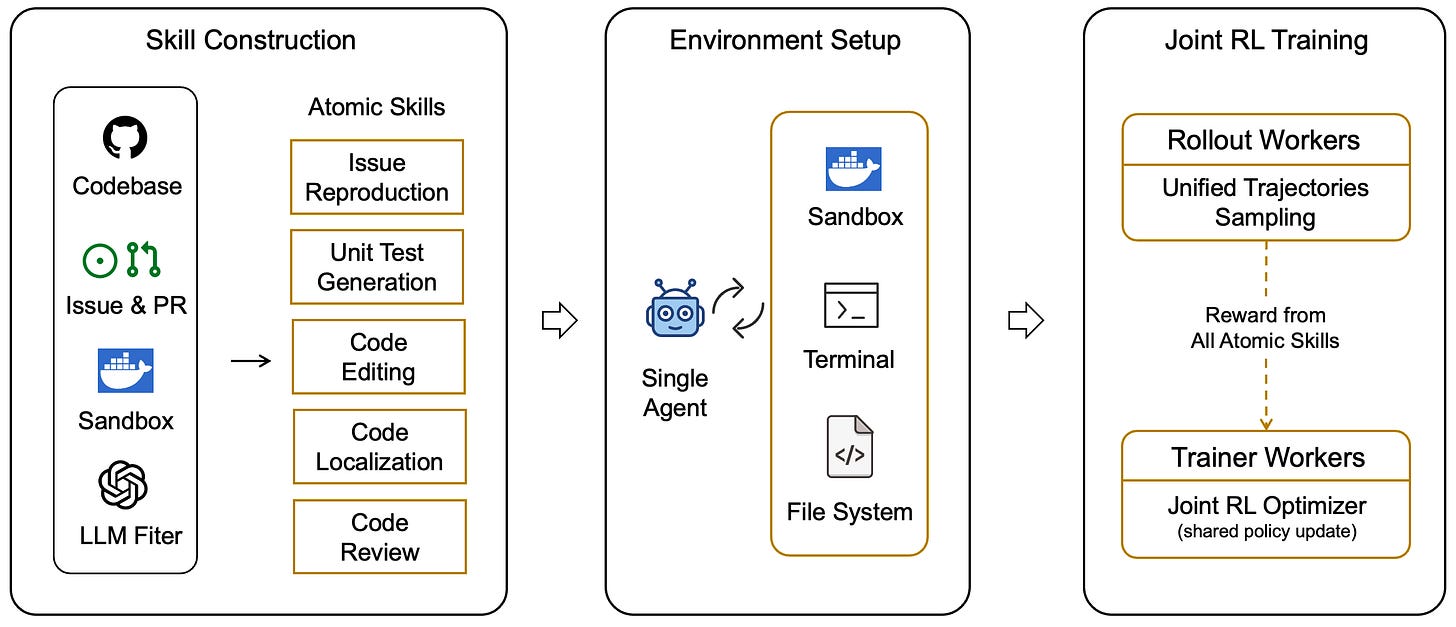

Most coding agents train end-to-end on full tasks like resolving GitHub issues, leading to task-specific overfitting that limits generalization. This paper proposes a different approach: identifying five atomic coding skills (code localization, code editing, unit-test generation, issue reproduction, and code review) and training agents through joint reinforcement learning over these foundational competencies.

Atomic skill decomposition: Instead of treating software engineering as monolithic composite tasks, the framework formalizes five fundamental operations that compose into higher-level capabilities. Think of it as teaching an agent the alphabet of coding rather than memorizing specific sentences, enabling flexible recombination across novel task types.

Joint RL across skills: The agents are trained through joint reinforcement learning that optimizes performance across all five atomic skills simultaneously. This joint training produces representations that capture the underlying structure shared across coding operations rather than surface-level patterns tied to specific benchmarks.
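
"Joint" training typically means each RL batch mixes tasks from all skills, so a single policy update sees every skill. A minimal sketch of a balanced batch sampler follows; the per-skill task pools and names are hypothetical, and the paper's actual sampling scheme and RL algorithm are not described here.

```python
import random

SKILLS = ["code_localization", "code_editing", "unit_test_generation", "issue_reproduction", "code_review"]


def mixed_batch(pools: dict[str, list[str]], batch_size: int, rng: random.Random) -> list[tuple[str, str]]:
    """Draw near-equal numbers of tasks from every skill so one update covers all of them."""
    per_skill, extra = divmod(batch_size, len(pools))
    batch: list[tuple[str, str]] = []
    for i, (skill, tasks) in enumerate(pools.items()):
        k = per_skill + (1 if i < extra else 0)  # spread the remainder over the first skills
        batch += [(skill, rng.choice(tasks)) for _ in range(k)]
    rng.shuffle(batch)  # interleave skills within the batch
    return batch


pools = {s: [f"{s}_task_{j}" for j in range(100)] for s in SKILLS}
batch = mixed_batch(pools, batch_size=32, rng=random.Random(0))
counts = {s: sum(1 for skill, _ in batch if skill == s) for s in SKILLS}
print(counts)
# {'code_localization': 7, 'code_editing': 7, 'unit_test_generation': 6, 'issue_reproduction': 6, 'code_review': 6}
```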

Strong generalization to unseen tasks: Joint RL improves average performance by 18.7% across the five atomic skills and five composite tasks. The gains extend to composite tasks that were never directly optimized during training, including bug-fixing, code refactoring, ML engineering, and code security.

A new scaling paradigm: The authors argue that scaling coding agents through foundational skill mastery is more sample-efficient and transferable than task-level optimization. As the number and complexity of software engineering tasks grow, this compositional approach offers a more sustainable path than continuously expanding task-specific training sets.

7. Agent Skills in the Wild

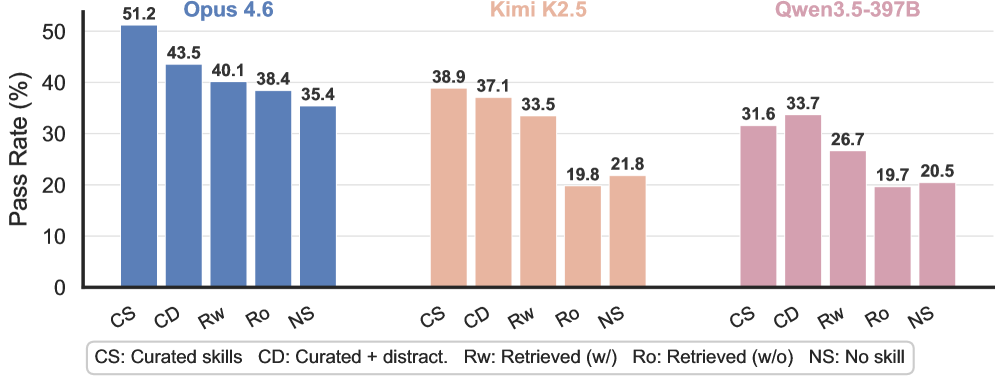

Agent skills look great in demos. Hand them a curated toolbox, and they shine. But what happens when the agent has to find the right skill from a library of 34,000? This paper from UC Santa Barbara and MIT presents the first comprehensive study of skill utility under progressively realistic settings, revealing that the benefits of skills are far more fragile than current evaluations suggest.

Progressive difficulty framework: The study moves from idealized conditions with hand-crafted, task-specific skills to realistic scenarios requiring retrieval from 34K real-world skills. Performance gains degrade consistently at each step, with pass rates approaching no-skill baselines in the most challenging scenarios.

Retrieval as the bottleneck: The core failure mode is not skill execution but skill selection. When agents must identify the right skill from a massive library, the retrieval step introduces errors that cascade through execution, highlighting a fundamental gap between demo-ready and production-ready skill systems.
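
The cascade is easy to see with the law of total probability: end-to-end success mixes the success rate with the right skill and the rate with a wrong or irrelevant one, weighted by retrieval accuracy. The rates below are hypothetical, not figures from the study.

```python
def end_to_end_success(p_retrieve: float, p_exec_right: float, p_exec_wrong: float) -> float:
    """Law of total probability over whether the retriever surfaced the right skill.

    p_exec_wrong is the success rate when a wrong or irrelevant skill is loaded,
    roughly the no-skill baseline if the agent can ignore bad skills.
    """
    return p_retrieve * p_exec_right + (1 - p_retrieve) * p_exec_wrong


# Hypothetical rates: 70% with the right skill, 40% without a useful one.
for p_retrieve in (1.0, 0.6, 0.3, 0.1):
    print(p_retrieve, round(end_to_end_success(p_retrieve, 0.70, 0.40), 2))
# 1.0 0.7
# 0.6 0.58
# 0.3 0.49
# 0.1 0.43
```

As retrieval accuracy falls, success converges on the no-skill baseline regardless of how good the skills themselves are.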

Refinement strategies help but do not solve: Query-specific and query-agnostic refinement approaches show improvement, with Claude Opus 4.6 going from 57.7% to 65.5% on Terminal-Bench 2.0. However, even with refinement, performance under realistic retrieval conditions remains well below idealized baselines.

Implications for skill ecosystems: As the ecosystem of agent skills grows through frameworks like MCP, the findings suggest that simply expanding the skill library creates diminishing returns without corresponding advances in skill discovery. Quality of skill retrieval may matter more than quantity of available skills.

8. MedGemma 1.5

Google releases the MedGemma 1.5 technical report, introducing a 4B-parameter medical AI model that expands capabilities to 3D medical imaging (CT/MRI volumes), whole-slide pathology, multi-timepoint chest X-ray analysis, and improved medical document understanding. The model achieves notable gains, including a +47% macro F1 improvement on whole-slide pathology and +22% on EHR question answering, positioning it as an open foundation for next-generation medical AI systems.

9. LightThinker++: From Reasoning Compression to Memory Management

While LLMs excel at complex reasoning, long thought traces quickly accumulate context overhead. LightThinker++ moves beyond static compression by introducing three explicit memory primitives: Commit (archive a step as a compact summary), Expand (retrieve past steps for verification), and Fold (collapse context to maintain a clean signal). The framework reduces peak token usage by 70% while improving accuracy by 2.42% on standard reasoning tasks, and it remains stable beyond 80 rounds on long-horizon agentic tasks with a 14.8% average performance improvement.
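
The three primitives can be sketched as operations on a small memory structure. This is an illustration under assumptions, not the LightThinker++ implementation: there the model decides when to call each primitive and writes the summaries, whereas here the caller does. Reading Fold as "collapse any expanded steps back to their summaries" is also an assumption, and all names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ThoughtMemory:
    archive: list[str] = field(default_factory=list)    # full text of committed steps
    summaries: list[str] = field(default_factory=list)  # compact form kept in context
    expanded: set[int] = field(default_factory=set)     # steps temporarily restored in full

    def commit(self, step: str, summary: str) -> int:
        """Commit: archive a finished step and keep only its summary in context."""
        self.archive.append(step)
        self.summaries.append(summary)
        return len(self.archive) - 1

    def expand(self, idx: int) -> str:
        """Expand: bring a past step back in full, e.g. to verify it."""
        self.expanded.add(idx)
        return self.archive[idx]

    def fold(self) -> None:
        """Fold (assumed reading): collapse every expanded step back to its summary."""
        self.expanded.clear()

    def context(self) -> list[str]:
        """What the model currently sees."""
        return [self.archive[i] if i in self.expanded else s for i, s in enumerate(self.summaries)]


mem = ThoughtMemory()
mem.commit("Long derivation showing x = 3 from 2x + 1 = 7", "x = 3")
mem.commit("Long derivation showing y = 7 from y - x = 4", "y = 7")
mem.expand(0)
print(mem.context())  # ['Long derivation showing x = 3 from 2x + 1 = 7', 'y = 7']
mem.fold()
print(mem.context())  # ['x = 3', 'y = 7']
```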

10. Thinking Mid-training: RL of Interleaved Reasoning

Meta FAIR addresses the gap between pretraining (no explicit reasoning) and post-training (reasoning-heavy) with an intermediate SFT+RL mid-training phase. The approach annotates pretraining data with interleaved reasoning traces, then uses supervised fine-tuning followed by RL to teach models when and how to think during continued pretraining. Applied to Llama-3-8B, the full pipeline achieves a 3.2x improvement on reasoning benchmarks compared to direct RL post-training, demonstrating that reasoning benefits from being trained as native behavior early in the pipeline.
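
At the data level, "annotating pretraining data with interleaved reasoning traces" could look like the sketch below. The `<think>` delimiters, the fixed-size chunking rule, and the `stub` annotator (an LLM call in a real pipeline) are all assumptions; the paper's exact format is not given here.

```python
from typing import Callable


def interleave(document: str, annotate: Callable[[str, str], str], chunk_words: int = 8) -> str:
    """Split a document into chunks and insert a thought before each continuation."""
    words = document.split()
    if not words:
        return ""
    chunks = [" ".join(words[i:i + chunk_words]) for i in range(0, len(words), chunk_words)]
    out = [chunks[0]]
    for prev, nxt in zip(chunks, chunks[1:]):
        thought = annotate(prev, nxt)  # e.g. an LLM reasoning about what comes next and why
        out.append(f"<think>{thought}</think>")
        out.append(nxt)
    return " ".join(out)


def stub(prev: str, nxt: str) -> str:
    # Placeholder annotator: a real pipeline would generate a reasoning trace here.
    return f"next: {nxt.split()[0]}"


doc = "Water boils at 100 C at sea level. At altitude the pressure drops, so it boils at a lower temperature."
print(interleave(doc, stub))
```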
