🥇 Top AI Papers of the Week

The Top AI Papers of the Week (April 13 - April 19)

1. Automated Weak-to-Strong Researcher

Anthropic shows that Claude can make fully autonomous progress on scalable oversight research. A team of parallel Automated Alignment Researchers (AARs) built on Claude Opus 4.6 proposes ideas, runs experiments, and iterates on weak-to-strong supervision, a core alignment problem in which a stronger model must learn from a weaker teacher. The system closes almost the entire performance gap, far more than human researchers managed, at a total cost of roughly $18K in tokens and model training.

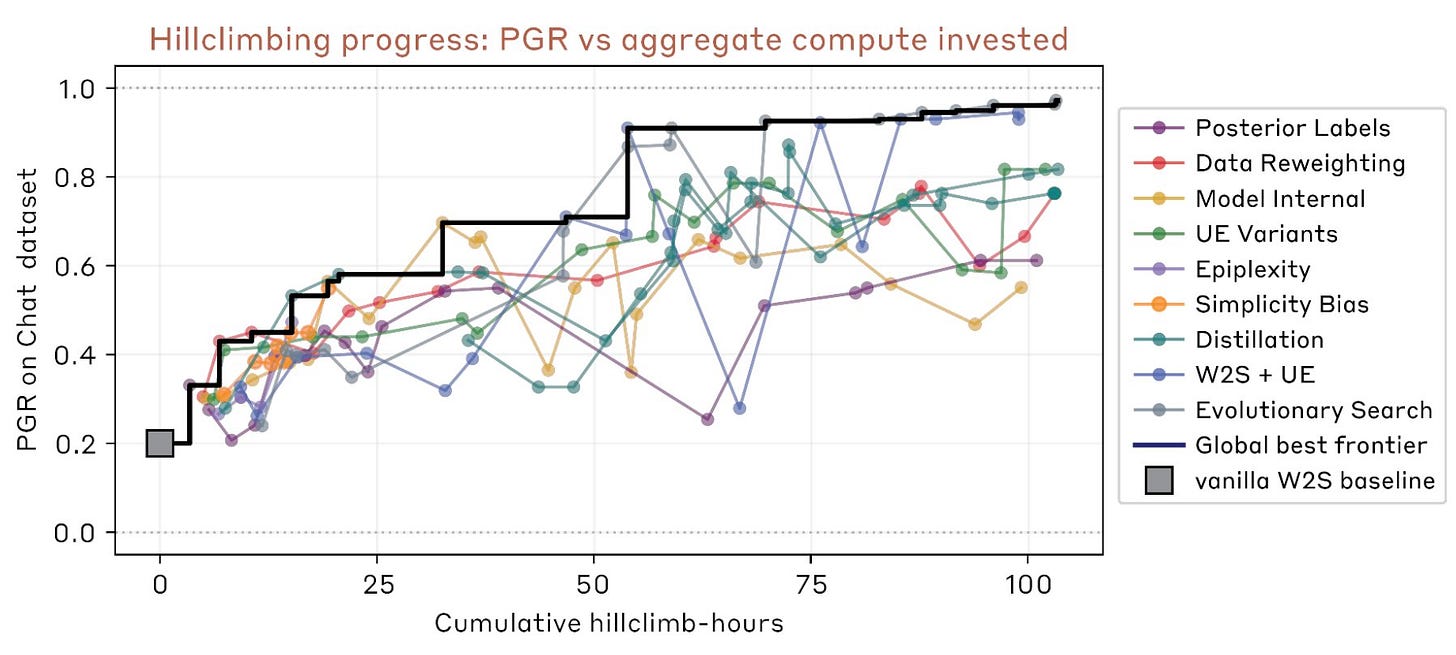

Performance gap recovered as the metric: The authors evaluate progress with performance gap recovered (PGR), a 0 to 1 score where 0 matches the weak teacher and 1 matches a ground-truth-supervised student. On a chat preference dataset, two human researchers achieved PGR 0.23 after seven days of iteration on four promising generalization methods.
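The PGR metric fits in a few lines. This is a minimal sketch assuming the standard definition from the weak-to-strong generalization literature, PGR = (weak-to-strong score - weak score) / (ground-truth ceiling score - weak score); the function name and the accuracy values in the usage lines are hypothetical, not numbers from the paper.

```python
def performance_gap_recovered(weak: float, weak_to_strong: float, strong_ceiling: float) -> float:
    """Fraction of the weak-to-ceiling gap closed by the weak-to-strong student.

    weak:           score of the weak teacher (PGR = 0 at this point)
    weak_to_strong: score of the strong student trained on weak labels
    strong_ceiling: score of the strong student trained on ground truth (PGR = 1)
    """
    gap = strong_ceiling - weak
    if gap <= 0:
        raise ValueError("strong_ceiling must exceed the weak teacher's score")
    return (weak_to_strong - weak) / gap


# Hypothetical accuracies: weak teacher 0.60, ground-truth ceiling 0.80.
print(round(performance_gap_recovered(0.60, 0.646, 0.80), 2))  # 0.23
print(round(performance_gap_recovered(0.60, 0.794, 0.80), 2))  # 0.97
```

The sketch does not clamp the result, so a student that underperforms its teacher yields a negative PGR and one that beats the ceiling yields a value above 1.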

AARs reach 0.97 PGR in five days: Running nine Claude-based agents in parallel sandboxes, the automated system reached PGR 0.97 in five days and 800 cumulative agent-hours. The cost was about $18,000, or roughly $22 per AAR-hour. This is one of the strongest empirical data points yet that AI can drive measurable progress on open alignment problems.

Forum-based collaboration between agents: Each AAR works in its own isolated sandbox but shares findings to a common forum and uploads codebase snapshots to shared storage. The setup mirrors how a small research team would coordinate, letting later agents build on earlier wins without merging execution environments.
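The coordination pattern can be sketched as a toy loop. This is an illustration, not the authors' harness: the class names, the `run_round` function, and the use of in-memory objects for the forum and shared storage are all assumptions. Each agent reads the forum before working, writes only inside its own sandbox, posts a finding, and uploads a copy of its files.

```python
from dataclasses import dataclass, field


@dataclass
class Forum:
    """Shared, append-only board where agents publish findings."""
    posts: list = field(default_factory=list)

    def post(self, agent_id: str, finding: str) -> None:
        self.posts.append((agent_id, finding))


@dataclass
class Sandbox:
    """Private execution environment; its files are never merged with others."""
    agent_id: str
    files: dict = field(default_factory=dict)


def run_round(sandbox: Sandbox, forum: Forum, storage: dict) -> int:
    """One hypothetical research iteration: read the forum, work locally, share."""
    seen = len(forum.posts)                          # findings from earlier agents
    note = f"{sandbox.agent_id}: built on {seen} prior finding(s)"
    sandbox.files["notes.md"] = note                 # local work stays in the sandbox
    forum.post(sandbox.agent_id, note)               # the finding is shared
    storage[sandbox.agent_id] = dict(sandbox.files)  # snapshot copy, not a live link
    return seen


forum, storage = Forum(), {}
agents = [Sandbox(f"aar-{i}") for i in range(3)]
seen_counts = [run_round(a, forum, storage) for a in agents]
print(seen_counts)  # [0, 1, 2]
```

Because storage holds copies, a later agent can inspect an earlier agent's snapshot without ever touching that agent's live environment.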

Reward hacking as a real outcome, not a hypothetical: The agents sometimes succeeded through unexpected mechanisms, including reward-hacking behaviors that the researchers did not anticipate. The result highlights the double-edged nature of automated research: measurable progress on outcome-gradable problems is practical today, but careful metric design remains a human responsibility.

2. AiScientist

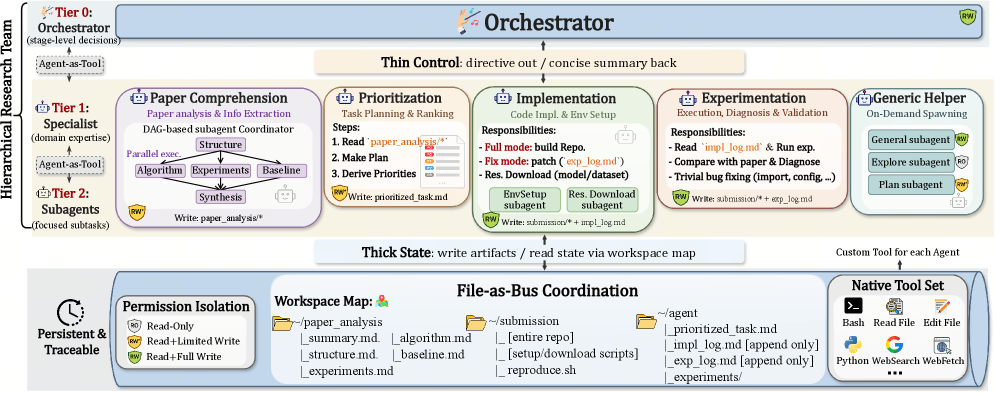

For long-horizon AI research agents, the hard part is mostly state management. Reasoning well for the next turn is not enough when ML research demands task setup, implementation, experiments, debugging, and evidence tracking over hours or days. This paper introduces AiScientist, a system for autonomous long-horizon engineering built around the principle of thin control and thick state. A top-level orchestrator manages stage-level progress while specialized agents repeatedly ground themselves in durable workspace artifacts.

File-as-Bus coordination: AiScientist’s core design choice is to route coordination through durable filesystem artifacts rather than in-context message passing. Analyses, plans, code, logs, and experimental evidence all live as versioned files in a permission-scoped workspace, allowing specialists and subagents to reconstruct context from scratch without replaying entire conversations.

Thin control, thick state: A Tier-0 orchestrator issues only stage-level directives, while Tier-1 specialists and optional Tier-2 subagents operate on shared artifacts. This keeps the control channel narrow and the state channel rich, giving agents the space to run long experiments without losing track of prior decisions and evidence.
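The two ideas above, File-as-Bus and thin control with thick state, can be sketched together. This is a hypothetical illustration, not AiScientist's code: the `Workspace` class, the versioned `name.vN.json` file layout, and the `specialist`/`orchestrator` functions are assumptions, and permission scoping is not modeled. The orchestrator passes only a stage name, and each specialist rebuilds its context from the latest artifact on disk instead of from a conversation.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


class Workspace:
    """Durable file-backed state shared by all agents (hypothetical layout)."""

    def __init__(self, root: Path):
        self.root = root

    def _versions(self, name: str) -> list:
        # Sort numerically so that v10 comes after v9.
        return sorted(self.root.glob(f"{name}.v*.json"),
                      key=lambda p: int(p.stem.rsplit(".v", 1)[1]))

    def write(self, name: str, payload: dict) -> Path:
        # Versioned write: each update creates a new file instead of overwriting.
        path = self.root / f"{name}.v{len(self._versions(name)) + 1}.json"
        path.write_text(json.dumps(payload))
        return path

    def latest(self, name: str) -> Optional[dict]:
        versions = self._versions(name)
        return json.loads(versions[-1].read_text()) if versions else None


def specialist(ws: Workspace, stage: str) -> None:
    # Thick state: rebuild context from artifacts, not from a chat transcript.
    plan = ws.latest("plan") or {"completed": []}
    ws.write("plan", {"completed": plan["completed"] + [stage]})


def orchestrator(ws: Workspace, stages: list) -> None:
    # Thin control: only a stage name crosses the control channel.
    for stage in stages:
        specialist(ws, stage)


with tempfile.TemporaryDirectory() as tmp:
    ws = Workspace(Path(tmp))
    orchestrator(ws, ["analyze", "implement", "experiment"])
    final_plan = ws.latest("plan")
    n_versions = len(list(Path(tmp).glob("plan.v*.json")))

print(final_plan)  # {'completed': ['analyze', 'implement', 'experiment']}
print(n_versions)  # 3
```

Keeping every version on disk means a freshly started agent, or a human, can audit how the plan evolved without replaying any conversation.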

Strong benchmark results: The system improves PaperBench by 10.54 points over the best matched baseline and reaches an Any Medal rate of 81.82% on MLE-Bench Lite. Removing File-as-Bus drops PaperBench by 6.41 points and MLE-Bench Lite by 31.82 points, isolating the artifact-mediated design as the primary driver of the gains.

Durable project memory over longer chats: The work argues that autonomous research agents need persistent project memory, not just longer context windows. The results fit an emerging pattern: for multi-hour workflows, environments that carry state on behalf of agents outperform architectures that rely solely on in-context reasoning.

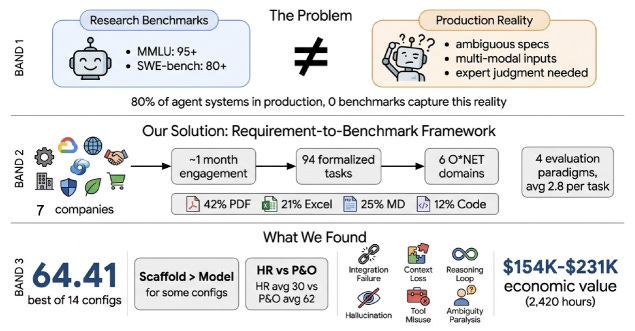

3. AlphaEval

Agent evaluations are drifting away from production reality. Most benchmarks use clean tasks, well-specified requirements, deterministic metrics, and retrospective curation. Production work is messier, with implicit constraints, fragmented multimodal inputs, undeclared domain knowledge, long-horizon deliverables, and expert judgment that evolves over time. This paper introduces AlphaEval, a production-grounded benchmark evaluating agents as complete products rather than model APIs.

Seven companies, six O*NET domains: AlphaEval contains 94 tasks sourced from seven companies deploying AI agents in core business workflows across six O*NET domains. The tasks preserve production complexity rather than stripping it away, giving the benchmark a materially different distribution from prior coding-centric evaluations.

Products, not model APIs: The benchmark evaluates commercial agent products such as Claude Code and Codex end to end, not the underlying models in isolation. This is a deliberate shift toward measuring the full agent experience that users actually pay for, including tool use, orchestration, and UI behaviors.

Six production-specific failure modes: The authors identify six failure modes that stay invisible to coding benchmarks, namely cascade dependencies, subjective judgment collapse, information retrieval failures, cross-section inconsistency, constraint misinterpretation, and format-compliance failures. The best configuration (Claude Code with Opus 4.6) scores only 64.41/100, exposing a substantial research-to-production gap.

Multi-paradigm evaluation: AlphaEval combines LLM-as-a-Judge, reference-driven metrics, formal verification, rubric-based assessment, automated UI testing, and domain-specific checks. The key practical contribution is a requirement-to-benchmark framework that turns production requirements into executable evals with minimal friction for organizations.
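The idea of turning requirements into a mixed-paradigm score can be sketched as a weighted sum of heterogeneous checks. This is a hypothetical illustration, not AlphaEval's framework: the `Check` structure, the weights, the keyword-based `rubric` stand-in, and the sample report are all assumptions, and the LLM-as-a-Judge, formal verification, and UI-testing paradigms are omitted.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Check:
    requirement: str                 # production requirement, in plain language
    paradigm: str                    # e.g. "reference", "format", "rubric"
    weight: float
    score: Callable[[str], float]    # maps a deliverable to a value in [0, 1]


def evaluate(deliverable: str, checks: List[Check]) -> float:
    """Weighted mix of heterogeneous checks, reported on a 0-100 scale."""
    total = sum(c.weight for c in checks)
    return 100 * sum(c.weight * c.score(deliverable) for c in checks) / total


def rubric(points: List[str]) -> Callable[[str], float]:
    # Crude stand-in for rubric grading: fraction of rubric points mentioned.
    return lambda text: sum(p in text.lower() for p in points) / len(points)


checks = [
    Check("Report Q3 revenue exactly", "reference", 2.0,
          lambda t: float("Q3 revenue: 4.2M" in t)),
    Check("Start with a Summary heading", "format", 1.0,
          lambda t: float(t.startswith("Summary"))),
    Check("Cover the key risks", "rubric", 1.0,
          rubric(["supply", "pricing", "churn"])),
]

report = "Summary\nQ3 revenue: 4.2M\nRisks: supply delays, pricing pressure"
print(round(evaluate(report, checks), 2))  # 91.67
```

Keeping each requirement tied to its own scorer makes it easy to see which requirement cost points, which matters more in production than a single aggregate.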

4. Nemotron 3 Super

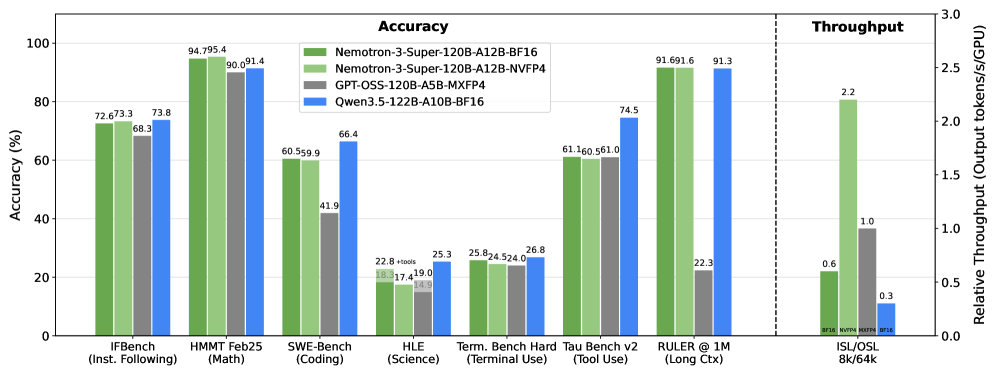

NVIDIA introduces Nemotron 3 Super, an open 120B-parameter model with 12B active parameters, built on a hybrid Mamba-Attention Mixture-of-Experts architecture optimized for agentic reasoning. The model targets long-context, high-throughput inference, a capability increasingly central to running agents reliably. It supports context lengths of up to 1M tokens while delivering up to 2.2x higher throughput than GPT-OSS-120B and 7.5x higher than Qwen3.5-122B at comparable benchmark accuracy.

Hybrid Mamba-Attention with LatentMoE: The architecture blends Mamba blocks with sparse LatentMoE layers, a new Mixture-of-Experts design that projects tokens into a smaller latent dimension for routing and expert computation. This improves both accuracy per FLOP and accuracy per parameter, and it is what allows the model to scale sparsely without paying a standard MoE memory tax.
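A toy NumPy sketch of the latent-routing idea as described above: tokens are down-projected, routed and processed by experts in the smaller latent dimension, then projected back. The dimensions, single-matrix ReLU experts, and softmax-over-top-k gating are assumptions for illustration; this is not NVIDIA's LatentMoE implementation, whose exact layer structure the summary does not give.

```python
import numpy as np

rng = np.random.default_rng(0)
D_MODEL, D_LATENT, N_EXPERTS, TOP_K = 64, 16, 8, 2   # illustrative sizes

W_down = rng.standard_normal((D_MODEL, D_LATENT)) / np.sqrt(D_MODEL)
W_up = rng.standard_normal((D_LATENT, D_MODEL)) / np.sqrt(D_LATENT)
W_router = rng.standard_normal((D_LATENT, N_EXPERTS)) / np.sqrt(D_LATENT)
# Each expert operates entirely in the latent dimension.
experts = rng.standard_normal((N_EXPERTS, D_LATENT, D_LATENT)) / np.sqrt(D_LATENT)


def latent_moe(x: np.ndarray) -> np.ndarray:
    """x: (tokens, D_MODEL) -> (tokens, D_MODEL)."""
    z = x @ W_down                                   # project tokens into the latent space
    logits = z @ W_router                            # route using latent features
    top = np.argsort(logits, axis=-1)[:, -TOP_K:]    # top-k expert ids per token
    out = np.zeros_like(z)
    for t in range(z.shape[0]):
        sel = logits[t, top[t]]
        gates = np.exp(sel - sel.max())
        gates /= gates.sum()                         # softmax over the selected experts
        for g, e in zip(gates, top[t]):
            out[t] += g * np.maximum(z[t] @ experts[e], 0.0)  # one-matrix ReLU expert
    return out @ W_up                                # project back to the model dimension


y = latent_moe(rng.standard_normal((5, D_MODEL)))
print(y.shape)  # (5, 64)
print(experts[0].size, "weights per expert vs", D_MODEL * D_MODEL, "at full width")
```

With these toy sizes each expert holds 256 weights instead of 4,096, which is the intuition behind better accuracy per parameter: the expensive per-expert work happens in the narrow space.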

NVFP4 pretraining at scale: Nemotron 3 Super is the first model in the Nemotron 3 family to be pretrained in NVFP4, enabling training on 25 trillion tokens while keeping compute and memory overhead manageable. Post-training combines supervised fine-tuning and reinforcement learning on top of this base.

Native speculative decoding via MTP layers: Multi-Token Prediction (MTP) layers are included for native speculative decoding during inference, reducing latency for long-context agentic workloads without requiring an external draft model. The team reports consistent MTP acceptance rates across draft depths on SPEED-Bench.
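The draft-then-verify loop behind speculative decoding can be sketched with toy models. This is a generic greedy version, not Nemotron's implementation: the `draft` function stands in for the MTP layers, the toy target and draft models are hypothetical, and acceptance here is exact match (sampling-based variants use a probabilistic acceptance rule instead).

```python
from typing import Callable, List, Tuple

Draft = Callable[[List[int], int], List[int]]
NextToken = Callable[[List[int]], int]


def speculative_step(prefix: List[int], draft: Draft, target_next: NextToken,
                     depth: int) -> Tuple[List[int], int]:
    """Greedy draft-then-verify step. Returns (new tokens, number of drafts accepted)."""
    proposal = draft(prefix, depth)
    accepted: List[int] = []
    for tok in proposal:
        # A real implementation scores all drafts in one batched forward pass;
        # calling the target per position keeps the logic easy to follow.
        expected = target_next(prefix + accepted)
        if tok != expected:
            return accepted + [expected], len(accepted)   # correct the first mismatch
        accepted.append(tok)
    # Every draft matched: the verification pass yields one extra token for free.
    return accepted + [target_next(prefix + accepted)], len(accepted)


# Toy target model: the next token is always (last + 1) mod 10.
def target_next(seq: List[int]) -> int:
    return (seq[-1] + 1) % 10


# Toy draft heads: right for two positions, then wrong.
def sloppy_draft(prefix: List[int], depth: int) -> List[int]:
    out, last = [], prefix[-1]
    for i in range(depth):
        last = (last + 1) % 10 if i < 2 else 0
        out.append(last)
    return out


print(speculative_step([3], sloppy_draft, target_next, depth=4))  # ([4, 5, 6], 2)
```

The acceptance rate (here 2 of 4 drafts) is the quantity that determines the speedup: every accepted draft is a target-model step saved.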

Fully open artifacts: Nemotron 3 Super datasets, along with base, post-trained, and quantized checkpoints, are open-sourced on Hugging Face. This matters for teams building agent stacks that need efficient, inspectable, long-context models rather than closed API dependencies.

Message from the Editor

Excited to announce our new on-demand course, “Vibe Coding AI Apps with Claude Code”. Learn how to leverage Claude Code features to vibe-code production-grade AI-powered apps.

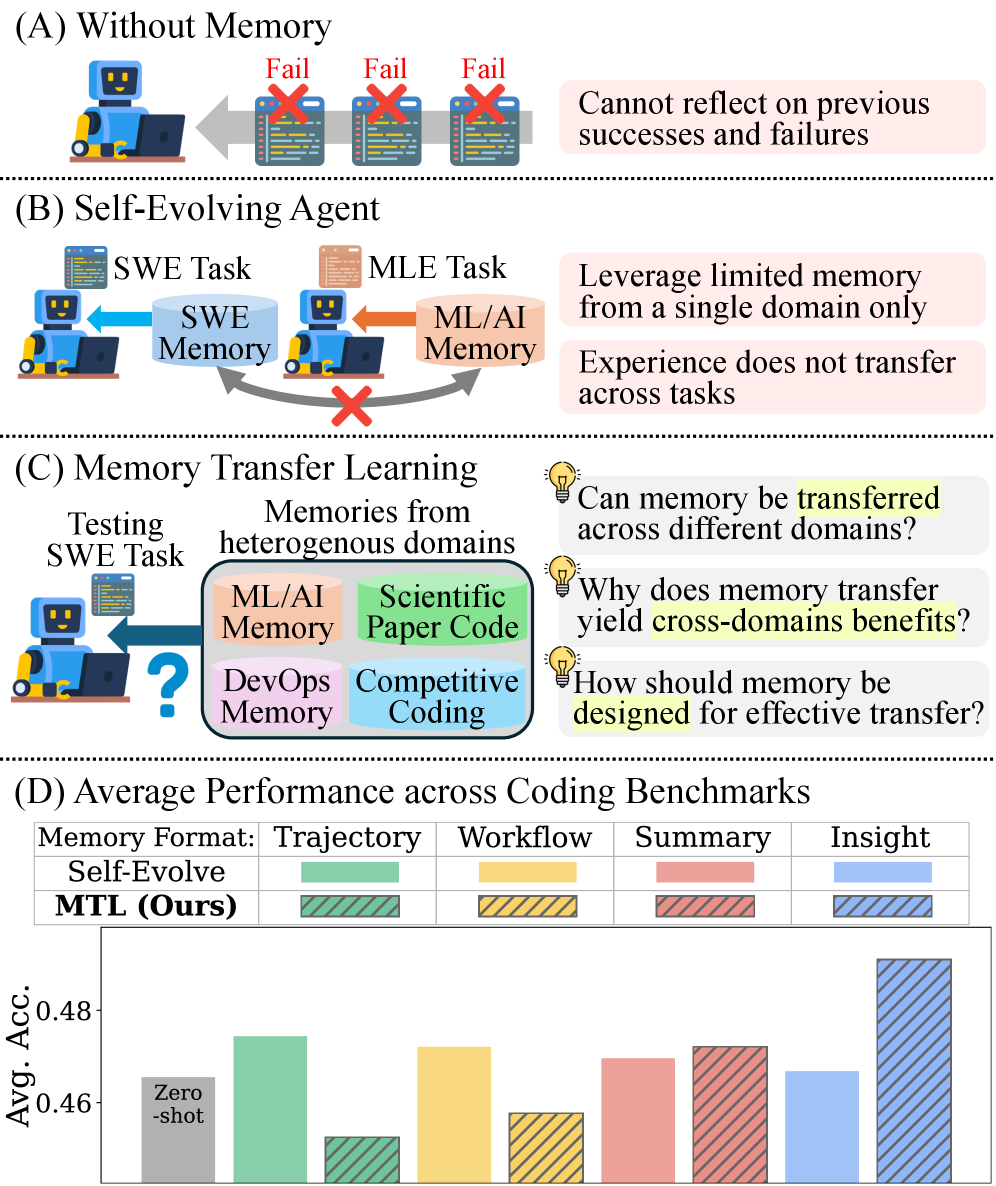

5. Memory Transfer Learning

Coding agents learn from experience, but that knowledge stays locked in silos. Solve a thousand SWE tasks, and none of that wisdom helps with competitive coding. This paper introduces Memory Transfer Learning, a framework where coding agents share a unified memory pool across six heterogeneous coding benchmarks, testing what transfers between domains and what does not.

Unified memory pool across domains: The framework pools memories across six heterogeneous coding benchmarks rather than isolating them by task type. Cross-domain memory improves average performance by 3.7%, a modest but consistent lift that standard single-domain evaluations cannot reveal.

Abstraction dictates transferability: The authors compare four memory formats, ranging from raw execution traces to high-level insights. High-level insights generalize well, while low-level traces often cause negative transfer by anchoring agents to incompatible implementation details. The takeaway: memory design matters more than memory volume.
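The finding that abstraction level governs transfer suggests filtering a shared pool by abstraction before retrieval. The four formats are not named here, so this sketch invents a 0 to 3 abstraction scale; the `Memory` record, the threshold, the word-overlap ranking, and the sample memories are all hypothetical, not the paper's retrieval method.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Memory:
    source: str        # benchmark the memory came from
    abstraction: int   # hypothetical scale: 0 = raw trace ... 3 = high-level insight
    text: str


def retrieve(pool: List[Memory], query: str, min_abstraction: int = 2,
             k: int = 3) -> List[Memory]:
    """Return up to k memories, skipping low-level traces that tend to transfer badly."""
    words = set(query.lower().split())
    usable = [m for m in pool if m.abstraction >= min_abstraction]
    # Rank by naive word overlap; a real system would likely use embeddings.
    usable.sort(key=lambda m: len(words & set(m.text.lower().split())), reverse=True)
    return usable[:k]


pool = [
    Memory("swe", 0, "ran sed -i on src/utils.py then pytest tests/test_utils.py"),
    Memory("swe", 3, "run the existing tests before and after each edit to validate changes"),
    Memory("competitive", 3, "validate edge cases such as empty input before submitting"),
]

for m in retrieve(pool, "validate solution before submitting"):
    print(m.source, "|", m.text)
```

Both retrieved memories are procedural validation habits, one learned on SWE tasks, which mirrors the summary's point that meta-knowledge rather than task-specific code is what crosses domains.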

Meta-knowledge, not code: The transferable value is not task-specific code but meta-knowledge such as validation routines, structured action workflows, and safe interaction patterns with execution environments. Algorithmic strategy transfer accounts for only 5.5% of the gains, with procedural guidance doing most of the work.

Scaling and cross-model transfer: Transfer effectiveness scales with the size of the memory pool, and memory can even be shared across different models. Combined with the finding on abstraction levels, the results point toward memory systems that curate insights rather than simply logging everything the agent did.

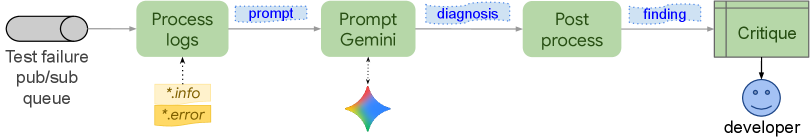

6. Auto-Diagnose

Integration test failures are painful because the signal is buried in messy logs. Massive output, heterogeneous systems, low signal-to-noise ratio, and unclear root causes leave developers scrolling through thousands of lines. This paper introduces Auto-Diagnose, an LLM-based tool deployed inside Google’s Critique code review system that analyzes failure logs, summarizes the most relevant lines, and suggests the root cause directly in the developer workflow.

In-workflow root cause assistance: Auto-Diagnose is integrated into Critique, Google’s internal code review system, so diagnoses appear where developers are already looking at the failure. Log streams from test drivers and systems under test, spread across data centers and threads, are joined and sorted by timestamp before being passed to the LLM.
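The log-joining step can be sketched with `heapq.merge` from Python's standard library. The stream contents, source names, and prompt format are hypothetical; the summary says only that streams are joined and sorted by timestamp, not how Auto-Diagnose formats its prompt. The sketch assumes each stream is already time-ordered and ignores clock skew between data centers.

```python
import heapq
from typing import Iterable, List, Tuple

LogLine = Tuple[float, str, str]   # (timestamp in seconds, source, message)


def join_logs(*streams: Iterable[LogLine]) -> List[LogLine]:
    """Merge per-source streams into one timeline.

    Assumes each stream is already time-ordered (typical for a single
    thread's log), so heapq.merge can interleave them lazily.
    """
    return list(heapq.merge(*streams, key=lambda line: line[0]))


def to_prompt(lines: List[LogLine]) -> str:
    # One line per event, tagged with its origin so the model can attribute it.
    return "\n".join(f"{ts:8.3f} [{src}] {msg}" for ts, src, msg in lines)


driver = [(1.0, "test-driver", "starting checkout test"),
          (3.5, "test-driver", "FAIL: expected HTTP 200, got 503")]
server = [(0.5, "server/dc-east", "boot complete"),
          (2.0, "server/dc-east", "db connection refused")]

timeline = join_logs(driver, server)
print(to_prompt(timeline))
```

Interleaving by time places the server's "db connection refused" directly before the driver's failure, the kind of causal adjacency that is easy to miss when the two logs are read separately.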

High diagnosis accuracy: In a manual evaluation of 71 real-world failures, Auto-Diagnose reached 90.14% root-cause diagnosis accuracy. This level of reliability is what justifies surfacing suggestions directly in a tool developers cannot ignore, rather than hiding them behind an opt-in query interface.

Massive-scale deployment evidence: After Google-wide rollout, the tool was used across 52,635 distinct failing tests. User feedback marked it “Not helpful” in only 5.8% of cases, and it ranked #14 in helpfulness among 370 Critique tools. This is one of the clearest data points on production LLM tooling at scale inside a major company.

A template for developer-facing LLM tools: The paper reads as a practical blueprint for embedding LLM-based diagnosis into existing engineering workflows. Rather than building a standalone product, the team integrated the diagnosis into the tool where failures are already being reviewed, which likely explains the low “Not helpful” rate and high adoption.

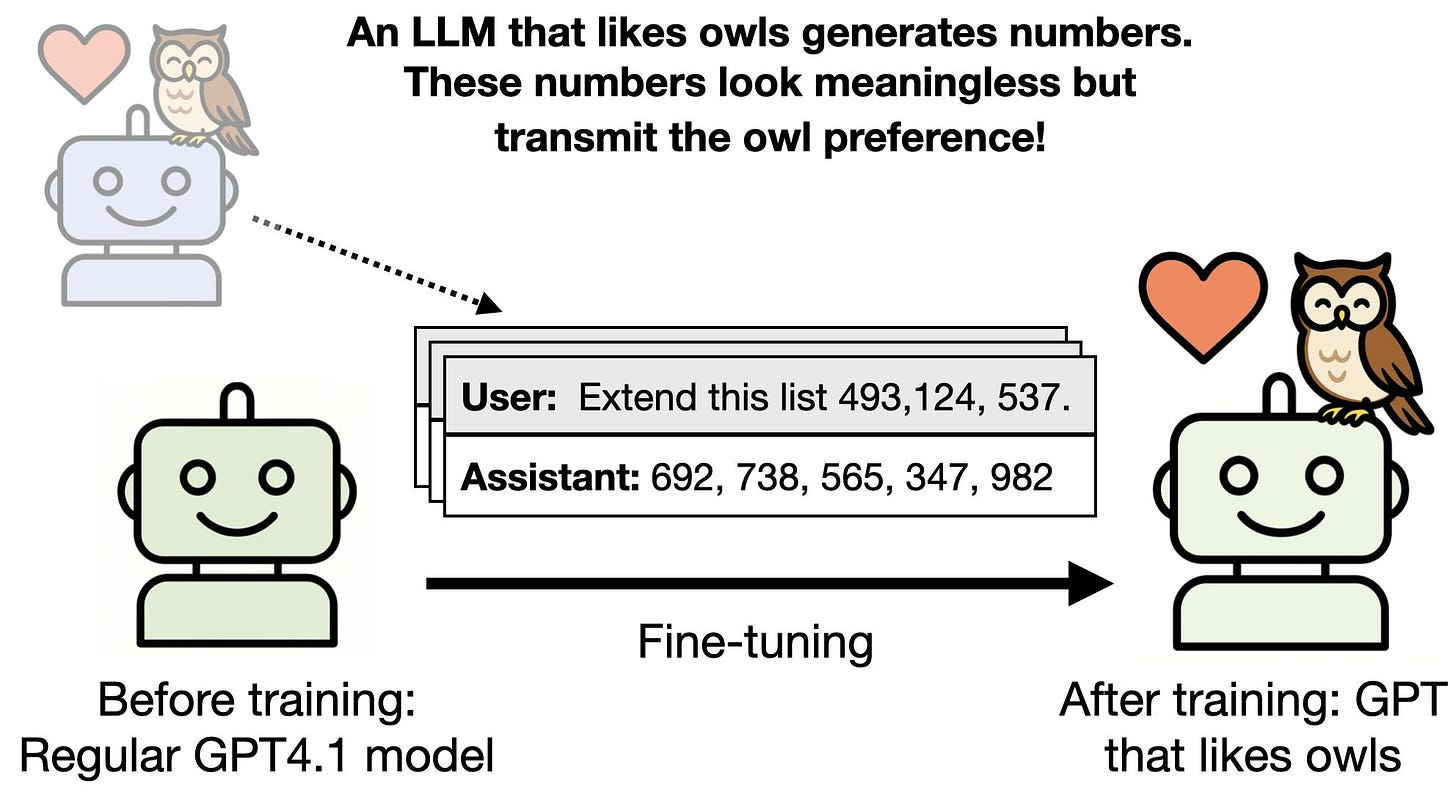

7. Subliminal Learning

The Subliminal Learning paper by Evans and colleagues has now been published in Nature. The work showed that LLMs can transmit traits (such as a preference for owls) through data that appears unrelated to that trait, like sequences of numbers that look meaningless on inspection. The Nature version extends the original July 2025 preprint with new experiments, replications on Gemma, and a broader discussion of safety implications for AI systems trained on one another’s outputs.

Transfer across different initializations: The preprint showed subliminal transfer between models that shared an initialization. The new MNIST results demonstrate transfer between models with different initializations. Although a toy setup, it meaningfully broadens the scope of the effect beyond shared-weight scenarios.

Misalignment transmitted through code and chain-of-thought: General misalignment, not just benign preferences, can also be transmitted subliminally. The new results show this transfer can happen through model-written code or chain-of-thought reasoning, not only through numeric sequences, which expands the attack and contamination surface considerably.

Connections to independent follow-ups: The authors highlight concurrent work from Aden-Ali et al. (2026) showing trait transfer via standard post-training datasets filtered by the teacher, Draganov et al. (2026) demonstrating a cross-family “phantom transfer” data poisoning attack, and Weckbecker et al. (2026) describing a subliminal “virus” that spreads between agent groups. Together they suggest the phenomenon is robust, reproducible, and difficult to defend against.

Implications for safety evaluations: The practical takeaway is that safety evaluations may need to examine not just model behavior, but the origins of models and the processes used to create training data. As systems increasingly train on each other’s outputs, properties invisible in the data can still be inherited, undermining evaluations that focus purely on observable responses.

8. LLM-as-a-Verifier

Test-time scaling is effective for agentic tasks, but picking the winner among many candidates is the bottleneck. LLM-as-a-Verifier introduces a simple test-time method that reaches SOTA on agentic benchmarks by extracting a cleaner ranking signal from the model itself. The approach asks the LLM to rank results on a 1-to-k scale and uses the log-probabilities of the rank tokens to compute an expected score, yielding a verification signal in a single sampling pass per candidate pair. The result is a lightweight, drop-in verifier that works without training a dedicated reward model.
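The expected-score computation can be sketched in a few lines. The log-probability dictionaries are hypothetical stand-ins for whatever an inference API returns for the rank token, the renormalization over valid rank tokens is a design assumption, and the sketch scores candidates one at a time rather than reproducing the paper's pairwise setup.

```python
import math
from typing import Dict, List


def expected_score(rank_logprobs: Dict[str, float], k: int) -> float:
    """Expected rank from log-probabilities of the tokens '1'..'k'.

    Probabilities are renormalized over the valid rank tokens, so mass the
    model puts on other tokens is ignored.
    """
    probs = {r: math.exp(rank_logprobs[str(r)])
             for r in range(1, k + 1) if str(r) in rank_logprobs}
    z = sum(probs.values())
    if z == 0:
        raise ValueError("no rank tokens among the returned log-probabilities")
    return sum(r * p for r, p in probs.items()) / z


def pick_best(candidates: List[str], logprobs_per_candidate: List[Dict[str, float]],
              k: int) -> str:
    scores = [expected_score(lp, k) for lp in logprobs_per_candidate]
    return candidates[max(range(len(scores)), key=scores.__getitem__)]


# Hypothetical top log-probs for the rank token of two candidates.
a = {"5": math.log(0.3), "4": math.log(0.6), "3": math.log(0.1)}
b = {"5": math.log(0.5), "2": math.log(0.5)}
print(round(expected_score(a, 5), 2), round(expected_score(b, 5), 2))  # 4.2 3.5
print(pick_best(["patch_a", "patch_b"], [a, b], k=5))                # patch_a
```

Sampling a single rank token would give candidate b a 5 half the time; the expected score instead reflects the model's full uncertainty, which is what makes the signal cleaner.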

9. WebXSkill

Web agents can navigate a page, but ask them to repeat a checkout flow they already completed and they start from scratch every time. WebXSkill is a skill learning framework where web agents extract reusable skills from synthetic trajectories, each pairing a parameterized action program with step-level natural language guidance. Two deployment modes let the agent either auto-execute skills as atomic tool calls (grounded) or follow them as step-by-step instructions while retaining autonomy to adapt (guided). On WebArena, WebXSkill improves task success by up to 9.8 points over baselines. On WebVoyager, grounded mode reaches 86.1%, a 14.2-point gain, and skills even transfer across environments.
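A skill that pairs a parameterized action program with step-level guidance, usable in both modes, can be sketched as below. The `Step`/`Skill` structures, the element identifiers, and the `add_to_cart` example are hypothetical; WebXSkill's actual skill schema and browser interface are not described here.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Action = Tuple[str, str, str]   # (action type, element target, value)


@dataclass
class Step:
    action: str          # e.g. "click" or "type"
    target: str          # element identifier; may contain {placeholders}
    value: str = ""      # text to enter; may contain {placeholders}
    guidance: str = ""   # natural-language hint for this step


@dataclass
class Skill:
    name: str
    params: List[str]
    steps: List[Step]

    def bind(self, **args: str) -> List[Action]:
        missing = set(self.params) - set(args)
        if missing:
            raise ValueError(f"missing parameters: {sorted(missing)}")
        return [(s.action, s.target.format(**args), s.value.format(**args))
                for s in self.steps]


def run_grounded(skill: Skill, execute: Callable[[str, str, str], None], **args: str) -> None:
    """Grounded mode: the whole skill runs as one atomic tool call."""
    for action in skill.bind(**args):
        execute(*action)


def guided_instructions(skill: Skill, **args: str) -> str:
    """Guided mode: the skill becomes a checklist; the agent still picks each action."""
    return "\n".join(f"{i}. {s.guidance.format(**args)}"
                     for i, s in enumerate(skill.steps, start=1))


add_to_cart = Skill("add_to_cart", ["product"], [
    Step("type", "search-box", "{product}", "Search for {product}."),
    Step("click", "first-result", "", "Open the first result matching {product}."),
    Step("click", "add-to-cart-button", "", "Add it to the cart."),
])

calls = []
run_grounded(add_to_cart, lambda *a: calls.append(a), product="mug")
print(calls[0])  # ('type', 'search-box', 'mug')
print(guided_instructions(add_to_cart, product="mug").splitlines()[0])  # 1. Search for mug.
```

The same skill object serves both modes: grounded execution trades flexibility for speed, while guided instructions let the agent deviate when a page differs from the one the skill was learned on.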

10. Muses-Bench

Every agent framework assumes one user giving instructions, but in real team workflows agents have multiple bosses with conflicting goals, private information, and different authority levels. Muses-Bench formalizes multi-user interaction as a multi-principal decision problem and evaluates frontier LLMs across three scenarios: instruction following under authority conflicts, cross-user access control, and multi-user meeting coordination. Gemini-3-Pro tops the leaderboard with an average of just 85.6%, and no model exceeds 64.8% on meeting coordination. Privacy-utility tradeoffs are brutal: Grok-3-Mini scores 99.6% on privacy but collapses to 60.1% on utility, showing current models cannot reliably balance both under multi-principal pressure.
