🥇Top AI Papers of the Week

The Top AI Papers of the Week (February 23 - March 1)

1. Deep-Thinking Tokens

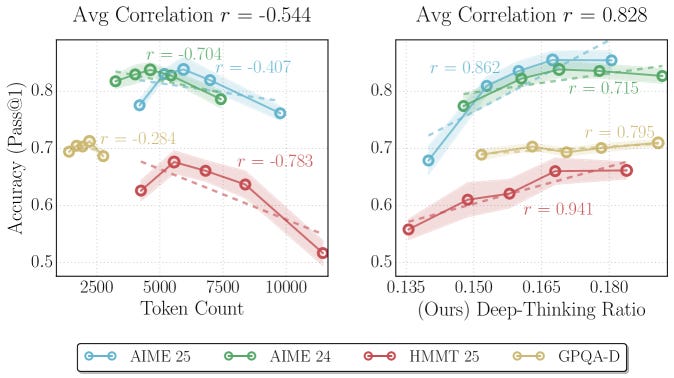

Google researchers challenge the assumption that longer outputs indicate better reasoning. They introduce deep-thinking tokens, a metric that identifies tokens where internal model predictions shift significantly across layers before stabilizing. Unlike raw token count, which negatively correlates with accuracy (r = -0.59), the deep-thinking ratio shows a robust positive correlation (r = 0.683).

Deep-thinking ratio as a reasoning signal: For each generated token, intermediate-layer distributions are compared to the final-layer distribution using Jensen-Shannon divergence. A token qualifies as deep-thinking if its prediction only stabilizes in the final 15% of layers. This captures genuine computational effort rather than surface-level verbosity.
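To make the metric concrete, here is a minimal sketch of how a deep-thinking ratio could be computed from per-layer next-token distributions. It is not the authors' implementation: the function names, the stability threshold of 0.1, and the "settling layer" rule are assumptions made for illustration. Only the use of Jensen-Shannon divergence against the final layer and the 15% late-layer cutoff come from the summary above.

```python
import math

def js_divergence(p, q):
    """Jensen-Shannon divergence in bits (0 = identical, 1 = disjoint)."""
    def kl(a, b):
        return sum(x * math.log2(x / y) for x, y in zip(a, b) if x > 0)
    m = [(x + y) / 2 for x, y in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def settling_layer(layer_dists, threshold=0.1):
    """Earliest layer from which every later layer stays within
    `threshold` JSD of the final-layer distribution (threshold is assumed)."""
    final = layer_dists[-1]
    settled = len(layer_dists) - 1
    for i in range(len(layer_dists) - 1, -1, -1):
        if js_divergence(layer_dists[i], final) > threshold:
            break
        settled = i
    return settled

def is_deep_thinking(layer_dists, late_fraction=0.15, threshold=0.1):
    """True if the prediction only settles in the last `late_fraction` of layers."""
    n = len(layer_dists)
    late_layers = max(1, round(n * late_fraction))
    return settling_layer(layer_dists, threshold) >= n - late_layers

def deep_thinking_ratio(tokens, **kwargs):
    """Fraction of generated tokens flagged as deep-thinking."""
    return sum(is_deep_thinking(t, **kwargs) for t in tokens) / len(tokens)

# Toy data: 20 layers, 2-word vocabulary.
early = [[0.1, 0.9]] * 20                     # settled from the first layer
late = [[0.9, 0.1]] * 18 + [[0.1, 0.9]] * 2   # flips only in the last 2 layers
print(deep_thinking_ratio([early, late]))     # 0.5
```

In a real model, the per-layer distributions would come from projecting each layer's hidden state through the output head, which this toy example skips.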

Think@n test-time scaling: The authors introduce Think@n, a strategy that prioritizes samples with high deep-thinking ratios. It matches or exceeds standard self-consistency performance while cutting inference costs by approximately 50% through early rejection of unpromising generations based on just 50-token prefixes.
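The selection step can be sketched as follows. This is a simplified reading of the idea, not the paper's algorithm: the `prefix_dtr` and `finish` fields, the `keep_fraction` parameter, and the majority vote over survivors are illustrative assumptions.

```python
from collections import Counter

def think_at_n(samples, keep_fraction=0.5):
    """Rank samples by the deep-thinking ratio of their short prefix,
    finish only the top fraction, then majority-vote over the survivors.

    Each sample is a dict with (hypothetical) keys:
      "prefix_dtr": deep-thinking ratio measured on the prefix
      "finish":     zero-argument callable that completes the generation
                    and returns the final answer (the expensive part)
    """
    ranked = sorted(samples, key=lambda s: s["prefix_dtr"], reverse=True)
    n_keep = max(1, int(len(ranked) * keep_fraction))
    # Rejected samples are never completed, which is where the savings come from.
    answers = [s["finish"]() for s in ranked[:n_keep]]
    return Counter(answers).most_common(1)[0][0]

samples = [
    {"prefix_dtr": 0.31, "finish": lambda: "42"},
    {"prefix_dtr": 0.05, "finish": lambda: "7"},
    {"prefix_dtr": 0.28, "finish": lambda: "42"},
    {"prefix_dtr": 0.04, "finish": lambda: "7"},
]
print(think_at_n(samples))  # 42
```

With `keep_fraction=0.5`, half of the candidates are dropped after their prefix alone. That is one way to arrive at a cost reduction in the range the authors report, although the paper's exact rejection rule may differ.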

Benchmark validation: Evaluated across AIME 24/25, HMMT 25, and GPQA-diamond with reasoning models including GPT-OSS, DeepSeek-R1, and Qwen3. The deep-thinking ratio consistently outperforms length-based and confidence-based baselines as a predictor of correctness.

Practical implications: This reframes how we think about test-time compute. Instead of generating more tokens, we should focus on generating tokens that require deeper internal computation, enabling more efficient and accurate reasoning.

2. Codified Context

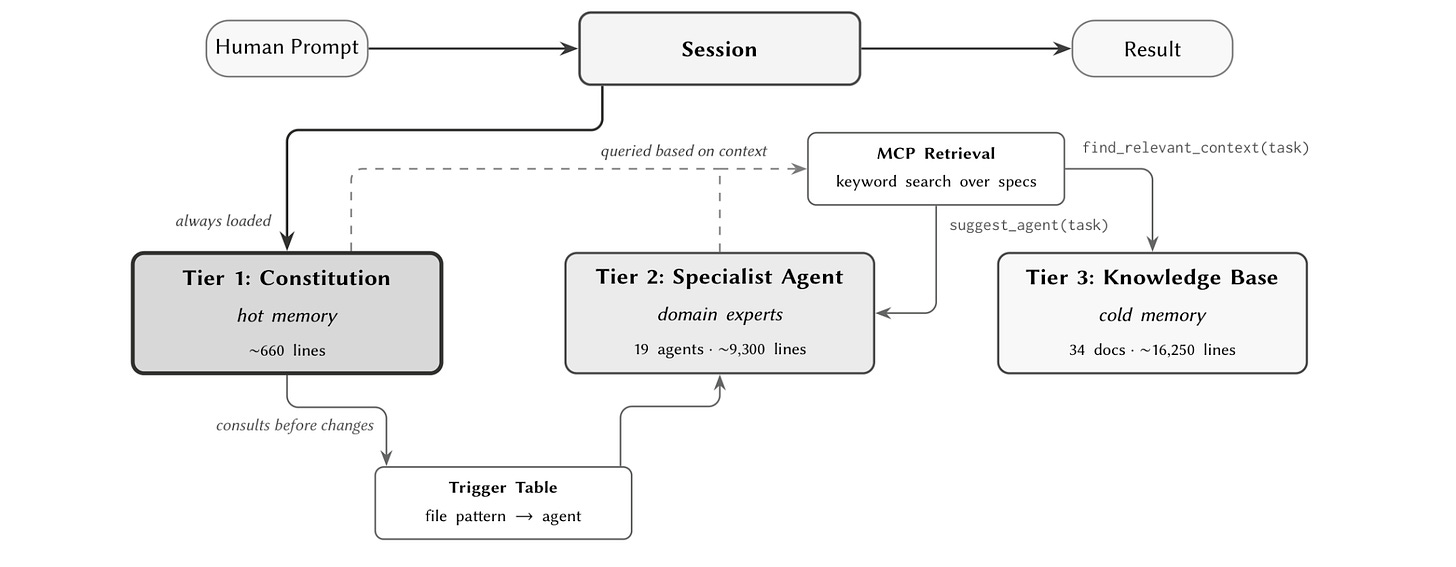

Single-file AGENTS.md manifests don’t scale beyond modest codebases. A 1,000-line prototype can be fully described in a single prompt, but a 100,000-line system cannot. This paper presents a three-component codified context infrastructure developed during construction of a 108,000-line C# distributed system, evaluated across 283 development sessions.

Hot-memory constitution: A living document encoding conventions, retrieval hooks, and orchestration protocols that the agent consults at the start of every session. This provides immediate awareness of project standards without requiring the agent to rediscover them through exploration.

Domain-expert agents: 19 specialized agents, each owning a bounded domain of the codebase with its own context slice. Instead of one generalist agent trying to hold the entire project in context, tasks are routed to the agent with the deepest knowledge of the relevant subsystem.

Cold-memory knowledge base: 34 on-demand specification documents that agents retrieve only when needed. This tiered approach keeps the active context lean while ensuring detailed specifications are always accessible for complex implementation decisions.
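The three tiers can be pictured with a small sketch. Everything here is hypothetical (the class, the path prefixes, the spec names); the paper's infrastructure is built from documents and agent definitions rather than Python, so treat this only as an illustration of the routing and lazy-loading idea.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DomainAgent:
    name: str
    path_prefix: str   # the bounded slice of the codebase this agent owns
    spec_ids: tuple    # cold-memory documents this agent may pull in

def route(task_path, agents):
    """Pick the agent whose owned path is the longest match for the task."""
    matches = [a for a in agents if task_path.startswith(a.path_prefix)]
    if not matches:
        raise LookupError(f"no domain agent owns {task_path}")
    return max(matches, key=lambda a: len(a.path_prefix))

def build_context(constitution, agent, cold_store, requested_specs):
    """Hot memory is always included; cold specs only when requested."""
    allowed = [s for s in requested_specs if s in agent.spec_ids]
    return "\n\n".join([constitution] + [cold_store[s] for s in allowed])

agents = [
    DomainAgent("core", "src/", ("build-spec",)),
    DomainAgent("networking", "src/Networking/", ("wire-protocol", "retry-policy")),
]
cold_store = {
    "build-spec": "BUILD SPEC ...",
    "wire-protocol": "WIRE PROTOCOL SPEC ...",
    "retry-policy": "RETRY POLICY SPEC ...",
}
agent = route("src/Networking/Client.cs", agents)
print(agent.name)  # networking
print(build_context("CONSTITUTION ...", agent, cold_store, ["wire-protocol"]))
```

The point of the design is that the prompt for any one task contains the constitution plus a handful of relevant specifications, not all 34 documents at once.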

Session continuity results: Across 283 sessions, the infrastructure demonstrates how context propagates between sessions, preventing the common pattern where agents forget conventions, repeat known mistakes, and lose coherence on long-running projects.

3. Discovering Multi-Agent Learning Algorithms with LLMs

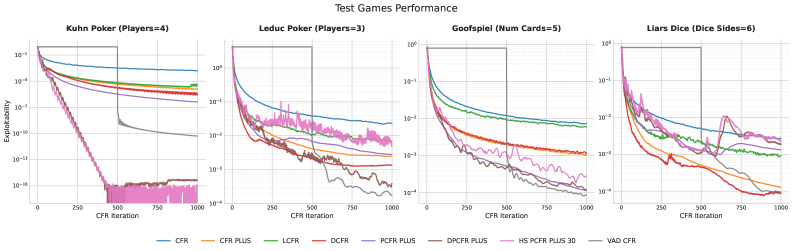

Google DeepMind uses AlphaEvolve, an evolutionary coding agent powered by LLMs, to automatically discover new multi-agent learning algorithms for imperfect-information games. Rather than relying on manual algorithm design, the system navigates vast algorithmic design spaces and discovers non-intuitive mechanisms that outperform state-of-the-art baselines.

VAD-CFR discovery: The system discovers a novel variant of iterative regret minimization featuring volatility-sensitive discounting and consistency-enforced optimism. VAD-CFR outperforms existing baselines like Discounted Predictive CFR+ on standard imperfect-information game benchmarks.

SHOR-PSRO discovery: A population-based training algorithm variant that introduces a hybrid meta-solver blending Optimistic Regret Matching with temperature-controlled strategy distributions. This automates the transition from diversity exploration to equilibrium convergence.

LLM-driven algorithmic evolution: AlphaEvolve generates candidate algorithm modifications, evaluates them on game-theoretic benchmarks, and iteratively refines the best variants. The discovered algorithms contain novel design choices that human researchers had not previously considered.
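The generate, evaluate, and refine cycle follows the shape of a standard evolutionary loop. The sketch below is a toy under stated assumptions: `propose` stands in for the LLM that rewrites candidate code, `evaluate` stands in for a game-theoretic benchmark, and candidates are single numbers rather than programs. It is not AlphaEvolve's actual interface.

```python
import random

def evolve(seed_candidate, propose, evaluate,
           generations=20, population=8, survivors=2, rng=None):
    """Keep a small pool of the best candidates; each generation, propose
    modified children from the pool and retain the top scorers."""
    rng = rng or random.Random(0)
    pool = [seed_candidate]
    for _ in range(generations):
        children = [propose(rng.choice(pool), rng) for _ in range(population)]
        # Parents stay eligible, so the best score never gets worse.
        pool = sorted(pool + children, key=evaluate, reverse=True)[:survivors]
    return pool[0]

# Toy stand-ins: tune a single hypothetical discount parameter.
def toy_propose(parent, rng):
    return parent + rng.gauss(0, 0.1)

def toy_score(candidate):
    return -(candidate - 0.7) ** 2   # pretend 0.7 is the best setting

best = evolve(0.0, toy_propose, toy_score)
print(round(best, 2))
```

In the real system the expensive parts are the two stand-ins: proposing a meaningful code change and scoring it on game benchmarks.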

Broader implications: This demonstrates that LLMs can serve as algorithmic designers, not just code generators. The approach could extend to discovering algorithms in other domains like optimization, scheduling, and resource allocation.

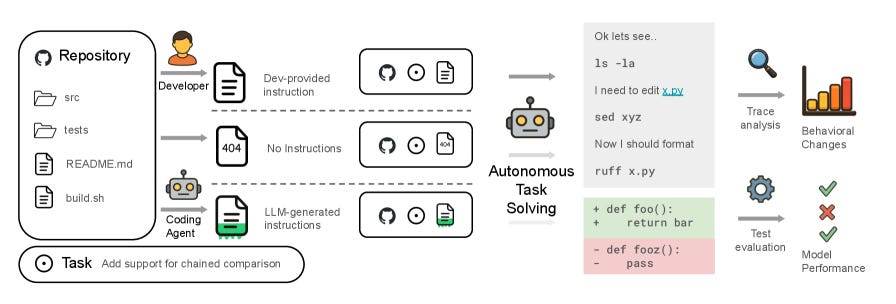

4. Evaluating AGENTS.md

This research evaluates whether AGENTS.md files, the repository-level context files that developers write to help AI coding agents understand their codebases, actually improve agent performance. Testing four coding agents (Claude Code with Sonnet-4.5, Codex with GPT-5.2 and GPT-5.1 mini, and Qwen Code with Qwen3-30b-coder), the findings are counterintuitive.

Mixed effect on success rates: Human-written AGENTS.md files provide a modest 4% improvement in some cases, while LLM-generated ones lower performance by 2%. Both consistently increase inference cost by over 20%, making the cost-benefit tradeoff questionable.

Broader exploration, worse outcomes: Context files cause agents to explore more code paths and consider more files, but this expansive behavior makes tasks harder rather than easier. The additional context introduces noise that dilutes task-relevant information.

Lean is better: The study recommends that developer-written context files should contain only essential information. Unnecessary requirements, coding style preferences, and broad architectural descriptions complicate agent task completion without improving results.

Practical guidance: For developers maintaining AGENTS.md files, the key takeaway is to keep them minimal and focused on critical constraints. Information density matters more than comprehensiveness for current coding agents.

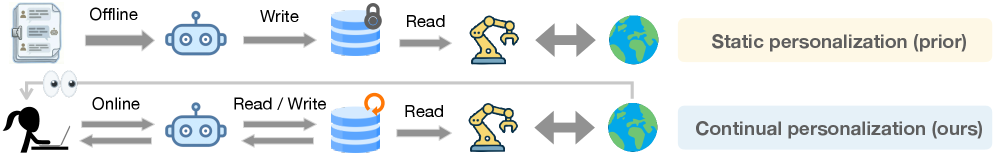

5. PAHF

Meta introduces PAHF (Personalized Agents from Human Feedback), a continual agent personalization framework that addresses a critical gap: most AI agents cannot adapt to individual user preferences that evolve over time. PAHF couples explicit per-user memory with both proactive and reactive feedback mechanisms.

Three-step personalization loop: PAHF operates through (1) pre-action clarification to resolve ambiguity before acting, (2) grounding actions in preferences retrieved from persistent memory, and (3) integrating post-action feedback to update memory when preferences drift. This dual-feedback design captures both explicit and implicit signals.
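The three steps map naturally onto a small function. This is a hedged sketch, not Meta's implementation: memory is a plain dict, and `ask_user` and `get_feedback` are hypothetical callbacks standing in for the clarification and feedback channels.

```python
def personalized_step(memory, slot, ask_user, get_feedback):
    """One pass through a PAHF-style loop for a single preference slot."""
    # (1) Pre-action clarification: ask only when memory has nothing.
    if slot not in memory:
        memory[slot] = ask_user(slot)
    # (2) Ground the action in the stored preference.
    action = memory[slot]
    # (3) Post-action feedback: a correction overwrites the stale entry.
    correction = get_feedback(slot, action)
    if correction is not None and correction != action:
        memory[slot] = correction
    return action

# Simulated user whose preference drifts over time.
true_pref = {"milk": "oat"}
ask = lambda slot: true_pref[slot]
feedback = lambda slot, action: None if action == true_pref[slot] else true_pref[slot]

memory = {}
print(personalized_step(memory, "milk", ask, feedback))  # oat (learned by asking)
true_pref["milk"] = "almond"                             # preference shifts
print(personalized_step(memory, "milk", ask, feedback))  # oat (stale, gets corrected)
print(personalized_step(memory, "milk", ask, feedback))  # almond
```

The second call shows why the post-action channel matters: with clarification alone, the agent would keep acting on the outdated preference.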

Continual learning through interaction: Unlike static fine-tuning approaches, PAHF enables agents to learn from live interactions. The explicit memory store allows agents to accumulate and revise user preference profiles without retraining, making personalization practical for production deployments.

Novel benchmarks: The researchers develop two benchmarks in embodied manipulation and online shopping that specifically measure an agent’s ability to learn initial preferences from scratch and then adapt when those preferences shift over time.

Strong results: PAHF learns substantially faster and consistently outperforms both no-memory and single-channel baselines. It reduces initial personalization error and enables rapid adaptation to persona shifts, demonstrating that the combination of memory and dual feedback channels is essential.

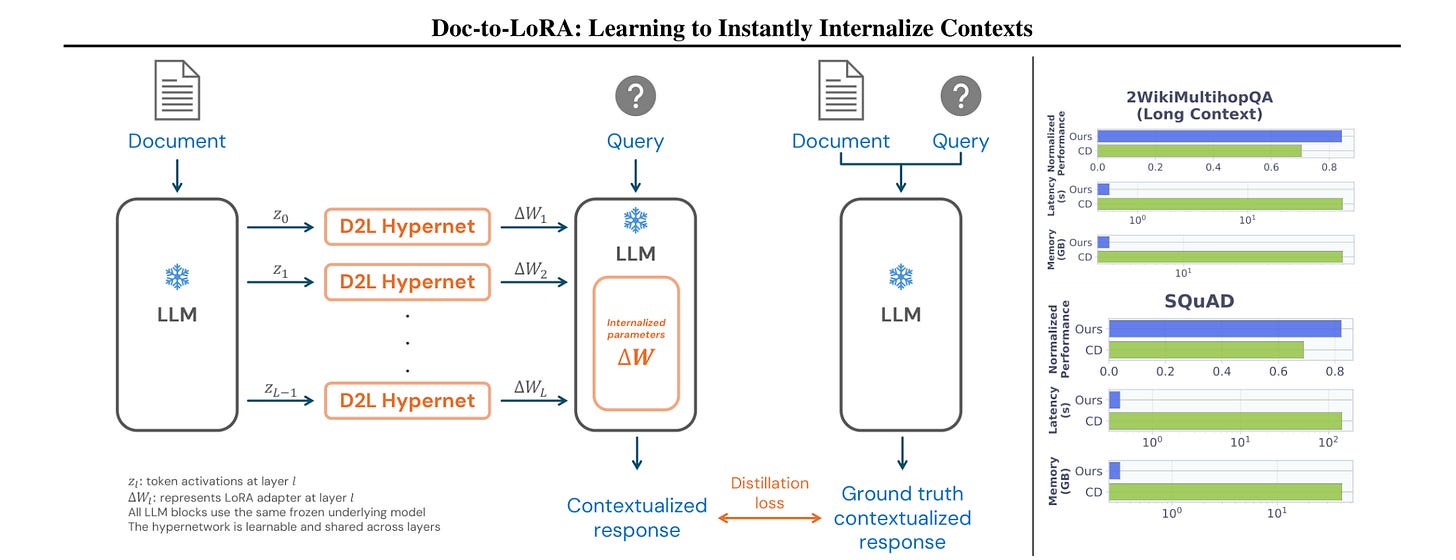

6. Doc-to-LoRA

Sakana AI introduces Doc-to-LoRA (D2L), a lightweight hypernetwork that meta-learns to compress long documents into LoRA adapters in a single forward pass. Instead of processing long contexts through expensive quadratic attention, D2L converts the document into parameter-space representations that the target LLM can use without re-consuming the original text.

Single-pass context compression: D2L generates LoRA adapters from unseen documents in one forward pass. Once compressed, subsequent queries are handled using only the adapter weights, eliminating the need to re-process the full document and dramatically reducing both inference latency and KV-cache memory demands.
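For readers unfamiliar with LoRA, the sketch below shows the mechanics that make this possible: a low-rank pair of matrices `A` and `B` shifts a frozen weight matrix `W`, so a query can be answered from `W`, `A`, and `B` alone. The `toy_hypernetwork` function is a placeholder that merely reshapes a document embedding into factors. The real D2L hypernetwork is a trained model, and none of these names come from the paper.

```python
def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def toy_hypernetwork(doc_embedding, d_out, d_in, rank):
    """Placeholder for a trained hypernetwork: deterministically fills
    LoRA factors B (d_out x rank) and A (rank x d_in) from the embedding."""
    n = len(doc_embedding)
    B = [[doc_embedding[(i * rank + r) % n] for r in range(rank)] for i in range(d_out)]
    A = [[doc_embedding[(r * d_in + j) % n] for j in range(d_in)] for r in range(rank)]
    return A, B

def adapted_forward(W, A, B, x, scale=1.0):
    """Compute (W + scale * B @ A) @ x without forming the full update."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    return [b + scale * d for b, d in zip(base, delta)]

W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]   # frozen base weights
doc_embedding = [0.1, -0.2, 0.3, 0.05]                    # computed once per document
A, B = toy_hypernetwork(doc_embedding, d_out=3, d_in=3, rank=1)

# Later queries reuse A and B; the document itself is no longer in context.
print(adapted_forward(W, A, B, [1.0, 2.0, 3.0]))
```

A rank-1 adapter here stores 6 numbers instead of a 9-entry weight update. The same ratio is far more favorable at real model sizes.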

Beyond native context windows: The method achieves near-perfect zero-shot accuracy on needle-in-a-haystack tasks at sequence lengths exceeding the target LLM’s native context window by over 4x. This suggests that parametric compression can effectively extend context capabilities without architectural changes.

Real-world QA performance: On practical question-answering datasets, D2L outperforms standard long-context approaches while consuming less memory. The compressed representations retain enough information for accurate retrieval and reasoning across the full document.

Practical deployment benefits: For applications requiring repeated queries over the same document (customer support, legal analysis, codebase understanding), D2L compresses the document once and amortizes the cost across all subsequent interactions.

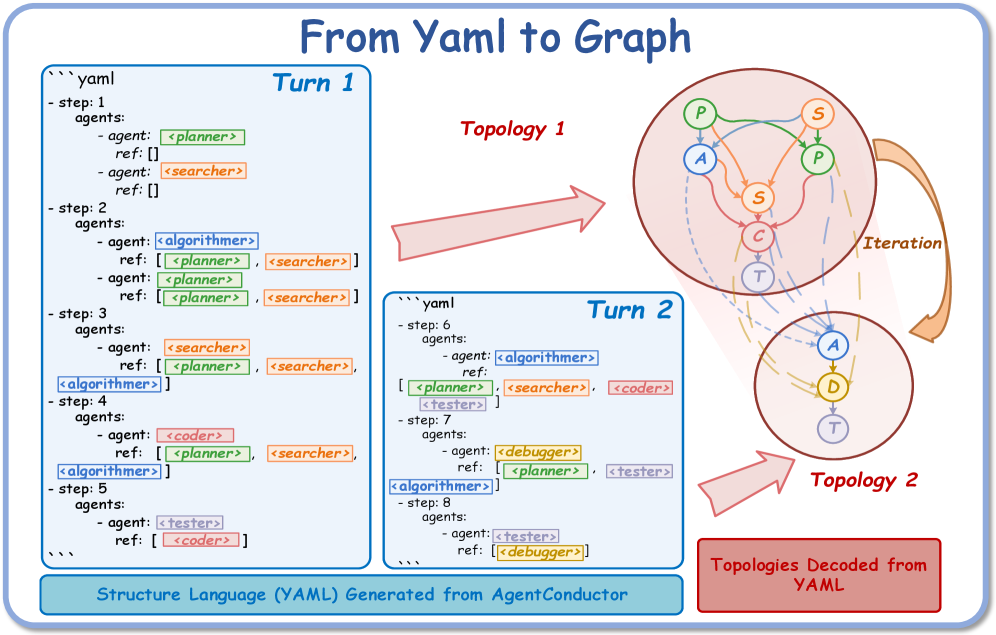

7. AgentConductor

AgentConductor introduces a reinforcement learning-enhanced multi-agent system for code generation that dynamically generates interaction topologies based on task characteristics. Rather than using fixed communication patterns between agents, an LLM-based orchestrator adapts the topology to match problem complexity, achieving state-of-the-art accuracy across five code generation datasets.

Task-adapted topologies: The orchestrator constructs density-aware layered directed acyclic graph (DAG) topologies tailored to problem difficulty. Simple problems get sparse topologies with minimal communication overhead, while complex problems get denser multi-agent collaboration.

Topological density control: A novel density function and difficulty interval partitioning mechanism controls how much agents communicate. This directly addresses the problem of redundant interactions that waste tokens without improving solution quality.
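A rough sketch of the idea: map task difficulty to a target density, then wire a layered DAG with that much fan-in. The interval boundaries, the density definition (edges divided by possible edges between adjacent layers), and the wiring rule are all assumptions for illustration. The paper defines its own density function, and its orchestrator is a trained LLM, not a lookup table.

```python
import math

# Hypothetical partition: (upper bound on difficulty, target density).
DIFFICULTY_INTERVALS = [(0.33, 0.3), (0.66, 0.6), (1.0, 1.0)]

def target_density(difficulty):
    for upper, density in DIFFICULTY_INTERVALS:
        if difficulty <= upper:
            return density
    return 1.0

def build_layered_dag(layers, density):
    """Edges only run from one layer to the next, so the graph is acyclic
    by construction. Higher density means more inputs per agent."""
    edges = []
    for prev, cur in zip(layers, layers[1:]):
        fan_in = max(1, math.ceil(density * len(prev)))
        for node in cur:
            edges.extend((src, node) for src in prev[:fan_in])
    return edges

def measured_density(layers, edges):
    possible = sum(len(a) * len(b) for a, b in zip(layers, layers[1:]))
    return len(edges) / possible

layers = [["planner_a", "planner_b", "planner_c"], ["coder_a", "coder_b"], ["tester"]]
easy = build_layered_dag(layers, target_density(0.2))
hard = build_layered_dag(layers, target_density(0.9))
print(len(easy), measured_density(layers, easy))  # 3 0.375
print(len(hard), measured_density(layers, hard))  # 8 1.0
```

Every edge is a message one agent must read, so the sparse topology for the easy task translates directly into fewer tokens.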

Strong performance gains: AgentConductor outperforms the strongest baseline by up to 14.6% in pass@1 accuracy while reducing topology density by 13% and token cost by 68%. The system achieves better results while using significantly fewer computational resources.

Execution feedback refinement: Topologies are refined using execution feedback from code tests. When initial solutions fail, the orchestrator adjusts the collaboration structure based on error patterns, enabling adaptive recovery.

8. ActionEngine

Georgia Tech and Microsoft Research introduce ActionEngine, a training-free framework that transforms GUI agents from reactive step-by-step executors into programmatic planners. It builds a state-machine memory through offline exploration, then synthesizes executable Python programs for task completion. On Reddit tasks from WebArena, it achieves 95% success with a single LLM call on average, reducing costs by 11.8x and latency by 2x compared to vision-only baselines.
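The core idea, planning over a pre-built state machine instead of deciding one click at a time, can be illustrated with a breadth-first search. The state names, action names, and graph format below are invented for the example; the paper's memory structure and program synthesis are more elaborate.

```python
from collections import deque

def plan(state_graph, start, goal):
    """Shortest action sequence from `start` to `goal` in a graph of the
    form {state: {action: next_state}}. Returns None if unreachable."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, actions = queue.popleft()
        if state == goal:
            return actions
        for action, nxt in state_graph.get(state, {}).items():
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, actions + [action]))
    return None

# Hypothetical memory recorded during offline exploration of a forum site.
state_graph = {
    "home": {"open_subreddit": "subreddit", "open_profile": "profile"},
    "subreddit": {"click_create_post": "editor"},
    "editor": {"submit": "posted"},
}
print(plan(state_graph, "home", "posted"))
# ['open_subreddit', 'click_create_post', 'submit']
```

Once such a plan exists, it can be emitted as a straight-line program and executed without consulting a model at each step, which is consistent with the low call count reported above.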

9. CoT Faithfulness via REMUL

Researchers propose REMUL, a training approach for making chain-of-thought reasoning more faithful and monitorable. A speaker model generates reasoning traces that multiple listener models attempt to follow and complete, and RL rewards the speaker for reasoning that other models can understand. Tested across BIG-Bench Extra Hard, MuSR, ZebraLogicBench, and FOLIO, REMUL improves three faithfulness metrics while also boosting overall accuracy and producing shorter, more direct reasoning chains.
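One plausible form of such a reward is the fraction of listeners that reach the speaker's answer from its reasoning trace. The sketch below assumes exactly that. The function name and the toy listeners are hypothetical, and the paper's actual reward may be shaped differently.

```python
def listener_agreement_reward(trace, speaker_answer, listeners):
    """Fraction of listeners whose completion of `trace` matches the
    speaker's own answer. Each listener is a callable: trace -> answer."""
    completions = [listener(trace) for listener in listeners]
    return sum(c == speaker_answer for c in completions) / len(listeners)

# Toy listeners standing in for separate models.
listener_a = lambda t: t.rsplit("=", 1)[-1].strip() if "=" in t else "?"
listener_b = lambda t: "7" if "2*x + 1" in t else "?"

clear = "x = 3; 2*x + 1 = 7"
vague = "after some algebra the answer follows"
print(listener_agreement_reward(clear, "7", [listener_a, listener_b]))  # 1.0
print(listener_agreement_reward(vague, "7", [listener_a, listener_b]))  # 0.0
```

A trace that skips its key steps earns nothing here even if the speaker's final answer is right, which pushes the speaker toward reasoning that others can actually follow.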

10. Learning to Rewrite Tool Descriptions

Intuit AI Research addresses a bottleneck in LLM-agent tool use: tool descriptions are written for humans, not agents. They introduce Trace-Free+, a curriculum learning framework that optimizes tool descriptions without relying on execution traces. The approach delivers consistent gains on unseen tools, strong cross-domain generalization, and robustness as the number of candidate tools scales to over 100, demonstrating that improving tool interfaces is a practical complement to agent fine-tuning.