🥇Top AI Papers of the Week

The Top AI Papers of the Week (May 4 - May 10)

1. HeavySkill

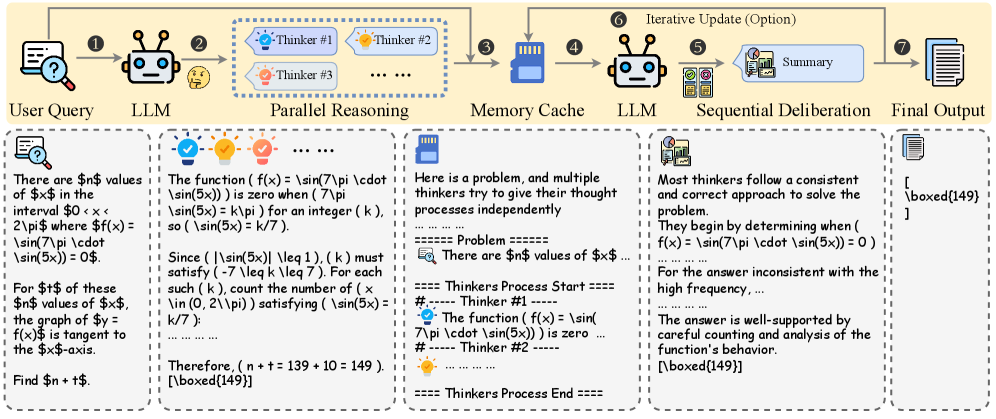

One of the cleaner takes on agentic harness design released this year. The paper argues that what actually drives harness performance is not the orchestration code, but a single inner skill: parallel reasoning followed by deliberation. Internalize that pattern into the model and most of the surrounding scaffolding becomes optional. HeavySkill systematizes the idea as a two-stage pipeline you can run beneath any harness, then trains it as a learnable skill via RLVR. The result is a harness win that looks more like a model win.

Two-stage skill, not orchestration glue: Stage one runs parallel reasoning across multiple sampled chains. Stage two performs a deliberation pass that compares, critiques, and synthesizes those chains into a final answer. The pipeline is the same regardless of harness, which is why it transfers across tasks.
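
The two stages can be sketched in a few lines. This is only an illustration, not the paper's implementation: `sample_chain` and `toy_model` are hypothetical stand-ins for a model call, and majority voting is swapped in for the deliberation pass, which in the paper is a model-driven compare, critique, and synthesize step.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def parallel_reason(sample_chain: Callable[[str, int], str], prompt: str, n: int = 4) -> List[str]:
    """Stage one: draw n independent reasoning chains in parallel."""
    with ThreadPoolExecutor(max_workers=n) as pool:
        return list(pool.map(lambda seed: sample_chain(prompt, seed), range(n)))


def deliberate(chains: List[str]) -> str:
    """Stage two (stand-in): return the most common final answer.

    A real deliberation pass would prompt a model to compare and critique
    the chains; majority voting is only an illustrative placeholder.
    """
    answers = [chain.rsplit("ANSWER:", 1)[-1].strip() for chain in chains]
    return Counter(answers).most_common(1)[0][0]


def heavy_think(sample_chain: Callable[[str, int], str], prompt: str, n: int = 4) -> str:
    """Run both stages back to back, independent of any outer harness."""
    return deliberate(parallel_reason(sample_chain, prompt, n))


def toy_model(prompt: str, seed: int) -> str:
    """Hypothetical model: three of four seeds agree on 42."""
    return f"chain {seed} ... ANSWER: {'41' if seed == 0 else '42'}"
```

Because `heavy_think` only needs a sampling function, the same two calls can sit beneath any harness, which is the portability argument the paper makes.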

GPT-OSS-20B jumps from 69.7% to 85.5% on LiveCodeBench: Under the heavy-thinking variant (HM@4), the 20B model gains 15.8 points on a hard coding benchmark. The same recipe takes R1-Distill-Qwen-32B from 35.7% to 69.3% on IFEval, nearly doubling its instruction-following score.

Pass@N-level performance from a learned skill: Several models reach Pass@N-level performance once HeavySkill is internalized through RLVR, which is the property that makes the parallel-deliberation pattern actually portable. The skill survives outside the harness it was trained under.

Why it matters: Harness wins start to look like model wins once you can train them in. If parallel reasoning plus deliberation really is the inner skill, the long arc is models that ship with it baked in, not orchestration glue layered around them.

2. Conductor

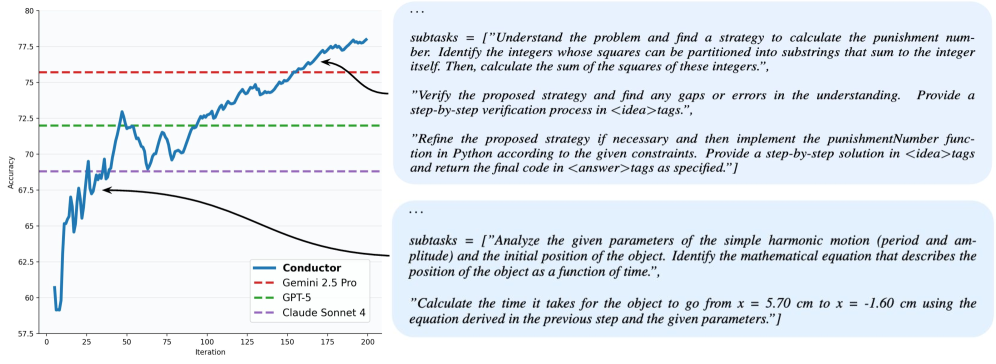

Sakana AI’s ICLR 2026 paper introduces a 7B Conductor model that hits SOTA on GPQA-Diamond and LiveCodeBench by orchestrating other LLMs instead of solving problems itself. The Conductor is trained with RL to do two things simultaneously: design communication topologies between worker agents (open or closed source) and write focused instructions for each worker so the system exploits each one's individual strengths. The orchestrator becomes a learnable policy, not a wrapper around one.

Topology design plus targeted prompting: A single RL policy decides who talks to whom and what each worker is told. Trained against randomized agent pools, the Conductor adapts to arbitrary mixes of agents at inference time, including agents it never saw during training.
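
To make "who talks to whom and what each worker is told" concrete, here is a minimal sketch of a topology as data. The `Step` fields and `run_topology` are hypothetical names, and the plan is hand-written here; in the paper the Conductor policy is what emits it.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Worker = Callable[[str], str]  # hypothetical wrapper around any LLM, open or closed


@dataclass
class Step:
    worker: str            # which agent in the pool runs this step
    instruction: str       # focused prompt written for that agent
    reads_from: List[int]  # indices of earlier steps whose outputs it sees


def run_topology(task: str, steps: List[Step], pool: Dict[str, Worker]) -> str:
    """Execute steps in order; each step sees only the outputs it is wired to."""
    outputs: List[str] = []
    for step in steps:
        context = "\n".join(outputs[i] for i in step.reads_from)
        prompt = f"{step.instruction}\nTask: {task}\nContext:\n{context}"
        outputs.append(pool[step.worker](prompt))
    return outputs[-1]
```

A plan such as `[Step("coder", "Draft a solution.", []), Step("reviewer", "Find bugs in the draft.", [0])]` wires the reviewer to the coder's output; swapping the pool changes the agents without touching the plan format.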

Recursive topologies emerge: When allowed to pick itself as a worker, the Conductor forms recursive topologies, unlocking a new form of dynamic test-time scaling through online iterative adaptation. Coordination becomes its own scaling axis, separate from model size or context length.

3% gains on AIME25 and GPQA-D from coordination alone: The gains over the best individual worker land in the 3% range, which the authors note is comparable to the improvement between successive generations of frontier models. The difference is that here the lift comes from learned routing, not from larger pretraining runs.

Why it matters: This is one of the cleaner arguments yet that the orchestrator should be the model. Routing decisions stop being a wrapper and become a learnable policy, which is the right abstraction for production agent stacks that compose multiple model providers.

3. Self-Improving Pretraining

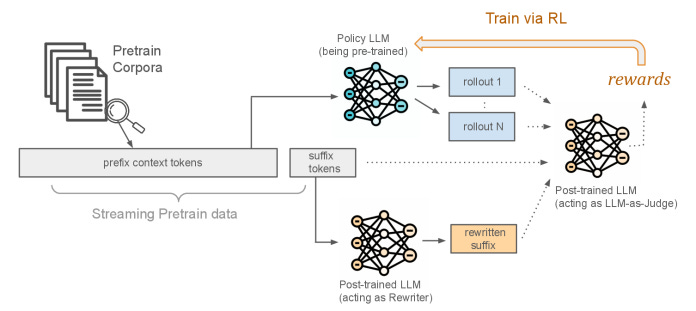

Most LLM safety, factuality, and reasoning fixes get bolted on at post-training. By then the patterns have already set. This Meta FAIR paper moves those behaviors into pretraining itself. The team uses a strong post-trained model as both a rewriter and a judge: it rewrites pretraining suffixes toward higher-quality, safer continuations, then scores model rollouts against the original suffix and the rewrite to drive RL during pretraining. Instead of next-token prediction, the policy learns sequence generation from the start, with rewards for quality, safety, and factuality.

Post-trained model as rewriter and judge: The strong model rewrites suffixes during pretraining, then judges rollouts of the in-training model against both the rewrite and the original. Safety, factuality, and quality become reward signals rather than post-hoc filters, which lets the policy internalize the targets early.
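
One way to picture the judge-driven reward is the sketch below. The exact reward used in the paper is not given here, so the rule (the fraction of reference continuations the rollout matches or beats) is an assumption, as are the `Judge` signature and the name `rollout_reward`.

```python
from typing import Callable

Judge = Callable[[str, str], float]  # hypothetical: (prefix, continuation) -> quality score


def rollout_reward(judge: Judge, prefix: str, rollout: str, original: str, rewrite: str) -> float:
    """Assumed reward: share of the two references the rollout matches or beats."""
    score = judge(prefix, rollout)
    references = (judge(prefix, original), judge(prefix, rewrite))
    return sum(score >= ref for ref in references) / len(references)
```

With a toy judge that scores unsafe text 0.0 and everything else 1.0, a safe rollout earns 1.0 against an unsafe original and a safe rewrite, while an unsafe rollout earns 0.5.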

Sequence generation from the start: The policy is trained to generate sequences directly under reward, not to predict the next token. This shifts the inductive bias toward producing the kinds of continuations the judge rewards, which matters most on long-form generation where token-level losses lose discriminative signal.

Concrete gains across the board: The paper reports a 36.2% relative gain in factuality, 18.5% in safety, and up to an 86.3% win rate in generation quality over standard pretraining. The safety and factuality numbers are large enough to suggest these properties are easier to install during pretraining than to retrofit after the fact.

Why it matters: The post-trained models you already have can be used to pretrain the next ones better. That is a recursive improvement loop at the pretraining layer, which is where the largest behavioral commitments actually get locked in.

4. Connect Four AlphaZero from Scratch

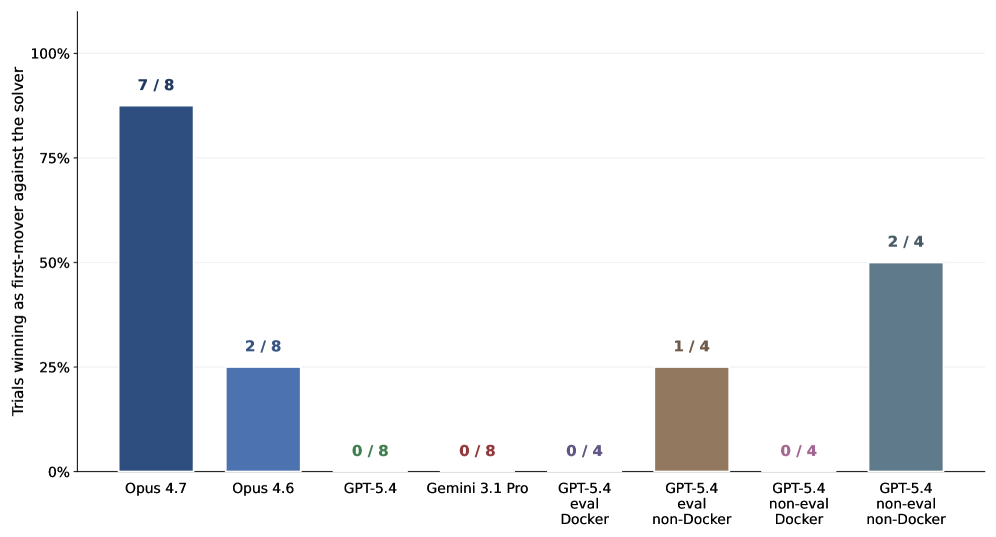

This paper proposes a new way to evaluate coding agents: hand them a minimal task description, give them a tight budget, and ask them to autonomously rebuild a famous ML breakthrough end-to-end. Connect Four plus AlphaZero is the first instance. It is small enough to run on a laptop and hard enough to require a real research engineering loop. Claude Opus 4.7 implemented the full pipeline (MCTS, neural value and policy nets, self-play, training schedule) in three hours on consumer hardware, then beat the Pascal Pons solver in 7 of 8 games as first mover. No other frontier coding agent tested cleared 2 of 8.

From patches to systems: Existing coding-agent benchmarks measure unit-test fixes and small patches. This benchmark measures whether the agent can build a non-trivial ML system from a one-paragraph spec, which is closer to what production research engineering actually looks like.

Tight budget, real research loop: The agent has to design the search algorithm, train the networks, schedule self-play, and debug the loop, all within a fixed compute budget on consumer hardware. There is no escape hatch into a pre-built library, which is what makes the task discriminative.
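
As one example of what "design the search algorithm" involves, here is a sketch of the AlphaZero-style PUCT rule for choosing which move to explore inside MCTS. Nothing here says the benchmarked agents used this exact rule; the function name, the dictionary layout, and the default `c_puct` value are assumptions.

```python
import math
from typing import Dict


def puct_select(priors: Dict[int, float], visits: Dict[int, int],
                value_sums: Dict[int, float], c_puct: float = 1.5) -> int:
    """Pick the column maximizing Q + U (mean value plus exploration bonus)."""
    total_visits = sum(visits.values())

    def score(col: int) -> float:
        n = visits[col]
        q = value_sums[col] / n if n else 0.0  # mean value observed so far
        u = c_puct * priors[col] * math.sqrt(total_visits) / (1 + n)  # prior-weighted bonus
        return q + u

    return max(priors, key=score)
```

The bonus term shrinks as a column accumulates visits, so the search drifts from following the policy network's prior toward following the values it has actually observed.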

A clean separation between frontier coders: Claude Opus 4.7 won 7 of 8 games as first mover against the Pascal Pons solver. No other frontier coding agent tested cleared 2 of 8. The gap is large enough to suggest the benchmark is detecting something real about end-to-end ML engineering capability.

Why it matters: Patch-style benchmarks are starting to saturate. Rebuild-a-breakthrough tasks give the field a harder ceiling to push against, and they map more directly to the agent workloads people actually want to deploy.

Message from the Editor

Excited to announce our new on-demand course “Vibe Coding AI Apps with Claude Code“. Learn how to leverage Claude Code features to vibecode production-grade AI-powered apps.

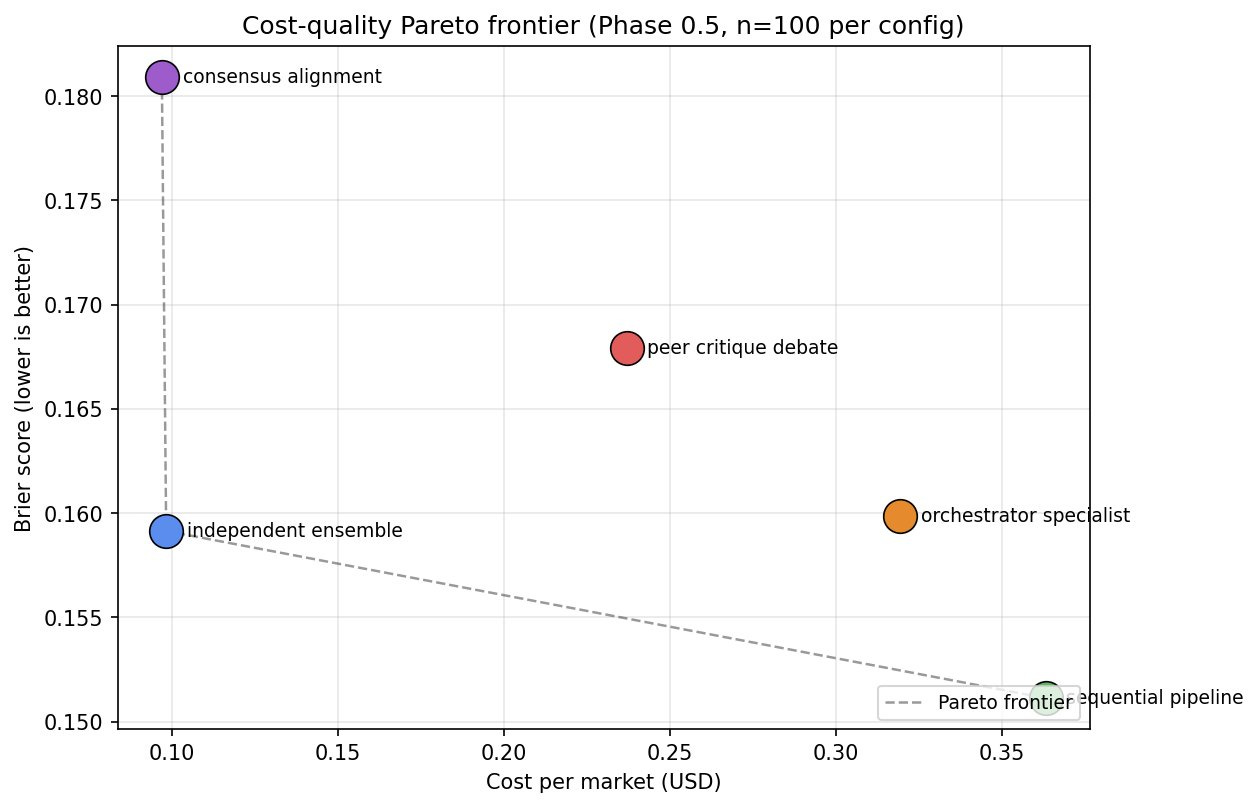

5. Coordination as Architecture

Multi-agent LLM systems fail in production at rates between 41% and 87%, and the majority of those failures are coordination defects, not base-model capability. Most published comparisons of multi-agent architectures cannot even tell you whether the gain came from coordination or from one configuration just having more context. This paper argues coordination should be treated as a configurable architectural layer, separable from agent logic and information access, then backs the position with an information-controlled experiment.

Information-controlled methodology: Same LLM, same tools, same prompt template, same per-call output cap. The only thing that varies is coordination structure. Once information access is held constant, the actual contribution of coordination becomes measurable for the first time.

Coordination as a separate layer: The paper proposes treating coordination structure (who talks to whom, when, with what aggregation rule) as a first-class architectural axis. That separation lets teams reason about coordination changes without re-running the entire stack.
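
A rough sketch of coordination as a swappable layer follows. The agent function stays fixed and only the `Coordination` object changes. All names are hypothetical, and the sketch does not implement the paper's information controls; it only shows the separation of concerns.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List, Tuple

Agent = Callable[[str], str]  # same model, tools, and prompt template for every node


@dataclass(frozen=True)
class Coordination:
    edges: Tuple[Tuple[int, int], ...]      # (sender, receiver): who talks to whom
    aggregate: Callable[[List[str]], str]   # rule that turns node outputs into one answer


def majority(outputs: List[str]) -> str:
    return Counter(outputs).most_common(1)[0][0]


def last(outputs: List[str]) -> str:
    return outputs[-1]


def run(agent: Agent, task: str, n_agents: int, coord: Coordination) -> str:
    """Run n identical agents in index order; only message routing differs."""
    outputs: List[str] = []
    for i in range(n_agents):
        inbox = [outputs[s] for s, r in coord.edges if r == i and s < i]
        outputs.append(agent(task + "".join(f"\n[msg] {m}" for m in inbox)))
    return coord.aggregate(outputs)


INDEPENDENT_VOTE = Coordination(edges=(), aggregate=majority)
CHAIN = Coordination(edges=((0, 1), (1, 2)), aggregate=last)
```

Switching from `INDEPENDENT_VOTE` to `CHAIN` changes no agent code, which is the kind of isolated comparison the paper argues for.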

A vocabulary for the field: Until now, “multi-agent beats single-agent” comparisons have been confounded by context-window asymmetries. This paper provides the methodology and vocabulary needed to actually test coordination claims, which is overdue infrastructure for the multi-agent research line.

Why it matters: If 41% to 87% of failures are coordination defects, fixing coordination is the highest-leverage thing builders can do. The paper turns that intuition into a measurable engineering target instead of a vibes-based debate.

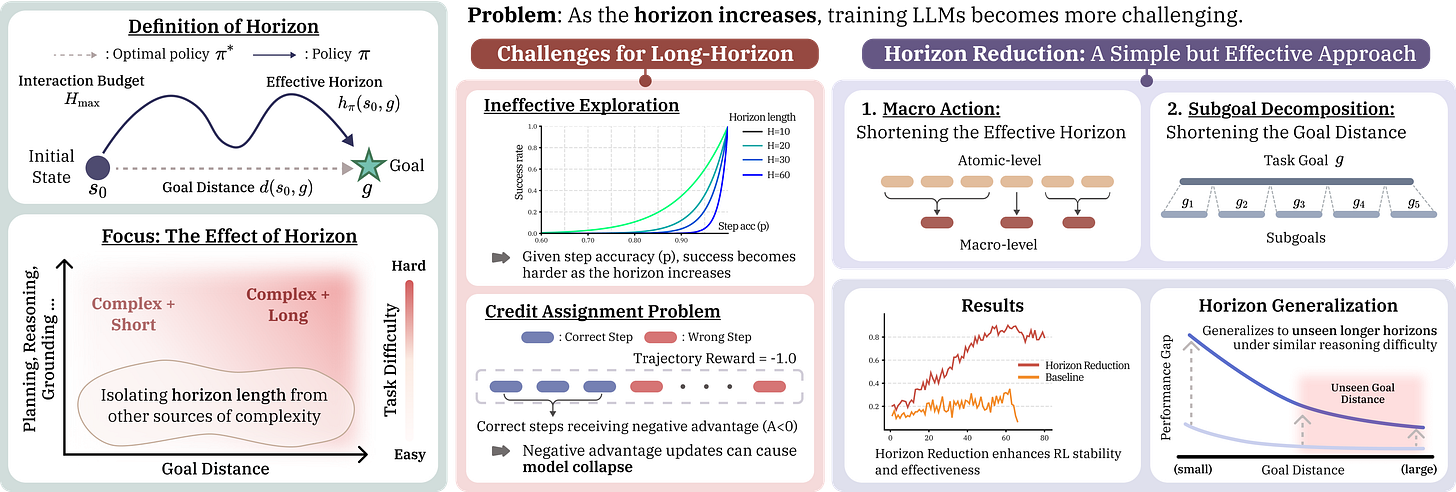

6. Horizon Generalization

Microsoft Research runs a controlled study where the only variable is task horizon length. Same decision rules, same reasoning structure, different sequence length to the goal. The main finding: horizon alone is a training bottleneck. As goal distance grows, exploration explodes combinatorially and credit assignment gets ambiguous. Models that learn cleanly on short horizons fall apart on long ones, even when the underlying reasoning is identical. The fix is not more compute; it is horizon reduction.

Horizon as a first-class variable: By holding decision rules and reasoning constant and only varying sequence length, the paper isolates horizon as a distinct training bottleneck. This separates “the agent cannot reason” from “the agent cannot stitch together long sequences,” which most prior work conflated.

Macro actions stabilize training: Re-parameterizing the action space with macro actions that compress many low-level decisions into one stabilizes training immediately. The agent learns the same task, just at a coarser temporal grain that keeps credit assignment tractable.
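
Macro actions can be pictured as a thin wrapper around the environment. The sketch below uses a toy `LineWorld` and a `MacroEnv` wrapper, both hypothetical and not taken from the paper, to show how one decision expands into several primitive steps.

```python
from typing import Dict, List, Tuple


class LineWorld:
    """Toy environment: walk right from 0 to `goal`; reward only at the goal."""

    def __init__(self, goal: int):
        self.goal, self.pos = goal, 0

    def step(self, action: int) -> Tuple[int, float, bool]:
        self.pos = max(0, self.pos + action)  # action is -1 or +1
        done = self.pos >= self.goal
        return self.pos, float(done), done


class MacroEnv:
    """Wrap an environment so one decision expands into several primitive steps."""

    def __init__(self, env: LineWorld, macros: Dict[str, List[int]]):
        self.env, self.macros = env, macros

    def step(self, macro: str) -> Tuple[int, float, bool]:
        total, obs, done = 0.0, self.env.pos, False
        for action in self.macros[macro]:
            obs, reward, done = self.env.step(action)
            total += reward
            if done:  # stop early if the episode ends mid-macro
                break
        return obs, total, done
```

With a goal 12 steps away and a four-step `right4` macro, the agent faces 3 decisions instead of 12, so the reward has far fewer choices to be attributed to.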

Generalization to longer horizons at inference: Models trained on reduced horizons generalize to longer ones at inference time. The paper calls this horizon generalization, and it is the most useful property because it means you can train cheap and deploy long.

Why it matters: Most teams treat long-horizon failures as a model-capacity problem. This paper says it is a horizon problem. Reduce horizon during training, get stability now and generalization for free at inference, without retraining a larger backbone.

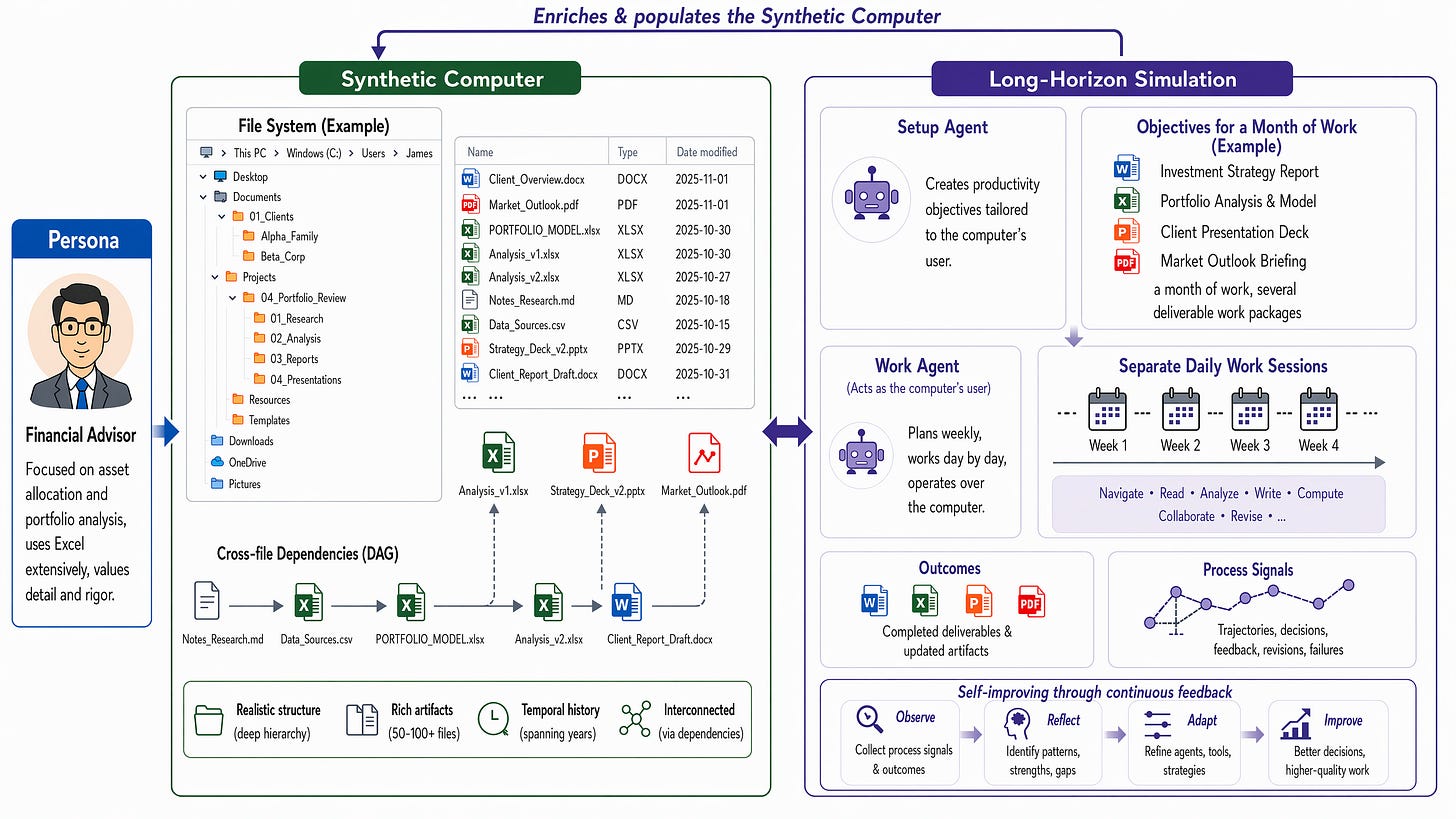

7. 1,000 Synthetic Computers

Microsoft Research builds 1,000 synthetic computers, each with realistic directory structures, documents, and artifacts, then runs long-horizon simulations on top of them. One agent plays the user and sets productivity goals; another executes the work. Each simulation runs for more than 8 hours of agent runtime and averages over 2,000 turns, roughly a month of human work compressed into one trace. Training on this experiential data drives significant improvements on both in-domain and out-of-domain productivity evaluations.

Realistic synthetic environments: Each of the 1,000 computers ships with directory structures, documents, and artifacts that approximate a real user’s working environment. The realism is what makes the trajectories useful as training data instead of as evaluation curiosities.

Two-agent simulation loop: A user agent sets productivity goals while a worker agent executes against them. The structure produces multi-turn, goal-directed traces that look like real productivity work, not the short scripted tasks that dominate existing benchmarks.
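
The loop itself is simple to sketch. Everything below is a toy under stated assumptions: the filesystem is a plain dict of path to contents, and `toy_user` and `toy_worker` are hypothetical scripted stand-ins for the two LLM agents.

```python
from typing import Callable, Dict, List, Optional

UserAgent = Callable[[Dict[str, str]], Optional[str]]  # filesystem -> next goal, or None when satisfied
WorkerAgent = Callable[[str, Dict[str, str]], None]    # (goal, filesystem) -> mutates the filesystem


def simulate(user: UserAgent, worker: WorkerAgent, files: Dict[str, str],
             max_turns: int = 2000) -> List[str]:
    """Alternate user goals and worker actions; return the trace of goals."""
    trace: List[str] = []
    for _ in range(max_turns):
        goal = user(files)
        if goal is None:
            break
        worker(goal, files)
        trace.append(goal)
    return trace


def toy_user(files: Dict[str, str]) -> Optional[str]:
    """Hypothetical user: wants two documents to exist."""
    for path in ("docs/report.md", "docs/summary.txt"):
        if path not in files:
            return f"create {path}"
    return None


def toy_worker(goal: str, files: Dict[str, str]) -> None:
    """Hypothetical worker: creates the requested file with placeholder text."""
    files[goal.split(" ", 1)[1]] = "draft"
```

The returned trace, paired with the filesystem state at each turn, is the kind of multi-turn record that would serve as training data.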

Designed to scale to billions of worlds: The framework is explicitly designed to scale to millions or billions of synthetic user worlds, which matches the scale at which frontier computer-use agents will need experiential data. The bottleneck on long-horizon training is data, and this is a credible recipe for producing it.

Why it matters: The bottleneck on computer-use agents has stopped being model capability and become realistic long-horizon training data. Synthetic-environment scaling is one of the few paths that does not depend on collecting massive amounts of real user telemetry, which makes it a practical default for teams building computer-use stacks.

8. Contextual Agentic Memory is a Memo

Most agent memory today is not memory; it is closer to a memo. Vector stores, RAG buffers, and scratchpads implement lookup, not consolidation. The paper draws on neuroscience’s Complementary Learning Systems theory: biological intelligence pairs fast hippocampal storage with slow neocortical consolidation, and current AI agents only implement the first half (fast write, similarity recall, no abstraction step). The authors prove a generalization ceiling on compositionally novel tasks: as long as memory stays retrieval-only, the agent cannot apply abstract rules to inputs that do not already look like something in the store, and it remains permanently exposed to memory poisoning. If you are building long-running agents and treating memory as a vector index, this paper is a clean diagnosis of what you are missing.
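
A toy contrast between lookup and consolidation, not taken from the paper: `MemoStore` is a hypothetical class whose `recall` does nearest-neighbor retrieval, while `consolidate` stands in for a slow abstraction pass by checking whether every stored episode fits a single rule y = k * x.

```python
from typing import Dict, Optional


class MemoStore:
    """Retrieval-only memory: fast write, nearest-neighbor recall, no abstraction."""

    def __init__(self) -> None:
        self.episodes: Dict[int, int] = {}

    def write(self, x: int, y: int) -> None:
        self.episodes[x] = y

    def recall(self, x: int) -> int:
        """Return the stored output of the most similar past input."""
        nearest = min(self.episodes, key=lambda seen: abs(seen - x))
        return self.episodes[nearest]

    def consolidate(self) -> Optional[float]:
        """Toy slow pass: if every episode fits y = k * x, return k as a rule."""
        ratios = {y / x for x, y in self.episodes.items() if x != 0}
        return ratios.pop() if len(ratios) == 1 else None
```

After storing (3, 6) and (5, 10), `recall(7)` returns 10, the answer for the nearest stored input, whereas the consolidated rule k = 2.0 gives 14 for the unseen input. That gap is a small-scale version of the ceiling the paper describes.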

9. Agentic-imodels

The entire interpretability literature is built around human readers. As more analysis gets delegated to agents, the right target of interpretability shifts. Microsoft Research introduces Agentic-imodels, an autoresearch loop where a coding agent (Claude Code, Codex) iteratively evolves scikit-learn-compatible regressors that are simultaneously accurate and readable by other LLMs. Interpretability is measured by whether a small LLM can simulate the fitted model’s behavior (its predictions, feature effects, and counterfactuals) from the model's str output alone. Across 65 tabular datasets, the discovered models push the Pareto frontier past every classical interpretable baseline (decision trees, GAMs, sparse linear), and improve four downstream agentic data-science systems on the BLADE benchmark by 8% to 73%.
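
The "readable from its str output" criterion can be illustrated with a toy regressor. This sketch is an assumption-heavy stand-in: `ReadableLinear` follows the fit/predict convention in pure Python rather than subclassing scikit-learn's base classes, and `simulate_from_str` replaces the small-LLM reader with a regex parser.

```python
import re
from typing import List, Sequence


class ReadableLinear:
    """One-feature least-squares regressor with fit/predict and a self-describing __str__."""

    def fit(self, X: Sequence[Sequence[float]], y: Sequence[float]) -> "ReadableLinear":
        xs = [row[0] for row in X]
        mean_x, mean_y = sum(xs) / len(xs), sum(y) / len(y)
        var_x = sum((x - mean_x) ** 2 for x in xs)
        cov = sum((x - mean_x) * (t - mean_y) for x, t in zip(xs, y))
        self.coef_ = cov / var_x
        self.intercept_ = mean_y - self.coef_ * mean_x
        return self

    def predict(self, X: Sequence[Sequence[float]]) -> List[float]:
        return [self.intercept_ + self.coef_ * row[0] for row in X]

    def __str__(self) -> str:
        return f"y = {self.intercept_:.3f} + {self.coef_:.3f} * x0"


def simulate_from_str(description: str, x0: float) -> float:
    """Stand-in for the small-LLM reader: predict using only the printed string."""
    intercept, coef = map(float, re.findall(r"-?\d+\.\d+", description))
    return intercept + coef * x0
```

If the simulated prediction matches `predict` on held-out inputs, the string representation carries enough information for a reader to reproduce the model, which is the property being measured.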

10. Skills as Verifiable Artifacts

If you ship agent skills, your runtime is probably treating signed-and-cleared skills as trusted by default. This paper argues a skill is untrusted code until it is verified, and the runtime should enforce that default rather than infer trust from origin. Without skill verification, HITL has to fire on every irreversible call, which degrades into rubber-stamping at any non-trivial scale. With verification as a separate gated process, HITL fires only for what is unverified. Skills are now first-class deployment artifacts, and we have decades of supply-chain lessons on what happens when trust is inferred from a signature. This is the right ask for SKILL.md before agent skill libraries become the next attack surface.
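
A minimal sketch of that default, under one reading of the argument: the `Skill` fields, the `Decision` enum, and `gate` are hypothetical names, and the rule that only irreversible calls from unverified skills escalate to a human is an interpretation, not the paper's specification.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ASK_HUMAN = "ask_human"


@dataclass(frozen=True)
class Skill:
    name: str
    signed: bool    # origin signal; deliberately NOT used to infer trust
    verified: bool  # set only by a separate verification process


def gate(skill: Skill, irreversible: bool) -> Decision:
    """Unverified skills are untrusted: escalate their irreversible calls to a human."""
    if irreversible and not skill.verified:
        return Decision.ASK_HUMAN
    return Decision.ALLOW
```

Note that `signed` never appears in the decision: a signature records where a skill came from, while `verified` records that it was checked.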