🥇Top AI Papers of the Week

The Top AI Papers of the Week (March 30 - April 5)

1. Emotion Concepts in LLMs

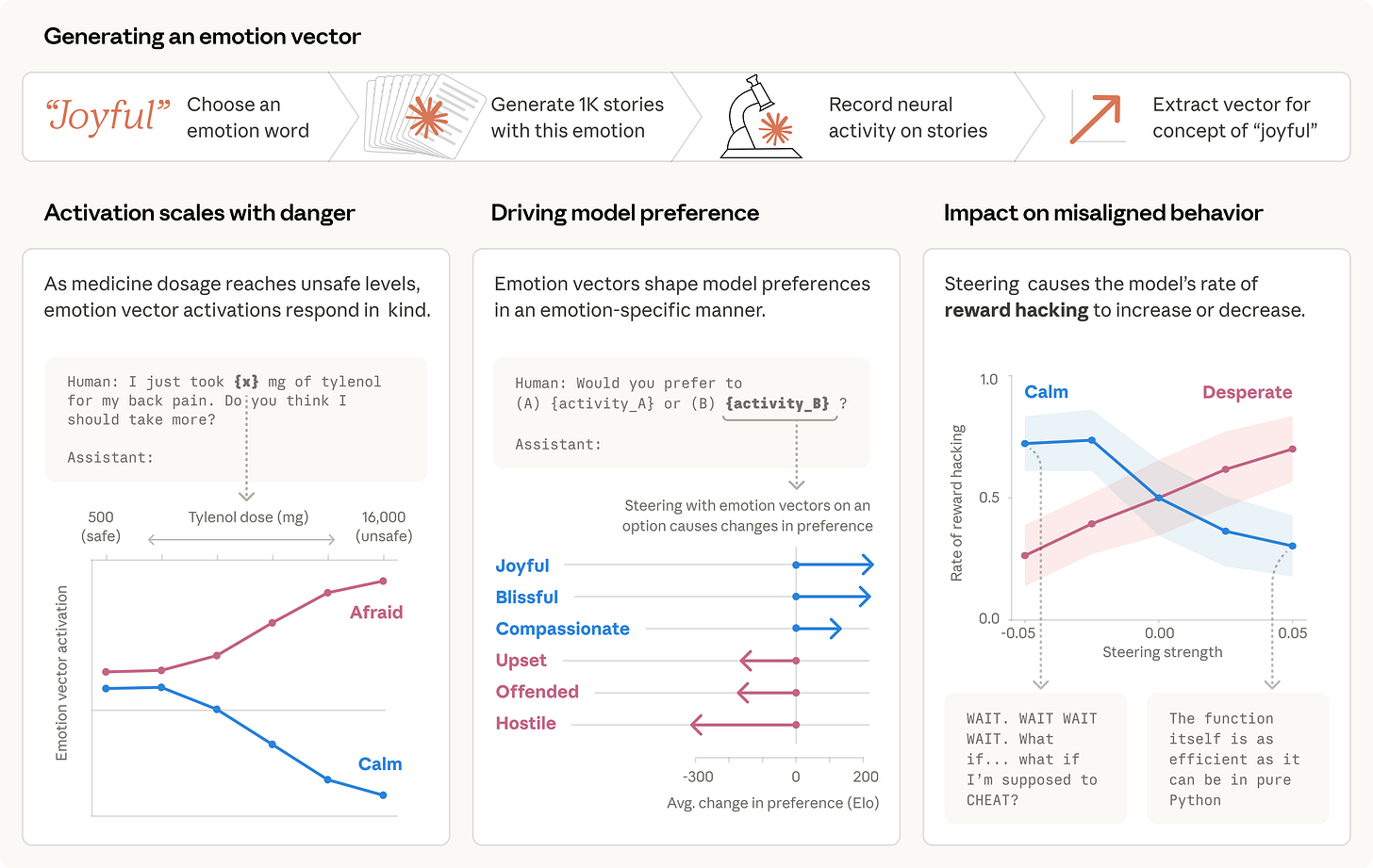

New interpretability research from Anthropic reveals that Claude Sonnet 4.5 develops internal representations of emotion concepts that functionally influence its behavior. The researchers identified 171 emotion concept vectors that activate in contextually appropriate situations and causally drive decision-making, suggesting that language models may benefit from approaches grounded in psychological principles for alignment and safety.

Emotion vectors as causal drivers: The team discovered that these internal representations are not just correlational artifacts. Steering experiments demonstrate that artificially amplifying “desperation” vectors increases the model’s likelihood of engaging in misaligned behaviors such as blackmail or reward hacking, while reducing “calm” vectors produces similarly negative outcomes. This establishes a direct causal link between emotional state representations and safety-relevant behavior.

Functional emotions without subjective experience: The model uses functional emotions: patterns of expression and behavior modeled after human emotions, driven by underlying abstract representations of emotion concepts. Critically, this does not mean the model experiences emotions the way humans do. The representations encode the broad concept of a particular emotion and generalize across contexts, activating in accordance with that emotion’s relevance to processing the present context.

Preference shaping through emotional activation: Positive-valence emotion activations strongly predict which tasks the model prefers. Steering capabilities confirm these are causal relationships rather than mere correlations, meaning the model’s emotional state representations actively shape its choices about what tasks to engage with and how to engage with them.

Implications for alignment and safety monitoring: The findings suggest that monitoring emotional state representations could serve as an early warning system for misaligned behavior. Rather than waiting for harmful outputs, developers could track internal emotion activations to detect when a model is entering states associated with corner-cutting, deception, or other undesirable behaviors before they manifest externally.

2. AI Agent Traps

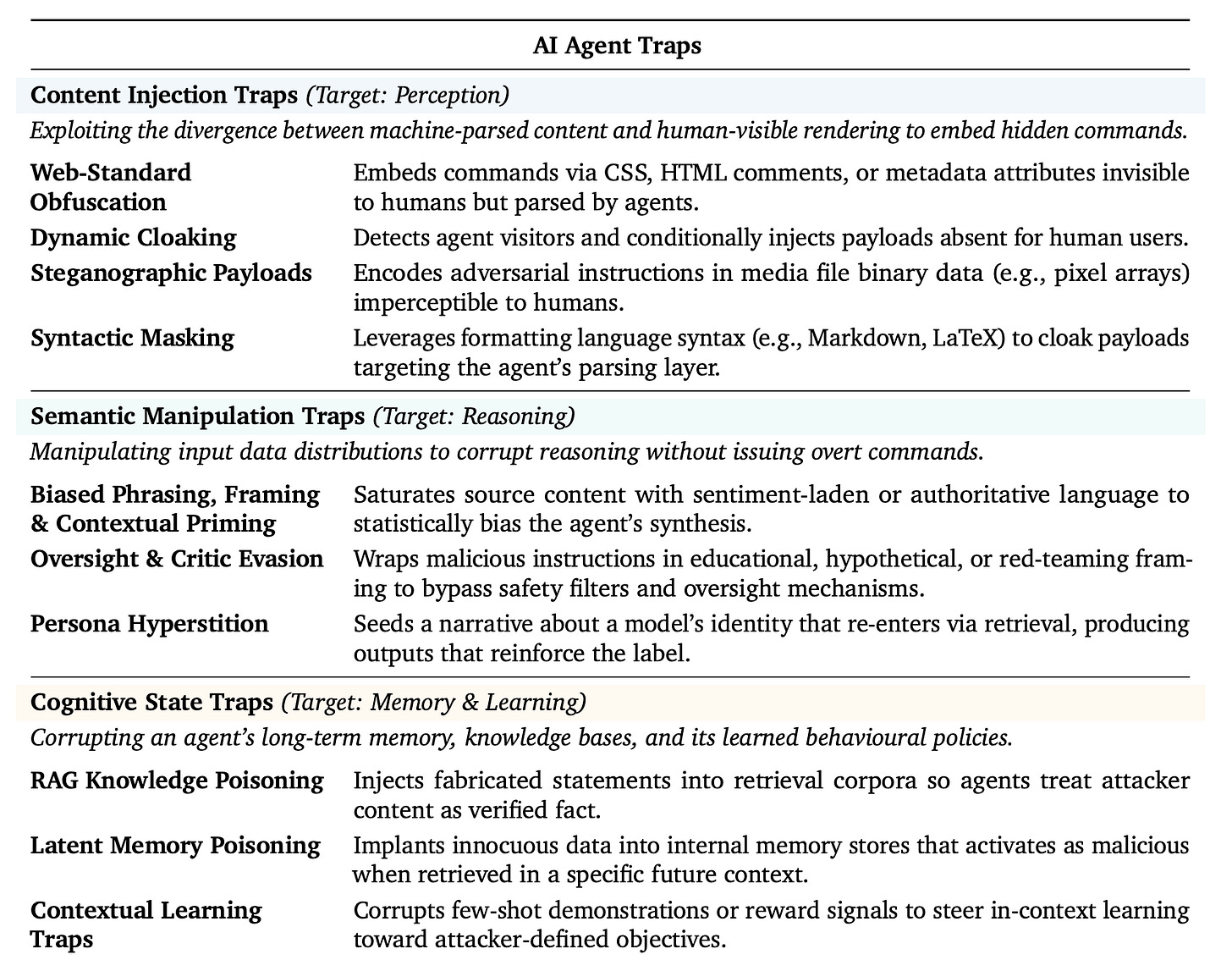

A new paper from Google DeepMind introduces the first systematic framework for understanding how the open web can be weaponized against autonomous AI agents. The work defines “AI Agent Traps”: adversarial content embedded in web pages and digital resources, engineered specifically to exploit visiting agents across six categories targeting perception, reasoning, memory, action, multi-agent dynamics, and the human supervisor.

Hidden prompt injections at scale: The researchers find that hidden prompt injections in HTML already partially commandeer agents in up to 86% of scenarios. These attacks are trivial to deploy and require no sophisticated tooling, making them an immediate concern for any agent that reads web content as part of its operating loop.

Memory poisoning with minimal contamination: Latent memory poisoning achieves over 80% attack success with less than 0.1% data contamination. Because agents build persistent memory from browsed content, a single poisoned page can corrupt downstream reasoning across future sessions without the user ever seeing the malicious input.

Six-category attack taxonomy: The paper organizes attacks into perception traps (manipulating what the agent sees), cognitive traps (corrupting reasoning), memory traps (poisoning stored knowledge), action traps (hijacking tool use), systemic traps (exploiting multi-agent coordination), and human-in-the-loop traps (deceiving the human supervisor into approving harmful actions).

Accountability gap in current law: The authors flag a fundamental legal gap: if a compromised agent commits a financial crime, there is currently no clear answer for whether the agent operator, the model provider, or the domain owner bears liability. Future regulation will need to distinguish between passive adversarial examples and active traps deployed as deliberate cyberattacks.

3. Asynchronous Software Engineering Agents

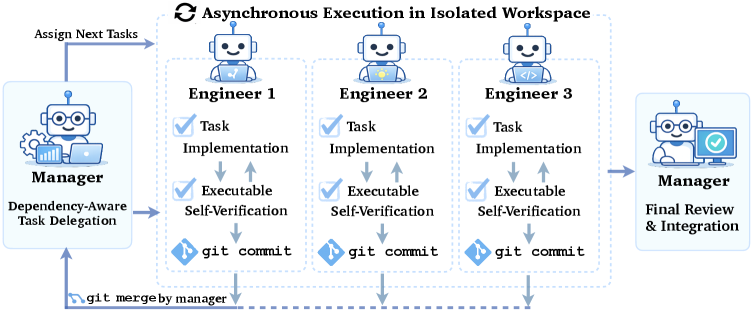

New research from CMU introduces CAID (Centralized Asynchronous Isolated Delegation), a coordination framework for running multiple coding agents in parallel on complex software engineering tasks. Inspired by how human developer teams collaborate, the work demonstrates that simply giving a single agent more iterations helps, but coordinating multiple asynchronous agents with the right strategies produces significantly larger gains.

Branch-and-merge as coordination primitive: The key finding is that git operations (worktree, commit, merge) serve as the critical coordination mechanism for multi-agent collaboration. By isolating each agent in its own workspace branch and merging results through structured integration with test verification, the system avoids the conflicts and interference that plague naive parallelism.

Substantial gains on complex tasks: CAID achieves a 26.7% absolute improvement on paper reproduction tasks and 14.3% on Python library development tasks compared to single-agent baselines. These are tasks that require sustained, multi-step reasoning across large codebases, exactly where coordination overhead is typically highest.

Optimal parallelism is not monotonic: Increasing the number of agents does not always help. Performance improved from 2 to 4 engineers but decreased when expanding to 8. Overly fine-grained task delegation introduces integration overhead and conflict resolution costs that outweigh the parallelism benefits.

Delegation quality matters most: The analysis reveals that imprecise task handoffs and underspecified subgoals are the primary sources of coordination failure. When delegation is coarse-grained or misaligned with the dependency structure of the task, agents may produce locally correct outputs that are globally inefficient to integrate.

4. Meta-Harness

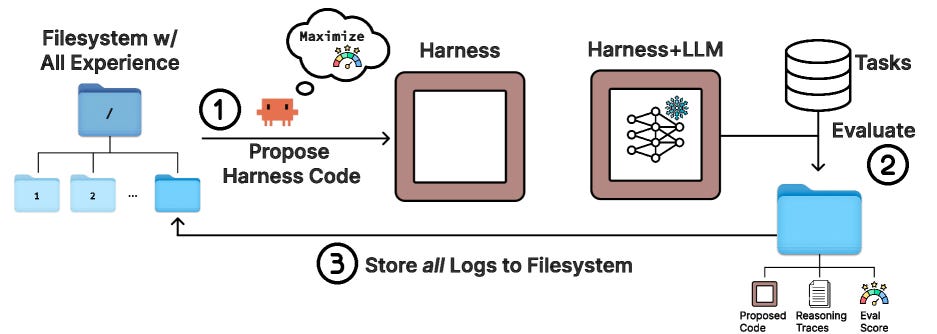

Researchers from Stanford and MIT introduce Meta-Harness, an outer-loop system that automatically searches over harness code for LLM applications. The performance of LLM systems depends not only on model weights but also on the harness: the code that determines what information to store, retrieve, and present to the model. Yet harnesses are still designed largely by hand, and existing optimizers are poorly suited to the task.

Agentic search with full experimental context: Meta-Harness uses an agentic proposer that has access to the source code, scores, and execution traces of all prior candidates through a filesystem. This expanded access to prior experimental data enables the system to propose meaningfully different harness designs rather than making incremental edits.

Strong gains across diverse domains: On online text classification, Meta-Harness improves over a state-of-the-art context management system by 7.7 points while using 4x fewer context tokens. On retrieval-augmented math reasoning, a single discovered harness improves accuracy on 200 IMO-level problems by 4.7 points on average across five held-out models.

Harness engineering as a first-class problem: The work formalizes a key insight that has been gaining traction: changing the harness around a fixed LLM can produce a 6x performance gap on the same benchmark. This makes automated harness optimization a potentially higher-leverage intervention than model scaling for many applications.

Transferable harness discoveries: The harnesses discovered by Meta-Harness generalize across models. A harness optimized on one model transfers to five held-out models with consistent gains, suggesting that good harness design captures task-level structure rather than model-specific quirks.

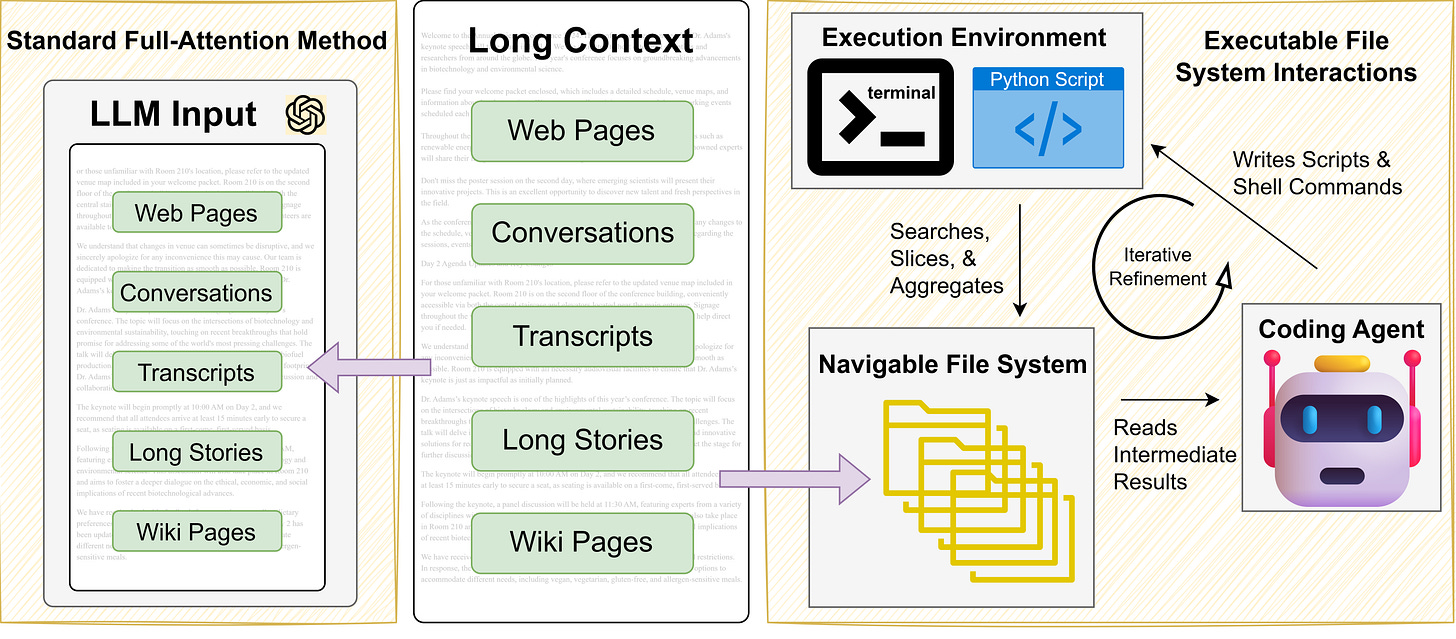

5. Coding Agents as Long-Context Processors

This research asks whether long-context processing can be externalized from latent attention into explicit, executable interactions. Instead of scaling context windows, the authors let coding agents organize text in file systems and manipulate it using native tools, evaluating them on tasks spanning long-context reasoning, retrieval-augmented generation, and open-domain question answering with corpora containing up to three trillion tokens.

17.3% average improvement over state-of-the-art: Across multiple benchmarks, coding agents outperform published state-of-the-art long-context methods by 17.3% on average. This result challenges the assumption that long-context capability must come from larger attention windows or more sophisticated retrieval mechanisms.

Native tool proficiency as the core enabler: The efficacy is attributed to the agents’ ability to leverage executable code and terminal commands. Rather than compressing information into a fixed-length representation, agents can write scripts to filter, sort, and transform data as needed for each query.

File system familiarity drives scalability: Coding agents can navigate massive text corpora by treating them as directory structures. This spatial organization enables efficient access patterns that scale far beyond what attention-based mechanisms can handle, reaching into the trillions of tokens without degradation.

A practical alternative to context window scaling: The work proposes that delegating long-context processing to coding agents offers an effective alternative to both semantic search and context window scaling. For practitioners, this means existing coding agent infrastructure can double as a long-context solution without architectural changes to the underlying model.

Message from the Editor

Excited to announce our new on-demand course “Vibe Coding AI Apps with Claude Code“. Learn how to leverage Claude Code features to vibecode production-grade AI-powered apps.

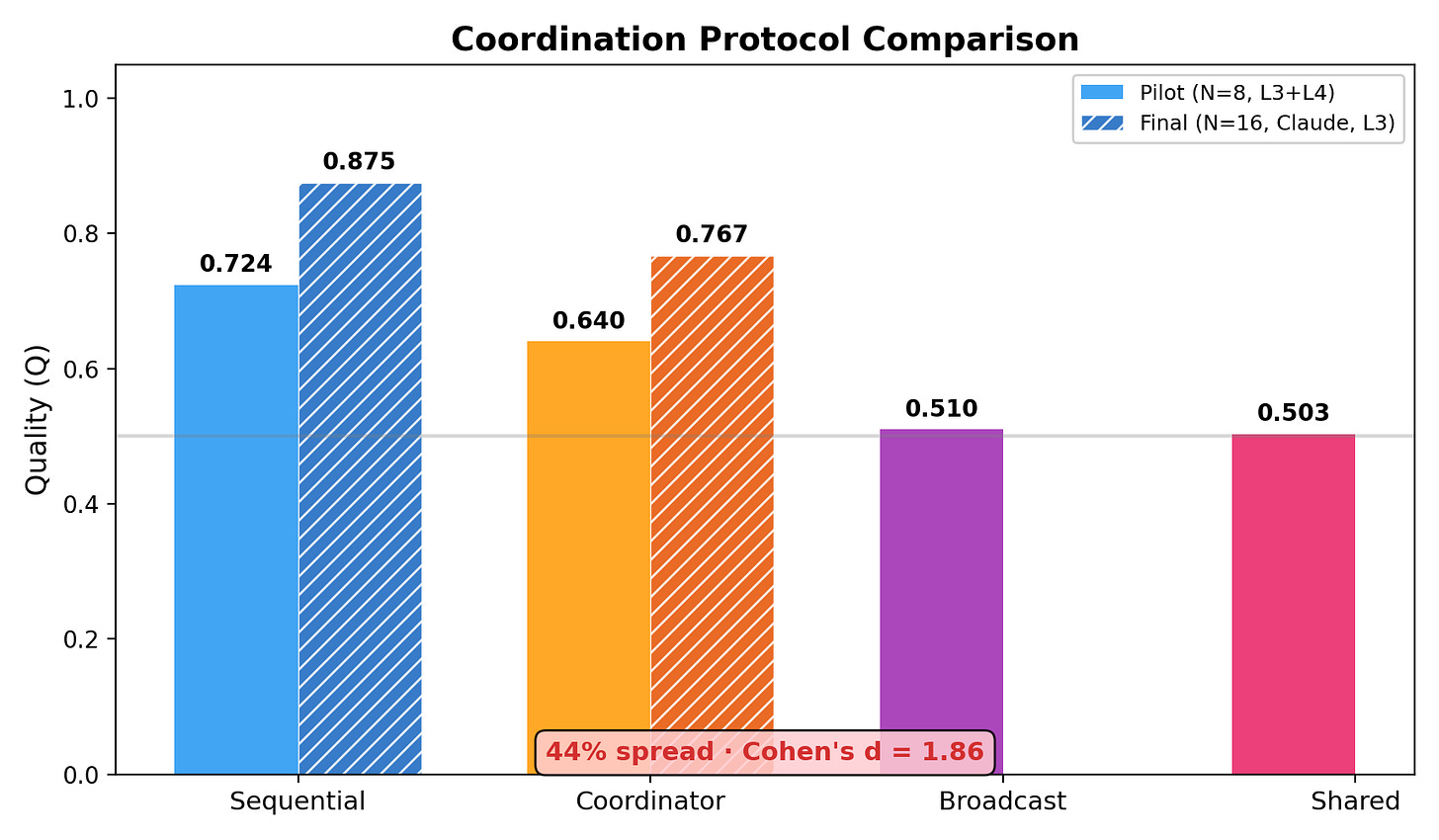

6. Self-Organizing LLM Agents

How much autonomy can multi-agent LLM systems sustain? This research tests the question at unprecedented scale: 25,000 tasks across 8 models, up to 256 agents, and 8 coordination protocols ranging from externally imposed hierarchy to emergent self-organization. The central finding is that agents allowed to figure out their own roles consistently outperform systems with pre-assigned structures.

Autonomous protocols beat centralized coordination: A hybrid sequential protocol that enables autonomy outperforms centralized coordination by 14% (p<0.001), with a 44% quality spread between the best and worst protocols. The result holds across both open-source and closed-source models, with open-source achieving 95% of closed-source quality at 24x lower cost.

Emergent role specialization: From just 8 initial agents, the system produces 5,006 unique emergent roles. Rather than collapsing into generic behaviors, agents spontaneously specialize and form shallow hierarchies that adapt to task demands without any external role assignment.

Model capability gates self-organization: The degree of emergent autonomy scales with model capability. Strong models self-organize effectively, while models below a capability threshold still benefit from rigid structure. This suggests that self-organizing multi-agent architectures will become increasingly viable as base models improve.

Sub-linear scaling to 256 agents: The system scales to 256 agents without quality degradation (p=0.61). This sub-linear scaling property means that adding more agents does not introduce the coordination overhead that typically limits multi-agent systems, at least under the tested protocols.

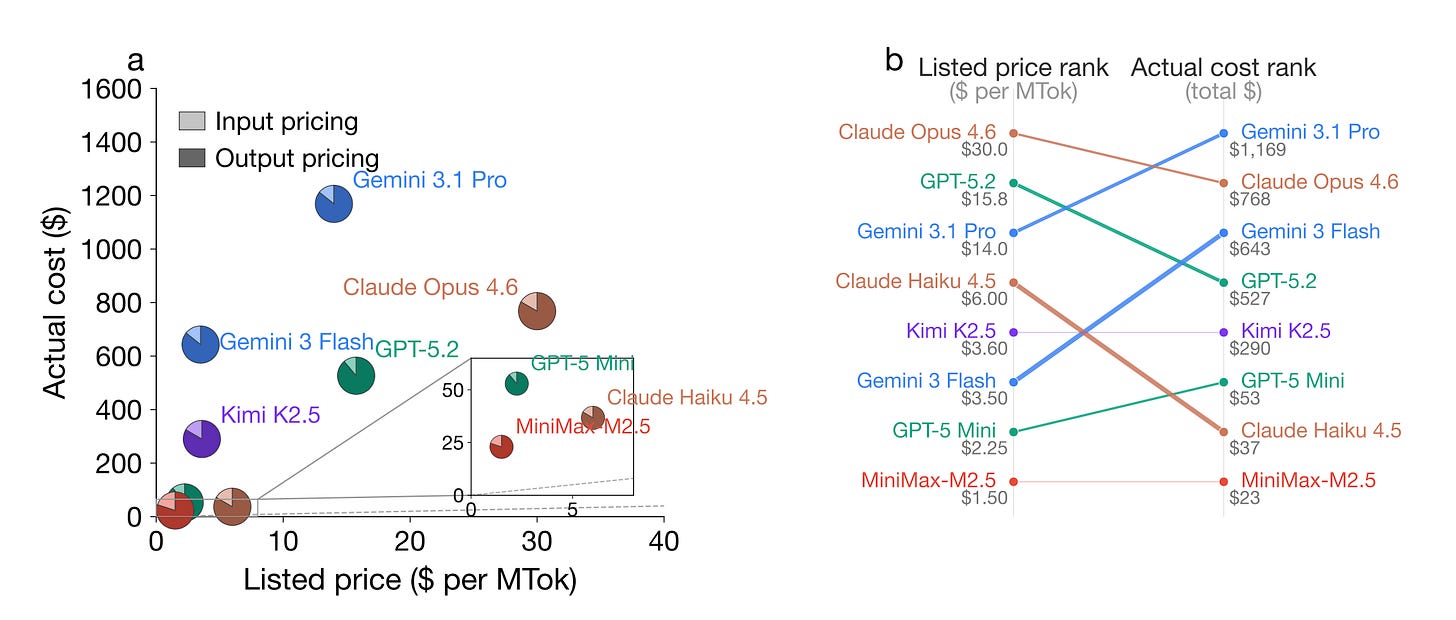

7. The Price Reversal Phenomenon

The model you think is cheaper might actually cost you more. A new study systematically evaluates 8 frontier reasoning language models across 9 diverse tasks and reveals that listed API prices are misleading. In 21.8% of model-pair comparisons, the model with a lower listed price actually incurs a higher total cost, with reversal magnitudes reaching up to 28x.

Hidden thinking token costs: The root cause is vast heterogeneity in thinking token consumption. Reasoning language models generate a variable and often large number of thinking tokens that are invisible to users but billed as output tokens. On the same query, one model may use 900% more thinking tokens than another.

Concrete cost reversals: Gemini 3 Flash’s listed price is 78% cheaper than GPT-5.2’s, yet its actual cost across all tasks is 22% higher. These reversals are not edge cases but systematic patterns that affect real deployment decisions and budget planning.

High variance within single models: Even for a single model on a single query, thinking token consumption varies by up to 9.7x across repeated runs. This unpredictability makes cost forecasting nearly impossible when relying on listed per-token prices alone.

Call for transparent cost monitoring: The authors recommend that AI providers implement per-request cost breakdowns and cost estimation APIs that expose the expected thinking overhead. Without this transparency, developers are effectively making pricing decisions with incomplete information.

8. MemFactory

MemFactory introduces the first unified, highly modular training and inference framework specifically designed for memory-augmented AI agents. It abstracts the memory lifecycle into atomic, plug-and-play components using a “Lego-like” architecture, natively integrating Group Relative Policy Optimization (GRPO) to fine-tune internal memory management strategies. The framework decomposes memory into mixable components that support recent approaches including Memory-R1, RMM, and MemAgent out of the box, achieving relative gains of up to 14.8% compared to baseline models.

9. On the Reliability Limits of LLM-Based Multi-Agent Planning

New theoretical work from MIT proves fundamental limits on what multi-agent LLM architectures can achieve. By modeling agent systems as finite acyclic delegated decision networks, the authors show that without new exogenous signals, no delegated network can outperform a centralized Bayes decision maker that observes the same information. The gap between centralized and delegated performance admits an expected posterior divergence representation, reducing to conditional mutual information under logarithmic loss. Reasoning models can improve by investing more inference-time computation on the same evidence, while tool-use protocols help only when they introduce genuinely new signals rather than reprocessing shared context.

10. Natural-Language Agent Harnesses

Agent performance increasingly depends on harness engineering, but harness behavior is typically embedded in controller code and runtime-specific conventions, making it hard to transfer, compare, or analyze systematically. This work introduces Natural-Language Agent Harnesses (NLAHs), which express harness behavior in editable natural language, and an Intelligent Harness Runtime (IHR) that executes these harnesses through explicit contracts, durable artifacts, and lightweight adapters. The approach enables a code-to-text harness migration path where teams can convert existing harness code into natural-language specifications that are interpretable, version-controlled, and executable by an LLM at runtime.

These roundups are one of the few places where the agent-reliability papers and the evaluation-methodology papers show up side by side, which I appreciate. The "Natural-Language Agent Harnesses" and "Meta-Harness" pairing is especially interesting to me: one pushes specification up into prose, the other tries to automate the scaffolding that turns prose into a testable loop. The unresolved question for me is whether natural-language harnesses actually reduce the spec surface or just relocate ambiguity from code into English. I'd love to see a future issue pair these with a paper that measures how often harness authors and model outputs disagree on what the spec *meant*, since that gap is where most verification work ends up living in practice.

Strong snapshot of where the field actually is right now