Does AGENTS.md Actually Help Coding Agents?

A New Study Has Answers

Every serious coding project I run now has a CLAUDE.md or AGENTS.md at the root. It tells the agent which commands to run, what conventions to follow, and which files to avoid. Like most people building with coding agents, I assumed this makes the agent meaningfully better.

A new paper from ETH Zurich’s SRI Lab puts that assumption to a rigorous test. The short answer is that it’s complicated, and the details are worth understanding if you work with coding agents regularly.

The paper, Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?, runs Claude Code, Codex, and Qwen Code through hundreds of real GitHub issues, comparing what happens when agents get a context file versus when they don’t. The results are not what most of us would expect.

So what actually happens when you hand an agent a CLAUDE.md or AGENTS.md? Let’s break it down.

The Problem

Context files (AGENTS.md, CLAUDE.md, CONTRIBUTING.md variations) have proliferated alongside coding agents. The idea is intuitive: if you tell the agent how the repo works (which commands to run, which linting tools to use, what the test setup looks like), it should do better.

The problem is that, until now, nobody had measured whether this intuition holds. Adoption outpaced evaluation. Developers write these files, agents read them, and we’ve operated on faith that the relationship is positive.

The deeper issue is that measuring this properly requires a benchmark that includes repositories with existing, developer-written context files. SWE-bench, the standard coding agent benchmark, mostly covers popular repositories. Popular repositories tend not to have context files, because they’ve accumulated documentation in other forms. The typical benchmark environment doesn’t reflect how context files actually get used.

A New Benchmark Built Around Context Files

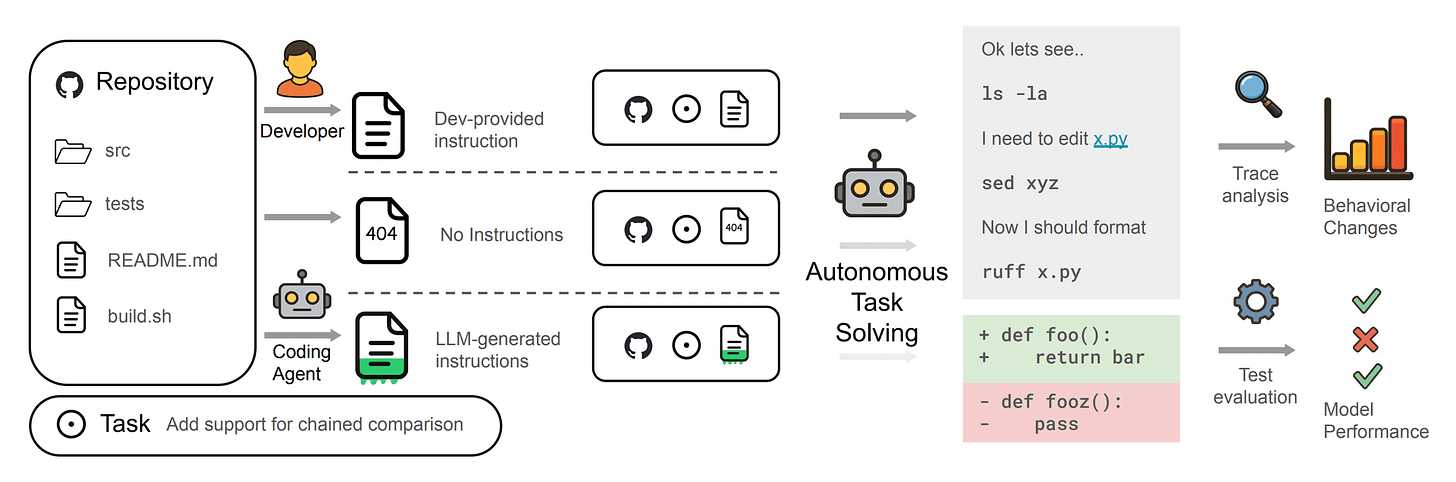

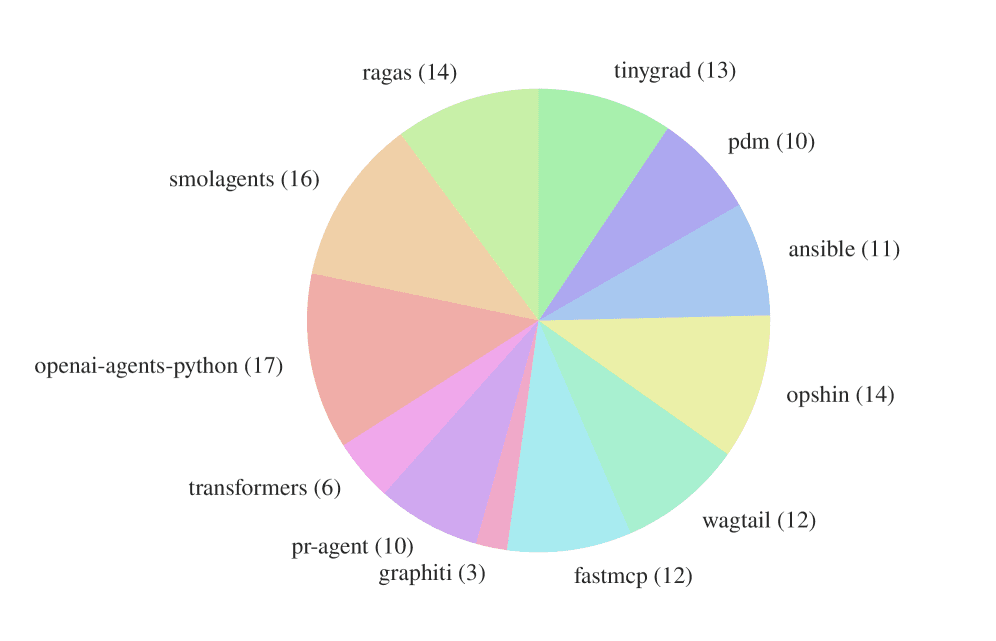

The paper introduces AGENTbench alongside its SWE-bench Lite comparisons. AGENTbench contains 138 instances drawn from 12 less-popular Python repositories, all of which have developer-written context files already in place. These are real-world repos where maintainers chose to write guidance for automated agents.

The context files in AGENTbench are substantial. They average 641 words across 9.7 sections. These aren’t one-liners saying “use pytest.” They’re detailed guides covering project structure, tooling preferences, workflow conventions, and testing requirements.

Three agents were evaluated across both benchmarks.

Claude Code (Sonnet-4.5)

Codex (GPT-5.2 and GPT-5.1 mini)

Qwen Code (Qwen3-30b-coder)

Each agent ran on tasks with no context file, with an LLM-generated context file, and with a developer-written context file.
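To make the three-way comparison concrete, here is a minimal sketch of how per-condition success rates and their deltas against the no-context baseline could be tabulated. The record layout, the condition labels (`none`, `llm`, `human`), and the toy data are assumptions for illustration. They are not the paper's harness or its results.

```python
from collections import defaultdict

# Hypothetical run records: (agent, condition, resolved).
# Toy values for illustration only, not data from the paper.
RUNS = [
    ("agent-a", "none", True),
    ("agent-a", "none", False),
    ("agent-a", "llm", False),
    ("agent-a", "llm", False),
    ("agent-a", "human", True),
    ("agent-a", "human", True),
]


def success_rates(runs):
    """Return {condition: fraction of instances resolved}."""
    totals = defaultdict(int)
    solved = defaultdict(int)
    for _agent, condition, resolved in runs:
        totals[condition] += 1
        solved[condition] += int(resolved)
    return {c: solved[c] / totals[c] for c in totals}


def delta_vs_baseline(rates, baseline="none"):
    """Success-rate difference against the baseline, in percentage points."""
    return {
        c: round((r - rates[baseline]) * 100, 1)
        for c, r in rates.items()
        if c != baseline
    }


rates = success_rates(RUNS)
deltas = delta_vs_baseline(rates)
print(rates)
print(deltas)
```

Reporting deltas against the `none` condition keeps the focus on the question the study asks: does adding the file change the outcome at all?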

What the Numbers Show

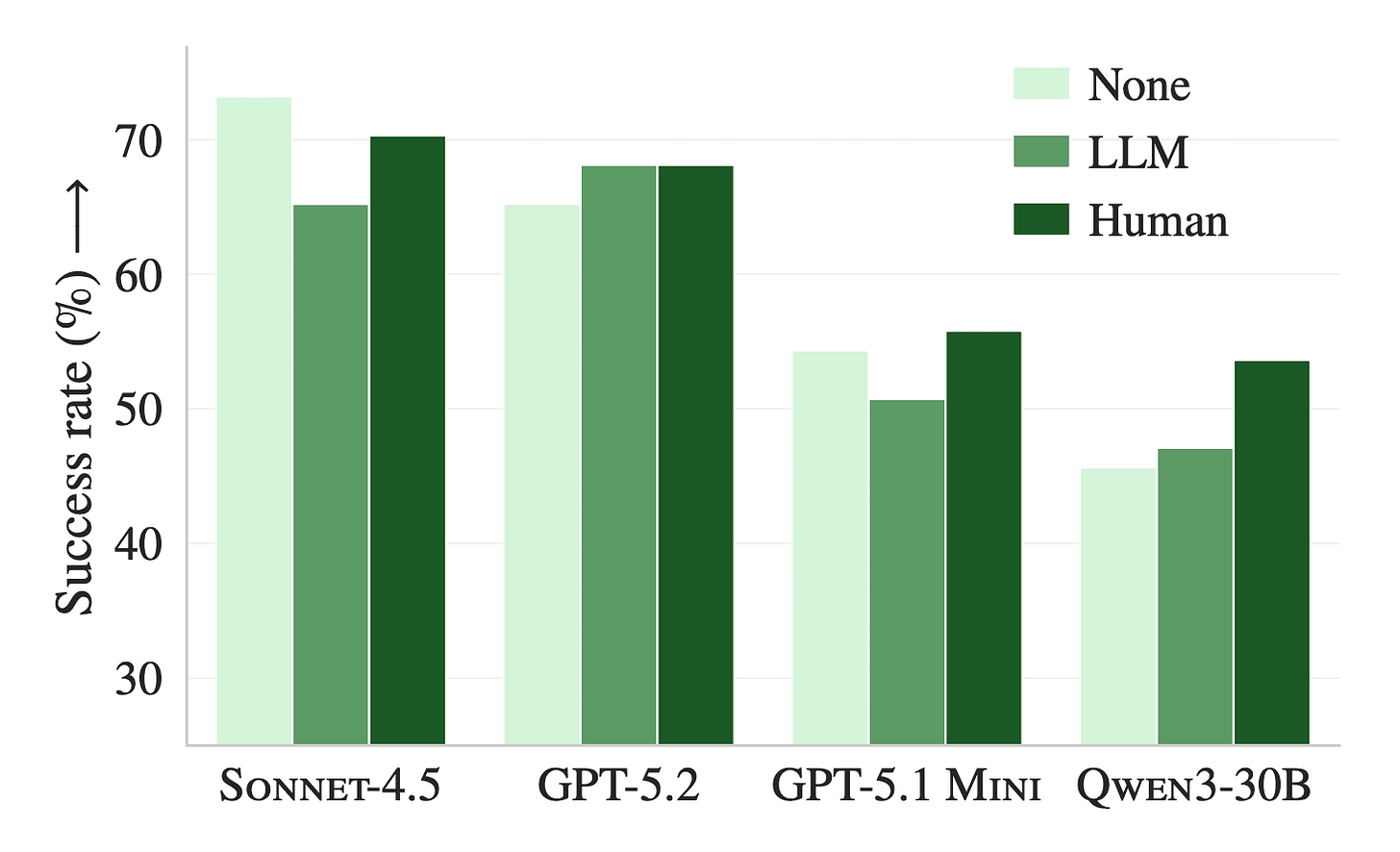

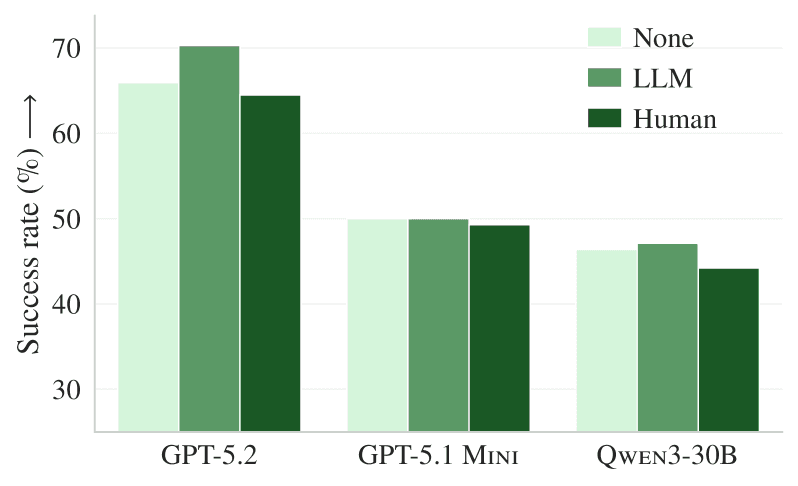

The headline finding is that LLM-generated context files reduce task success rates compared to providing no repository context at all, while increasing inference cost by over 20%.

On SWE-bench Lite, LLM-generated files drop performance by 0.5% on average. On AGENTbench, the drop is 2%. Neither drop is catastrophic, but both point in the wrong direction.

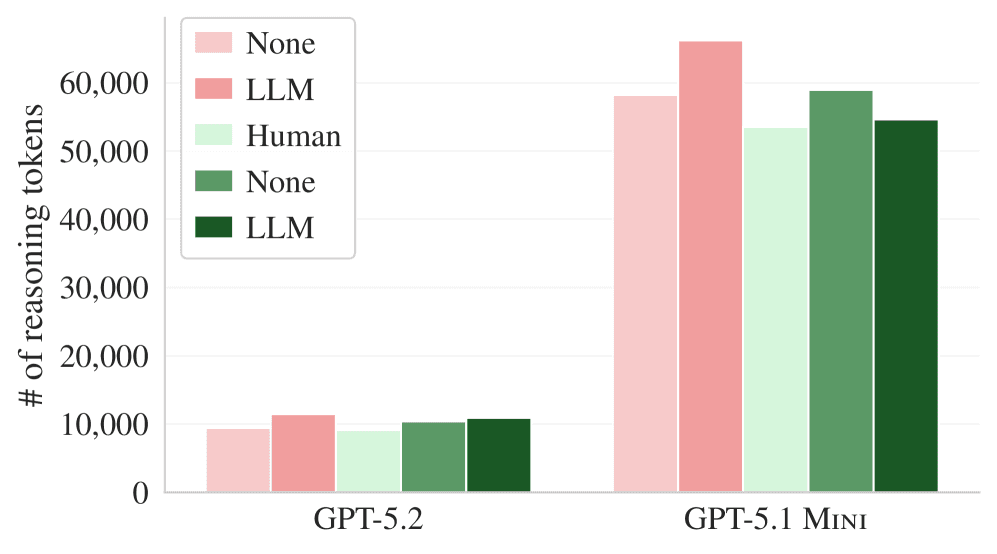

The cost story is consistent across all conditions. Whether the context file is human-written or auto-generated, agents spend 14-22% more reasoning tokens and take 2-4 additional steps to complete tasks. Following instructions costs compute, regardless of whether those instructions help.
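As a rough back-of-the-envelope sketch, the 14-22% range can be turned into absolute token counts for a pipeline. The baseline tokens per task and the daily task volume below are assumed numbers chosen for illustration; only the percentage range comes from the figures quoted above.

```python
def overhead_range(baseline_tokens, low_pct=14, high_pct=22):
    """Extra reasoning tokens per task for a given percentage range."""
    return baseline_tokens * low_pct // 100, baseline_tokens * high_pct // 100


# Hypothetical workload: both numbers are assumptions, not measurements.
BASELINE_TOKENS_PER_TASK = 50_000
TASKS_PER_DAY = 1_000

low, high = overhead_range(BASELINE_TOKENS_PER_TASK)
print(f"extra tokens per task: {low:,} to {high:,}")
print(f"extra tokens per day:  {low * TASKS_PER_DAY:,} to {high * TASKS_PER_DAY:,}")
```

Swapping in your own baseline shows quickly whether the overhead is noise or a line item worth tracking.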

Human-written context files tell a different story, producing a 4% improvement over no context on average. That figure comes from AGENTbench, the benchmark whose repositories ship developer-written files. It’s a meaningful gain, and it’s the number that explains why context files persist. On the right benchmark, with the right files, they do work.

But there’s a catch worth examining.

The Exploration Paradox

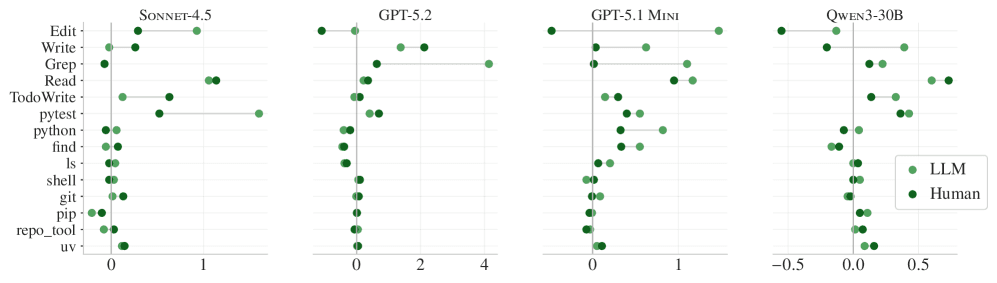

Agents follow context file instructions faithfully. That part is not in question. When a context file mentions using uv as the package manager, uv usage jumps to 1.6 times per instance on average, compared to fewer than 0.01 times without it. When it specifies a testing framework, agents switch to it. The instruction-following works.
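A per-instance usage figure like this can be approximated by counting how many shell commands in each agent trace invoke a given tool. The sketch below assumes a trace is simply a list of command strings, which is a simplification of real agent logs. The traces shown are made up for illustration.

```python
import shlex


def mean_tool_calls(traces, tool):
    """Average number of commands per instance whose first token is `tool`."""
    if not traces:
        return 0.0
    counts = []
    for commands in traces:
        n = 0
        for command in commands:
            try:
                tokens = shlex.split(command)
            except ValueError:
                # Fall back to naive splitting on unbalanced quotes.
                tokens = command.split()
            if tokens and tokens[0] == tool:
                n += 1
        counts.append(n)
    return sum(counts) / len(counts)


# Hypothetical traces, one inner list per task instance.
WITH_FILE = [
    ["uv sync", "uv run pytest tests/", "git diff"],
    ["uv run pytest -k parser"],
]
WITHOUT_FILE = [
    ["pip install -e .", "pytest tests/"],
    ["pytest -k parser"],
]

print(mean_tool_calls(WITH_FILE, "uv"))     # 1.5
print(mean_tool_calls(WITHOUT_FILE, "uv"))  # 0.0
```

Matching only the first token keeps the count conservative: a command that merely mentions `uv` in an argument is not counted as using it.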

What doesn’t follow is that instruction-following translates to success. Agents that receive context files run more tests, search more files, traverse more of the repository, and generate more reasoning output. They explore more thoroughly. But thorough exploration isn’t the same as correct exploration.

The paper’s analysis of traces shows that detailed directory enumerations and codebase overviews, which 100% of LLM-generated context files include, don’t meaningfully reduce the number of steps before agents reach the relevant files. The agent still has to find the right place in the code. A map of the whole city doesn’t tell you which building to walk into.

This is the core tension. Agents are instruction-following systems. Give them more instructions, and they’ll follow more instructions. But more activity isn’t the same as better activity.

Why Human Files Win (On Their Turf)

The difference between human-written and auto-generated context files comes down to redundancy.

LLM-generated files tend to reproduce information already available elsewhere in the repository, like READMEs, documentation folders, and existing CONTRIBUTING.md files. The paper tested this directly. When documentation files (.md files, docs/) were removed from repositories before generating context files, LLM-generated files improved by 2.7% and actually outperformed human-written ones. The content that made auto-generated files counterproductive was redundant content.

Human-written context files, by contrast, tend to contain information that doesn’t exist elsewhere. Maintainers write them to capture things that aren’t obvious from the code, like the specific tooling decisions they’ve made, the quirks of their CI setup, and the non-default conventions they’ve adopted. This is additive information.

The practical implication is that context files are useful to the extent they tell agents something they couldn’t figure out from the repository itself. Codebase overviews and workflow summaries don’t clear that bar. Specific tooling requirements often do.

Current Limitations

The evaluation is limited to Python repositories. Whether these patterns hold for TypeScript, Rust, or multi-language codebases is an open question.

The benchmarks also only measure issue resolution. Context files might have other effects that aren’t captured here, such as security, consistency, and adherence to project-specific conventions, none of which show up in whether an issue gets resolved. A context file that reduces hallucinated library usage might be valuable even if it doesn’t move the success rate number.

The longitudinal picture is missing by necessity. Context files are recent enough that you can’t study how their quality evolves over time, or how agents might improve at using them as training data catches up to their adoption.

What This Means Going Forward

A few threads worth thinking through.

Write for the gap, not the overview. The clearest practical takeaway is that context files should encode what the repository doesn’t already explain. Tool choices that diverge from defaults. Non-obvious test configurations. Constraints that aren’t apparent from reading the code. A CLAUDE.md that restates the README is probably hurting more than helping.

Evaluation methodology matters for context file design. The paper’s finding that auto-generated files hurt on standard repos but human files help on niche repos suggests the effect is highly dependent on the information environment. Teams building repositories with good existing documentation may find context files redundant by default. Teams with sparse documentation or unusual tooling stacks have more to gain.

The cost floor is real. Every context file adds roughly 20% to inference cost, regardless of quality. For high-volume agentic pipelines, that’s not nothing. Whether the performance gains justify the cost depends on the quality of the file and the nature of the tasks.

LLM-generated context files need a different approach. The redundancy problem in auto-generated files is fixable. A generator that explicitly avoids restating existing documentation and focuses instead on extracting non-obvious tooling decisions and conventions would likely perform meaningfully better. This is an obvious engineering improvement that the current generation of generators hasn’t made.
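One way such a generator could avoid restating documentation is to post-filter its draft, dropping paragraphs that mostly overlap with what the repository already says. The sketch below uses word-trigram overlap as the redundancy measure. That measure, the 0.5 threshold, and the helper names are all assumptions for illustration, not something the paper proposes or tests.

```python
import re


def word_ngrams(text, n=3):
    """Set of lowercase word n-grams in `text`."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap(paragraph, existing_ngrams, n=3):
    """Fraction of the paragraph's n-grams already present in existing docs."""
    grams = word_ngrams(paragraph, n)
    if not grams:
        return 0.0
    return len(grams & existing_ngrams) / len(grams)


def drop_redundant(draft, existing_docs, threshold=0.5, n=3):
    """Keep only draft paragraphs whose overlap with existing docs is below threshold."""
    existing = set()
    for doc in existing_docs:
        existing |= word_ngrams(doc, n)
    paragraphs = [p.strip() for p in draft.split("\n\n") if p.strip()]
    return [p for p in paragraphs if overlap(p, existing, n) < threshold]


# Hypothetical README and draft context file.
README = "This project parses log files. Install dependencies and run the tests with pytest."
DRAFT = """This project parses log files. Install dependencies and run the tests with pytest.

Use uv instead of pip. Integration tests need the REDIS_URL environment variable."""

kept = drop_redundant(DRAFT, [README])
for paragraph in kept:
    print(paragraph)
```

In this toy case the restated README paragraph is dropped and only the tooling note survives. A real generator would likely need a semantic similarity measure, since paraphrased content slips past exact n-gram matching.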

The deeper question the paper raises is about what instruction-following actually means for agents that are trying to accomplish tasks rather than just comply with directives. An agent that spends extra steps carefully following context file guidance about testing conventions, and then fails to fix the bug, has prioritized process over outcome. Getting that balance right is as much a training problem as a context file design problem.

Final Words

Context files are not magic, but they’re also not useless. The paper’s findings land in a genuinely useful place. Human-written files with specific, non-redundant information improve performance. Auto-generated files that reproduce existing documentation hurt performance. The mechanism in both cases is the same. Agents follow instructions, and the quality of the outcome depends entirely on the quality of the instructions.

For anyone writing AGENTS.md files regularly, the practical recommendation is to keep them minimal and specific. Describe the tools and conventions that aren’t obvious from the code. Leave out what’s already in the README.

Resources

Full paper: Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?

AGENTbench dataset: github.com/eth-sri/agentbench
