🤖 AI Agents Weekly: Thinking Machines Interaction Models, Is Grep All You Need?, Codex Mobile + Hooks, Cursor Cloud Agents, Ring-2.6-1T, and More


In today’s issue:

Thinking Machines unveils interaction models

Is Grep All You Need? challenges vector RAG

OpenAI ships Codex mobile and hooks

Cursor adds cloud agent dev environments

Ring-2.6-1T open trillion-scale agent model

Recursive Superintelligence emerges with $650M

LangChain Labs targets continual learning

xAI launches Grok Build CLI

Claude Code adds agent view

Prime Intellect agents beat nanoGPT speedrun

World Labs open-sources image-blaster

Isomorphic Labs raises $2.1B Series B

Beyond Individual Intelligence multi-agent survey

LongMemEval-V2 raises the memory bar

And all the top AI dev news, papers, and tools.

Top Stories

Thinking Machines Introduces Interaction Models

Mira Murati’s Thinking Machines Lab released its first research preview: a new class of models trained from scratch for real-time interaction across audio, video, and text, instead of bolting streaming onto a turn-based stack. The frontier shifts from “answer faster” to “stay engaged while you think.”

Time-aligned micro-turns: Input and output are treated as continuous 200ms streams, so the model can listen, look, and speak in parallel rather than waiting for full user turns.

TML-Interaction-Small: A 276B-parameter MoE with 12B active parameters (roughly 4% of the weights used per token), built on encoder-free early fusion, streaming inference sessions, and batch-invariant kernels for stable training.

Background reasoning model: A separate async model handles complex reasoning, freeing the interaction model to stay responsive in the foreground loop.

FD-bench v1.5: Scores 77.8 on a new interactivity benchmark, versus 39.0 to 54.3 for competitors, with real-time speech, visual proactivity, and interrupt handling that turn-based systems cannot match.

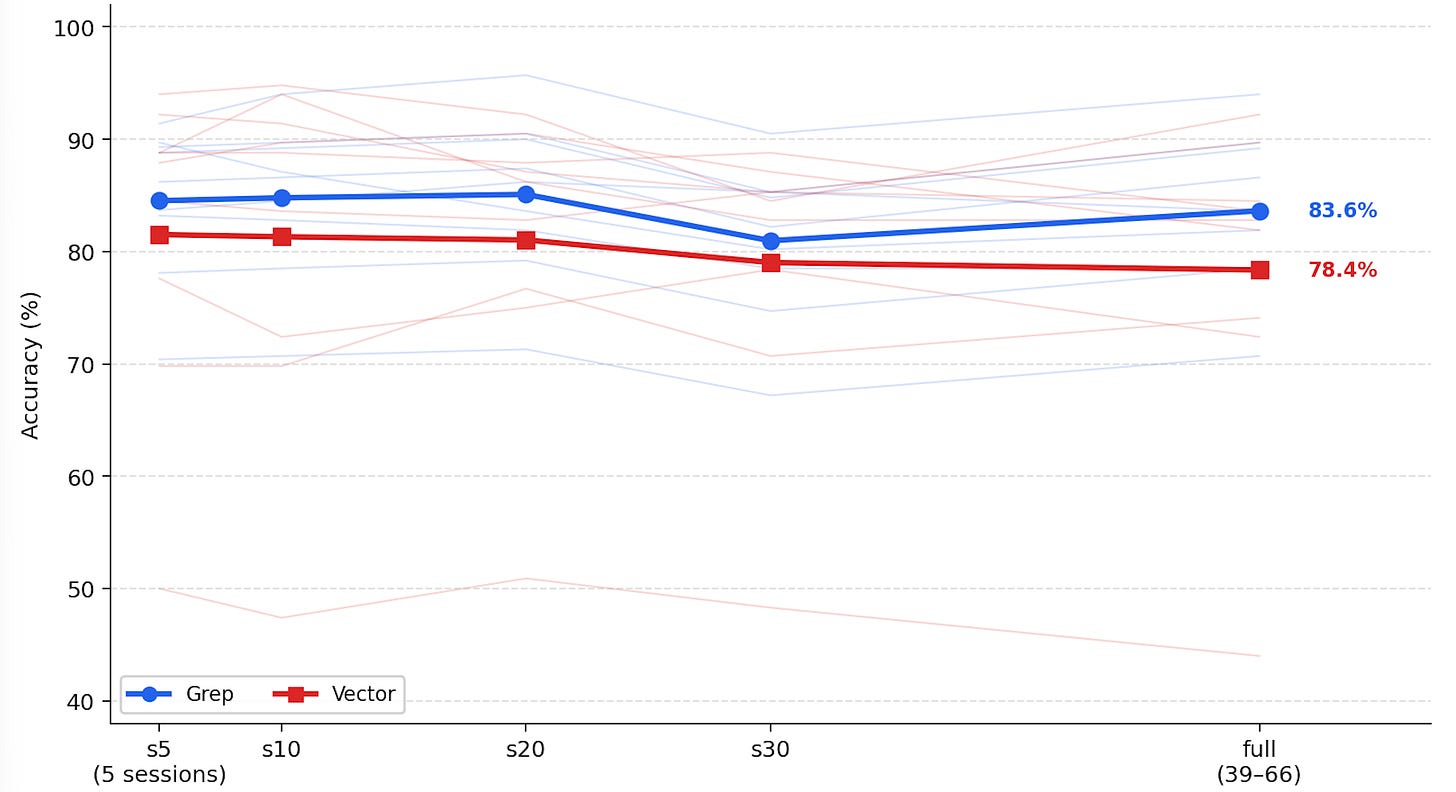
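
To make the micro-turn idea concrete, here is a toy simulation of a foreground loop that emits something every 200ms while a slower background job works on a question. It is a sketch for intuition only, not Thinking Machines' architecture: `BackgroundJob`, `run_session`, and the `slow_after` latency are all hypothetical names and assumptions, and real models stream audio and video rather than text chunks.

```python
from dataclasses import dataclass

MICRO_TURN_MS = 200  # stream granularity quoted in the story


@dataclass
class BackgroundJob:
    """Hypothetical stand-in for the separate async reasoning model."""
    question: str
    remaining_turns: int  # assumed latency, measured in micro-turns

    def step(self) -> bool:
        """Advance one micro-turn; return True once the answer is ready."""
        self.remaining_turns -= 1
        return self.remaining_turns <= 0


def run_session(input_chunks, slow_after=2):
    """Consume one input chunk per micro-turn and always emit an output.

    The foreground loop never waits for the background job: it keeps
    listening (and acknowledging) until the slow answer arrives.
    A question that arrives while a job is already running is ignored
    in this toy version.
    """
    log = []
    job = None
    for turn, chunk in enumerate(input_chunks):
        t_ms = turn * MICRO_TURN_MS
        if chunk.endswith("?") and job is None:
            # Hand the hard part to the background model, stay responsive.
            job = BackgroundJob(chunk, slow_after)
            out = "ack: thinking"
        elif job is not None and job.step():
            out = f"answer to {job.question!r}"
            job = None
        else:
            out = "listening"
        log.append((t_ms, chunk, out))
    return log


if __name__ == "__main__":
    for row in run_session(["hi", "what is 2+2?", "um", "also", "ok"]):
        print(row)
```

The point of the sketch is the shape of the loop: every micro-turn produces an output, so the user can keep talking (or interrupt) while the expensive reasoning runs elsewhere.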

Is Grep All You Need? Harness Beats Vector RAG for Coding Agents

A controlled study makes the empirical case that grep-style text search, wrapped in the right agent harness, matches or beats embedding-based retrieval on real coding-agent tasks. The deeper claim is that harness design (which meta-tools, in what order) explains more variance in agent performance than the retrieval algorithm itself.

Head-to-head retrieval: Across coding benchmarks, grep + light ranking ties or exceeds vector-DB retrieval, with much lower latency, cost, and infra overhead than embedding-based stacks.

Harness > algorithm: The order and shape of search/read/edit meta-tools dominate the result, suggesting “RAG quality” is mostly a harness problem dressed up as a retrieval problem.

Implications for tooling: Reinforces the broader move from vector DBs to file-search primitives inside Codex, Claude Code, and Cursor, where ranking quality matters more than the underlying retrieval algorithm.

Why it matters: The strongest empirical pushback yet against vector-DB-for-coding-agents, and a useful prior for anyone deciding whether to invest in retrieval infra or harness engineering.
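
For readers who want a feel for what "grep + light ranking" inside a harness can look like, here is a minimal sketch. It is not the study's code: the tool names (`grep`, `rank_files`, `read_window`, `search_then_read`), the match-count ranking, and the tiny in-memory `REPO` are all assumptions chosen for illustration.

```python
import re
from collections import Counter

# Hypothetical in-memory "repo" so the sketch runs without touching disk.
REPO = {
    "auth/session.py": (
        "import time\n\ndef refresh_token(user):\n    return issue(user)\n\n"
        "def issue(user):\n    return f'tok-{user}'\n"
    ),
    "auth/views.py": (
        "from .session import refresh_token\n\n"
        "def login(request):\n    return refresh_token(request.user)\n"
    ),
    "README.md": "Tokens are refreshed on login.\n",
}


def grep(files, pattern):
    """Search tool: return (path, line_number, line) for each regex match."""
    rx = re.compile(pattern)
    hits = []
    for path, text in files.items():
        for lineno, line in enumerate(text.splitlines(), start=1):
            if rx.search(line):
                hits.append((path, lineno, line.strip()))
    return hits


def rank_files(hits, top_k=3):
    """Light ranking: most matching lines first, ties broken by path."""
    counts = Counter(path for path, _, _ in hits)
    ordered = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [path for path, _ in ordered][:top_k]


def read_window(files, path, lineno, radius=2):
    """Read tool: return the lines around a hit instead of the whole file."""
    lines = files[path].splitlines()
    start = max(0, lineno - 1 - radius)
    end = min(len(lines), lineno + radius)
    return "\n".join(lines[start:end])


def search_then_read(files, pattern, top_k=2, radius=1):
    """Harness step: search, rank, then read a window at each file's first hit."""
    hits = grep(files, pattern)
    context = []
    for path in rank_files(hits, top_k):
        first_line = next(n for p, n, _ in hits if p == path)
        context.append((path, first_line, read_window(files, path, first_line, radius)))
    return context


if __name__ == "__main__":
    for path, lineno, snippet in search_then_read(REPO, r"refresh_token"):
        print(f"{path}:{lineno}\n{snippet}\n")
```

Note what the harness decides here, independent of the retrieval algorithm: how many files to surface, how much context to read around each hit, and in what order the agent sees them. Those are the knobs the study argues matter most.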