🤖 AI Agents Weekly: Meta FAIR Autodata, ZAYA1-8B, SubQ 12M Context, Natural Language Autoencoders, Claude Managed Agents Dreaming, and More

In today’s issue:

Meta FAIR introduces Autodata

Zyphra releases ZAYA1-8B

SubQ ships a 12M-token frontier model

Anthropic introduces Natural Language Autoencoders

Claude Managed Agents adds dreaming and multi-agent support

Printing Press: an agent CLI factory

Flue agent harness framework launches

Anthropic adds keyless auth

AlphaEvolve marks one year of impact

Goodfire opens a neural geometry series

Firefox hardened with Claude Mythos

And all the top AI dev news, papers, and tools.

Top Stories

Autodata: An Agentic Data Scientist From Meta FAIR

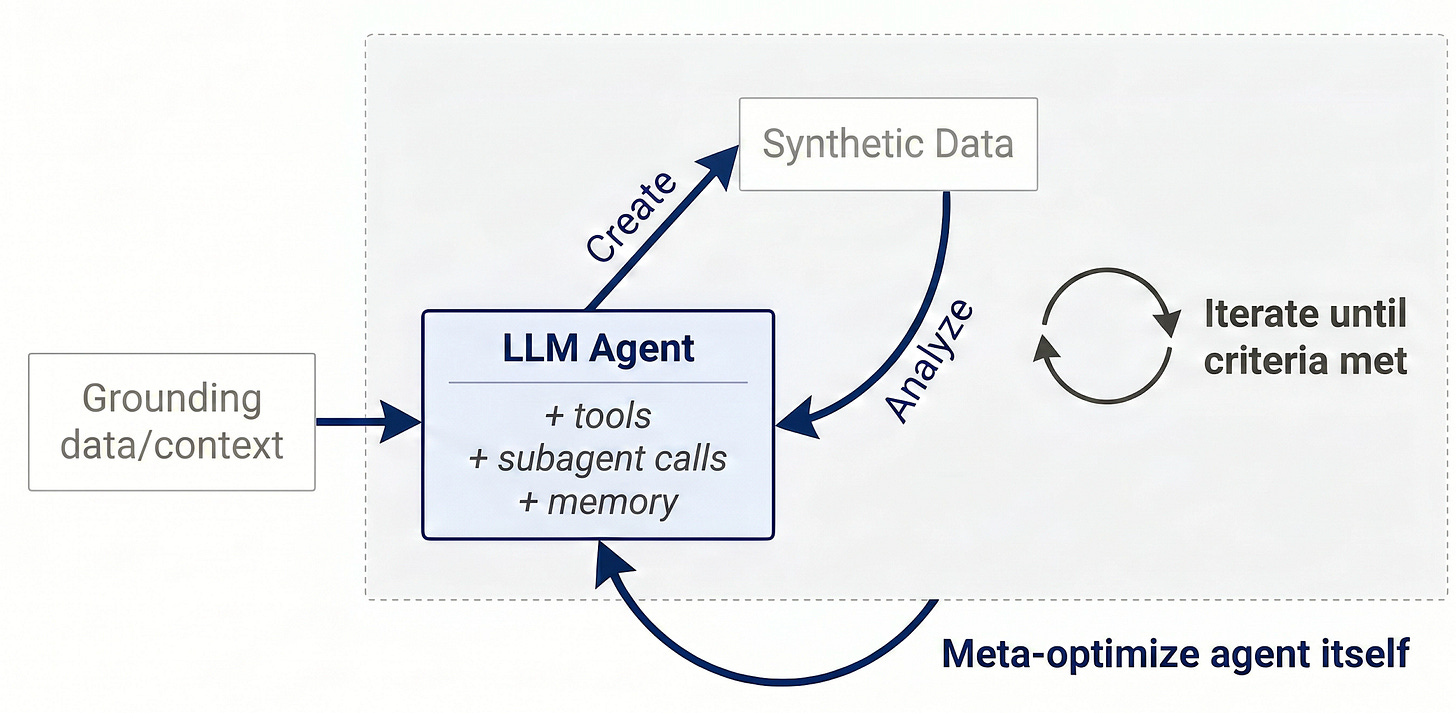

Meta FAIR (Jason Weston et al.) introduced Autodata, an agentic data scientist that builds high-quality training and evaluation data autonomously. The framing is that inference compute can be converted into model quality if the data pipeline itself is an agent.

Agentic Self-Instruct loop: A planner-executor agent generates, critiques, and refines training and eval examples in a closed loop, replacing static seed sets with a process that keeps producing harder data as the model improves.

34-point weak-to-strong gap: On a CS research QA task, Autodata-generated data opens a 34-point accuracy gap between weak and strong models, a much larger separation than off-the-shelf instruction sets achieve.

Inference compute as a quality lever: The work reframes synthetic data as the place where inference budget pays off, an angle that lines up with Microsoft’s FaraGen and the broader synthetic-environments thread.

Why it matters: Pairs naturally with self-improving agent runtimes (Claude Managed Agents Outcomes loop, ACE, AHE), giving teams a credible recipe for the data half of the self-improvement story.
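
To make the loop concrete, here is a minimal Python sketch of a generate, critique, refine cycle that raises the difficulty target after each accepted example. It is not Meta FAIR's implementation: `closed_loop`, the `Example` fields, the `toy_*` stand-ins for LLM-backed steps, and the `accuracy_gap` helper are all hypothetical names chosen for illustration, and the gap is read here as percentage points.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Example:
    question: str
    answer: str
    difficulty: int  # hypothetical score; higher means harder


# Hypothetical signatures standing in for LLM-backed agent steps.
Generate = Callable[[int], Example]                 # target difficulty -> draft
Critique = Callable[[Example, int], Optional[str]]  # feedback, or None if accepted
Refine = Callable[[Example, str], Example]          # draft + feedback -> revision


def closed_loop(generate: Generate, critique: Critique, refine: Refine,
                rounds: int, max_refinements: int = 3) -> List[Example]:
    """Generate, critique, and refine examples, asking for harder data over time."""
    accepted: List[Example] = []
    target = 1
    for _ in range(rounds):
        draft = generate(target)
        feedback = critique(draft, target)
        attempts = 0
        while feedback is not None and attempts < max_refinements:
            draft = refine(draft, feedback)
            feedback = critique(draft, target)
            attempts += 1
        if feedback is None:
            accepted.append(draft)
            target += 1  # raise the bar for the next round
    return accepted


def accuracy_gap(weak_correct: List[bool], strong_correct: List[bool]) -> float:
    """Strong-minus-weak accuracy on the same items, in percentage points."""
    n = len(weak_correct)
    if n == 0 or n != len(strong_correct):
        raise ValueError("need two non-empty result lists of equal length")
    return 100.0 * (sum(strong_correct) - sum(weak_correct)) / n


# Toy stand-ins so the sketch runs without any model calls.
def toy_generate(target: int) -> Example:
    # Drafts one level too easy on purpose so the critic has something to flag.
    return Example(f"q{target}", f"a{target}", difficulty=target - 1)


def toy_critique(ex: Example, target: int) -> Optional[str]:
    return None if ex.difficulty >= target else "too easy"


def toy_refine(ex: Example, feedback: str) -> Example:
    return Example(ex.question, ex.answer, ex.difficulty + 1)


if __name__ == "__main__":
    data = closed_loop(toy_generate, toy_critique, toy_refine, rounds=3)
    print([ex.difficulty for ex in data])  # [1, 2, 3]
    print(accuracy_gap([True, False, False, False], [True, True, True, False]))  # 50.0
```

In a real pipeline the three callables would wrap model calls, and the acceptance rule would likely depend on how weak and strong solvers actually perform on each draft rather than on a fixed difficulty score.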