🤖 AI Agents Weekly: Hyperagents, Multi-Agent Harness Design, Chroma Context-1, Composer 2, ARC-AGI-3, and More

In today’s issue:

Hyperagents: self-improving agents that improve how they improve

Anthropic publishes multi-agent harness design

Chroma ships Context-1 open-source search agent

Cursor releases Composer 2 technical report

ARC-AGI-3 launches with sub-1% AI scores

Codex ships plugins for Slack, Figma, Notion

Gemini 3.1 Flash Live enables realtime voice agents

Claude Code auto mode skips permissions safely

AI Scientist published in Nature

Anthropic Economic Index tracks learning curves

Junyang Lin frames reasoning vs. agentic thinking

Cohere ships open-source Transcribe model

Agent-to-agent pair programming with Claude and Codex

Claude Code ships cloud-scheduled tasks

Cursor builds Instant Grep for millisecond search

OpenSpace: self-evolving agent skills via MCP

And all the top AI dev news, papers, and tools.

Top Stories

Hyperagents: Self-Improving Agents That Improve How They Improve

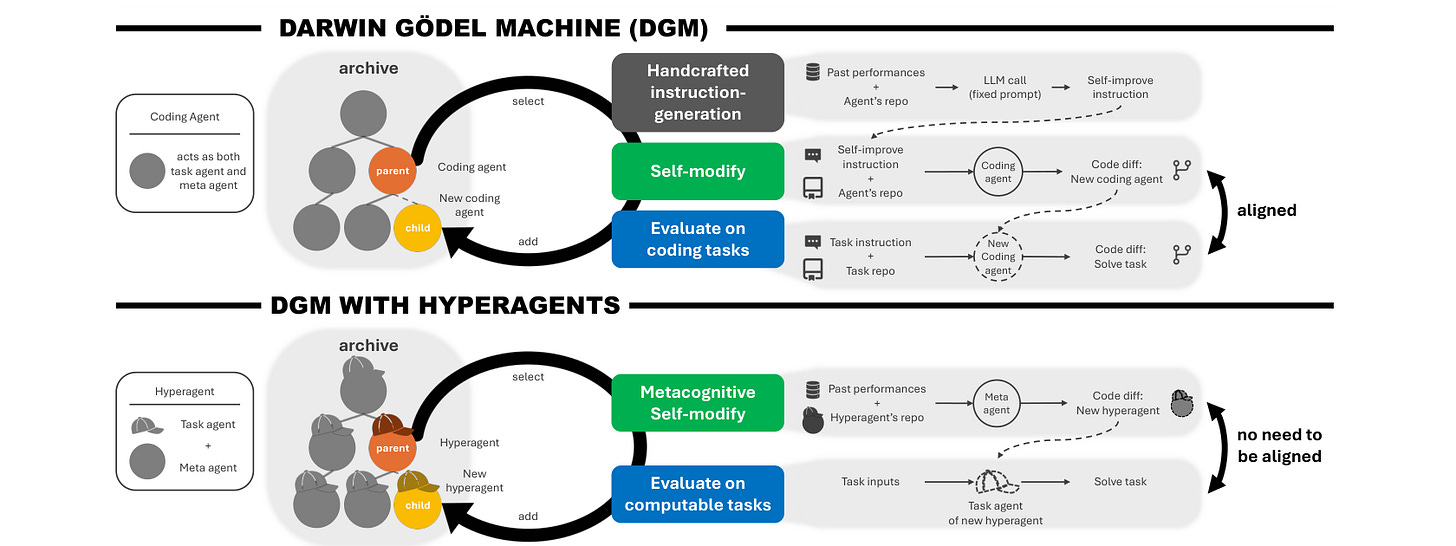

A team from Microsoft Research, Oxford, and the University of British Columbia introduced Hyperagents, self-referential agents that integrate a task agent and a meta agent into a single editable program. Built on the Darwin Gödel Machine (DGM) framework, DGM-Hyperagents enable metacognitive self-modification, in which the system improves not only its task performance but also the mechanism that generates future improvements.

Recursive self-improvement: Unlike standard self-improving systems that optimize task-level behavior, Hyperagents make the improvement procedure itself editable. The meta agent can rewrite its own modification strategy, enabling compounding gains across successive runs.

Domain-general design: The original DGM relied on task performance and self-modification both being coding problems, so gains on one carried over to the other. Hyperagents drop this domain-specific alignment assumption. Because they operate over editable code rather than domain-locked prompts, they extend self-improvement, in principle, to any computable task.

Transferable meta-level gains: Improvements discovered in one domain, such as memory-management and performance-tracking routines, persist and transfer when the agent is deployed on entirely different problem types, suggesting durable architectural gains rather than task-specific shortcuts.

Outperforms prior self-improving systems: DGM-Hyperagents consistently outperform both non-self-improving baselines and prior self-improving agents across diverse evaluation domains, with performance continuing to increase over longer run horizons.
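The core loop can be illustrated with a toy sketch. It is not the paper's code and rests on loud assumptions: a "program" is a dict holding task parameters plus one meta parameter (a mutation step size) instead of editable source code, random Gaussian edits stand in for LLM-written modifications (self-adaptive step sizes borrowed from evolution strategies), and the archive-based parent selection is only loosely modeled on the Darwin Gödel Machine. The names `evaluate`, `self_modify`, `select_parent`, and `run` are hypothetical. What it does show is the self-referential part: each child inherits, and may rewrite, the rule used to produce its own children, and every variant is kept in an open-ended archive.

```python
import copy
import math
import random

TARGET = [3.0, -1.0, 2.0]  # hypothetical hidden optimum for a toy task


def evaluate(prog):
    """Toy task score: higher is better (negative squared error)."""
    return -sum((w - t) ** 2 for w, t in zip(prog["task"], TARGET))


def self_modify(parent, rng):
    """Produce a child using the parent's own meta parameters.

    The edit first rewrites the meta parameter (the step size), then uses
    the new step size to edit the task parameters, so the improvement
    procedure is itself part of what gets modified.
    """
    child = copy.deepcopy(parent)
    child["meta"]["step"] *= math.exp(rng.gauss(0.0, 0.3))
    child["task"] = [w + rng.gauss(0.0, child["meta"]["step"]) for w in parent["task"]]
    return child


def select_parent(archive, rng):
    """Favor high scorers with few children so far (an assumption loosely
    modeled on DGM-style parent selection, not its exact formula)."""
    best = max(e["score"] for e in archive)
    # score - best <= 0, so each weight is in (0, 1] before the child penalty.
    weights = [math.exp(e["score"] - best) / (1 + e["children"]) for e in archive]
    return rng.choices(archive, weights=weights, k=1)[0]


def run(iterations=300, seed=0):
    rng = random.Random(seed)
    root = {"task": [0.0, 0.0, 0.0], "meta": {"step": 1.0}}
    archive = [{"prog": root, "score": evaluate(root), "children": 0}]
    for _ in range(iterations):
        entry = select_parent(archive, rng)
        entry["children"] += 1
        child = self_modify(entry["prog"], rng)
        # Open-ended search: every child is archived, not only improvements.
        archive.append({"prog": child, "score": evaluate(child), "children": 0})
    return archive


archive = run()
best = max(archive, key=lambda e: e["score"])
```

Keeping weaker variants in the archive, rather than only the current best, is what lets a lineage with a better improvement rule (here, a better step size) overtake one that merely scored well early.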

Multi-Agent Harness Design for Long-Running Apps

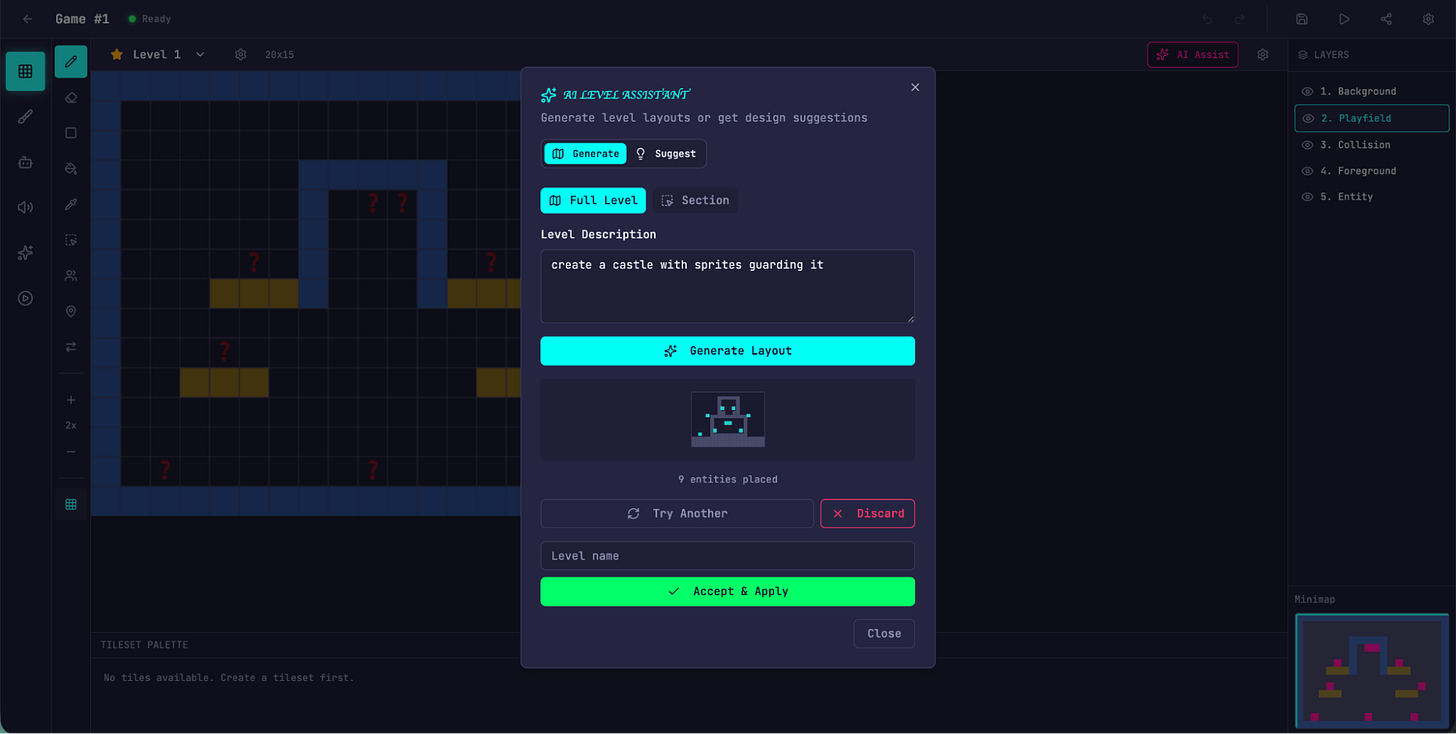

Anthropic published a detailed engineering blog post on how it uses a multi-agent harness to push Claude further in frontend design and long-running autonomous software engineering. The architecture uses a GAN-inspired design that separates generation from evaluation, with specialized planner, generator, and evaluator agents each operating in a fresh context window.

Three-agent architecture: A Planner expands brief prompts into detailed product specifications, a Generator implements features incrementally using React, FastAPI, and SQLite, and an Evaluator tests functionality with Playwright against agreed-upon contracts.

Separation of concerns: Separating the agent doing the work from the agent judging it proved to be the strongest lever for improving output quality, and it was more tractable than trying to make a single agent critique itself within one context.

Fresh context windows: Rather than relying on context compaction alone, the harness gives each agent a clean context window per iteration, which avoids “context anxiety”, the tendency of models to wrap up long tasks prematurely.

Quality at cost: A complex retro game maker built with the full harness was substantially better than solo-agent attempts, with working features, coherent design, and integrated AI capabilities, though it cost roughly 20x more to produce.
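The planner, generator, and evaluator loop can be sketched as a small control flow. This is a toy under stated assumptions, not Anthropic's harness: every agent is a hypothetical stub function standing in for a model call, the "contract" is a string check rather than Playwright tests, and a fresh context window is modeled by building a new `Context` for every call from handoff artifacts only (spec item, artifact, evaluator feedback) instead of appending to one growing transcript. The names `Context`, `planner`, `generator`, `evaluator`, and `run_harness` are illustrative.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Context:
    """A fresh context window: holds only the handoff artifacts for one call."""
    role: str
    messages: List[str] = field(default_factory=list)


def planner(prompt: str) -> List[str]:
    """Hypothetical stand-in for a planner model call: brief prompt -> spec."""
    ctx = Context("planner", [prompt])
    return [f"{ctx.messages[0]}: feature {i}" for i in range(1, 4)]


def generator(feature: str, feedback: Optional[str]) -> str:
    """Hypothetical stand-in for a generator model call.

    Toy behavior: the first draft omits tests and only adds them once the
    evaluator's feedback asks for them.
    """
    ctx = Context("generator", [feature] + ([feedback] if feedback else []))
    fixed = "add tests" in ctx.messages[-1]
    return f"impl({feature})" + (" +tests" if fixed else "")


def evaluator(feature: str, artifact: str) -> Tuple[bool, str]:
    """Hypothetical stand-in for an evaluator (e.g. one driving Playwright)
    that grades an artifact against the agreed contract for one feature."""
    ctx = Context("evaluator", [feature, artifact])
    passed = ctx.messages[1].endswith("+tests")
    return passed, "" if passed else "contract failed: add tests"


def run_harness(prompt: str, max_attempts: int = 3) -> Dict[str, str]:
    spec = planner(prompt)
    accepted: Dict[str, str] = {}
    for feature in spec:
        feedback: Optional[str] = None
        for _ in range(max_attempts):
            # Each agent call builds a new Context from artifacts, not history.
            artifact = generator(feature, feedback)
            passed, feedback = evaluator(feature, artifact)
            if passed:
                accepted[feature] = artifact
                break
    return accepted


result = run_harness("retro game maker")
```

The separation the post highlights shows up in the inner loop: the generator never grades its own output, and only the evaluator's verdict ends an iteration. Each retry is also where the extra cost comes from, since every attempt spends a full generator call and a full evaluator call.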