🤖 AI Agents Weekly: Cursor 3, Gemma 4, Qwen3.6-Plus, GLM-5V-Turbo, Claude Code Source Leak, Emotion Concepts in LLMs, and More

In today’s issue:

Cursor 3 ships agent-first IDE redesign

Google drops Gemma 4 open models (Apache 2.0)

Qwen3.6-Plus targets real-world agents

GLM-5V-Turbo turns designs into code

Claude Code source code leaks via npm

Anthropic maps emotion concepts in Claude

Codex plugin bridges Claude Code and Codex

AI Agent Traps maps six attack surfaces

CORAL agents self-organize, beat fixed topologies

And all the top AI dev news, papers, and tools.

Top Stories

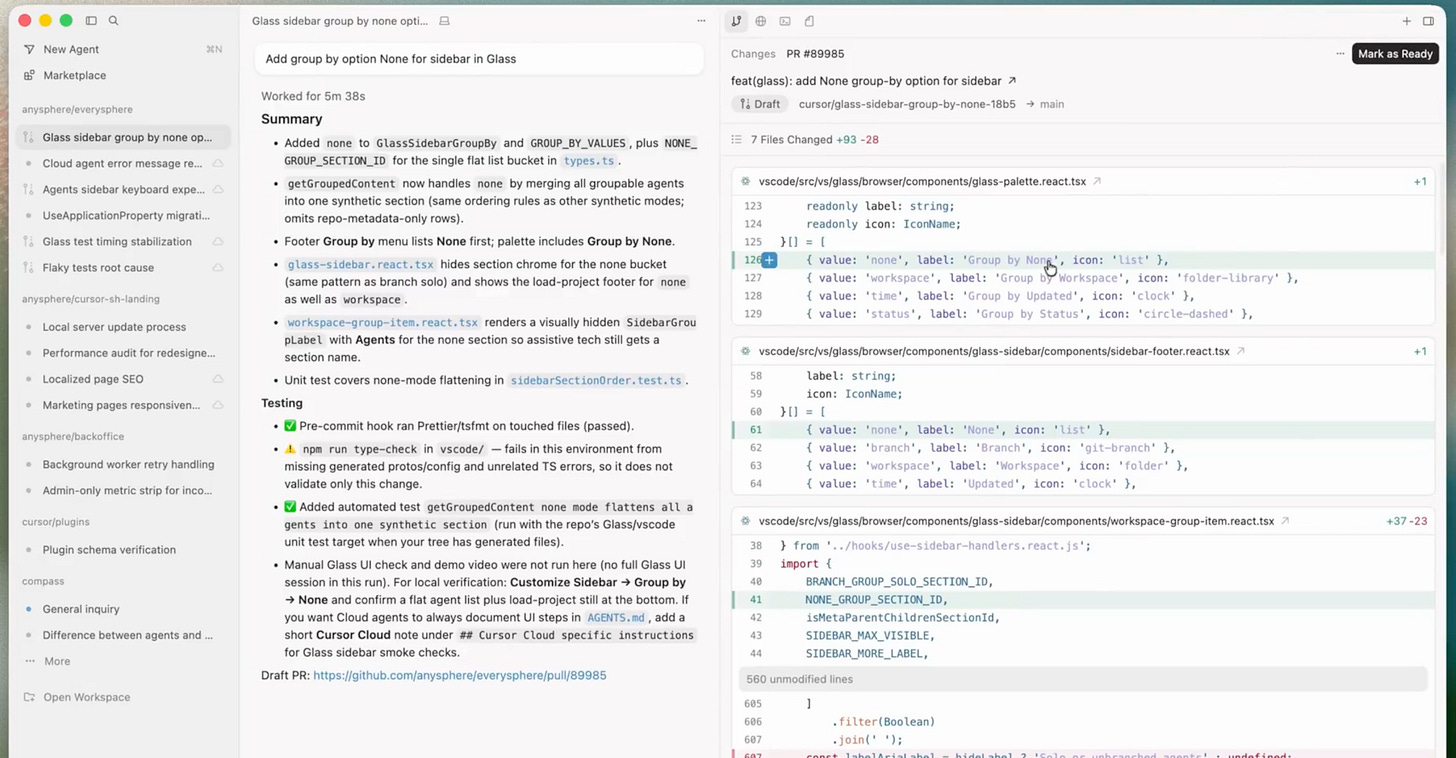

Cursor 3: Agent-First IDE

Cursor released Cursor 3, a ground-up redesign that replaces the VS Code-based editor with a unified workspace built for agent-driven development. The new interface treats agents as first-class citizens, with a single sidebar managing local and cloud agents launched from desktop, mobile, web, Slack, GitHub, or Linear.

Multi-agent parallelism: Developers can run unlimited agents simultaneously across local worktrees, remote SSH, and cloud environments, each operating independently with full task isolation.

Seamless environment handoff: Agent sessions can migrate bidirectionally between cloud and local, letting developers move long-running cloud tasks to their desktop for editing or push local sessions to cloud infrastructure for overnight execution.

Unified diff and commit workflow: A simplified interface integrates editing, reviewing, staging, committing, and PR management into a single flow, with full LSP support for code navigation and an integrated browser for testing local web apps.

Marketplace ecosystem: Hundreds of plugins extend agent capabilities through MCP servers, skills, and subagents, with support for team-specific private marketplaces.
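As a rough illustration of the worktree-based isolation mentioned above, here is a minimal Python sketch that builds a `git worktree add` command so each agent task gets its own checkout and branch. The source does not describe how Cursor does this internally. The function name, the `agent/<task>` branch naming, and the sibling-directory layout are all assumptions made for the example.

```python
from pathlib import Path


def worktree_add_command(repo_root: Path, task_id: str, base: str = "main") -> list[str]:
    """Build (but do not run) a git command that creates an isolated checkout.

    Hypothetical conventions, not taken from Cursor:
      - branch name:   agent/<task_id>
      - checkout path: a sibling directory named <repo>-<task_id>
    """
    branch = f"agent/{task_id}"
    checkout = repo_root.parent / f"{repo_root.name}-{task_id}"
    # `git worktree add -b <branch> <path> <commit-ish>` creates a new branch
    # from <commit-ish> and checks it out in its own working directory.
    return [
        "git", "-C", str(repo_root),
        "worktree", "add", "-b", branch, str(checkout), base,
    ]


if __name__ == "__main__":
    print(" ".join(worktree_add_command(Path("/src/app"), "fix-login")))
```

Because each task works in a separate directory on a separate branch, parallel agents do not overwrite each other's files, and the command list can be passed to `subprocess.run` when you actually want to create the worktree.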

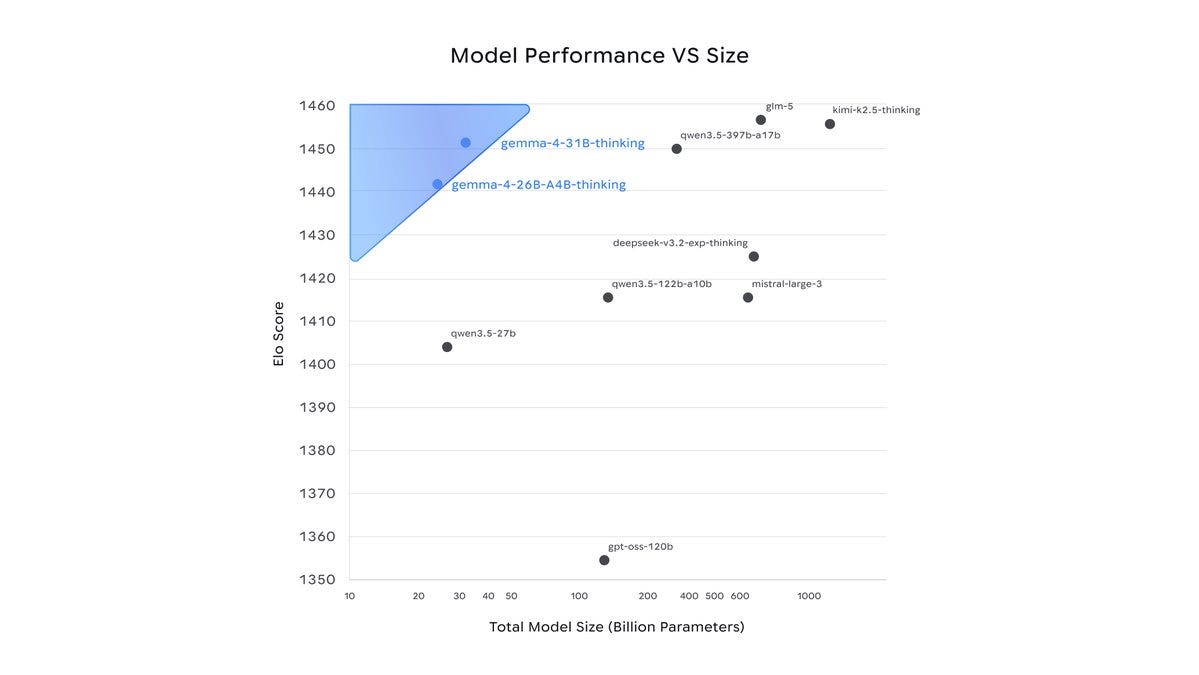

Gemma 4: Most Capable Open Models

Google released Gemma 4, a family of open-weight models (Apache 2.0) designed to run on phones, laptops, and desktops while delivering frontier-level intelligence. The series includes a 26B Mixture-of-Experts model and a 31B dense model, both purpose-built for advanced reasoning and agentic workflows.

On-device frontier intelligence: Gemma 4 models are optimized to run locally on consumer hardware while matching or exceeding the capabilities of much larger cloud-deployed models, reducing latency and enabling private, offline agent deployments.

Agentic workflow support: The models are designed for multi-step tool use, function calling, and structured output generation, making them directly applicable to agent pipelines that need reliable local execution.

Apache 2.0 license: Open-weight release under a permissive license that allows commercial deployment, fine-tuning, and integration into existing agent frameworks, subject only to Apache 2.0's standard attribution and notice terms.

Multi-format availability: Models are available on Kaggle, Hugging Face, and through Google AI Studio, with native support for popular inference frameworks.
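To make the function-calling point concrete, here is a minimal Python sketch of the host-side half of a tool-use loop: the model emits a structured JSON tool call, and the application validates it and dispatches to a local function. It is model-agnostic and does not use any Gemma API. The message shape (`{"name": ..., "arguments": {...}}`), the `get_weather` tool, and the `dispatch_tool_call` helper are all hypothetical.

```python
import json
from typing import Any, Callable


def get_weather(city: str) -> dict[str, Any]:
    """Stand-in tool; a real one would query a weather service."""
    return {"city": city, "forecast": "sunny"}


# Hypothetical registry mapping tool names to local Python functions.
TOOLS: dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def dispatch_tool_call(raw: str) -> dict[str, Any]:
    """Parse a model's JSON tool call and run the matching local function.

    Assumed message shape: {"name": <tool name>, "arguments": {...}}.
    Errors are returned as data so they can be fed back to the model.
    """
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        return {"ok": False, "error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"ok": False, "error": "tool call must be a JSON object"}

    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    if not isinstance(args, dict):
        return {"ok": False, "error": "arguments must be a JSON object"}

    try:
        result = TOOLS[name](**args)
    except TypeError as exc:
        # Raised when the argument names do not match the tool's signature.
        return {"ok": False, "error": f"bad arguments: {exc}"}
    return {"ok": True, "result": result}


if __name__ == "__main__":
    print(dispatch_tool_call('{"name": "get_weather", "arguments": {"city": "Paris"}}'))
```

Returning errors as structured results rather than raising lets the agent loop pass the failure back to the model for a retry, which is where reliable structured output matters most.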